Content Replication in I2-DSI using Rsync+


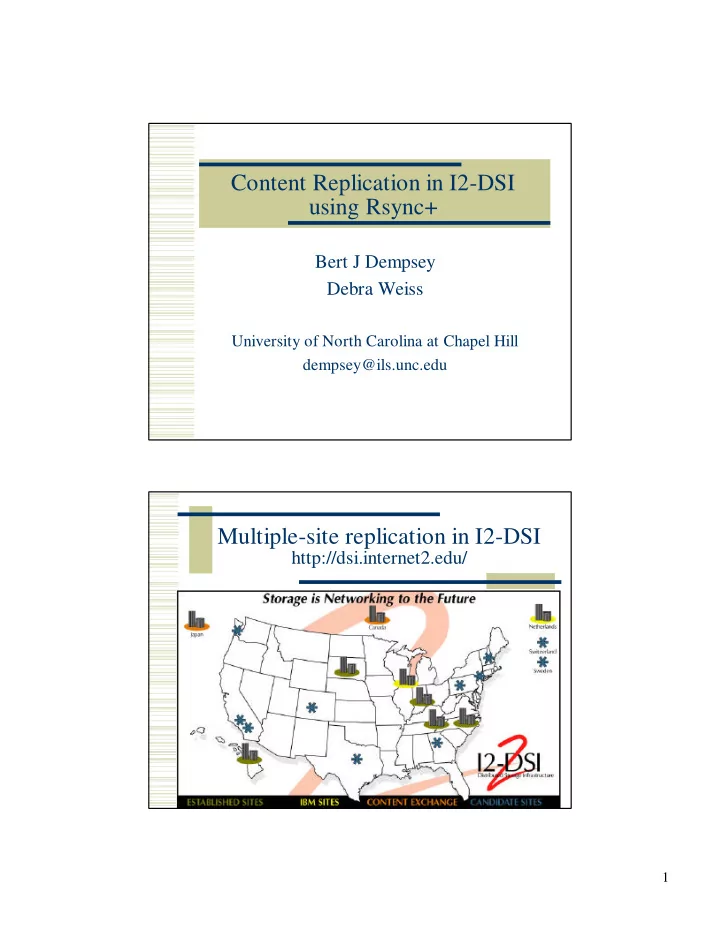

Bert J Dempsey, Debra Weiss
University of North Carolina at Chapel Hill
dempsey@ils.unc.edu

Multiple-site replication in I2-DSI
http://dsi.internet2.edu/


Replicating Channels

[Figure: a channel provider, the master node, replication sites S1, S2, and S3, and clients]

Content import from channel provider to master I2-DSI node

Content replication to all replication sites that carry the channel

Rsync+ for Content Replication

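[Figure: a channel provider, master node M, replication sites S1, S2, and S3, and clients; the updates file is moved by (m)ftp]

At M (master side): rsync+ -F srcLatest/ src/ creates the updates file

At each of S1, S2, S3 (slave side): rsync+ -f src/ consumes the updates file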

Rsync is a popular filesystem synchronization tool

Rsync+ is our modification of rsync that captures update information locally for store-and-forward communication
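The store-and-forward idea can be sketched in a few lines of Python. This is an illustration only, not rsync+ itself: the function names `capture_updates` and `apply_updates`, the dictionary used as the update record, and the whole-file granularity are all assumptions made for the example. Rsync works with deltas inside files, and the actual rsync+ updates file format is not described in these slides.

```python
import hashlib
from pathlib import Path


def _digest(path: Path) -> str:
    """Content digest used to decide whether a file changed."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def _snapshot(root: Path) -> dict:
    """Map each file's relative path to its content digest."""
    return {
        p.relative_to(root).as_posix(): _digest(p)
        for p in root.rglob("*")
        if p.is_file()
    }


def capture_updates(src_latest: Path, src: Path) -> dict:
    """Master side: compare the new tree with the old one and record the differences.

    No connection to a replication site is needed at this point; the returned
    record can be shipped later by any transport (hypothetical format).
    """
    new, old = _snapshot(src_latest), _snapshot(src)
    changed = {
        rel: (src_latest / rel).read_bytes()
        for rel, digest in new.items()
        if old.get(rel) != digest
    }
    deleted = sorted(set(old) - set(new))
    return {"changed": changed, "deleted": deleted}


def apply_updates(updates: dict, src: Path) -> None:
    """Slave side: replay a captured update record against the local copy."""
    for rel, data in updates["changed"].items():
        target = src / rel
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_bytes(data)
    for rel in updates["deleted"]:
        (src / rel).unlink(missing_ok=True)
```

The point of the split is that the master never talks to a replication site while computing differences, so the same update record can be forwarded to any number of sites.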


Server Experiment

Instrumented Mirror

Active Linux repository (8 GB, 25,000 files)

Twice-daily synchronization

On dsi.ncni.net:

rsync+ -F: Perform master-side rsync+ processing between two local directories to create the updates file

rsync+ -f: Use the updates file to perform slave-side rsync+ processing

Content Change Patterns

Data from one month of the Linux mirror

One update per 12-hour period

No files changed in 13 of 60 periods (21%)

Average size of updated data (all periods)

0.144% of aggregate archive

0.104% under rsync+

Maximum size of updated data

2.42% of mirror
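For a sense of scale, the percentages can be converted into absolute sizes for the 8 GB mirror described in the experiment. The snippet below is a small worked calculation; the helper name `update_mb` and the convention 1 GB = 1000 MB are assumptions made for the example.

```python
ARCHIVE_GB = 8.0                    # mirror size from the experiment description
AVG_FRACTION = 0.144 / 100          # average update, as a fraction of the archive
AVG_FRACTION_RSYNC_PLUS = 0.104 / 100  # the "under rsync+" figure
MAX_FRACTION = 2.42 / 100           # largest update observed


def update_mb(fraction: float, archive_gb: float = ARCHIVE_GB) -> float:
    """Update size in MB, assuming 1 GB = 1000 MB."""
    return fraction * archive_gb * 1000


for label, fraction in [
    ("average", AVG_FRACTION),
    ("average under rsync+", AVG_FRACTION_RSYNC_PLUS),
    ("maximum", MAX_FRACTION),
]:
    print(f"{label}: {update_mb(fraction):.1f} MB")
```

Under these assumptions the average update is roughly 11.5 MB and the largest is roughly 194 MB.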


Rsync+ processing cost

[Figure: run time of rsync+ -F and rsync+ -f as a percentage of rsync run time, per mirror update (one per 12 hours)]

Rsync+ Local Throughput

[Figure: runtime (sec), unnormalized throughput, and normalized throughput (Mbits/sec) per mirror update (12 hours/update)]


Network Throughput: ttcp experiment parameters

| Parameter | Values |
|---|---|
| File size | 5.45 MB |
| Network path (100 Mbit/s min) | dsi.ncni.net to ils.unc.edu |
| Concurrent ttcp connections | 1, 2, 4, 8, 16, 24, 32 |
| Receiver socket buffer size | 240 KB |
| Buffer policy | 240 KB / 240 KB shared |

Network Throughput: concurrent ttcp transmits

| ttcp concurrent transmits | Tput (Mbits/s) |
|---|---|
| 1 | 27.03944 |
| 2 | 8.23088 |
| 4 | 9.62592 |
| 8 | 6.29856 |
| 16 | 4.50688 |
| 24 | 2.8464 |
| 32 | 2.24 |
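If the plotted values are per-connection throughput (an assumption, though it is consistent with the figure of roughly 6.2 Mbits/sec to each of 8 servers used in the scalability analysis), the aggregate throughput across all connections is the per-connection value times the number of concurrent transmits. The sketch below does that multiplication; the names `PER_CONNECTION_TPUT` and `aggregate_tput` are introduced only for this example.

```python
# Throughput in Mbits/s, keyed by number of concurrent ttcp transmits.
# ASSUMPTION: each value is the throughput seen by a single connection.
PER_CONNECTION_TPUT = {
    1: 27.03944,
    2: 8.23088,
    4: 9.62592,
    8: 6.29856,
    16: 4.50688,
    24: 2.8464,
    32: 2.24,
}


def aggregate_tput(connections: int) -> float:
    """Aggregate throughput (Mbits/s) = connections x per-connection throughput."""
    return connections * PER_CONNECTION_TPUT[connections]


for n in sorted(PER_CONNECTION_TPUT):
    print(f"{n:2d} transmits: {PER_CONNECTION_TPUT[n]:6.2f} each, "
          f"{aggregate_tput(n):6.2f} aggregate")
```

Read this way, per-connection throughput falls as connections are added while the aggregate rises and then levels off at around 70 Mbits/s for 16 or more transmits.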


Network experiments: setting socket buffer sizes

[Figure: "dsi2ils sept14 (1 ttcp, avg. over 6 runs)"; throughput (Kbytes/s) versus buffer size (bytes)]

Network Throughput: concurrent ttcp tputs

[Figure: throughput (Mbits/s, log scale) versus concurrent ttcp transmits (1, 2, 4, 8, 16, 24, 32; averaged over runs). Series: per-connection throughput and aggregate throughput, each under Buffer Policy 1 and Buffer Policy 2]


Baseline Scalability Analysis using empirical inputs

Update of content: 0.1% avg, 2.4% maximum

Network throughput: 8 servers, thus 6.2 Mbits/sec to each

Server throughput (local rsync actions): master 11.4 Mbits/sec, slave 8.18 Mbits/sec

Baseline Scalability Analysis: end-to-end update latency

| Content channel size | Updates (avg / max) | Master processing latency (avg / max) | Network latency (avg / max) | Slave processing latency (avg / max) | End-to-end update latency (avg / max) |
|---|---|---|---|---|---|
| 10 GB | 10 MB / 240 MB | 7 sec / 168 sec | 12.9 sec / 309 sec | 9.7 sec / 233 sec | 29.6 sec / 710 sec |
| 100 GB | 100 MB / 2.4 GB | 70 sec / 28 min | 129 sec / 51.5 min | 97 sec / 38.8 min | 296 sec / 118.3 min |
| 1 TB | 1 GB / 24 GB | 11.7 min / 280.8 min | 21.5 min / 516 min | 16.1 min / 386.4 min | 49.3 min / 19.72 hrs |
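The latency figures are consistent with a simple model in which each stage takes (update size in bits) / (stage throughput) and the three stages run one after another. The sketch below reproduces that arithmetic using the empirical throughputs from the baseline inputs; the function names and the convention 1 MB = 10^6 bytes are assumptions made for the example.

```python
MASTER_MBITS = 11.4    # master-side rsync+ throughput, Mbits/sec
NETWORK_MBITS = 6.2    # per-server network throughput with 8 servers, Mbits/sec
SLAVE_MBITS = 8.18     # slave-side rsync+ throughput, Mbits/sec


def stage_seconds(update_mb: float, mbits_per_sec: float) -> float:
    """Time for one stage to process an update, assuming 1 MB = 8 Mbits."""
    return update_mb * 8 / mbits_per_sec


def end_to_end_seconds(update_mb: float) -> float:
    """Sequential model: master processing, then network transfer, then slave processing."""
    return sum(
        stage_seconds(update_mb, rate)
        for rate in (MASTER_MBITS, NETWORK_MBITS, SLAVE_MBITS)
    )


# Average and maximum updates for 10 GB, 100 GB, and 1 TB channels, in MB.
for update_mb in (10, 240, 100, 2400, 1000, 24000):
    print(f"{update_mb:6d} MB update: {end_to_end_seconds(update_mb):9.1f} sec")
```

For a 10 MB update the model gives about 29.7 seconds, which agrees with the 29.6 sec entry up to rounding of the individual stage latencies.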


Conclusions

Our work creates a scalable design for a filesystem-level data synchronization tool

Measurements on current, untuned systems suggest that content on the order of 100 GB can be handled for the initial server set

For TB-scale content, system advances will need to provide speed-ups:

Tuning

Hardware

Distributed processing