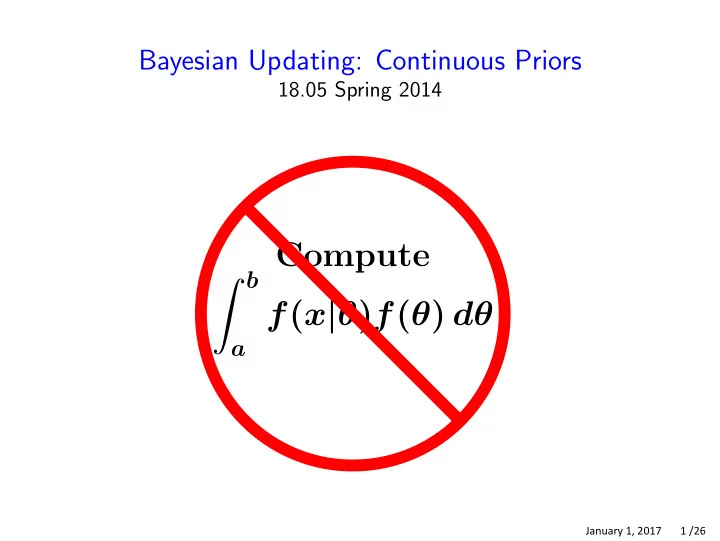

Bayesian Updating: Continuous Priors

18.05 Spring 2014

Compute $\int_a^b f(x\mid\theta)\,f(\theta)\,d\theta$
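As a rough numerical illustration of an integral of this form, the sketch below approximates $\int_a^b f(x\mid\theta)\,f(\theta)\,d\theta$ with a midpoint Riemann sum. The binomial likelihood (6 successes in 10 trials), the flat prior on $[0, 1]$, and the helper name `total_probability` are assumptions chosen for the example; they are not taken from the slides.

```python
from math import comb


def total_probability(likelihood, prior, a, b, steps=100_000):
    """Midpoint-rule approximation of the integral of likelihood * prior over [a, b]."""
    width = (b - a) / steps
    total = 0.0
    for k in range(steps):
        theta = a + (k + 0.5) * width  # midpoint of the k-th subinterval
        total += likelihood(theta) * prior(theta)
    return total * width


# Assumed example: binomial likelihood for 6 successes in 10 trials.
def likelihood(theta):
    return comb(10, 6) * theta**6 * (1 - theta) ** 4


# Assumed example: flat prior f(theta) = 1 on [0, 1].
def flat_prior(theta):
    return 1.0


p_data = total_probability(likelihood, flat_prior, 0.0, 1.0)
print(p_data)
```

With a flat prior, every count of successes from 0 to 10 is equally likely, so the value should be close to $1/11$.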

January 1, 2017 1 /26


Beta distribution

Beta($a$, $b$) has density

$$f(\theta) = \frac{(a+b-1)!}{(a-1)!\,(b-1)!}\,\theta^{a-1}(1-\theta)^{b-1}$$
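A minimal sketch of the Beta($a$, $b$) density for positive integers $a$ and $b$, using the factorial form of the normalizing constant, $(a+b-1)!/((a-1)!\,(b-1)!)$. The function names `beta_pdf` and `integrate_midpoint` are illustrative, not from the slides. The check integrates the density numerically to confirm that the constant normalizes it.

```python
from math import factorial


def beta_pdf(theta, a, b):
    """Beta(a, b) density at theta, for positive integers a and b."""
    # Normalizing constant (a+b-1)! / ((a-1)! (b-1)!); always an integer.
    coeff = factorial(a + b - 1) // (factorial(a - 1) * factorial(b - 1))
    return coeff * theta ** (a - 1) * (1 - theta) ** (b - 1)


def integrate_midpoint(func, lo, hi, steps=100_000):
    """Midpoint-rule approximation of the integral of func over [lo, hi]."""
    width = (hi - lo) / steps
    return sum(func(lo + (k + 0.5) * width) for k in range(steps)) * width


# Example: beta(5, 5) has normalizing constant 9!/(4! 4!) = 630.
area = integrate_midpoint(lambda t: beta_pdf(t, 5, 5), 0.0, 1.0)
print(area)
```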


$\dfrac{9!}{4!\,4!}$

$\dbinom{10}{6}$


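$\dfrac{20!}{11!\,8!}\,\theta^{11}(1-\theta)^{8}$. Since the pdf of beta(12, 9) integrates to 1 we have

$$\int_0^1 \frac{20!}{11!\,8!}\,\theta^{11}(1-\theta)^{8}\,d\theta = 1 \quad\Longrightarrow\quad \int_0^1 \theta^{11}(1-\theta)^{8}\,d\theta = \frac{11!\,8!}{20!}.$$

Hence

$$\int_0^1 \frac{19!}{10!\,8!}\,\theta^{11}(1-\theta)^{8}\,d\theta = \frac{19!}{10!\,8!}\cdot\frac{11!\,8!}{20!} = \frac{11}{20}.$$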
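The normalization trick can be checked with exact arithmetic. Because a Beta($a$, $b$) density integrates to 1, $\int_0^1 \theta^{a-1}(1-\theta)^{b-1}\,d\theta = (a-1)!\,(b-1)!/(a+b-1)!$ for positive integers $a$, $b$. The sketch below applies this with $a = 12$, $b = 9$; the helper name `beta_integral` is illustrative, and the coefficient $19!/(10!\,8!)$ is assumed to be the beta(11, 9) normalizing constant.

```python
from fractions import Fraction
from math import factorial


def beta_integral(a, b):
    """Exact integral of theta^(a-1) * (1-theta)^(b-1) over [0, 1], integers a, b >= 1."""
    return Fraction(factorial(a - 1) * factorial(b - 1), factorial(a + b - 1))


# Integral of theta^11 (1-theta)^8, read off from the beta(12, 9) pdf.
integral = beta_integral(12, 9)  # equals 11! 8! / 20!

# Assumed coefficient: normalizing constant of beta(11, 9), i.e. 19!/(10! 8!).
coeff = Fraction(factorial(19), factorial(10) * factorial(8))

result = coeff * integral
print(result)
```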




$e^{-(y-\mu)^2/2\sigma^2}$


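Prior: $f(\theta) = c_1\, e^{-(\theta-3)^2/2}$

Likelihood: $f(x\mid\theta) = c_2\, e^{-(x-\theta)^2/8}$, so for the data $x = 5$: $f(5\mid\theta) = c_2\, e^{-(5-\theta)^2/8}$

Bayes numerator:

$$f(5\mid\theta)\,f(\theta)\,d\theta\,dx = c_3\, e^{-(\theta-3)^2/2}\, e^{-(5-\theta)^2/8}\,d\theta\,dx = c_3\, e^{-\frac{5}{8}\left[\theta^2 - \frac{34}{5}\theta + \frac{61}{5}\right]}\,d\theta\,dx = c_3\, e^{-\frac{5}{8}\left[(\theta - 17/5)^2 + \frac{61}{5} - (17/5)^2\right]}\,d\theta\,dx$$

Pulling the constant factor out of the exponential:

$$c_3\, e^{-\frac{5}{8}\left(\frac{61}{5} - (17/5)^2\right)}\, e^{-\frac{5}{8}(\theta - 17/5)^2} = c_4\, e^{-\frac{5}{8}(\theta - 17/5)^2} = c_4\, e^{-\frac{(\theta - 17/5)^2}{2\cdot\frac{4}{5}}}$$

So the posterior is $\text{N}\!\left(\dfrac{17}{5}, \dfrac{4}{5}\right)$.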
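The same posterior can be obtained without completing the square by using the standard normal-normal update formulas: with $a = 1/\sigma_{\text{prior}}^2$ and $b = n/\sigma^2$, the posterior mean is $(a\,\mu_{\text{prior}} + b\,\bar{x})/(a+b)$ and the posterior variance is $1/(a+b)$. The sketch below is a minimal check of the N(17/5, 4/5) result under those formulas; the function name `normal_normal_update` is illustrative.

```python
from fractions import Fraction


def normal_normal_update(prior_mean, prior_var, data, data_var):
    """Posterior (mean, variance) for a normal prior and normal data with known variance."""
    n = len(data)
    a = Fraction(1) / prior_var        # precision of the prior
    b = Fraction(n) / data_var         # total precision of the data
    xbar = Fraction(sum(data)) / n     # sample mean
    post_mean = (a * prior_mean + b * xbar) / (a + b)
    post_var = 1 / (a + b)
    return post_mean, post_var


# Prior N(3, 1), likelihood N(theta, 4), one observation x = 5.
post_mean, post_var = normal_normal_update(3, 1, [5], 4)
print(post_mean, post_var)
```

Exact fractions are used so the result can be compared directly with 17/5 and 4/5.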


$\dfrac{1}{\theta}$ \quad $\dfrac{d\theta}{\theta}$ \quad $\dfrac{c}{\theta}\,d\theta$

0.25


θ

x1


1

θ

0.25 θ2

x2


$\int_0^1 \theta\,d\theta = 1/2$






[Figure: two plots of density against x.]


MIT OpenCourseWare https://ocw.mit.edu

18.05 Introduction to Probability and Statistics

Spring 2014 For information about citing these materials or our Terms of Use, visit: https://ocw.mit.edu/terms.