1

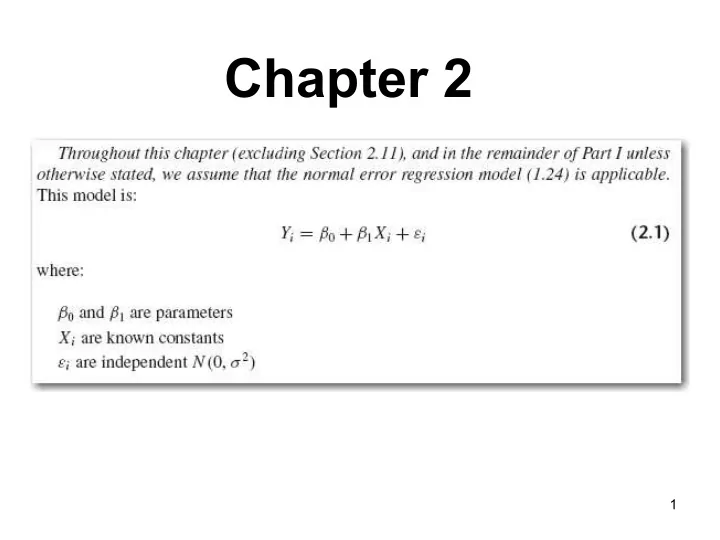

Chapter 2

2

2.1: Inferences about β1

Test of interest throughout regression: H0: β1 = 0 versus Ha: β1 ≠ 0

Need the sampling distribution of the estimator b1.

Idea: If b1 can be written as a linear combination of the responses (which are independent and normally distributed), then from (A.40) we immediately have the probability (sampling) distribution of b1!

3

Easy:

$$b_1 = \sum_{i=1}^{n} k_i Y_i$$

where:

$$k_i = \frac{X_i - \bar{X}}{\sum_{j=1}^{n} (X_j - \bar{X})^2}$$

So immediately from (A.40):

b1 ~ Normal (E{b1}, Var{b1})

But we still need to find E{b1} and Var{b1}.

4

Fun facts about the ki

Fun Fact 1. $\sum k_i = 0$

Fun Fact 2. $\sum k_i X_i = 1$

Fun Fact 3. $\sum k_i^2 = \dfrac{1}{\sum (X_i - \bar{X})^2}$
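As a quick numerical check, the sketch below computes the weights k_i = (X_i − X̄)/Σ(X_j − X̄)² for a made-up set of predictor values and evaluates the three sums usually quoted for them: Σk_i, Σk_i X_i and Σk_i². The X values and the helper name `k_weights` are illustrative assumptions, not taken from the slides.

```python
# Hypothetical predictor values, chosen only for illustration.
X = [20.0, 30.0, 50.0, 70.0, 80.0, 90.0, 110.0]

def k_weights(x):
    """Return the weights k_i and the sum of squares of X about its mean."""
    xbar = sum(x) / len(x)
    ssx = sum((xi - xbar) ** 2 for xi in x)
    return [(xi - xbar) / ssx for xi in x], ssx

k, ssx = k_weights(X)
sum_k = sum(k)                                  # expected to be 0 up to rounding
sum_kx = sum(ki * xi for ki, xi in zip(k, X))   # expected to be 1 up to rounding
sum_k2 = sum(ki ** 2 for ki in k)               # expected to equal 1 / ssx

print(sum_k, sum_kx, sum_k2, 1 / ssx)
```

With these sums in hand, E{b1} = Σk_i E{Y_i} and Var{b1} = σ² Σk_i² follow by direct substitution.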

5

Using the “fun facts”, find E{b1} and Var{b1}

= ?

6

Major Results

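So it follows from (A.59) that:

$$b_1 \sim \text{Normal}\left(\beta_1,\ \sigma^2\{b_1\}\right), \qquad \sigma^2\{b_1\} = \frac{\sigma^2}{\sum (X_i - \bar{X})^2}$$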

7

Picture of t density functions with various degrees of freedom (df)

[Figure: f(t) plotted against t for t from -4 to 4; f(t) ranges from 0.0 to 0.4]

8

t distribution

- Ratio of a standard normal to the square root of a scaled chi-squared distribution with n degrees of freedom.
- n of about 30 is “close” to a standard normal but not exactly so
- n → ∞, tends to a normal distribution
- n = 1, we get a t with 1 degree of freedom, otherwise known as the _____ distribution.
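The limiting cases in the list above can be checked numerically. The sketch below evaluates the t density from its closed form using only the standard library; the function names `t_pdf` and `normal_pdf` are illustrative. It compares the 1-df density with 1/(π(1 + t²)) and compares larger df values with the standard normal density.

```python
import math

def t_pdf(t, df):
    """Density of Student's t with df degrees of freedom, evaluated at t."""
    log_c = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
             - 0.5 * math.log(df * math.pi))
    return math.exp(log_c - (df + 1) / 2 * math.log1p(t * t / df))

def normal_pdf(t):
    """Standard normal density evaluated at t."""
    return math.exp(-0.5 * t * t) / math.sqrt(2 * math.pi)

for df in (1, 5, 30, 1000):
    # The gap at t = 0 shrinks as df grows.
    print(df, t_pdf(0.0, df), normal_pdf(0.0) - t_pdf(0.0, df))

# With df = 1 the density reduces to 1 / (pi * (1 + t^2)).
print(t_pdf(1.5, 1), 1 / (math.pi * (1 + 1.5 ** 2)))
```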

10

Confidence interval for β1

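From the sampling distribution of b1:

$$P\left\{ t(\alpha/2;\, n-2) \le \frac{b_1 - \beta_1}{s\{b_1\}} \le t(1-\alpha/2;\, n-2) \right\} = 1 - \alpha$$

Rearranging inside the brackets:

$$P\left\{ b_1 - t(1-\alpha/2;\, n-2)\, s\{b_1\} \le \beta_1 \le b_1 + t(1-\alpha/2;\, n-2)\, s\{b_1\} \right\} = 1 - \alpha$$

Result:

$$b_1 \pm t(1-\alpha/2;\, n-2)\, s\{b_1\}$$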

11

Hypothesis Tests for β1

Step 1: Null and alternative hypotheses: H0: β1 = 0 versus Ha: β1 ≠ 0

Step 2: Test statistic: $t^* = b_1 / s\{b_1\}$

Step 3: Critical Region (or see p-value): reject H0 if $|t^*| > t(1-\alpha/2;\, n-2)$

12

S = sqrt (2384) = 48.82

St. Dev.(b1) = sqrt (2384/19800) = 0.3470

t = 3.57 / 0.3470 = 10.29 = sqrt (105.8757)

Note that 19800 = Var(X) times 24
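The arithmetic in these notes can be reproduced in a few lines. The sketch below uses only the summary numbers quoted in the slides (MSE ≈ 2384, Σ(Xi − X̄)² = 19800, b1 = 3.570202); the variable names are illustrative. Because MSE is rounded to 2384 here, the results agree with the software output only to about three significant figures.

```python
import math

mse = 2384.0      # mean square error, rounded as in the slide
ssx = 19800.0     # sum of squares of X about its mean (Var(X) times 24)
b1 = 3.570202     # estimated slope

s = math.sqrt(mse)            # root mean square error
s_b1 = math.sqrt(mse / ssx)   # estimated standard deviation of b1
t_star = b1 / s_b1            # t ratio for testing beta1 = 0

print(round(s, 2), round(s_b1, 4), round(t_star, 2), round(t_star ** 2, 2))
```

Squaring the t ratio gives a value close to the F ratio in the ANOVA table, which is the F = t² relationship for simple linear regression.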

2.3 Considerations for Inferences on β0 and β1

- Non-normality of errors
  – Small departure not too big of a deal
  – For very large n, asymptotically okay
- Confidence coefficients interpretation
  – The predictor variables are fixed (not random)
- Xi spacing impacts variance of b1
- Power computable via non-centrality parameter of a t-distribution

13

Predictions…and their uncertainties

- Mean function at given value of the predictor variable (with confidence limits)
  – For many future lots of size 80, what should the average number of hours be?
- Future response at given value of the predictor variable (with prediction limits)
  – A client dropped off an order of size 80, what do we expect for the number of hours this order will take?

14

15

2.4--Interval Estimation of E{Yh}

Point estimate for the mean at X = Xh:

$$\hat{Y}_h = b_0 + b_1 X_h$$

For the interval estimate for the mean at X = Xh, we require the sampling distribution of $\hat{Y}_h$:

- 1. Distribution?

- 2. Mean?

16

Variance: Show $\hat{Y}_h$ is a linear combination of the responses:

$\hat{Y}_h$ is $b_0 + b_1 X_h$; the $b_0$ term is $\bar{Y} - b_1 \bar{X}$

17

18

i.e., a good idea to show b0 and b1 are linear combinations of the Yi’s

19

20

Results:

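Mean: $E\{\hat{Y}_h\} = E\{Y_h\} = \beta_0 + \beta_1 X_h$

Variance: $\sigma^2\{\hat{Y}_h\} = \sigma^2 \left[ \dfrac{1}{n} + \dfrac{(X_h - \bar{X})^2}{\sum (X_i - \bar{X})^2} \right]$

Estimated Variance: $s^2\{\hat{Y}_h\} = MSE \left[ \dfrac{1}{n} + \dfrac{(X_h - \bar{X})^2}{\sum (X_i - \bar{X})^2} \right]$

Result: $\hat{Y}_h \sim \text{Normal}\left( E\{Y_h\},\ \sigma^2\{\hat{Y}_h\} \right)$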

21

Major Result

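Estimated variance of prediction: $s^2\{\hat{Y}_h\} = MSE \left[ \dfrac{1}{n} + \dfrac{(X_h - \bar{X})^2}{\sum (X_i - \bar{X})^2} \right]$

So: $\dfrac{\hat{Y}_h - E\{Y_h\}}{s\{\hat{Y}_h\}} \sim t(n-2)$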

22

1 – α confidence limits for E{Yh}:

$$\hat{Y}_h \pm t(1-\alpha/2;\, n-2)\, s\{\hat{Y}_h\}$$

Question: At which value of Xh is this confidence interval the smallest? (i.e., where is your estimation most precise?)
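One way to explore the question is to tabulate the interval width over several values of Xh. The sketch below uses the lot-size summary numbers that appear elsewhere in these slides (n = 25, MSE ≈ 2384, Σ(Xi − X̄)² = 19800, fitted line 62.365859 + 3.570202 X, mean response 312.28). X̄ is not printed in the slides, so it is recovered from the fact that the fitted line passes through (X̄, Ȳ). The critical value 2.069 for t(0.975; 23) is an assumed table value, and the function name `mean_ci` is illustrative.

```python
import math

n, mse, ssx = 25, 2384.0, 19800.0
b0, b1 = 62.365859, 3.570202
ybar = 312.28
xbar = (ybar - b0) / b1   # the fitted line passes through (xbar, ybar)
t_crit = 2.069            # assumed table value of t(0.975; 23)

def mean_ci(xh):
    """Confidence limits for the mean response E{Yh} at X = xh."""
    y_hat = b0 + b1 * xh
    se = math.sqrt(mse * (1 / n + (xh - xbar) ** 2 / ssx))
    return y_hat - t_crit * se, y_hat + t_crit * se

widths = {}
for xh in (30, 50, 70, 90, 110):
    lower, upper = mean_ci(xh)
    widths[xh] = upper - lower
    print(xh, round(lower, 1), round(upper, 1), round(widths[xh], 1))
```

The (Xh − X̄)² term is the only part of the standard error that depends on Xh, so the width grows symmetrically as Xh moves away from X̄.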

23

Predicting a Future Observation at Xh

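We will use $\hat{Y}_h$, and our prediction error will be:

$$\text{pred} = Y_{h(new)} - \hat{Y}_h$$

The variance of our prediction error is:

$$\sigma^2\{\text{pred}\} = \sigma^2 + \sigma^2\{\hat{Y}_h\} = \sigma^2 \left[ 1 + \frac{1}{n} + \frac{(X_h - \bar{X})^2}{\sum (X_i - \bar{X})^2} \right]$$

Estimated by:

$$s^2\{\text{pred}\} = MSE \left[ 1 + \frac{1}{n} + \frac{(X_h - \bar{X})^2}{\sum (X_i - \bar{X})^2} \right]$$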

24

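1 – α prediction limits for Yh(new):

$$\hat{Y}_h \pm t(1-\alpha/2;\, n-2)\, s\{\text{pred}\}$$

where:

$$s^2\{\text{pred}\} = MSE + s^2\{\hat{Y}_h\}$$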
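To see how much wider prediction limits are than confidence limits for the mean, the sketch below evaluates both at a lot size of 80, using the summary numbers quoted elsewhere in these slides. As assumptions: X̄ is recovered from the fitted line and the mean response, 2.069 is taken as the table value of t(0.975; 23), and the function name `interval_half_widths` is illustrative.

```python
import math

n, mse, ssx = 25, 2384.0, 19800.0
b0, b1 = 62.365859, 3.570202
xbar = (312.28 - b0) / b1   # fitted line passes through (xbar, ybar)
t_crit = 2.069              # assumed table value of t(0.975; 23)

def interval_half_widths(xh):
    """Half-widths of the interval for E{Yh} and of the interval for Yh(new)."""
    s2_mean = mse * (1 / n + (xh - xbar) ** 2 / ssx)   # estimated variance of Y-hat
    s2_pred = mse + s2_mean                            # adds variance of a new observation
    return t_crit * math.sqrt(s2_mean), t_crit * math.sqrt(s2_pred)

xh = 80
y_hat = b0 + b1 * xh
half_mean, half_pred = interval_half_widths(xh)

print("point estimate:", round(y_hat, 2))
print("mean response limits:", round(y_hat - half_mean, 1), round(y_hat + half_mean, 1))
print("prediction limits:", round(y_hat - half_pred, 1), round(y_hat + half_pred, 1))
```

The extra MSE term does not shrink as n grows, so prediction limits stay wide even when the mean response is estimated very precisely.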

25

Example: GPA data for 2000

CH01PR19

26

JMP Pro 11 Analysis

27

Obtain Estimation Interval and Prediction Interval at Xh = 25

And do all of the other Xh-points while you’re at it….

28

“Easy” with Fit Y by X

29

30

Y hours = 62.365859 + 3.570202*X lot size

Summary of Fit

| Statistic | Value |
| --- | --- |
| RSquare | 0.821533 |
| RSquare Adj | 0.813774 |
| Root Mean Square Error | 48.82331 |
| Mean of Response | 312.28 |
| Observations (or Sum Wgts) | 25 |

Analysis of Variance

| Source | DF | Sum of Squares | Mean Square | F Ratio | Prob > F |
| --- | --- | --- | --- | --- | --- |
| Model | 1 | 252377.58 | 252378 | 105.8757 | <.0001* |
| Error | 23 | 54825.46 | 2384 | | |
| C. Total | 24 | 307203.04 | | | |

Parameter Estimates

| Term | Estimate | Std Error | t Ratio | Prob>\|t\| |
| --- | --- | --- | --- | --- |
| Intercept | 62.365859 | 26.17743 | 2.38 | 0.0259* |
| X lot size | 3.570202 | 0.346972 | 10.29 | <.0001* |

S = sqrt (2384) = 48.82

St. Dev.(b1) = sqrt (2384/19800) = 0.3470

t = 3.57 / 0.3470 = 10.29 = sqrt (105.8757)

Note that 19800 = Var(X) times 24

- Lot size, variance is 825.
- 825*24 = 19,800

31

Variance 825 times 24 = 19,800

32

33

Partitioning total sums of squares

34

Variation “Explained” by Regression

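Unexplained variation before reg.: $SSTO = \sum (Y_i - \bar{Y})^2$

Unexplained variation after reg.: $SSE = \sum (Y_i - \hat{Y}_i)^2$

So what variation was explained by regression? SSR = SSTO - SSE

Amazing fact: $SSR = \sum (\hat{Y}_i - \bar{Y})^2$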

35

Aside: Show SSTO = SSR + SSE

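$$\sum (Y_i - \bar{Y})^2 = \sum (\hat{Y}_i - \bar{Y})^2 + \sum (Y_i - \hat{Y}_i)^2 + 2 \sum (\hat{Y}_i - \bar{Y})(Y_i - \hat{Y}_i)$$

But: $2 \sum (\hat{Y}_i - \bar{Y})(Y_i - \hat{Y}_i)$ = ?

So: SSTO = SSR + SSE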
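The partition can also be checked numerically. The sketch below fits a least squares line to a small made-up data set (the X and Y values are purely hypothetical) and computes SSTO, SSE, SSR and the cross-product term Σ(Ŷi − Ȳ)(Yi − Ŷi) directly from their definitions.

```python
# Hypothetical data, used only to illustrate the identity.
X = [1.0, 2.0, 3.0, 4.0, 5.0]
Y = [2.1, 3.9, 6.2, 7.8, 10.1]

n = len(X)
xbar = sum(X) / n
ybar = sum(Y) / n

# Least squares estimates of slope and intercept.
ssx = sum((x - xbar) ** 2 for x in X)
b1 = sum((x - xbar) * (y - ybar) for x, y in zip(X, Y)) / ssx
b0 = ybar - b1 * xbar
fitted = [b0 + b1 * x for x in X]

ssto = sum((y - ybar) ** 2 for y in Y)
sse = sum((y - f) ** 2 for y, f in zip(Y, fitted))
ssr = sum((f - ybar) ** 2 for f in fitted)
cross = sum((f - ybar) * (y - f) for y, f in zip(Y, fitted))

print(ssto, ssr + sse, cross)
```

The cross-product term vanishes up to rounding because least squares residuals sum to zero and are uncorrelated with the fitted values.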

36

Mean Squares

37

ANOVA Table

38

F test

Test Statistic: $F^* = MSR / MSE$

Decision Rule: reject H0 if $F^* > F(1-\alpha;\, 1,\, n-2)$

39

Result: F = t²

See text page 71. t is a Normal/sqrt(Chi-sq) so if you square t you get ______

40

Example: GPA 2000 Data

F* =

Critical value at .05 level:

Linear Fit

GPA = 2.1140493 + 0.0388271*ACT

Summary of Fit

| Statistic | Value |
| --- | --- |
| RSquare | 0.07262 |
| RSquare Adj | 0.064761 |
| Root Mean Square Error | 0.623125 |
| Mean of Response | 3.07405 |
| Observations (or Sum Wgts) | 120 |

Analysis of Variance

| Source | DF | Sum of Squares | Mean Square | F Ratio | Prob > F |
| --- | --- | --- | --- | --- | --- |
| Model | 1 | 3.587846 | 3.58785 | 9.2402 | 0.0029* |
| Error | 118 | 45.817608 | 0.38828 | | |
| C. Total | 119 | 49.405454 | | | |

Parameter Estimates

| Term | Estimate | Std Error | t Ratio | Prob>\|t\| |
| --- | --- | --- | --- | --- |
| Intercept | 2.1140493 | 0.320895 | 6.59 | <.0001* |
| ACT | 0.0388271 | 0.012773 | 3.04 | 0.0029* |
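The quantities in this output are tied together by simple arithmetic. The sketch below recomputes the mean squares, the F ratio, R² and the squared t ratio from the sums of squares, slope estimate and standard error printed for the GPA data; the variable names are illustrative.

```python
# Values copied from the GPA output.
ssr, sse, ssto = 3.587846, 45.817608, 49.405454
df_model, df_error = 1, 118
b1, s_b1 = 0.0388271, 0.012773

msr = ssr / df_model
mse = sse / df_error
f_ratio = msr / mse          # ANOVA F statistic
r_square = ssr / ssto        # coefficient of determination
t_ratio = b1 / s_b1          # t statistic for the slope

print(round(mse, 5), round(f_ratio, 4), round(r_square, 5), round(t_ratio, 2))
print(round(t_ratio ** 2, 2))   # close to the F ratio, up to rounding of the inputs
```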

41

2.8---General Linear Test Approach

Compares any “full” and “reduced” models and answers the question: Do the additional terms in the full model explain significant additional variation? (Are they needed?) Examples: Full Model Reduced Model

42

General Linear Test Approach

How much additional variation is explained by the full model? SSE(R) − SSE(F)

H0: Reduced model is true (R)

Ha: Full model is true (F)

Result: Amazingly general test

$$F^* = \frac{SSE(R) - SSE(F)}{df_R - df_F} \div \frac{SSE(F)}{df_F}$$

43

Example: Test: β1 = 0 versus β1 ≠ 0

Full model: Yi = β0 + β1 Xi + εi, corresponding SSE(F) = SSE

Reduced model: Yi = β0 + εi (model under H0), corresponding SSE(R) = SSTO

Test statistic is F = MSR/MSE (see pages 72-73)
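A minimal sketch of the general linear test statistic, applied to this example with the lot-size ANOVA numbers from earlier in the slides (SSE = 54825.46 on 23 df, SSTO = 307203.04 on 24 df). The function name `general_linear_test` is illustrative; the same formula applies to any nested pair of full and reduced models.

```python
def general_linear_test(sse_reduced, df_reduced, sse_full, df_full):
    """F* = [(SSE(R) - SSE(F)) / (df_R - df_F)] / [SSE(F) / df_F]."""
    numerator = (sse_reduced - sse_full) / (df_reduced - df_full)
    denominator = sse_full / df_full
    return numerator / denominator

# Full model: intercept and slope, so SSE(F) = SSE with n - 2 = 23 df.
# Reduced model: intercept only, so SSE(R) = SSTO with n - 1 = 24 df.
f_star = general_linear_test(sse_reduced=307203.04, df_reduced=24,
                             sse_full=54825.46, df_full=23)
print(round(f_star, 4))
```

For this pair of models the numerator is SSR/1 and the denominator is MSE, so the statistic reduces to MSR/MSE and matches the F Ratio in the ANOVA table.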

44

2.9--Measures of Association

R²: Coefficient of determination

Variation explained by the regression is: SSR

Total variation is: SSTO

What fraction of total variation was explained by the regression?

R² = SSR/SSTO = 1 - SSE/SSTO (Rsquare)

(Over-used, over-rated, possibly misleading statistic!) Do not confuse with causation. As a screening tool in model selection, it is helpful.

Correlation illustrations

45

46

[Figure: scatterplots of Y1, Y2, Y3, and Y4 against X]

R²?

47

Answers:

- S = 0.8194, R-Sq = 97.7%, R-Sq(adj) = 97.6%
- S = 4.097, R-Sq = 63.4%, R-Sq(adj) = 62.6%
- S = 0.8194, R-Sq = 0.1%, R-Sq(adj) = 0.0%
- S = 0, R-Sq = 100.0%, R-Sq(adj) = 100.0%

48

[Figure: scatterplots of Y1, Y2, Y3, and Y4 against X]

R²? 0.977, 0.634, 0.001, 0.99+

49

Misunderstandings about R²:

- 1. High R² implies precise predictions. (Not necessarily!)
- 2. High R² implies good fit. (Not necessarily!)

[Figure: Regression Plot of Y5 against X. Y5 = -634.823 + 53.5260 X, S = 45.7285, R-Sq = 90.6 %, R-Sq(adj) = 90.4 %]

[Figure: plot of Y6 against X1]

50

Misunderstandings about R²:

- 3. Low R² implies X and Y not related. (Not necessarily!)

Example 1: GPA data!

[Figure: Regression Plot of GPA against ACT. GPA = 1.40765 + 0.0635624 ACT, S = 0.567359, R-Sq = 14.1 %, R-Sq(adj) = 13.5 %]

Example 2: Wrong model:

[Figure: Regression Plot of Y7 against X. Y7 = 21.2853 - 0.294808 X, S = 9.14570, R-Sq = 0.7 %, R-Sq(adj) = 0.0 %]

51

Misunderstandings about R²:

- 3. Low R² implies X and Y not related. (Not necessarily!)

Example 3: Low R² may result from truncation

[Figure: plot of Y2 against X]

52

Coefficient of Correlation r = ±√R²

where the sign is given by the sign of b1
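As a small illustration of the sign rule, the sketch below recovers r from R² and the slope estimate. The inputs in the first call are the lot-size values quoted earlier in the slides (R² = 0.821533, b1 = 3.570202); the function name `correlation_from_r_square` and the second call are illustrative assumptions.

```python
import math

def correlation_from_r_square(r_square, b1):
    """Return r = +sqrt(R^2) if the slope is non-negative, else -sqrt(R^2)."""
    return math.copysign(math.sqrt(r_square), b1)

r = correlation_from_r_square(0.821533, 3.570202)
print(round(r, 4))

# A negative slope with the same R^2 flips the sign of r.
print(round(correlation_from_r_square(0.821533, -3.570202), 4))
```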

Anscombe’s data if time permits now

53

- Resides in 8 columns in Help/Data Sets/Regression
- Can stack x columns, y columns, add count and fit all 4 at once
- Fasten your seatbelt
- You should do these fits yourself!

54

Anscombe’s data if time permits now

55

More Good Stuff

Material in Chapter 2 but glossed over here

- Bivariate normal model (X also a random variable)
- Fisher’s z transformation for correlation in bivariate normal model
- Spearman’s rank correlation coefficient (replace data with marginal ranks; run usual analysis)

56

- Already did mean function, future observation (JMP).

57

2.10 Cautionary Notes

- Inferences for the future

- X needs to be estimated, as well (not fixed)

- Levels of X outside range of observations

- β1 ≠ 0 does not imply cause-effect

- E(Y) and future Y go with single Xh

- X subject to measurement errors

58

2.11 Normal Correlation Models

- Marginals are normal, conditional dists. are normal. Tests for ρ can be applied (Fisher’s z transformation).

59

Rank Regression

- Rank as in sorted not sordid
- Spearman’s rank correlation coefficient is regular correlation coefficient with raw X’s replaced by their ranks.
- Ranking procedures under-utilized compared to normal theory methods that are over-utilized.
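The "replace data by ranks, then run the usual analysis" recipe can be sketched with the standard library alone. The code below ranks each variable (giving tied values their average rank, which is one common convention and an assumption here) and then applies the ordinary correlation formula to the ranks. The function names and the example data are illustrative.

```python
import math

def average_ranks(values):
    """Ranks starting at 1; tied values share the average of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for pos in range(i, j + 1):
            ranks[order[pos]] = shared
        i = j + 1
    return ranks

def pearson(x, y):
    """Ordinary product-moment correlation coefficient."""
    xbar, ybar = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - xbar) * (b - ybar) for a, b in zip(x, y))
    sxx = sum((a - xbar) ** 2 for a in x)
    syy = sum((b - ybar) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))

# Hypothetical monotone but nonlinear relationship.
x = [1, 2, 3, 4, 5]
y = [1, 8, 27, 64, 125]
print(pearson(x, y), spearman(x, y))
```

On a monotone but curved relationship like this one, the rank correlation is 1 while the ordinary correlation falls short of 1, which shows how ranking removes the dependence on the exact functional form.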

60

Exercises

- 1.6 (draw plot by hand), 1.7, 1.13, 1.16, 1.18, 1.19 (needs software and data disc that comes with the book), 1.23 (continuation of 1.19), 1.29, 1.32, 1.33, 1.34, 1.35, 1.36, 1.43

61

Exercises

- 2.1, 2.4, 2.6, 2.10, 2.13, 2.23, 2.34, 2.50, 2.51, 2.57
- Feel free to do more if this gets you more comfortable with the material.
- For example, you may wish to do the series of problems for copier maintenance all the way through as was done for the GPA data

62

Exercises. Chap. 1

- 1.6. intercept 200, slope is 5. small sigma. 10, 20, and 40 give 250, 300, 400 for y on
- 1.7. a. key word is exact. Plus/minus 1 standard deviation, … cannot say much without the distribution; b. 0.68 for the normal error distribution
- 1.13. a. observational…no control over the amount of time each person supposed to devote; b. “caused” v. “seem to be associated”; c. …. (seminar leader measures productivity); d. Control participation level

63

Exercises

- 1.16 Least squares estimates do not depend on normality. MLEs with normal distribution error give same estimators but LS validity not dependent on normality.
- 1.18 ei are observed values, εi are random variables whose means are each 0 but whose sum is a random variable
- GPA data…sounds familiar
- Let’s look at other ones as well (copier, airfreight, and plastic hardness) same issues

64

Exercises

- 1.29 “true” intercept happens to be zero. Collect data, fit intercept, could be > or < 0. If zero, do we know intercept is zero? Check on constrain through origin.
- 1.32 did this on an earlier handout; just in case see next slide

65

66

- 1.33 and 1.34 easier than with both intercept and slope
- 1.35 did that on the handout also since sum of residuals is zero

67

Appendix C.2 data set saved the file with col. headings

69

Exercises Chap 2

- 2.1 a. CI for slope strictly positive; b. Present results that make sense

- 2.4 GPA data set. Look back at the CI

70

Exercises, chap 2.; 2.4 contin.

- p-value is low so null must go.

71

Exercise 2.6 airfreight baggage; 2.10

72

73