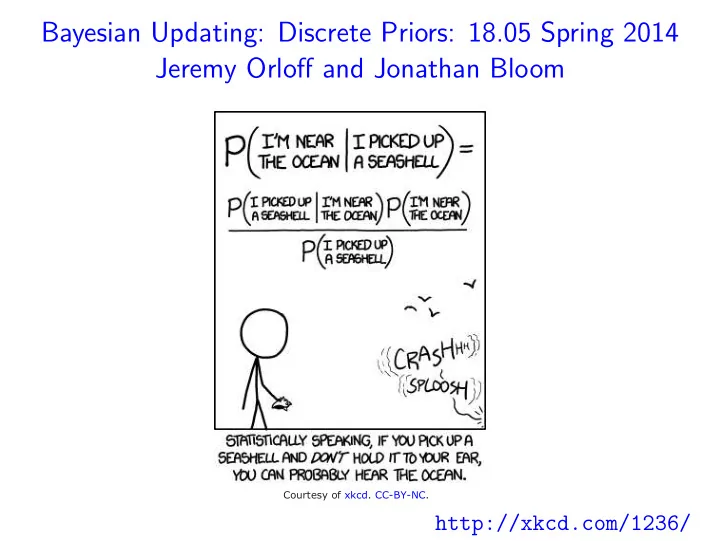

Bayesian Updating: Discrete Priors: 18.05 Spring 2014 Jeremy Orloff and Jonathan Bloom

Courtesy of xkcd. CC-BY-NC.


http://xkcd.com/1236/

Which treatment would you choose?

1. Treatment 1: cured 3 out of 3 patients in a trial.
2. Treatment 2:
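As a minimal sketch, and not the course's own worked example, the Python below applies Bayesian updating with a discrete prior to Treatment 1's trial data (3 cures in 3 patients). The three candidate cure probabilities, the uniform prior, and the helper name `bayes_update` are hypothetical choices made only for illustration. Each posterior probability is the prior times the likelihood, divided by the total probability of the data.

```python
from fractions import Fraction


def bayes_update(prior, likelihood):
    """Return the posterior for a discrete prior (hypothetical helper).

    prior: dict mapping hypothesis -> prior probability
    likelihood: dict mapping hypothesis -> P(data | hypothesis)
    """
    # Unnormalized posterior: prior times likelihood for each hypothesis.
    unnormalized = {h: prior[h] * likelihood[h] for h in prior}
    # Total probability of the data (law of total probability).
    total = sum(unnormalized.values())
    # Normalize so the posterior probabilities sum to 1.
    return {h: p / total for h, p in unnormalized.items()}


# Hypothetical hypotheses: the treatment's cure probability theta.
thetas = [Fraction(1, 5), Fraction(1, 2), Fraction(4, 5)]
# Hypothetical flat (uniform) prior over the three values.
prior = {t: Fraction(1, 3) for t in thetas}
# Treatment 1 data: 3 cures out of 3 patients, so P(data | theta) = theta**3.
likelihood = {t: t**3 for t in thetas}

posterior = bayes_update(prior, likelihood)
for t in thetas:
    print(f"theta = {t}: prior {prior[t]}, posterior {posterior[t]}")
```

Under these assumptions the data move most of the weight to theta = 4/5 (posterior 512/645, about 0.79), while theta = 1/5 drops to 8/645. A different prior or hypothesis set would give different numbers, which is why the choice of prior matters when comparing treatments with very small trials.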


May 29, 2014 2 / 16


MIT OpenCourseWare http://ocw.mit.edu

Spring 201

For information about citing these materials or our Terms of Use, visit: http://ocw.mit.edu/terms.