Outline

Context
Exact total variation minimization: Total variation and regularization; TV models; Minimization
Minimal cut (graph cut) as energy minimization: Notations; General principle; Maximum flow / minimal cut; Energy representation
Results: More results; Further results for 3D images
Conclusion: Conclusion; Perspectives

ARS Workshop

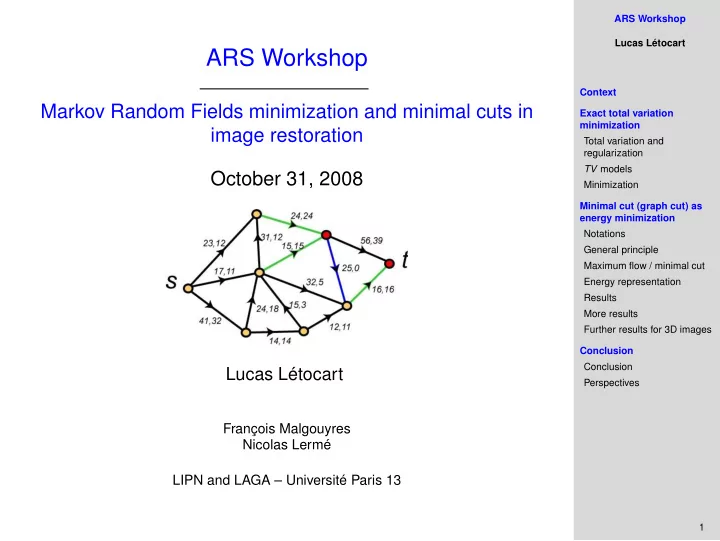

Markov Random Fields minimization and minimal cuts in image restoration

October 31, 2008

Lucas Létocart

François Malgouyres, Nicolas Lermé

LIPN and LAGA – Université Paris 13