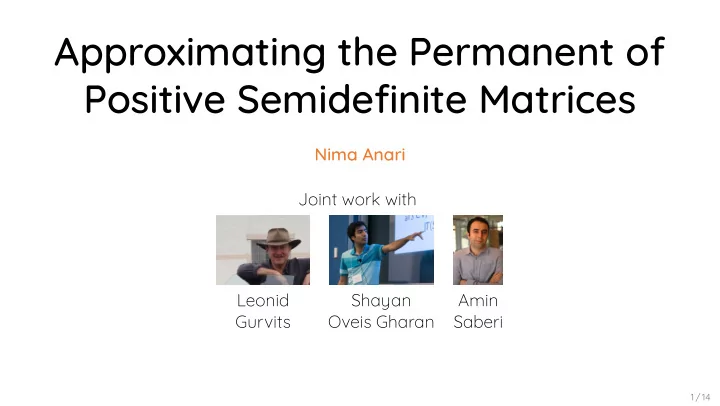

Approximating the Permanent of Positive Semidefinite Matrices Nima - PowerPoint PPT Presentation


Nima Anari
Joint work with Leonid Gurvits, Shayan Oveis Gharan, and Amin Saberi


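Determinant and Permanent

$$\det(M) = \sum_{\sigma \in S_n} \operatorname{sgn}(\sigma)\, M_{1,\sigma(1)} \cdots M_{n,\sigma(n)}, \qquad \operatorname{per}(M) = \sum_{\sigma \in S_n} M_{1,\sigma(1)} \cdots M_{n,\sigma(n)}$$

2 × 2 example: $M = \begin{bmatrix} a & b \\ c & d \end{bmatrix}$, so $\det(M) = ad - bc$ and $\operatorname{per}(M) = ad + bc$.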
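As a quick illustration, both sums can be evaluated literally over all of $S_n$. This is a brute-force sketch: the helper names `sign`, `det_bruteforce` and `per_bruteforce` are illustrative, indices are 0-based, and the cost is $O(n \cdot n!)$, so it is only meant for tiny matrices.

```python
from itertools import permutations
from math import prod

def sign(perm):
    """Sign of a permutation (tuple of 0-based indices), via counting inversions."""
    inversions = sum(1 for i in range(len(perm))
                     for j in range(i + 1, len(perm)) if perm[i] > perm[j])
    return -1 if inversions % 2 else 1

def det_bruteforce(M):
    """det(M) = sum over sigma of sgn(sigma) * M[0][sigma(0)] * ... * M[n-1][sigma(n-1)]."""
    n = len(M)
    return sum(sign(s) * prod(M[i][s[i]] for i in range(n))
               for s in permutations(range(n)))

def per_bruteforce(M):
    """per(M): the same sum with every sign replaced by +1."""
    n = len(M)
    return sum(prod(M[i][s[i]] for i in range(n))
               for s in permutations(range(n)))

a, b, c, d = 2, 3, 5, 7
M = [[a, b], [c, d]]
print(det_bruteforce(M), per_bruteforce(M))  # prints -1 29  (ad - bc, ad + bc)
```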


Complexity of Permanent
• #P-hard to compute $\operatorname{per}(M)$ for 0/1 matrices [Valiant’79].
• #P-hard to compute the sign of $\operatorname{per}(M)$ [Aaronson’11].
• #P-hard to compute $\operatorname{per}(M)$ for $M \succeq 0$ [Grier-Schaeffer’16].


Approximating the Permanent

Positive matrices ($M \geq 0$):
• Permanent is always nonnegative: $\operatorname{per}(M) \geq 0$.
• Randomized $(1 + \epsilon)$-approximation (FPRAS) [Jerrum-Sinclair-Vigoda’04].
• Deterministic $2^n$-approximation [Gurvits-Samorodnitsky’14].

PSD matrices ($M \succeq 0$):
• Permanent is always nonnegative: $\operatorname{per}(M) \geq 0$.
• Deterministic $n!$-approximation [Marcus’63]: $M_{1,1} \cdots M_{n,n}$, since $M_{1,1} \cdots M_{n,n} \leq \operatorname{per}(M) \leq n!\, M_{1,1} \cdots M_{n,n}$.
• Improved to a $(k!)^{n/k}$-approximation in time $2^{O(k + \log(n))}$ [Lieb’66].
• Additive $\pm\epsilon\|M\|^n$ approximation [Gurvits’05].
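The two deterministic PSD bounds can be checked numerically on a small example. This sketch assumes NumPy. `per` is a hypothetical brute-force helper, and the random test matrix is an arbitrary choice.

It checks two facts:
• The diagonal product sandwiches the permanent. The upper bound follows from $|M_{i,j}|^2 \leq M_{i,i} M_{j,j}$ for PSD $M$.
• Lieb's inequality gives $\operatorname{per}(M) \geq \operatorname{per}(A)\operatorname{per}(C)$ for the diagonal blocks $A$, $C$ of a PSD $M$. This is the block refinement behind the $(k!)^{n/k}$ bound.

```python
import numpy as np
from itertools import permutations
from math import factorial

def per(M):
    """Brute-force permanent, O(n * n!): only for small n."""
    n = M.shape[0]
    return sum(np.prod([M[i, s[i]] for i in range(n)])
               for s in permutations(range(n)))

rng = np.random.default_rng(0)
n = 6
X = rng.standard_normal((n, n)) + 1j * rng.standard_normal((n, n))
M = X @ X.conj().T                     # random Hermitian PSD test matrix

p = per(M).real                        # per(M) is real and >= 0 since M is PSD
diag_prod = np.prod(np.diag(M).real)   # M_{1,1} ... M_{n,n}

# Marcus lower bound and the n! upper bound on per(M)
print(diag_prod <= p <= factorial(n) * diag_prod)

# Lieb: per(M) >= per(A) per(C) for the diagonal blocks A = M[:k, :k], C = M[k:, k:]
k = 3
lieb_lower = per(M[:k, :k]).real * per(M[k:, k:]).real
print(lieb_lower <= p)

# The block bound is never below the diagonal product (apply Marcus to each block)
print(diag_prod <= lieb_lower)
```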

Theorem [A-Gurvits-Oveis Gharan-Saberi'17]: The permanent of PSD matrices $M \in \mathbb{C}^{n \times n}$ can be approximated, in deterministic polynomial time, within a factor of $(e^{\gamma+1})^n \simeq 4.84^n$, where $\gamma \approx 0.5772$ is the Euler-Mascheroni constant.
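The constant in the theorem is easy to evaluate. In this short sketch, the Euler-Mascheroni constant is hard-coded to double precision, and the sizes $n$ are arbitrary.

```python
import math

EULER_GAMMA = 0.5772156649015329       # Euler-Mascheroni constant, double precision
factor = math.exp(EULER_GAMMA + 1)     # e^(gamma + 1), the per-dimension factor
print(f"{factor:.4f}")

# Total approximation factor (e^(gamma + 1))^n for a few sizes n
for n in (10, 50, 100):
    print(n, f"{factor ** n:.3e}")
```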


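Complex Gaussians
• Standard multivariate complex Gaussian: $z = (z_1, \ldots, z_n)$ with $z_i$ i.i.d. and $z_i \sim \mathcal{CN}(0, 1)$.
• General (circularly-symmetric) complex Gaussian: $g = Cz$, $g \sim \mathcal{CN}(0, CC^\dagger)$.
[Figure: density $\mathbb{P}[z] = \frac{1}{\pi} e^{-|z|^2}$ of $z \sim \mathcal{CN}(0, 1)$, plotted over axes $\operatorname{Re}(z)$ and $\operatorname{Im}(z)$]

Wick's Formula
$$\mathbb{E}\big[|g_1|^2 \cdots |g_n|^2\big] = \operatorname{per}(CC^\dagger).$$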
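Wick's formula can be sanity-checked by Monte Carlo. This sketch assumes NumPy. The matrix `C`, the seed, and the sample size are arbitrary illustrative choices, and `per` is a brute-force helper.

Each $z_i \sim \mathcal{CN}(0,1)$ is drawn with independent real and imaginary parts of variance $1/2$, so that $\mathbb{E}|z_i|^2 = 1$.

```python
import numpy as np
from itertools import permutations

def per(M):
    """Brute-force permanent, O(n * n!): only for small n."""
    n = M.shape[0]
    return sum(np.prod([M[i, s[i]] for i in range(n)])
               for s in permutations(range(n)))

rng = np.random.default_rng(1)
n, samples = 3, 400_000
C = np.array([[1.0, 0.5, 0.0],
              [0.2, 1.0, 0.3],
              [0.0, 0.4, 1.0]])

# z_i ~ CN(0, 1): real and imaginary parts are independent N(0, 1/2)
z = (rng.standard_normal((samples, n)) + 1j * rng.standard_normal((samples, n))) / np.sqrt(2)
g = z @ C.T                            # each row is g = C z, so g ~ CN(0, C C^dagger)

estimate = np.mean(np.prod(np.abs(g) ** 2, axis=1))   # E[|g_1|^2 ... |g_n|^2]
exact = per(C @ C.conj().T).real                      # per(C C^dagger)
print(estimate, exact)                 # should agree up to Monte Carlo error
```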


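Schur Power
The Schur power of an $n \times n$ matrix $M$ is the $n! \times n!$ matrix with rows and columns indexed by permutations $\sigma, \tau \in S_n$:
$$\operatorname{schur}(M)_{\sigma,\tau} = M_{\sigma(1),\tau(1)} \cdots M_{\sigma(n),\tau(n)}.$$
• The Schur power is a minor of $M^{\otimes n}$, so $M \succeq 0 \implies \operatorname{schur}(M) \succeq 0$.
• The permanent is an eigenvalue: $\operatorname{schur}(M)\mathbf{1} = \operatorname{per}(M)\mathbf{1}$, so $M \succeq 0 \implies \operatorname{per}(M) \geq 0$.

Permanent is Loewner-Monotone
Permanent is monotone w.r.t. $\succeq$: $M_1 \succeq M_2 \succeq 0 \implies \operatorname{per}(M_1) \geq \operatorname{per}(M_2) \geq 0$.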
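For small $n$, the Schur power can be built explicitly and the three properties checked numerically. This sketch assumes NumPy. `schur_power` and `per` are illustrative brute-force helpers; the matrix has $n! \times n!$ entries, so only tiny $n$ are practical. Setting $M_1 = M_2 + YY^\top$ is one arbitrary way to get $M_1 \succeq M_2 \succeq 0$.

```python
import numpy as np
from itertools import permutations

def schur_power(M):
    """n! x n! matrix with entries prod_i M[sigma(i), tau(i)], indexed by permutations."""
    n = M.shape[0]
    perms = list(permutations(range(n)))
    S = np.empty((len(perms), len(perms)), dtype=M.dtype)
    for a, sigma in enumerate(perms):
        for b, tau in enumerate(perms):
            S[a, b] = np.prod([M[sigma[i], tau[i]] for i in range(n)])
    return S

def per(M):
    """Brute-force permanent, O(n * n!): only for small n."""
    n = M.shape[0]
    return sum(np.prod([M[i, s[i]] for i in range(n)])
               for s in permutations(range(n)))

rng = np.random.default_rng(2)
n = 4
X = rng.standard_normal((n, n))
M2 = X @ X.T                           # M2 is PSD
Y = rng.standard_normal((n, 2))
M1 = M2 + Y @ Y.T                      # M1 >= M2 >= 0 in the Loewner order

S = schur_power(M2)
ones = np.ones(S.shape[0])
min_eig = np.linalg.eigvalsh(S).min()
print(min_eig >= -1e-8 * np.abs(S).max())      # schur(M2) is PSD (up to round-off)
print(np.allclose(S @ ones, per(M2) * ones))   # all-ones vector, eigenvalue per(M2)
print(per(M1) >= per(M2) >= 0)                 # Loewner monotonicity of per
```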


Approximation using Monotonicity
Permanent is monotone w.r.t. $\succeq$: $D \succeq M \succeq vv^\dagger \implies \operatorname{per}(D) \geq \operatorname{per}(M) \geq \operatorname{per}(vv^\dagger)$.

Theorem [A-Gurvits-Oveis Gharan-Saberi'17]: For any $M \succeq 0$ there exist a diagonal matrix $D$ and a rank-1 matrix $vv^\dagger$ such that $D \succeq M \succeq vv^\dagger$ and $\operatorname{per}(D) \leq 4.85^n \operatorname{per}(vv^\dagger)$.
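The sandwich needs some feasible pair $(D, vv^\dagger)$. The sketch below uses a naive choice that is not the construction from the theorem: $D = \lambda_{\max}(M) I$ and $v = \sqrt{\lambda_{\max}}\, u$, with $u$ the top unit eigenvector. Both satisfy $D \succeq M \succeq vv^\dagger$ because $M$ has nonnegative eigenvalues.

The sketch also uses $\operatorname{per}(vv^\dagger) = n! \prod_i |v_i|^2$. It assumes NumPy, and `per` is a brute-force helper. The printed ratio is whatever this naive pair achieves; the theorem only guarantees that some pair achieves at most $4.85^n$.

```python
import numpy as np
from itertools import permutations
from math import factorial

def per(M):
    """Brute-force permanent, O(n * n!): only for small n."""
    n = M.shape[0]
    return sum(np.prod([M[i, s[i]] for i in range(n)])
               for s in permutations(range(n)))

rng = np.random.default_rng(3)
n = 5
X = rng.standard_normal((n, n)) + 1j * rng.standard_normal((n, n))
M = X @ X.conj().T                     # random Hermitian PSD matrix

eigvals, eigvecs = np.linalg.eigh(M)   # eigenvalues in ascending order
lam, u = eigvals[-1], eigvecs[:, -1]
D = lam * np.eye(n)                    # D >= M since lam = lambda_max(M)
v = np.sqrt(lam) * u                   # M >= v v^dagger: drop the other eigen-terms

per_D = lam ** n                                   # permanent of a diagonal matrix
per_vv = factorial(n) * np.prod(np.abs(v) ** 2)    # per(v v^dagger) = n! prod |v_i|^2
per_M = per(M).real
print(per_D >= per_M >= per_vv, per_D / per_vv)
```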


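Computing the Approximation
Solve the following program over diagonal $D$ and output its value:
$$\inf_{D} \operatorname{per}(D) \quad \text{subject to} \quad D \succeq M.$$
Equivalently, solve the convex program
$$\inf_{D^{-1}} \log \operatorname{per}\big((D^{-1})^{-1}\big) \quad \text{subject to} \quad M^{-1} \succeq D^{-1} \succeq 0.$$
There is no such convex program for the best rank-1 matrix.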
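Here is a minimal sketch of solving the convex reformulation. It assumes NumPy and $M \succ 0$, so that $M^{-1}$ exists.

With $e = \operatorname{diag}(D^{-1})$, the objective is $\log \operatorname{per}(D) = -\sum_i \log e_i$, which is convex in $e$. The constraint is the linear matrix inequality $M^{-1} - \operatorname{diag}(e) \succeq 0$.

The solver is a textbook log-barrier method with damped Newton steps, swapped in here for illustration; it is not taken from the slides. `min_diagonal_majorant` is a hypothetical name, and a production implementation would more likely call an SDP solver.

```python
import numpy as np
from itertools import permutations

def per(M):
    """Brute-force permanent, O(n * n!): only for small n."""
    n = M.shape[0]
    return sum(np.prod([M[i, s[i]] for i in range(n)])
               for s in permutations(range(n)))

def min_diagonal_majorant(M, t=1.0, shrink=0.2, tol=1e-9):
    """Hypothetical helper: approximately minimize per(D) over diagonal D with D >= M
    (Loewner order), for Hermitian positive definite M. Works with e = diag(D^{-1}):
    minimize -sum(log e) subject to M^{-1} - diag(e) >= 0, via a log-barrier method."""
    A = np.linalg.inv(M)
    A = (A + A.conj().T) / 2                       # re-symmetrize after inversion
    n = A.shape[0]
    e = np.full(n, np.linalg.eigvalsh(A)[0] / 2)   # strictly feasible starting point

    def barrier(e, t):
        """-sum(log e) - t * log det(A - diag(e)); +inf outside the feasible region."""
        if np.any(e <= 0):
            return np.inf
        try:
            L = np.linalg.cholesky(A - np.diag(e))
        except np.linalg.LinAlgError:              # A - diag(e) not positive definite
            return np.inf
        return -np.sum(np.log(e)) - 2 * t * np.sum(np.log(np.diag(L).real))

    while n * t > tol:                             # n * t bounds the gap on the central path
        for _ in range(100):                       # damped Newton steps for fixed t
            S = np.linalg.inv(A - np.diag(e))
            grad = -1 / e + t * np.diag(S).real
            hess = np.diag(1 / e**2) + t * np.abs(S) ** 2
            step = -np.linalg.solve(hess, grad)
            decrement = -grad @ step               # squared Newton decrement
            if decrement < 1e-12:
                break
            s, f0 = 1.0, barrier(e, t)
            while s >= 1e-12 and barrier(e + s * step, t) > f0 - 0.25 * s * decrement:
                s *= 0.5                           # backtracking line search
            if s < 1e-12:
                break                              # no further progress at this t
            e = e + s * step
        t *= shrink
    return np.diag(1 / e)

rng = np.random.default_rng(5)
n = 4
X = rng.standard_normal((n, n)) + 1j * rng.standard_normal((n, n))
M = X @ X.conj().T + 0.1 * np.eye(n)               # Hermitian positive definite example
D = min_diagonal_majorant(M)
per_D, per_M = np.prod(np.diag(D)), per(M).real
print(per_D, per_M, per_D / per_M)                 # per(D) >= per(M) by monotonicity
```

Because $n t$ bounds the duality gap along the central path, the returned $\log \operatorname{per}(D)$ should be within about `tol` of the optimum when each centering loop converges. The parameter `shrink` trades the number of outer iterations against the Newton steps needed per iteration.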

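Sketch of Proof
• Renormalize rows and columns to assume $D = I$.
• By duality, there is $B \succeq 0$ with $\operatorname{diag}(B) = \mathbf{1}$ such that $(I - M)B = 0$, i.e. $B = MB$. Such a $B$ is called a correlation matrix.
• Let $P = \operatorname{proj}_{\operatorname{imag}(B)}$. Then $M \succeq P$, because $M \succeq 0$ acts as the identity on $\operatorname{imag}(B)$: $x \in \operatorname{imag}(B) \implies x = By \implies Mx = MBy = By = x = Px$.
• Prove the “PSD Van der Waerden”.

PSD Van der Waerden [A-Gurvits-Oveis Gharan-Saberi'17]: If $B$ is a correlation matrix and $P$ the orthogonal projection onto the image of $B$, then $\operatorname{per}(P) \geq \ldots$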
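The step $M \succeq P$ can be checked on a synthetic instance. This sketch assumes NumPy. It builds a hypothetical $M$ that satisfies the hypotheses by construction ($I \succeq M \succeq 0$ and $B = MB$), namely $M = P + (I - P)K(I - P)$ with $0 \preceq K \preceq I$. This illustrates the argument; it is not the construction used in the proof. The sizes, seed, and `per` helper are illustrative.

```python
import numpy as np
from itertools import permutations

def per(M):
    """Brute-force permanent, O(n * n!): only for small n."""
    n = M.shape[0]
    return sum(np.prod([M[i, s[i]] for i in range(n)])
               for s in permutations(range(n)))

rng = np.random.default_rng(4)
n, r = 5, 2

# Rank-r correlation matrix B = V V^dagger: PSD, with unit diagonal from unit-norm rows
V = rng.standard_normal((n, r)) + 1j * rng.standard_normal((n, r))
V /= np.linalg.norm(V, axis=1, keepdims=True)
B = V @ V.conj().T

# P = orthogonal projection onto imag(B), from eigenvectors with nonzero eigenvalue
w, U = np.linalg.eigh(B)
Ur = U[:, w > 1e-10 * w.max()]
P = Ur @ Ur.conj().T

# Hypothetical M with I >= M >= 0 and B = M B: M = P + Q K Q, Q = I - P, 0 <= K <= I
Y = rng.standard_normal((n, n)) + 1j * rng.standard_normal((n, n))
K = Y @ Y.conj().T
K /= np.linalg.eigvalsh(K).max()       # scale so that K <= I
Q = np.eye(n) - P
M = P + Q @ K @ Q

print(np.allclose(M @ B, B))                      # B = M B
print(np.linalg.eigvalsh(M - P).min() >= -1e-10)  # M >= P in the Loewner order
print(per(M).real, per(P).real)                   # per(M) >= per(P) by monotonicity
```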
