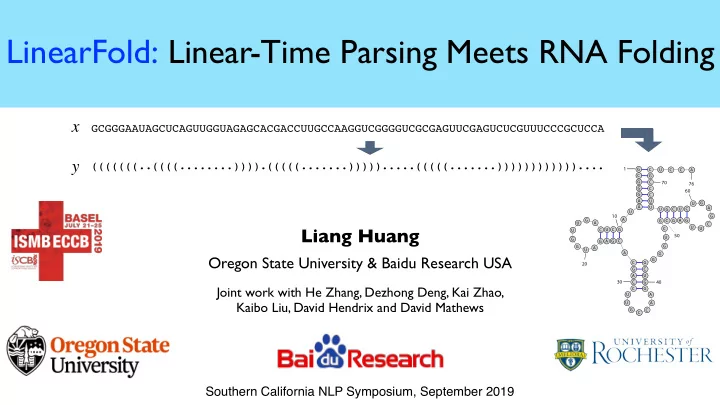

LinearFold: Linear-Time Parsing Meets RNA Folding

Liang Huang

Oregon State University & Baidu Research USA

Joint work with He Zhang, Dezhong Deng, Kai Zhao, Kaibo Liu, David Hendrix and David Mathews

[Figure: 76-nt RNA sequence x (positions 1 to 76) and its secondary structure y]

GCGGGAAUAGCUCAGUUGGUAGAGCACGACCUUGCCAAGGUCGGGGUCGCGAGUUCGAGUCUCGUUUCCCGCUCCA
(((((((..((((........)))).(((((.......))))).....(((((.......))))))))))))....
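The structure line uses dot-bracket notation. Each "(" pairs with its matching ")", and each "." is an unpaired base. A single left-to-right scan with a stack recovers the base pairs. The sketch below only decodes the notation and is not LinearFold's folding algorithm. The names `dot_bracket_to_pairs`, `all_canonical`, and `CANONICAL` are hypothetical. It also assumes "canonical" means Watson-Crick pairs plus G-U wobble.

```python
# Illustrative sketch only: decode a dot-bracket string into base pairs.
# Function and constant names are hypothetical, not part of LinearFold.

# Assumed canonical pairs: Watson-Crick (A-U, G-C) plus G-U wobble.
CANONICAL = {("A", "U"), ("U", "A"), ("G", "C"), ("C", "G"), ("G", "U"), ("U", "G")}


def dot_bracket_to_pairs(structure: str) -> list[tuple[int, int]]:
    """Return 0-based (i, j) pairs with i < j, sorted by i; raise if unbalanced."""
    stack: list[int] = []  # positions of "(" still waiting for a partner
    pairs: list[tuple[int, int]] = []
    for j, ch in enumerate(structure):
        if ch == "(":
            stack.append(j)
        elif ch == ")":
            if not stack:
                raise ValueError(f"unmatched ')' at position {j}")
            pairs.append((stack.pop(), j))  # close the most recent open bracket
        elif ch != ".":
            raise ValueError(f"unexpected character {ch!r} at position {j}")
    if stack:
        raise ValueError(f"unmatched '(' at position {stack[-1]}")
    return sorted(pairs)


def all_canonical(seq: str, pairs: list[tuple[int, int]]) -> bool:
    """Check that every pair (i, j) joins two bases allowed by CANONICAL."""
    return all((seq[i], seq[j]) in CANONICAL for i, j in pairs)


x = "GCGGGAAUAGCUCAGUUGGUAGAGCACGACCUUGCCAAGGUCGGGGUCGCGAGUUCGAGUCUCGUUUCCCGCUCCA"
y = "(((((((..((((........)))).(((((.......))))).....(((((.......))))))))))))...."

pairs = dot_bracket_to_pairs(y)
print(len(pairs), pairs[0], all_canonical(x, pairs))  # prints: 21 (0, 71) True
```

A stack fits this task because dot-bracket structures are properly nested, so each ")" always closes the most recently opened "(".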

Southern California NLP Symposium, September 2019