Announcements

- Homework 6: Bayes’ Nets I (lead TA: Eli)
  - Due Fri 1 Nov at 11:59pm

- Homework 7: Bayes’ Nets II (lead TA: Eli)
  - Due Mon 4 Nov at 11:59pm

- Office Hours
  - Iris: Mon 10.00am-noon, RI 237
  - Jan-Willem: Tue 1.40pm-2.40pm, DG 111
  - Zhaoqing: Thu 9.00am-11.00am, HS 202
  - Eli: Fri 10.00am-noon, RY 207

Post Midterm Feedback Form (< 5 mins)

https://forms.gle/TFw1D1SbGRfxw2TB8

CS 4100: Artificial Intelligence

Bayes’ Nets: Inference

Jan-Willem van de Meent, Northeastern University

[These slides were created by Dan Klein and Pieter Abbeel for CS188 Intro to AI at UC Berkeley. All CS188 materials are available at http://ai.berkeley.edu.]

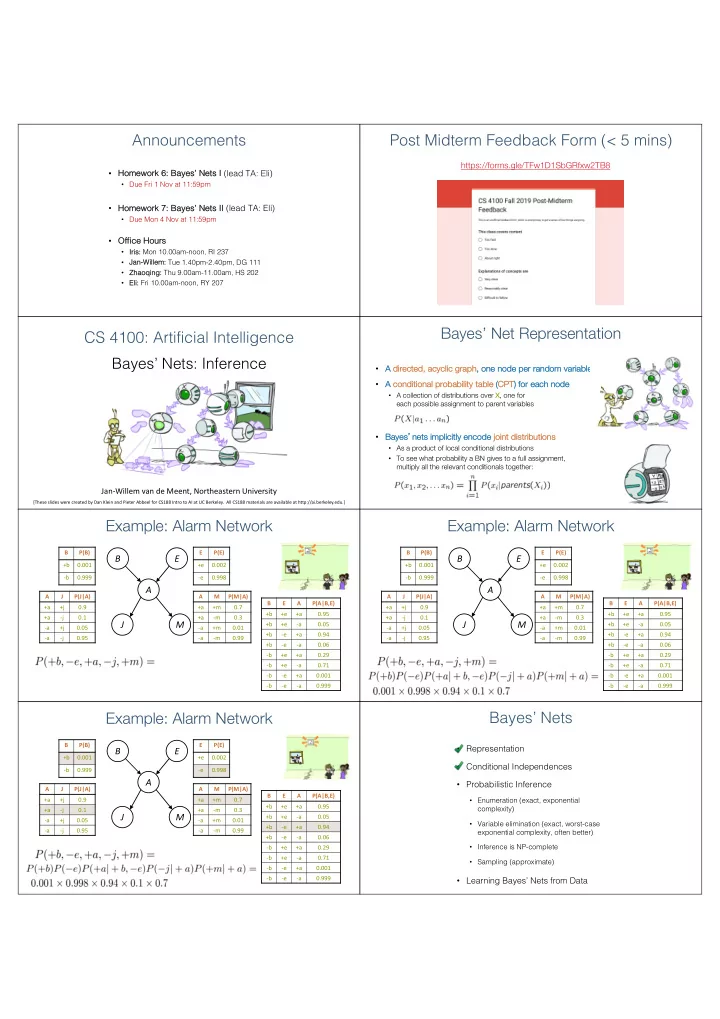

Bayes’ Net Representation

- A directed, acyclic graph, one node per random variable

- A conditional probability table (CPT) for each node
  - A collection of distributions over X, one for each possible assignment to parent variables

- Bayes’ nets implicitly encode joint distributions
  - As a product of local conditional distributions
  - To see what probability a BN gives to a full assignment, multiply all the relevant conditionals together:

$$P(x_1, x_2, \ldots, x_n) = \prod_{i=1}^{n} P(x_i \mid \mathrm{parents}(X_i))$$

Example: Alarm Network

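| B | P(B) |
|---|---|
| +b | 0.001 |
| -b | 0.999 |

| E | P(E) |
|---|---|
| +e | 0.002 |
| -e | 0.998 |

| B | E | A | P(A\|B,E) |
|---|---|---|---|
| +b | +e | +a | 0.95 |
| +b | +e | -a | 0.05 |
| +b | -e | +a | 0.94 |
| +b | -e | -a | 0.06 |
| -b | +e | +a | 0.29 |
| -b | +e | -a | 0.71 |
| -b | -e | +a | 0.001 |
| -b | -e | -a | 0.999 |

| A | J | P(J\|A) |
|---|---|---|
| +a | +j | 0.9 |
| +a | -j | 0.1 |
| -a | +j | 0.05 |
| -a | -j | 0.95 |

| A | M | P(M\|A) |
|---|---|---|
| +a | +m | 0.7 |
| +a | -m | 0.3 |
| -a | +m | 0.01 |
| -a | -m | 0.99 |

Graph nodes: B, E, A, J, M, with edges B → A, E → A, A → J, A → M (as implied by the CPTs above).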
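As a rough illustration of how these CPTs define a joint distribution, the Python sketch below stores each table as a dictionary and multiplies the relevant entries for one full assignment. The dictionary layout and the helper name `joint_probability` are assumptions made for this example and do not come from the slides.

```python
# CPTs of the alarm network, keyed by signed values such as "+b" / "-b".
# The dictionary layout is an illustrative choice, not part of the slides.
P_B = {"+b": 0.001, "-b": 0.999}
P_E = {"+e": 0.002, "-e": 0.998}
P_A = {  # P(A | B, E), keyed by (b, e, a)
    ("+b", "+e", "+a"): 0.95, ("+b", "+e", "-a"): 0.05,
    ("+b", "-e", "+a"): 0.94, ("+b", "-e", "-a"): 0.06,
    ("-b", "+e", "+a"): 0.29, ("-b", "+e", "-a"): 0.71,
    ("-b", "-e", "+a"): 0.001, ("-b", "-e", "-a"): 0.999,
}
P_J = {  # P(J | A), keyed by (a, j)
    ("+a", "+j"): 0.9, ("+a", "-j"): 0.1,
    ("-a", "+j"): 0.05, ("-a", "-j"): 0.95,
}
P_M = {  # P(M | A), keyed by (a, m)
    ("+a", "+m"): 0.7, ("+a", "-m"): 0.3,
    ("-a", "+m"): 0.01, ("-a", "-m"): 0.99,
}


def joint_probability(b, e, a, j, m):
    """Return P(b, e, a, j, m) as the product of the local conditionals."""
    return P_B[b] * P_E[e] * P_A[(b, e, a)] * P_J[(a, j)] * P_M[(a, m)]


p = joint_probability("+b", "-e", "+a", "-j", "+m")
print(f"P(+b, -e, +a, -j, +m) = {p:.3e}")
```

For this assignment the product is 0.001 × 0.998 × 0.94 × 0.1 × 0.7, roughly 6.57 × 10⁻⁵. Each factor is a single table lookup, so evaluating one full assignment takes time linear in the number of variables.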


Bayes’ Nets

- Representation

- Conditional Independences

- Probabilistic Inference

  - Enumeration (exact, exponential complexity)
  - Variable elimination (exact, worst-case exponential complexity, often better)
  - Inference is NP-complete
  - Sampling (approximate)

- Learning Bayes’ Nets from Data
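To make the first inference method in this outline concrete, here is a minimal Python sketch of inference by enumeration on the alarm network from the earlier example. It sums the joint over every assignment of the hidden variables and then normalizes. The names `joint`, `enumerate_query`, `VARIABLES`, and `DOMAIN` are hypothetical helpers introduced for this sketch, and the code is one possible way to organize the computation, not the course's reference implementation.

```python
from itertools import product

# Alarm network CPTs (values from the alarm network example).
P_B = {"+b": 0.001, "-b": 0.999}
P_E = {"+e": 0.002, "-e": 0.998}
P_A = {  # P(A | B, E), keyed by (b, e, a)
    ("+b", "+e", "+a"): 0.95, ("+b", "+e", "-a"): 0.05,
    ("+b", "-e", "+a"): 0.94, ("+b", "-e", "-a"): 0.06,
    ("-b", "+e", "+a"): 0.29, ("-b", "+e", "-a"): 0.71,
    ("-b", "-e", "+a"): 0.001, ("-b", "-e", "-a"): 0.999,
}
P_J = {("+a", "+j"): 0.9, ("+a", "-j"): 0.1,
       ("-a", "+j"): 0.05, ("-a", "-j"): 0.95}
P_M = {("+a", "+m"): 0.7, ("+a", "-m"): 0.3,
       ("-a", "+m"): 0.01, ("-a", "-m"): 0.99}

VARIABLES = ["B", "E", "A", "J", "M"]
DOMAIN = {v: ["+" + v.lower(), "-" + v.lower()] for v in VARIABLES}


def joint(x):
    """Probability of a full assignment x (dict from variable to value)."""
    return (P_B[x["B"]] * P_E[x["E"]] * P_A[(x["B"], x["E"], x["A"])]
            * P_J[(x["A"], x["J"])] * P_M[(x["A"], x["M"])])


def enumerate_query(query_var, evidence):
    """Return P(query_var | evidence) by brute-force enumeration."""
    hidden = [v for v in VARIABLES if v != query_var and v not in evidence]
    unnormalized = {}
    for q in DOMAIN[query_var]:
        total = 0.0
        # Sum the joint over all assignments to the hidden variables.
        for values in product(*(DOMAIN[h] for h in hidden)):
            assignment = dict(evidence)
            assignment[query_var] = q
            assignment.update(zip(hidden, values))
            total += joint(assignment)
        unnormalized[q] = total
    z = sum(unnormalized.values())  # normalization constant P(evidence)
    return {q: p / z for q, p in unnormalized.items()}


posterior = enumerate_query("B", {"J": "+j", "M": "+m"})
print(posterior)
```

With evidence +j and +m, this gives P(+b | +j, +m) of about 0.284. The inner loop visits every joint assignment of the hidden variables, which is why the cost of enumeration grows exponentially with their number.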