SLIDE 1

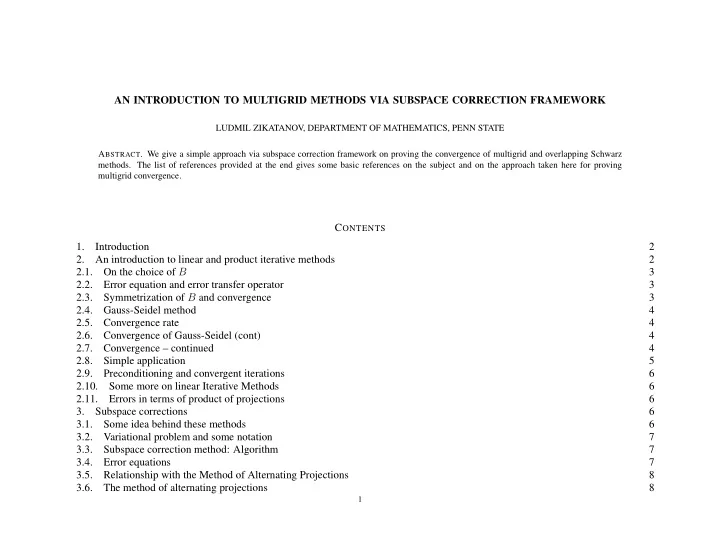

AN INTRODUCTION TO MULTIGRID METHODS VIA SUBSPACE CORRECTION FRAMEWORK

LUDMIL ZIKATANOV, DEPARTMENT OF MATHEMATICS, PENN STATE

ABSTRACT. We give a simple approach, via the subspace correction framework, to proving the convergence of multigrid and overlapping Schwarz methods. The references listed at the end include basic references on the subject and on the approach taken here for proving