SLIDE 1

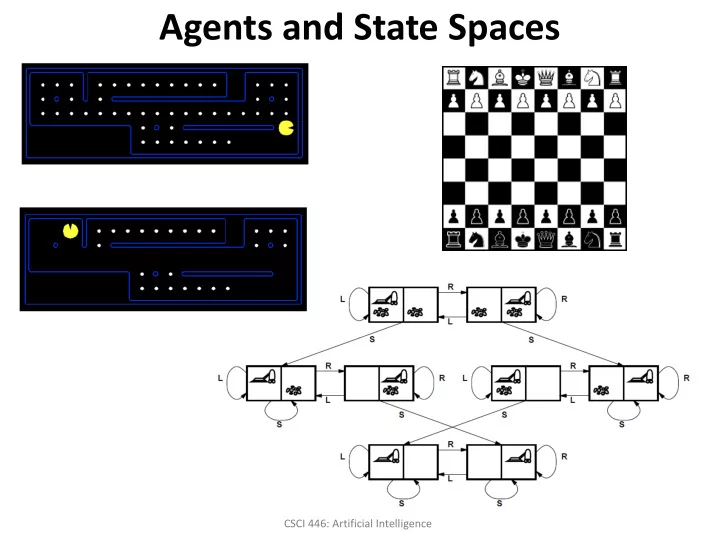

Agents and State Spaces

CSCI 446: Artificial Intelligence

Agents and State Spaces CSCI 446: Artificial Intelligence Overview - - PowerPoint PPT Presentation

Agents and State Spaces CSCI 446: Artificial Intelligence Overview Agents and environments Rationality Agent types Specifying the task environment Performance measure Environment Actuators Sensors Search

CSCI 446: Artificial Intelligence

2

3

4

5

6

10 10 20 20 30 30 40 40 50 50 60 60 70 80

9 12 1 4 6 7 2 10 3 11 5 8

Planned Measurements per Target Three or More Measurements in a Two Second Window per Target Balanced Measurements Across Multiple Targets Total Number of Measurements Taken Average Tracking Error

8

9

10

11

12

13

14

15

16

17

18

19

20

Search graph for a tiny search problem.

21

22

23

24