Computational Thinking www.ugrad.cs.ubc.ca/~cs100

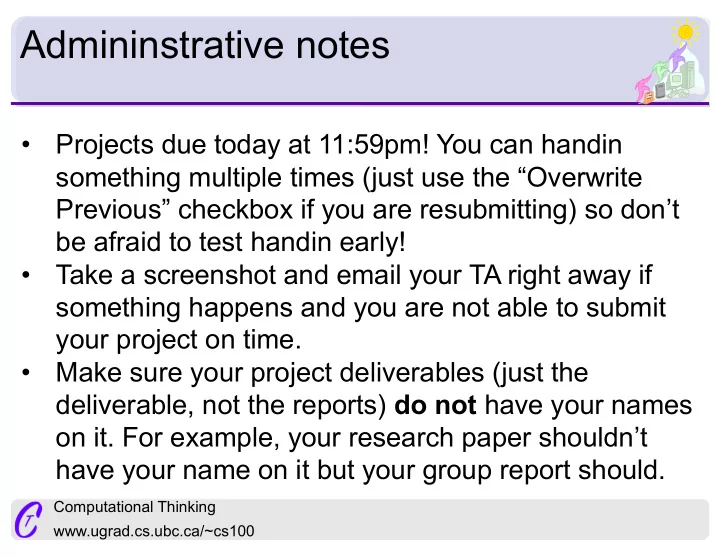

Administrative notes

- Projects are due today at 11:59pm! You can hand in something multiple times (just use the “Overwrite Previous” checkbox if you are resubmitting), so don’t be afraid to test handin early!

- Take a screenshot and email your TA right away if something happens and you are not able to submit your project on time.

- Make sure your project deliverables (just the deliverable, not the reports) do not have your names on them. For example, your research paper shouldn’t