SLIDE 2 Singular Value Thresholding (SVT)

Ref: Cai et al., "A singular value thresholding algorithm for matrix completion," SIAM Journal on Optimization

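$$
\begin{array}{l}
\mathrm{SVT}(M \in \mathbb{R}^{n_1 \times n_2},\ \tau)\ \lbrace \\
\quad k = 1;\ \ Y^{(0)} = 0; \\
\quad \textbf{while}\ (\text{convergence criterion not met})\ \lbrace \\
\qquad X^{(k)} = \mathrm{soft\_threshold}(Y^{(k-1)};\ \tau); \\
\qquad Y^{(k)} = Y^{(k-1)} + \delta_k\, P_{\Omega}\big(M - X^{(k)}\big); \\
\qquad k = k + 1; \\
\quad \rbrace \\
\rbrace
\end{array}
$$

Here $M$ is the partially observed matrix, $\Omega$ is the set of indices of its observed entries, $P_\Omega$ keeps the entries in $\Omega$ and sets all others to zero, $\tau > 0$ is the threshold, and $\delta_k > 0$ are step sizes. The output is the last iterate $X^{(k)}$.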
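A minimal NumPy sketch of the SVT loop above, for illustration only. Assumptions not on the slide: the names `svt` and `soft_threshold` and the parameters `mask`, `max_iter` and `tol` are hypothetical; a constant step size `delta` replaces the sequence $\delta_k$; and the "convergence criterion" is taken to be a small relative residual $\|P_\Omega(M - X^{(k)})\|_F \le \text{tol} \cdot \|P_\Omega(M)\|_F$, which is one common choice.

```python
import numpy as np


def soft_threshold(Y: np.ndarray, tau: float) -> np.ndarray:
    """Shrink every singular value of Y by tau, clipping at zero."""
    U, s, Vt = np.linalg.svd(Y, full_matrices=False)
    return (U * np.maximum(s - tau, 0.0)) @ Vt


def svt(M: np.ndarray, mask: np.ndarray, tau: float, delta: float,
        max_iter: int = 500, tol: float = 1e-4) -> np.ndarray:
    """Sketch of SVT. `mask` is True on the observed index set Omega (hypothetical API)."""
    Y = np.zeros(M.shape)                                # Y^(0) = 0
    X = np.zeros(M.shape)
    norm_obs = np.linalg.norm(np.where(mask, M, 0.0))    # ||P_Omega(M)||_F
    for _ in range(max_iter):
        X = soft_threshold(Y, tau)                       # X^(k) = soft_threshold(Y^(k-1); tau)
        residual = np.where(mask, M - X, 0.0)            # P_Omega(M - X^(k))
        # Assumed stopping rule: small relative residual on the observed entries.
        if np.linalg.norm(residual) <= tol * norm_obs:
            break
        Y = Y + delta * residual                         # Y^(k) = Y^(k-1) + delta * P_Omega(M - X^(k))
    return X
```

With every entry observed (`mask` all `True`), `tau=2.0` and `delta=1.0`, this loop recovers `np.diag([3.0, 1.0])` after a few iterations; with a partial mask, the quality of the recovery depends on $\tau$, the step size and the sampling pattern.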

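$$
\begin{array}{l}
\hat{Y} = \mathrm{soft\_threshold}(Y \in \mathbb{R}^{n_1 \times n_2};\ \tau)\ \lbrace \\
\quad Y = U S V^T \ \ (\text{using SVD}); \\
\quad \textbf{for}\ (k = 1 : \operatorname{rank}(Y))\ \lbrace\ S(k,k) = \max\big(0,\ S(k,k) - \tau\big);\ \rbrace \\
\quad \hat{Y} = \sum_{k=1}^{\operatorname{rank}(Y)} S(k,k)\, u_k v_k^T; \\
\rbrace
\end{array}
$$

where $u_k$ and $v_k$ are the $k$-th columns of $U$ and $V$.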
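A minimal NumPy sketch of `soft_threshold` (the function name and argument order are assumed to mirror the slide). Instead of looping up to $\operatorname{rank}(Y)$, it thresholds every singular value returned by a thin SVD; for $\tau \ge 0$ the zero singular values stay zero under $\max(0, \cdot - \tau)$, so the result is the same. The sum $\sum_k S(k,k)\, u_k v_k^T$ is computed as `(U * s) @ Vt`, which scales each column $u_k$ by its shrunken singular value.

```python
import numpy as np


def soft_threshold(Y: np.ndarray, tau: float) -> np.ndarray:
    """Singular value soft-thresholding of Y with threshold tau >= 0."""
    # Thin SVD: Y = U @ diag(s) @ Vt, singular values s in descending order.
    U, s, Vt = np.linalg.svd(Y, full_matrices=False)
    # S(k,k) = max(0, S(k,k) - tau) for every singular value.
    s_shrunk = np.maximum(s - tau, 0.0)
    # Y_hat = sum_k s_shrunk[k] * u_k v_k^T.
    return (U * s_shrunk) @ Vt
```

For example, `soft_threshold(np.diag([3.0, 1.0]), 2.0)` returns `diag(1, 0)` up to rounding: the larger singular value shrinks from 3 to 1 and the smaller one is zeroed, so the rank drops from 2 to 1.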

* 2

2 1 min arg ) ; ( X Y X Y threshold soft

F X

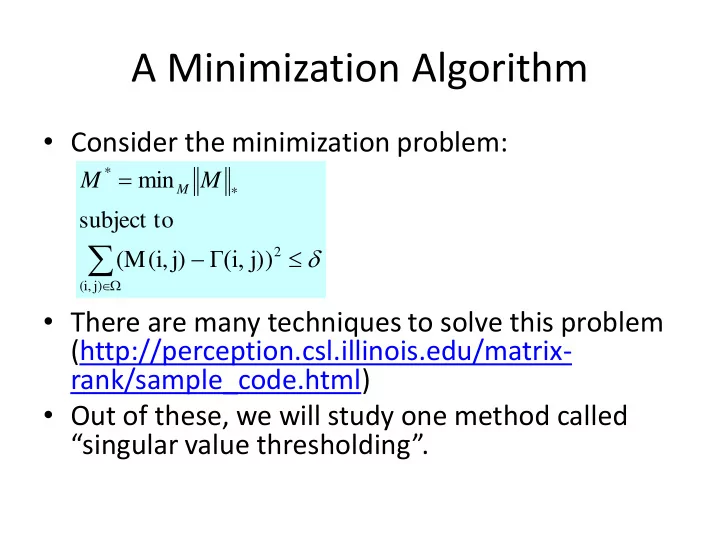

The soft- thresholding procedure obeys the following property (which we state w/o proof).
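The property can be sanity-checked numerically; this is an illustration, not a proof. The sketch below (helper names `objective` and `check_prox_property` are hypothetical) evaluates $\frac{1}{2}\|Y - X\|_F^2 + \tau\|X\|_*$ at $X = \mathrm{soft\_threshold}(Y; \tau)$ and at random perturbations of that point. Because the objective is strictly convex, no perturbation should achieve a lower value.

```python
import numpy as np


def soft_threshold(Y: np.ndarray, tau: float) -> np.ndarray:
    U, s, Vt = np.linalg.svd(Y, full_matrices=False)
    return (U * np.maximum(s - tau, 0.0)) @ Vt


def objective(X: np.ndarray, Y: np.ndarray, tau: float) -> float:
    # 0.5 * ||Y - X||_F^2 + tau * ||X||_*  (nuclear norm via ord="nuc")
    return 0.5 * np.linalg.norm(Y - X, "fro") ** 2 + tau * np.linalg.norm(X, "nuc")


def check_prox_property(n1: int = 6, n2: int = 4, tau: float = 1.0,
                        trials: int = 200, eps: float = 1e-2, seed: int = 0) -> bool:
    rng = np.random.default_rng(seed)
    Y = rng.standard_normal((n1, n2))
    X_star = soft_threshold(Y, tau)
    f_star = objective(X_star, Y, tau)
    # Random nearby candidates should never beat the soft-thresholded matrix.
    for _ in range(trials):
        X = X_star + eps * rng.standard_normal((n1, n2))
        if objective(X, Y, tau) < f_star:
            return False
    return True
```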