SLIDE 45 May 10, 2011

- Understanding how real systems work

- Modeling workloads and infrastructure

- Comparing grids and clouds with other platforms (parallel production environments, …)

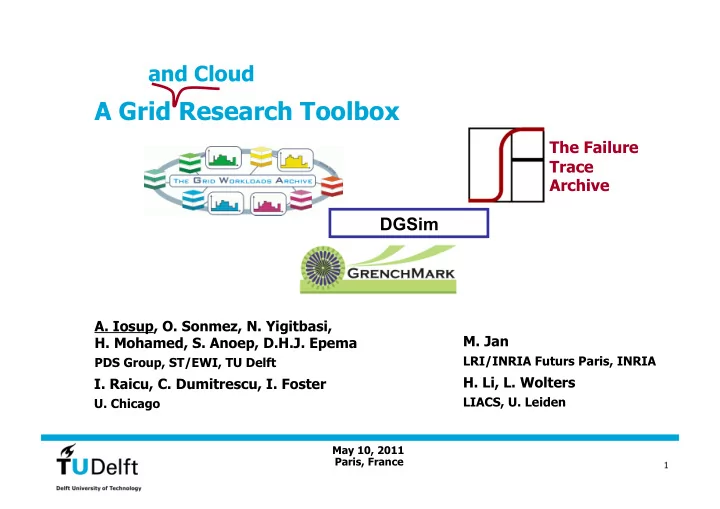

- The Archives: easy to share system traces and associated research

  - Grid Workloads Archive

  - Failure Trace Archive

  - Cloud Workloads Archive (upcoming)

- Testing/Evaluating Grids/Clouds

  - GrenchMark

  - ServMark: Scalable GrenchMark

  - C-Meter: Cloud-oriented GrenchMark

  - DGSim: Simulating Grids (and Clouds?)

Publications

- 2006: Grid, CCGrid, JSSPP

- 2007: SC, Grid, CCGrid, …

- 2008: HPDC, SC, Grid, …

- 2009: HPDC, CCGrid, …

- 2010: HPDC, CCGrid (Best Paper Award), EuroPar, …

- 2011: IEEE TPDS, IEEE Internet Computing, CCGrid, …