SLIDE 36 Quantitative Results

| Metric | Our method | Volumetric decoder | Nearest retrieval |
| --- | --- | --- | --- |
| Hausdorff distance | 0.120 | 0.638 | 0.242 |
| Chamfer distance | 0.023 | 0.052 | 0.045 |
| Normal distance | 34.27 | 56.97 | 47.94 |
| Depth map error | 0.028 | 0.048 | 0.049 |
| Volumetric distance | 0.309 | 0.497 | 0.550 |

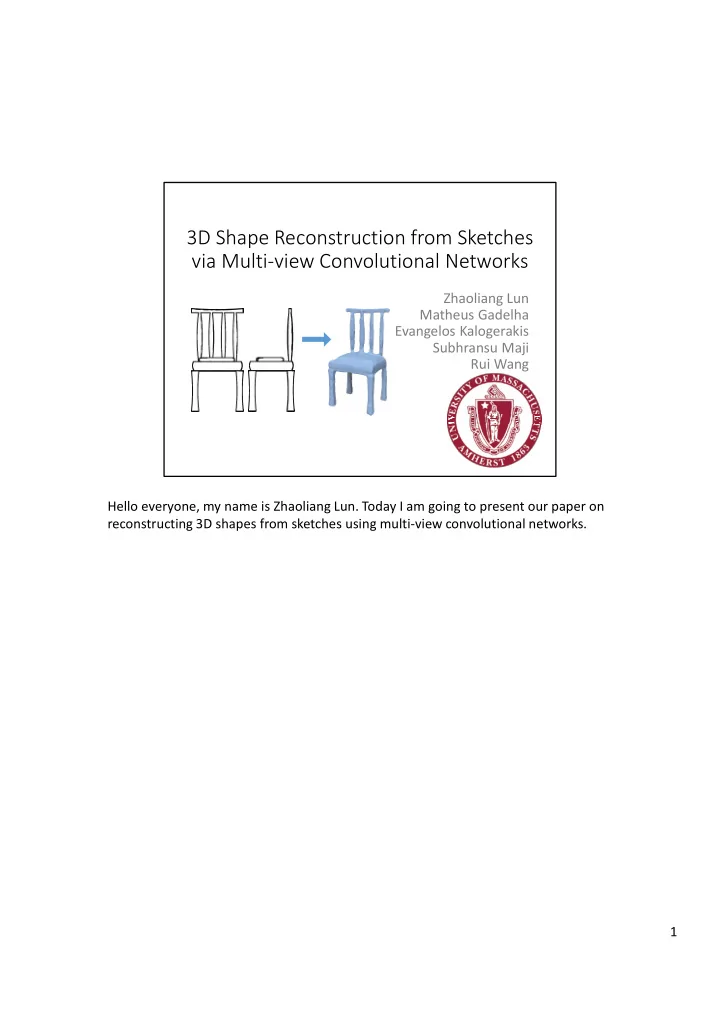

Character (human drawing)

Quantitatively, we can compare the reconstructed shapes against the reference shapes using several metrics: Hausdorff distance, Chamfer distance, angle between normals, depth map error, and voxel-based intersection over union. These are the results for character models. On every metric, our reconstruction error is smaller than that of nearest retrieval and of the volumetric network; for example, the Hausdorff distance is 0.120 for our method, versus 0.242 for nearest retrieval and 0.638 for the volumetric decoder.
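To make the first two metrics concrete, here is a minimal sketch of how Hausdorff and Chamfer distance can be computed between two point sets sampled from a reconstructed and a reference surface. This is an illustration only, not the evaluation code behind the numbers on this slide: the function names are hypothetical, and the choices made here (symmetric Hausdorff, Chamfer as the sum of the two mean nearest-neighbor distances, unsquared Euclidean distance, brute-force search) are assumptions that may differ from the definitions actually used.

```python
import math

def nearest_distances(src, dst):
    # For each point in src, the Euclidean distance to its closest point in dst.
    # Brute force, O(len(src) * len(dst)); fine for a small illustration.
    return [min(math.dist(p, q) for q in dst) for p in src]

def hausdorff_distance(a, b):
    # Symmetric Hausdorff distance: the worst-case nearest-neighbor distance,
    # taken over both directions (a -> b and b -> a).
    return max(max(nearest_distances(a, b)), max(nearest_distances(b, a)))

def chamfer_distance(a, b):
    # Chamfer distance (one common convention, assumed here): the mean
    # nearest-neighbor distance from a to b plus the mean from b to a.
    ab = nearest_distances(a, b)
    ba = nearest_distances(b, a)
    return sum(ab) / len(ab) + sum(ba) / len(ba)

# Toy point sets standing in for samples from a reconstruction and a reference.
reconstructed = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
reference = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (3.0, 0.0, 0.0)]

h = hausdorff_distance(reconstructed, reference)
c = chamfer_distance(reconstructed, reference)
```

Hausdorff distance is driven by the single worst outlier (here the reference point at x = 3, which the reconstruction misses), while Chamfer distance averages over all points, so the two metrics can rank methods differently.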