2012-07-10 1

CSE 332 Data Abstractions: B Trees and Hash Tables Make a Complete Breakfast

Kate Deibel Summer 2012

July 9, 2012 CSE 332 Data Abstractions, Summer 2012 1

HASH TABLES

The national data structure of the Netherlands


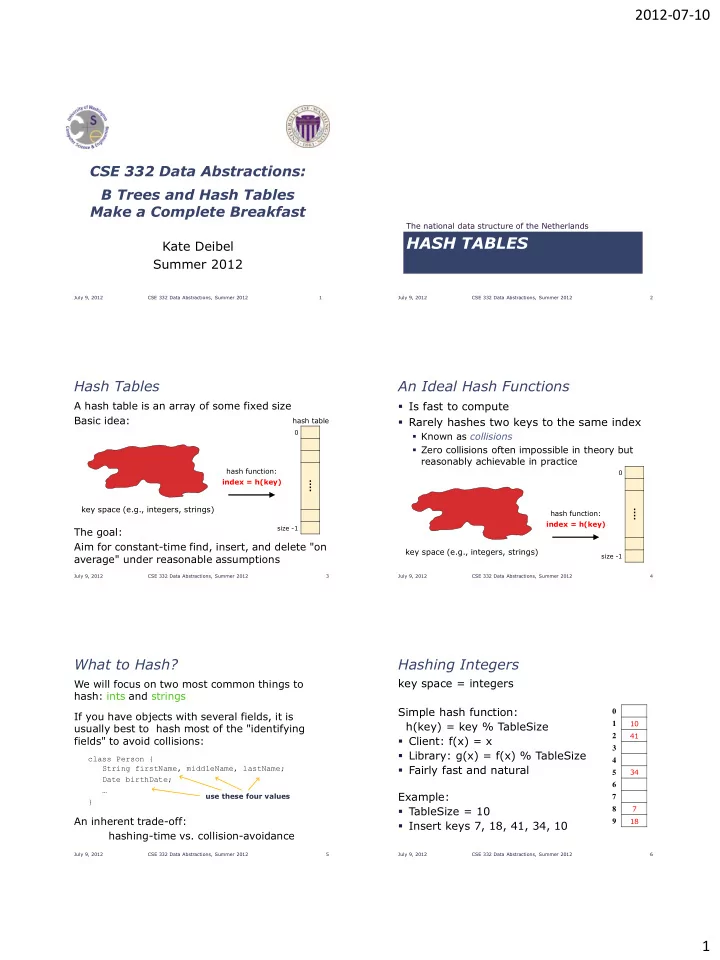

Hash Tables

A hash table is an array of some fixed size.

Basic idea: a hash function maps each key in the key space (e.g., integers, strings) to an index in the hash table: index = h(key). Indices run from 0 to size - 1.

The goal: Aim for constant-time find, insert, and delete "on average" under reasonable assumptions.


An Ideal Hash Function

- Is fast to compute

- Rarely hashes two keys to the same index

  - Such cases are known as collisions

  - Zero collisions are often impossible in theory but reasonably achievable in practice
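
To make the notion of a collision concrete, here is a minimal Java sketch. It assumes the table index is the key's `hashCode()` reduced modulo the table size; the class `CollisionDemo` and the helpers `indexFor` and `collide` are illustrative names, not part of the lecture. The strings "Aa" and "BB" are distinct but share the same `String.hashCode()` in Java, so they collide for any table size.

```java
final class CollisionDemo {
    // Hypothetical helper: maps a key to a table index in [0, tableSize).
    static int indexFor(Object key, int tableSize) {
        // floorMod keeps the index non-negative even when hashCode() is negative.
        return Math.floorMod(key.hashCode(), tableSize);
    }

    // True when two distinct keys land on the same index, i.e. they collide.
    static boolean collide(Object a, Object b, int tableSize) {
        return !a.equals(b) && indexFor(a, tableSize) == indexFor(b, tableSize);
    }
}
```

For example, `CollisionDemo.collide("Aa", "BB", 10)` is true, as is `CollisionDemo.collide(7, 17, 10)`. Whenever there are more possible keys than table slots, some pair of keys must share an index, which is why zero collisions cannot be guaranteed in general.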


What to Hash?

We will focus on the two most common things to hash: ints and strings.

If you have objects with several fields, it is usually best to hash most of the "identifying fields" to avoid collisions:

class Person { String firstName, middleName, lastName; Date birthDate; … } // use these four values

An inherent trade-off: hashing-time vs. collision-avoidance
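
One way to hash all four identifying fields can be sketched as follows. This is only an illustration under stated assumptions: `java.time.LocalDate` stands in for the slide's `Date`, the constructor and `equals` are added so the class is self-contained, and `Objects.hash` is just one convenient way to combine fields, not necessarily what the course intends.

```java
import java.time.LocalDate;
import java.util.Objects;

final class Person {
    private final String firstName, middleName, lastName;
    private final LocalDate birthDate; // assumption: LocalDate stands in for the slide's Date

    Person(String firstName, String middleName, String lastName, LocalDate birthDate) {
        this.firstName = firstName;
        this.middleName = middleName;
        this.lastName = lastName;
        this.birthDate = birthDate;
    }

    @Override
    public boolean equals(Object other) {
        if (this == other) return true;
        if (!(other instanceof Person)) return false;
        Person p = (Person) other;
        // Equality uses the same four fields as hashCode, so equal objects hash equally.
        return Objects.equals(firstName, p.firstName)
            && Objects.equals(middleName, p.middleName)
            && Objects.equals(lastName, p.lastName)
            && Objects.equals(birthDate, p.birthDate);
    }

    @Override
    public int hashCode() {
        // Combine all four identifying fields into one int.
        return Objects.hash(firstName, middleName, lastName, birthDate);
    }
}
```

Hashing only `lastName` would be cheaper to compute, but every person sharing a last name would then collide; that is the hashing-time vs. collision-avoidance trade-off in miniature.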

Hashing Integers

key space = integers

Simple hash function: h(key) = key % TableSize

- Client: f(x) = x

- Library: g(x) = f(x) % TableSize

- Fairly fast and natural

Example:

- TableSize = 10

- Insert keys 7, 18, 41, 34, 10


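| Index | 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 |
|-------|---|---|---|---|---|---|---|---|---|---|
| Key | 10 | 41 | | | 34 | | | 7 | 18 | |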
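
The example above can be reproduced with a short Java sketch. The class and method names (`IntHashDemo`, `clientHash`, `index`, `insertAll`) are illustrative assumptions. The sketch also uses `Math.floorMod` instead of a bare `%`, because Java's `%` yields a negative result for negative keys; for the non-negative keys in the example the two agree. Collision handling is deliberately left out: the sketch simply throws if two keys map to the same index.

```java
final class IntHashDemo {
    // Client side: an int key is its own hash, f(x) = x.
    static int clientHash(int key) {
        return key;
    }

    // Library side: g(x) = f(x) % TableSize, kept non-negative via floorMod.
    static int index(int key, int tableSize) {
        return Math.floorMod(clientHash(key), tableSize);
    }

    // Places each key at its index; assumes no two keys collide.
    static Integer[] insertAll(int[] keys, int tableSize) {
        Integer[] table = new Integer[tableSize]; // null marks an empty slot
        for (int key : keys) {
            int i = index(key, tableSize);
            if (table[i] != null) {
                throw new IllegalStateException("collision at index " + i);
            }
            table[i] = key;
        }
        return table;
    }
}
```

Calling `IntHashDemo.insertAll(new int[]{7, 18, 41, 34, 10}, 10)` places 10 at index 0, 41 at index 1, 34 at index 4, 7 at index 7, and 18 at index 8, leaving the other slots empty.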