1

DL - 2004 Compression3 – Beeri/Feitelson 1

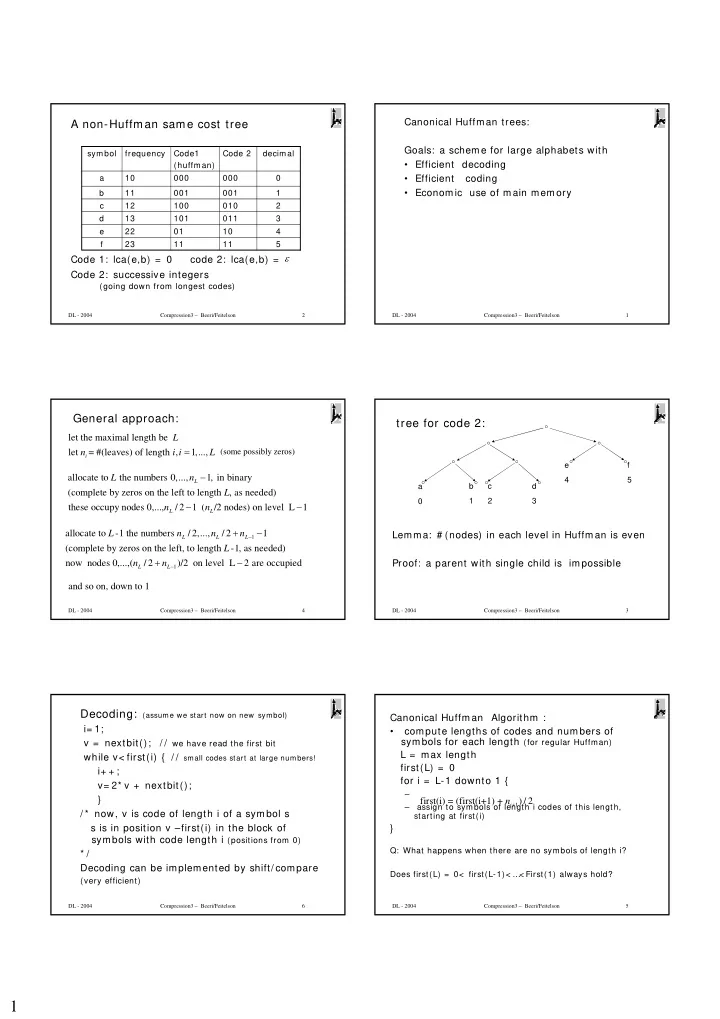

Canonical Huffman trees: Goals: a scheme for large alphabets with

- Efficient decoding

- Efficient coding

- Economic use of main memory

DL - 2004 Compression3 – Beeri/Feitelson 2

A non-Huffman same cost tree

Code 1: lca(e,b) = 0 code 2: lca(e,b) = Code 2: successive integers

(going down from longest codes) 5 4 3 2 1 decimal 11 11 23 f Code 2 Code1 (huffman) frequency symbol 10 01 22 e 011 101 13 d 010 100 12 c 001 001 11 b 000 000 10 a

ε

DL - 2004 Compression3 – Beeri/Feitelson 3

tree for code 2:

Lemma: # (nodes) in each level in Huffman is even Proof: a parent with single child is impossible

a b 1 c 2 d 3 e 4 f 5

DL - 2004 Compression3 – Beeri/Feitelson 4

General approach:

(some possibly zeros)

let the maximal length be let = #(leaves) of length , 1,...,

i

L n i i L = allocate to the numbers 0,..., 1, in binary (complete by zeros on the left to length , as needed) these occupy nodes 0,..., / 2 1 ( /2 nodes) on level L 1

L L L

L n L n n − − −

1 1

allocate to -1 the numbers / 2,..., / 2 1 (complete by zeros on the left, to length -1, as needed) now nodes 0,...,( / 2 )/2 on level L 2 are occupied

L L L L L

L n n n L n n

− −

+ − + − and so on, down to 1

DL - 2004 Compression3 – Beeri/Feitelson 5

Canonical Huffman Algorithm :

- compute lengths of codes and numbers of

symbols for each length (for regular Huffman) L = max length first(L) = 0 for i = L-1 downto 1 {

– – assign to symbols of length i codes of this length, starting at first(i)

}

Q: What happens when there are no symbols of length i? Does first(L) = 0< first(L-1)< … < First(1) always hold?

1

first(i) = (first(i+1) + ) / 2

i

n +

DL - 2004 Compression3 – Beeri/Feitelson 6