
Slide 1: Decision graphs II: (Limited Memory) Influence Diagrams

Advanced Herd Management, Anders Ringgaard Kristensen

Slide 2: Outline

- Decision trees

- Optimization methods

  - Decision tree

  - Strong junction tree

  - Single Policy Updating

- Markov property versus “no forgetting”

- Decision node ordering

- Advantages and disadvantages of decision graphs

Slide 3: Decision trees

A very common technique for evaluating alternative decisions over time. It is particularly popular in the veterinary community. Example: a diseased calf.

- Treat: Yes/No

- Cost of treatment: 100 DKK

- Value of surviving calf: 1650 DKK

- Cost of dead calf: 70 DKK

- Survival probability:

  - Treated: 0.88

  - Untreated: 0.60

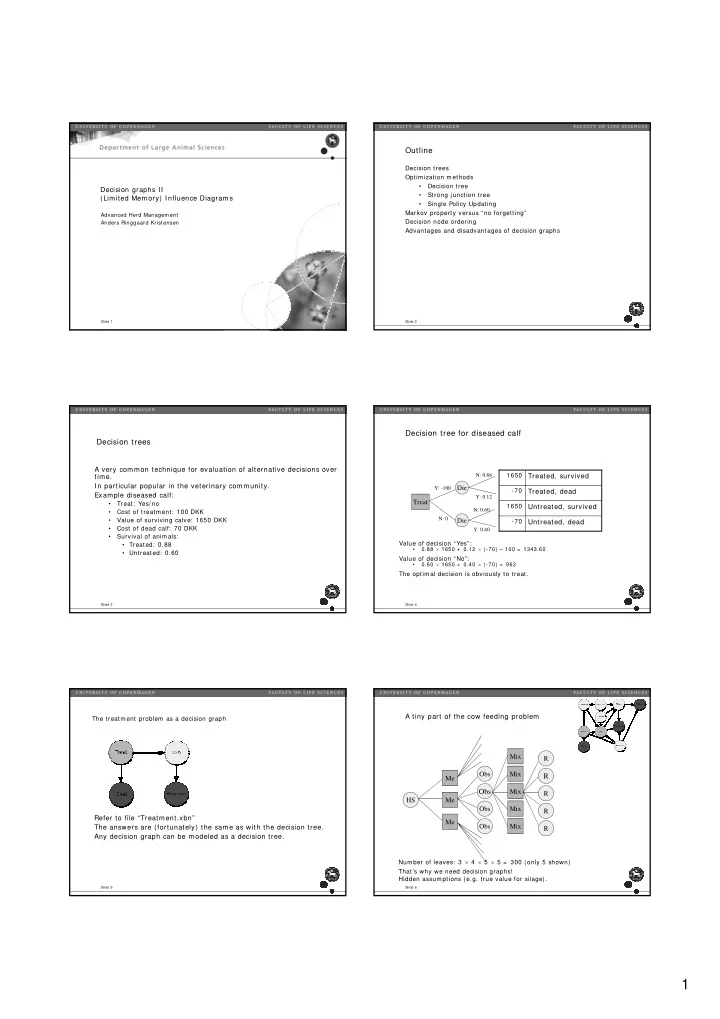

Slide 4: Decision tree for diseased calf

Value of decision “Yes”:

- 0.88 × 1650 + 0.12 × (-70) – 100 = 1343.60

Value of decision “No”:

- 0.60 × 1650 + 0.40 × (-70) = 962

The optimal decision is obviously to treat.

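Decision tree (figure): a decision node “Treat” followed by a chance node “Die” on each branch.

- Treat = Y (cost: -100)

  - Die = N (0.88): Treated, survived: 1650

  - Die = Y (0.12): Treated, dead: -70

- Treat = N (cost: 0)

  - Die = N (0.60): Untreated, survived: 1650

  - Die = Y (0.40): Untreated, dead: -70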
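The expected values for the diseased-calf tree can be reproduced with a few lines of Python. This is only a minimal sketch of the calculation on the slide. The names (`P_SURVIVE`, `DECISION_COST`, `expected_value`) are illustrative assumptions and not part of the course material. The numbers are the ones given for the example.

```python
# Figures from the diseased-calf example (DKK and probabilities).
VALUE_SURVIVING = 1650   # value of a surviving calf
VALUE_DEAD = -70         # the cost of a dead calf enters as a negative value
P_SURVIVE = {"Yes": 0.88, "No": 0.60}   # survival probability per decision
DECISION_COST = {"Yes": 100, "No": 0}   # cost of treatment per decision


def expected_value(treat):
    """Expected value of the decision: average over the chance node 'Die',
    then subtract the cost attached to the decision branch."""
    p = P_SURVIVE[treat]
    return p * VALUE_SURVIVING + (1 - p) * VALUE_DEAD - DECISION_COST[treat]


# Evaluate both branches of the decision node and pick the best one.
values = {decision: expected_value(decision) for decision in ("Yes", "No")}
best = max(values, key=values.get)

for decision, value in values.items():
    print(f"Treat = {decision}: {value:.2f} DKK")
print(f"Optimal decision: Treat = {best}")
```

Averaging over the chance node first and then maximizing over the decision node is the usual way of evaluating a decision tree from the leaves back to the root.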

Slide 5: The treatment problem as a decision graph

Refer to the file “Treatment.xbn”.

- The answers are (fortunately) the same as with the decision tree.

- Any decision graph can be modeled as a decision tree.

Slide 6: A tiny part of the cow feeding problem

- Number of leaves: 3 × 4 × 5 × 5 = 300 (only 5 shown).

- That’s why we need decision graphs!

- Hidden assumptions (e.g. true value for silage).

[Figure: a small part of the decision tree for the cow feeding problem. Node labels: HS, Me, Obs, Mix, R.]
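The leaf count quoted for the cow feeding tree is the product of the number of branches at each level. The short sketch below illustrates why the tree grows so quickly. The list `branch_counts` takes the factors 3, 4, 5 and 5 from the slide. Which node each factor belongs to is not stated, and the extra five-way level at the end is a purely hypothetical addition.

```python
from math import prod

# Number of branches at each level of the tree, as given on the slide.
branch_counts = [3, 4, 5, 5]

# Every path from the root to a leaf picks one branch per level,
# so the number of leaves is the product of the branch counts.
n_leaves = prod(branch_counts)
print(f"Leaves in the tree: {n_leaves}")

# Hypothetical: one more level with 5 branches multiplies the size by 5.
n_leaves_extended = prod(branch_counts + [5])
print(f"Leaves with one extra five-way level: {n_leaves_extended}")
```

Each added variable multiplies the size of the tree, whereas a decision graph only adds one node for it.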