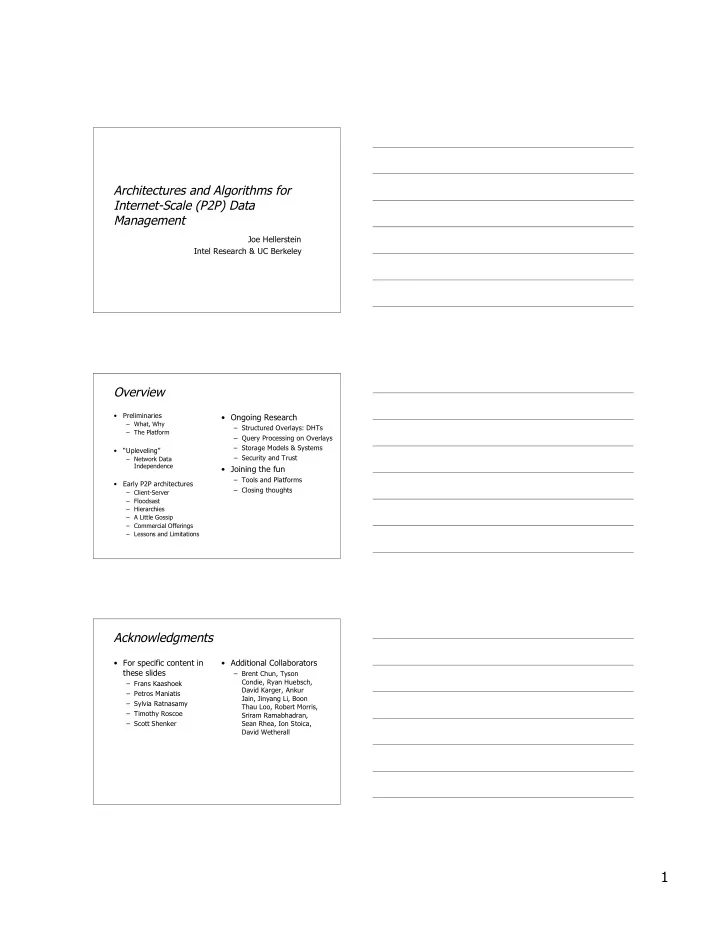

Architectures and Algorithms for Internet-Scale (P2P) Data Management

Joe Hellerstein

Intel Research & UC Berkeley

Overview

- Preliminaries

– What, Why

– The Platform

- “Upleveling”

– Network Data Independence

- Early P2P architectures

– Client-Server

– Flooding

– Hierarchies

– A Little Gossip

– Commercial Offerings

– Lessons and Limitations

- Ongoing Research

– Structured Overlays: DHTs

– Query Processing on Overlays

– Storage Models & Systems

– Security and Trust

- Joining the fun

– Tools and Platforms

– Closing thoughts

Acknowledgments

- For specific content in these slides

– Frans Kaashoek

– Petros Maniatis

– Sylvia Ratnasamy

– Timothy Roscoe

– Scott Shenker

- Additional Collaborators

– Brent Chun, Tyson Condie, Ryan Huebsch, David Karger, Ankur Jain, Jinyang Li, Boon Thau Loo, Robert Morris, Sriram Ramabhadran, Sean Rhea, Ion Stoica, David Wetherall