02110

Inge Li Gørtz

- Balanced binary search trees: Red-black trees and 2-3-4 trees

- Amortized analysis

- Dynamic programming

- Network flows

- String matching

- String indexing

- Computational geometry

- Introduction to NP-completeness

- Randomized algorithms

Overview

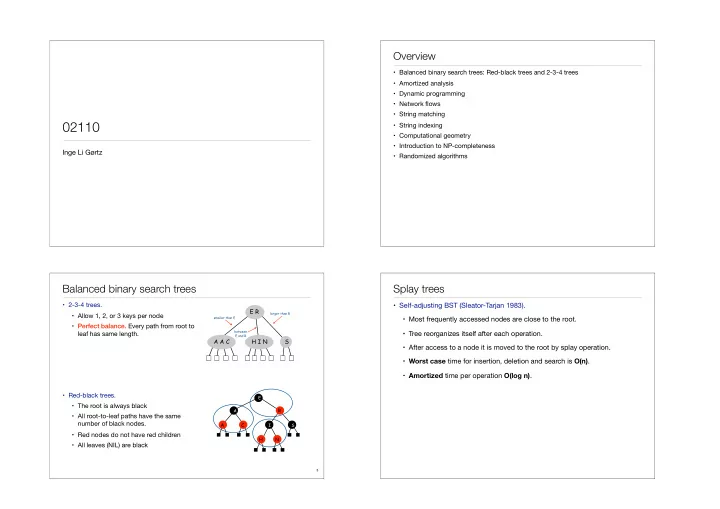

- 2-3-4 trees.

- Allow 1, 2, or 3 keys per node

- Perfect balance. Every path from root to

leaf has same length.

- Red-black trees.

- The root is always black

- All root-to-leaf paths have the same

number of black nodes.

- Red nodes do not have red children

- All leaves (NIL) are black

Balanced binary search trees

3

R H N C A

E I A S

A A C H I N E R S

smaller than E between E and R larger than R

- Self-adjusting BST (Sleator-Tarjan 1983).

- Most frequently accessed nodes are close to the root.

- Tree reorganizes itself after each operation.

- After access to a node it is moved to the root by splay operation.

- Worst case time for insertion, deletion and search is O(n).

- Amortized time per operation O(log n).