SLIDE 1

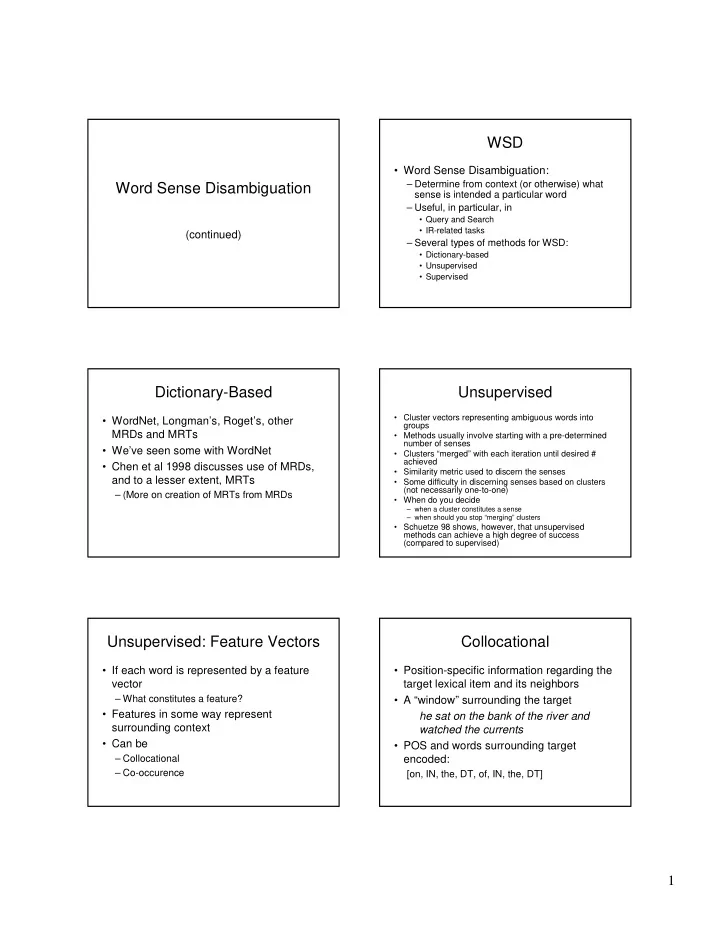

Word Sense Disambiguation

(continued)

WSD

- Word Sense Disambiguation:

– Determine from context (or otherwise) which sense of a particular word is intended

– Useful, in particular, in

- Query and Search

- IR-related tasks

– Several types of methods for WSD:

- Dictionary-based

- Unsupervised

- Supervised

Dictionary-Based

- WordNet, Longman’s, Roget’s, other machine-readable dictionaries (MRDs) and machine-readable thesauri (MRTs)

- We’ve seen some with WordNet

- Chen et al. 1998 discusses the use of MRDs and, to a lesser extent, MRTs

– (More on creation of MRTs from MRDs)
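As a rough illustration of how a dictionary can drive disambiguation, the sketch below scores each sense by how many content words its definition shares with the context (a gloss-overlap heuristic in the style of Lesk). This is one common dictionary-based approach, not necessarily the method the slides or Chen et al. have in mind. The glosses, the stopword list, and the names `TOY_GLOSSES`, `overlap_scores`, and `disambiguate` are all invented for this example; no real MRD is consulted.

```python
# Toy sense inventory: these glosses are invented for illustration and are
# NOT taken from WordNet, Longman's, or Roget's.
TOY_GLOSSES = {
    "bank": {
        "bank/river": "sloping land beside a river or stream of water",
        "bank/finance": "institution that accepts deposits and lends money",
    }
}

# Small hand-picked stopword list (an assumption, not a standard resource).
STOPWORDS = {"a", "an", "the", "of", "or", "and", "on", "that", "he", "to"}


def content_words(text):
    """Lowercase, split on whitespace, and drop stopwords."""
    return {w for w in text.lower().split() if w not in STOPWORDS}


def overlap_scores(word, context, glosses=TOY_GLOSSES):
    """Count content words shared by the context and each sense's gloss."""
    ctx = content_words(context)
    return {sense: len(ctx & content_words(gloss))
            for sense, gloss in glosses[word].items()}


def disambiguate(word, context, glosses=TOY_GLOSSES):
    """Return the sense whose gloss overlaps the context the most."""
    scores = overlap_scores(word, context, glosses)
    return max(scores, key=scores.get)


sentence = "he sat on the bank of the river and watched the currents"
chosen = disambiguate("bank", sentence)
print(chosen)
```

With a real dictionary the glosses are longer and noisier, so overlap counts are usually weighted or extended with related entries; the toy version only shows the basic idea.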

Unsupervised

- Cluster vectors representing ambiguous words into groups

- Methods usually involve starting with a pre-determined number of senses

- Clusters “merged” with each iteration until the desired number is reached

- Similarity metric used to discern the senses

- Some difficulty in discerning senses based on clusters (not necessarily one-to-one)

- When do you decide

– when a cluster constitutes a sense

– when should you stop “merging” clusters

- Schuetze 98 shows, however, that unsupervised methods can achieve a high degree of success (compared to supervised)
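The merging procedure described on this slide can be sketched as a simple bottom-up clustering loop. This is a minimal sketch under stated assumptions, not Schuetze's actual algorithm: it assumes cosine similarity as the similarity metric, compares clusters by their centroids, and stops at a pre-determined number of clusters. The function names and the tiny count vectors are invented for illustration.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def centroid(vectors):
    """Component-wise mean of a list of vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]


def merge_clusters(vectors, target):
    """Start with one cluster per vector and repeatedly merge the pair of
    clusters whose centroids are most similar, until `target` remain."""
    clusters = [[i] for i in range(len(vectors))]
    while len(clusters) > target:
        best = None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                sim = cosine(centroid([vectors[i] for i in clusters[a]]),
                             centroid([vectors[i] for i in clusters[b]]))
                if best is None or sim > best[0]:
                    best = (sim, a, b)
        _, a, b = best
        clusters[a] = clusters[a] + clusters[b]
        del clusters[b]
    return clusters


# Invented context counts for four occurrences of "bank";
# columns are counts of (river, water, money, loan) near the target.
contexts = [
    [2, 1, 0, 0],
    [1, 2, 0, 0],
    [0, 0, 2, 1],
    [0, 0, 1, 3],
]
clusters = merge_clusters(contexts, target=2)
print(clusters)
```

Each resulting cluster is a list of occurrence indices. Whether a cluster corresponds to exactly one sense is the open question raised above; the code only groups similar contexts.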

Unsupervised: Feature Vectors

- If each word is represented by a feature vector

– What constitutes a feature?

- Features in some way represent surrounding context

- Can be

– Collocational

– Co-occurrence

Collocational

- Position-specific information regarding the target lexical item and its neighbors

- A “window” surrounding the target

he sat on the bank of the river and watched the currents

- POS and words surrounding target
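A minimal sketch of collocational feature extraction for the example sentence, assuming a window of two tokens on each side of the target. The POS tags are hand-assigned Penn Treebank-style tags (an assumption; no tagger is run), and the function name, the `<pad>` marker, and the feature-key format are invented for illustration.

```python
def collocational_features(tagged, target_index, window=2):
    """Return position-specific word and POS features for the tokens
    within `window` positions of the target (the target itself is skipped)."""
    features = {}
    for offset in range(-window, window + 1):
        if offset == 0:
            continue
        i = target_index + offset
        if 0 <= i < len(tagged):
            word, pos = tagged[i]
        else:
            # Position falls outside the sentence: use a padding marker.
            word, pos = "<pad>", "<pad>"
        features[f"w{offset:+d}"] = word
        features[f"pos{offset:+d}"] = pos
    return features


# Hand-assigned tags for illustration only.
tagged = [("he", "PRP"), ("sat", "VBD"), ("on", "IN"), ("the", "DT"),
          ("bank", "NN"), ("of", "IN"), ("the", "DT"), ("river", "NN"),
          ("and", "CC"), ("watched", "VBD"), ("the", "DT"), ("currents", "NNS")]
target = 4  # index of "bank"
features = collocational_features(tagged, target, window=2)
print(features)
```

Because each feature key encodes the offset from the target, "the" at position -1 and "the" at position +2 are distinct features, which is what makes the representation position-specific.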