Knowledge Engineering

Semester 2, 2004-05 Michael Rovatsos mrovatso@inf.ed.ac.uk

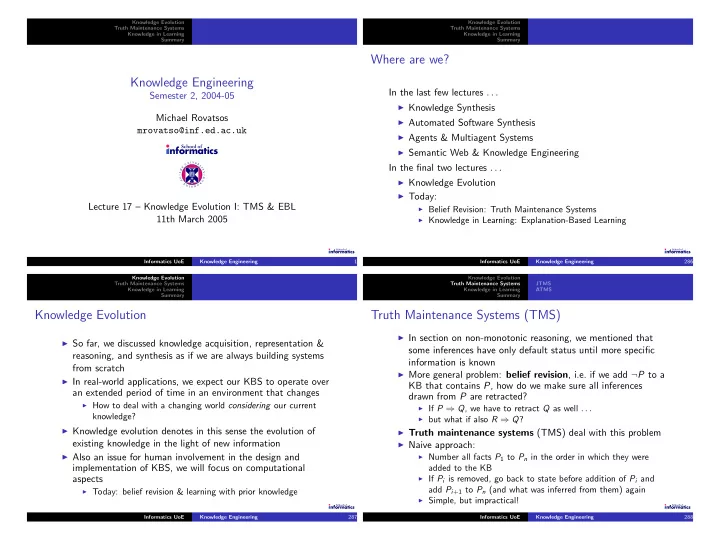

The University of Edinburgh

Lecture 17 – Knowledge Evolution I: TMS & EBL
11th March 2005

Informatics UoE, Knowledge Engineering

Outline: Knowledge Evolution, Truth Maintenance Systems, Knowledge in Learning, Summary

Where are we?

In the last few lectures . . .

◮ Knowledge Synthesis
◮ Automated Software Synthesis
◮ Agents & Multiagent Systems
◮ Semantic Web & Knowledge Engineering

In the final two lectures . . .

◮ Knowledge Evolution
◮ Today:
  ◮ Belief Revision: Truth Maintenance Systems
  ◮ Knowledge in Learning: Explanation-Based Learning

Knowledge Evolution

◮ So far, we have discussed knowledge acquisition, representation & reasoning, and synthesis as if we were always building systems from scratch

◮ In real-world applications, we expect our KBS to operate over an extended period of time in an environment that changes

◮ How do we deal with a changing world given our current knowledge?

◮ In this sense, knowledge evolution denotes the evolution of existing knowledge in the light of new information

◮ This is also an issue for human involvement in the design and implementation of KBS, but we will focus on computational aspects

◮ Today: belief revision & learning with prior knowledge

Truth Maintenance Systems (TMS)

◮ In the section on non-monotonic reasoning, we mentioned that some inferences have only default status until more specific information is known

◮ More general problem: belief revision, i.e. if we add ¬P to a KB that contains P, how do we make sure all inferences drawn from P are retracted?

  ◮ If P ⇒ Q, we have to retract Q as well . . .
  ◮ but what if also R ⇒ Q?
◮ Truth maintenance systems (TMS) deal with this problem
◮ Naive approach:
  ◮ Number all facts P_1 to P_n in the order in which they were added to the KB

  ◮ If P_i is removed, go back to the state before the addition of P_i and add P_{i+1} to P_n (and what was inferred from them) again

  ◮ Simple, but impractical!
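The naive approach can be made concrete with a small sketch. The Python below is illustrative only and is not taken from the lecture: the names `forward_chain`, `NaiveKB`, `tell` and `retract` are hypothetical, rules are assumed to be simple Horn-style implications (a set of premises and one conclusion), and "going back to the state before P_i" is assumed to mean recomputing all inferences from the facts added earlier. It uses the P ⇒ Q, R ⇒ Q case from above.

```python
from typing import FrozenSet, Iterable, List, Set, Tuple

# Assumption: a rule is (premises, conclusion), e.g. ({"P"}, "Q") for P => Q.
Rule = Tuple[FrozenSet[str], str]


def forward_chain(facts: Iterable[str], rules: List[Rule]) -> Set[str]:
    """Return the given facts plus everything derivable from them via the rules."""
    derived = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= derived and conclusion not in derived:
                derived.add(conclusion)
                changed = True
    return derived


class NaiveKB:
    """Hypothetical KB implementing the naive retraction scheme."""

    def __init__(self, rules: List[Rule]) -> None:
        self.rules = rules
        self.log: List[str] = []       # facts P_1 .. P_n in order of addition
        self.beliefs: Set[str] = set()  # facts plus all inferences

    def tell(self, fact: str) -> None:
        """Add a fact and everything that can now be inferred."""
        self.log.append(fact)
        self.beliefs = forward_chain(self.beliefs | {fact}, self.rules)

    def retract(self, fact: str) -> None:
        """Remove P_i: restore the state before P_i, then re-add P_{i+1} .. P_n."""
        i = self.log.index(fact)        # raises ValueError if the fact was never told
        later = self.log[i + 1:]
        self.log = self.log[:i]
        # State before P_i was added, recomputed from the earlier facts.
        self.beliefs = forward_chain(self.log, self.rules)
        for f in later:
            self.tell(f)                # redo every later addition and its inferences


rules = [(frozenset({"P"}), "Q"), (frozenset({"R"}), "Q")]
kb = NaiveKB(rules)
kb.tell("P")
kb.tell("R")
print(sorted(kb.beliefs))  # ['P', 'Q', 'R']
kb.retract("P")
print(sorted(kb.beliefs))  # ['Q', 'R']  (Q survives because R => Q still holds)
```

Retracting P here redoes the addition of R and every inference that follows from it, even though R had nothing to do with P. With many facts, each retraction repeats almost all earlier work, which is one way to see why the approach is impractical.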