16/8/18, 9(58 am Week 04 Lectures Page 1 of 34 file:///Users/jas/srvr/apps/cs9315/18s2/lectures/week04/notes.html

Week 04 Lectures

Exercise 1: PostgreSQL Tuple Visibility


Due to MVCC, PostgreSQL's getTuple(b,i) is not so simple: the ith tuple in buffer b may be "live" or "dead" or ... ?

How does PostgreSQL determine whether a tuple is visible?

Assume: multiple concurrent transactions on tables.

tuple = (oid, xmin, xmax, cmin, cmax, infomask, ...rest of data...)

For all of the details:
PG_SRC/include/access/htup.h ... tuple data structure
PG_SRC/include/utils/snapshot.h ... "snapshot" data
PG_SRC/backend/utils/time/tqual.c ... visibility checks
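The core of the visibility question can be sketched in C. This is a minimal, heavily simplified sketch under several assumptions. Transaction IDs are small increasing integers with no wraparound. A plain status array stands in for PostgreSQL's commit log. Hint bits, infomask flags, subtransactions and combined command IDs are ignored. The types TupleHeader, Snapshot and TxnStatus and the function tuple_visible are hypothetical, loosely modelled on the xmin/xmax/xip/curcid idea in snapshot.h. The real checks (e.g. HeapTupleSatisfiesMVCC) in tqual.c handle many more cases.

```c
#include <assert.h>
#include <stdbool.h>

typedef unsigned int TxnId;      /* 0 means "invalid / none" */
typedef unsigned int CommandId;

/* Hypothetical, heavily simplified tuple header (not PostgreSQL's real one). */
typedef struct {
    TxnId     xmin;   /* transaction that inserted the tuple */
    TxnId     xmax;   /* transaction that deleted it, 0 if never deleted */
    CommandId cmin;   /* command within xmin that inserted it */
    CommandId cmax;   /* command within xmax that deleted it */
} TupleHeader;

/* Hypothetical snapshot, loosely modelled on PostgreSQL's snapshot data. */
typedef struct {
    TxnId        xmin;    /* every xid < xmin had finished when the snapshot was taken */
    TxnId        xmax;    /* every xid >= xmax had not yet started */
    const TxnId *xip;     /* xids in [xmin, xmax) still running at snapshot time */
    int          nxip;
    TxnId        curxid;  /* the transaction doing the scan */
    CommandId    curcid;  /* the current command within curxid */
} Snapshot;

typedef enum { TXN_IN_PROGRESS, TXN_COMMITTED, TXN_ABORTED } TxnStatus;

/* Hypothetical commit-log lookup: clog[xid] holds the final (or current) status. */
static TxnStatus status_of(TxnId xid, const TxnStatus *clog) { return clog[xid]; }

/* Was xid still running (or not yet started) from this snapshot's point of view? */
static bool in_progress_for(TxnId xid, const Snapshot *s)
{
    assert(s->xmin <= s->xmax);
    if (xid < s->xmin) return false;
    if (xid >= s->xmax) return true;
    for (int i = 0; i < s->nxip; i++)
        if (s->xip[i] == xid) return true;
    return false;
}

/* A change made by (xid, cid) is visible if it came from an earlier command of
   our own transaction, or from a transaction that committed before the snapshot. */
static bool change_visible(TxnId xid, CommandId cid, const Snapshot *s,
                           const TxnStatus *clog)
{
    if (xid == s->curxid) return cid < s->curcid;
    return !in_progress_for(xid, s) && status_of(xid, clog) == TXN_COMMITTED;
}

/* Simplified MVCC test: the insert must be visible, and the delete (if any) must not be. */
bool tuple_visible(const TupleHeader *t, const Snapshot *s, const TxnStatus *clog)
{
    if (!change_visible(t->xmin, t->cmin, s, clog))
        return false;                 /* insert not visible: tuple not yet "live" for us */
    if (t->xmax == 0)
        return true;                  /* never deleted */
    return !change_visible(t->xmax, t->cmax, s, clog);  /* "dead" only if the delete is visible */
}
```

Under this sketch, suppose transaction 5 was still running when the snapshot was taken. A tuple inserted by 5 stays invisible even after 5 commits, and a tuple deleted by 5 stays visible. This is how each transaction keeps a consistent view while others modify the table.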

Scanning in PostgreSQL


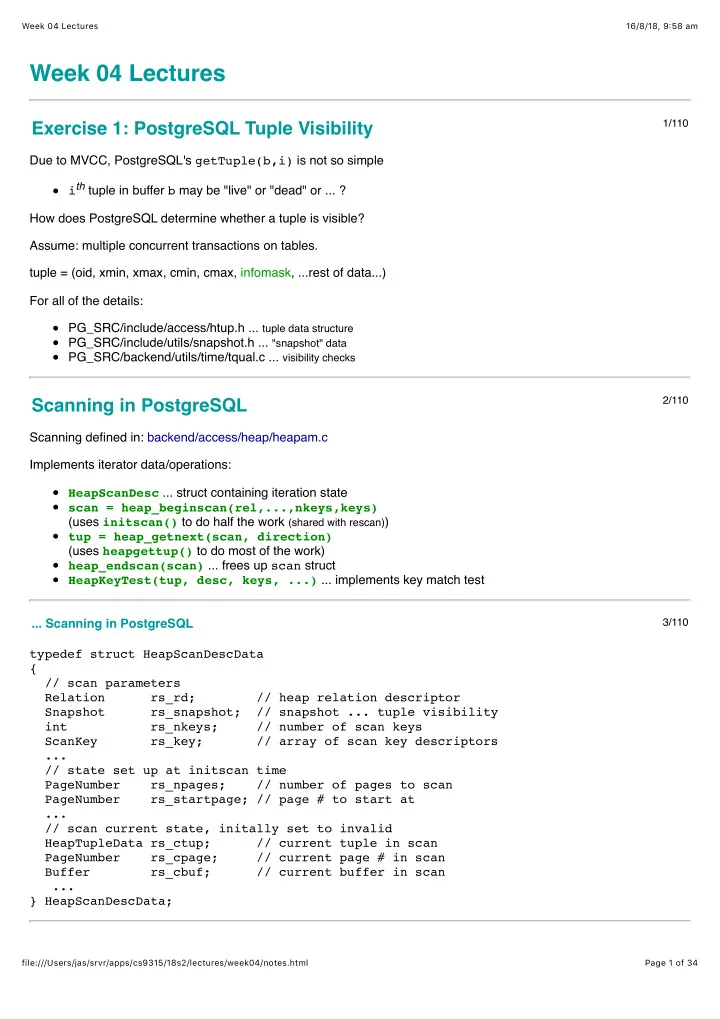

Scanning is defined in: backend/access/heap/heapam.c

It implements iterator data/operations:

HeapScanDesc ... struct containing iteration state
scan = heap_beginscan(rel,...,nkeys,keys) (uses initscan() to do half the work, which is shared with rescan)
tup = heap_getnext(scan, direction) (uses heapgettup() to do most of the work)
heap_endscan(scan) ... frees up scan struct
HeapKeyTest(tup, desc, keys, ...) ... implements key match test
...

Scanning in PostgreSQL
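The iterator pattern for heap scans can be sketched as a self-contained toy in C. Every name here is hypothetical:

- ToyScanDesc plays the role of HeapScanDesc.
- toy_beginscan, toy_getnext and toy_endscan mirror heap_beginscan, heap_getnext and heap_endscan.
- toy_initscan is shared with toy_rescan, as initscan() is.
- toy_key_test mirrors HeapKeyTest.

The sketch also makes simplifying assumptions. It scans an in-memory array instead of buffer pages and supports forward scans only. Keys test equality only. A per-tuple visible flag stands in for the snapshot check from the exercise above.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdlib.h>

#define NATTS 2

/* Hypothetical toy tuple: fixed int attributes plus a flag standing in
   for the result of the MVCC visibility check. */
typedef struct {
    int  attrs[NATTS];
    bool visible;
} ToyTuple;

/* Hypothetical toy relation: just an array of tuples (no pages/buffers). */
typedef struct {
    const ToyTuple *tuples;
    int             ntuples;
} ToyRelation;

/* Equality-only scan key; PostgreSQL's scan keys are far more general. */
typedef struct {
    int attno;   /* 0-based attribute index */
    int value;   /* required value */
} ToyScanKey;

/* Iteration state, playing the role of HeapScanDesc. */
typedef struct {
    const ToyRelation *rel;
    int                nkeys;
    const ToyScanKey  *keys;
    int                next;   /* index of the next tuple to examine */
} ToyScanDesc;

/* Analogue of HeapKeyTest: does tup satisfy every key? */
static bool toy_key_test(const ToyTuple *tup, int nkeys, const ToyScanKey *keys)
{
    for (int i = 0; i < nkeys; i++) {
        assert(keys[i].attno >= 0 && keys[i].attno < NATTS);
        if (tup->attrs[keys[i].attno] != keys[i].value)
            return false;
    }
    return true;
}

/* Analogue of initscan: (re)set the cursor; shared by begin and rescan. */
static void toy_initscan(ToyScanDesc *scan) { scan->next = 0; }

/* Analogue of heap_beginscan: allocate and initialise the scan state. */
ToyScanDesc *toy_beginscan(const ToyRelation *rel, int nkeys, const ToyScanKey *keys)
{
    ToyScanDesc *scan = malloc(sizeof *scan);
    if (scan == NULL) return NULL;
    scan->rel = rel;
    scan->nkeys = nkeys;
    scan->keys = keys;
    toy_initscan(scan);
    return scan;
}

/* Restart the scan from the beginning, reusing the same descriptor. */
void toy_rescan(ToyScanDesc *scan) { toy_initscan(scan); }

/* Analogue of heap_getnext (forward only): return the next tuple that is
   visible and matches the keys, or NULL when the scan is exhausted. */
const ToyTuple *toy_getnext(ToyScanDesc *scan)
{
    while (scan->next < scan->rel->ntuples) {
        const ToyTuple *tup = &scan->rel->tuples[scan->next++];
        if (tup->visible && toy_key_test(tup, scan->nkeys, scan->keys))
            return tup;
    }
    return NULL;
}

/* Analogue of heap_endscan: free the scan state. */
void toy_endscan(ToyScanDesc *scan) { free(scan); }
```

A caller follows the same begin / getnext-until-NULL / end shape as the real API. All iteration state lives in the descriptor, so in this toy several scans over the same relation can be open at once without interfering.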
