Distributed Systems and Cloud Computing course, A.Y. 2019/20, Valeria Cardellini, Laurea Magistrale (MSc) in Computer Engineering

Virtualization

Macroarea di Ingegneria, Dipartimento di Ingegneria Civile e Ingegneria Informatica

Valeria Cardellini - SDCC 2019/20

Virtualization

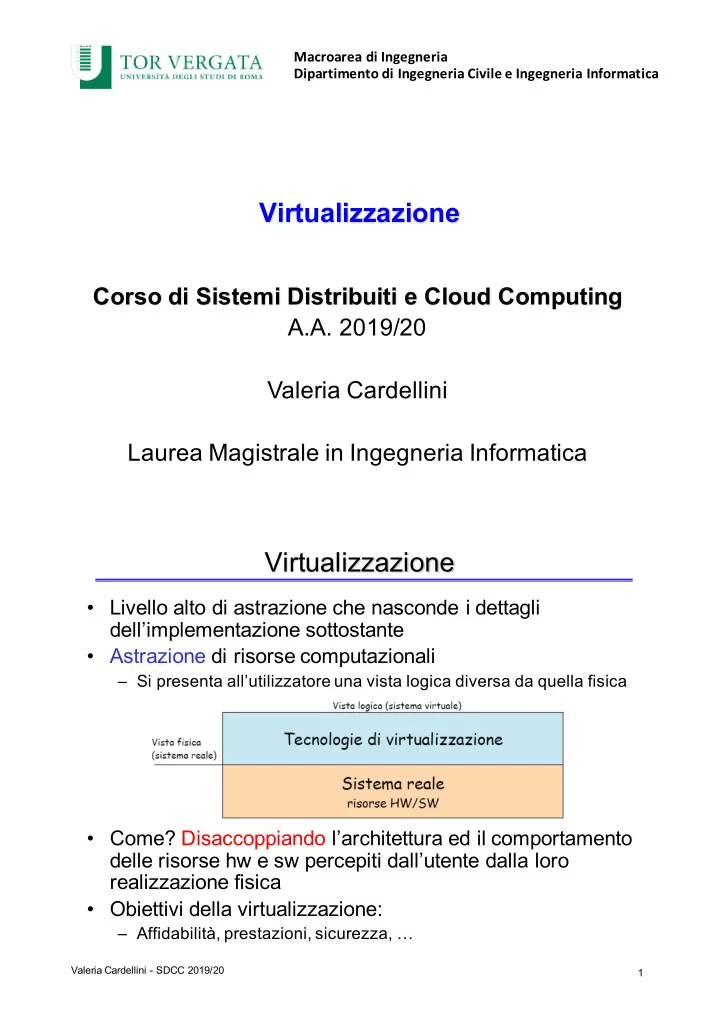

- A high level of abstraction that hides the details of the underlying implementation

- Abstraction of computational resources

– The user is presented with a logical view that differs from the physical one

- How? By decoupling the architecture and behavior of hardware and software resources, as perceived by the user, from their physical realization

- Goals of virtualization:

– Reliability, performance, security, …
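
The decoupling of the logical view from the physical one can be illustrated with a minimal sketch. It is not taken from the course material: the `PhysicalDisk` and `VirtualDisk` classes and the block mapping are hypothetical names chosen only for illustration. The user sees a single disk with contiguous logical blocks, while the data actually lives in scattered blocks on two separate devices.

```python
class PhysicalDisk:
    """Hypothetical physical device: a fixed-size array of blocks."""

    def __init__(self, num_blocks):
        self.blocks = [b""] * num_blocks


class VirtualDisk:
    """Logical view: contiguous blocks 0..n-1 backed by arbitrary physical blocks."""

    def __init__(self, mapping):
        # mapping[i] = (physical_disk, physical_block_index) for logical block i
        self._mapping = mapping

    def __len__(self):
        return len(self._mapping)

    def write(self, logical_block, data):
        # Translate the logical address, then act on the physical resource.
        disk, index = self._mapping[logical_block]
        disk.blocks[index] = data

    def read(self, logical_block):
        disk, index = self._mapping[logical_block]
        return disk.blocks[index]


# Two physical disks, exposed to the user as one 3-block virtual disk.
disk_a = PhysicalDisk(4)
disk_b = PhysicalDisk(4)
vdisk = VirtualDisk([(disk_a, 3), (disk_b, 0), (disk_a, 1)])

vdisk.write(1, b"hello")  # the user only knows about logical block 1
```

Because the user code depends only on the logical addresses, the mapping could be changed (for example, to move a block to a different device) without the user noticing.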
