SLIDE 1

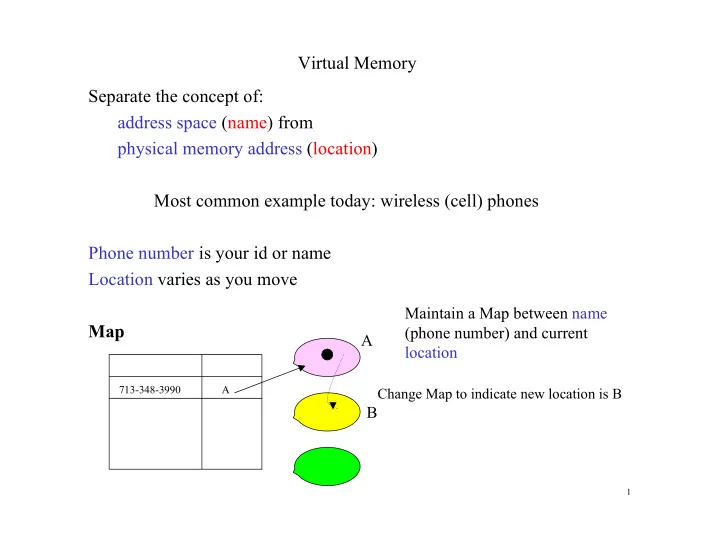

Virtual Memory

- Separate the concept of the address space (name) from the physical memory address (location)
- Most common example today: wireless (cell) phones
  - Phone number is your ID or name
  - Location varies as you move
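To make the name/location split concrete, here is a minimal sketch of address translation. It assumes 4 KiB pages and a per-process page table modeled as a plain dict from virtual page number to physical frame number. `PAGE_SIZE`, `translate`, and the mappings are hypothetical illustrations, not any particular OS's implementation.

```python
# Minimal sketch (assumptions: 4 KiB pages, dict-based page table).
# The virtual address is the "name"; the physical address is the "location".

PAGE_SIZE = 4096  # assumed page size in bytes


def translate(page_table: dict[int, int], vaddr: int) -> int:
    """Translate a virtual address to a physical address via the page table."""
    vpn, offset = divmod(vaddr, PAGE_SIZE)  # virtual page number and offset within the page
    if vpn not in page_table:
        # A real system would raise a page fault and let the OS handle it.
        raise LookupError(f"page fault: virtual page {vpn} is not mapped")
    frame = page_table[vpn]  # physical frame currently holding this page
    return frame * PAGE_SIZE + offset


page_table = {0: 7, 1: 3}  # hypothetical mappings
print(hex(translate(page_table, 0x1234)))  # page 1 lives in frame 3

page_table[1] = 5  # the OS relocates page 1: same name, new location
print(hex(translate(page_table, 0x1234)))  # page 1 now lives in frame 5
```

The program keeps using the same virtual address `0x1234`; only the table entry changes when the page moves, just as a caller keeps dialing the same number while the phone changes cells.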

[Figure: a Map binds phone number 713-348-3990 to location A. When the phone moves from A to B, the Map is changed to indicate that the new location is B.]

Maintain a Map between name (phone number) and current location
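The phone analogy itself can be sketched as a small lookup table keyed by the fixed name. The class `LocationMap` and its methods are hypothetical, chosen only to mirror the slide: the number never changes, and moving the phone updates a single map entry.

```python
# Hypothetical sketch of the slide's Map: fixed name (phone number) -> current location.


class LocationMap:
    def __init__(self) -> None:
        self._where: dict[str, str] = {}  # phone number -> current cell/location

    def register(self, number: str, location: str) -> None:
        """Record where a phone is when it first appears."""
        self._where[number] = location

    def move(self, number: str, new_location: str) -> None:
        """Update only the map entry; the phone number itself is unchanged."""
        if number not in self._where:
            raise KeyError(number)
        self._where[number] = new_location

    def locate(self, number: str) -> str:
        """Look up the current location for a given name."""
        return self._where[number]


m = LocationMap()
m.register("713-348-3990", "A")
m.move("713-348-3990", "B")  # the phone moves from A to B
print(m.locate("713-348-3990"))
```

Anyone who calls `locate` with the number gets the current location without needing to know that the phone moved.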
