SLIDE 1 Virtual Memory

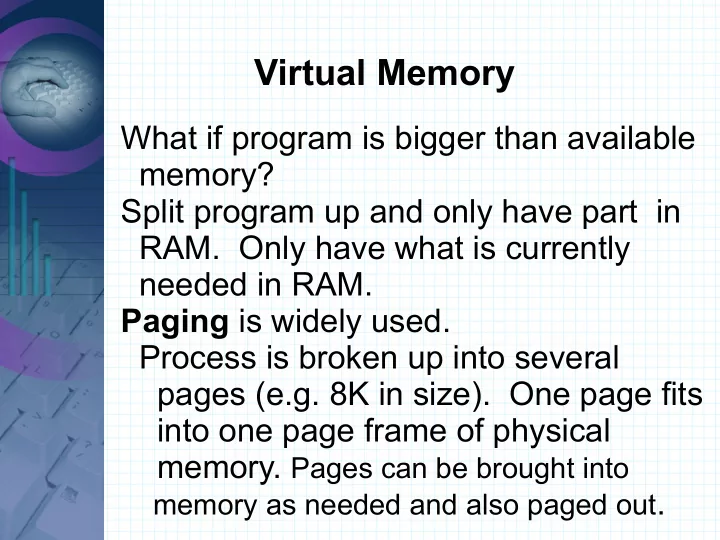

What if a program is bigger than available memory? Split the program up and keep only part of it in RAM: only what is currently needed.

Paging is widely used. A process is broken up into several pages (e.g. 8K in size). One page fits into one page frame of physical memory. Pages can be brought into memory as needed and also paged out.

SLIDE 2 Virtual Memory

Virtual memory vs. physical memory

The MMU (Memory Management Unit) maps virtual addresses to physical addresses. Pages are of size 2^n.

- ex. If addresses are 16 bit and page size is 1k, how many pages big can a process be?

SLIDE 3 Paging

If addresses are 16 bit and page size is 1k, how many pages big can a process be? With a 1k page size, need 10 bits for the offset within a page (2^10 = 1024).

That leaves 6 bits to address pages. Max process size is 2^6 = 64 1k pages.

SLIDE 4 Paging

User process: 2700 bytes. With a 1k page size it occupies Page 0, Page 1 and Page 2.

Relative (virtual) address = 1502

SLIDE 5 Paging

Relative (virtual) address = 1502 = 000001 0111011110 = page 1, offset 478

1502 - 1024 = 478, so the address lies in Page 1.

Do example with a 4k page size instead
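The split of a virtual address into page number and offset can be sketched as below. This is only an illustration; the function name `split_address` is made up here, and the page size is assumed to be a power of two.

```python
def split_address(vaddr: int, page_size: int) -> tuple[int, int]:
    """Return (page number, offset). Assumes page_size is a power of two."""
    offset_bits = page_size.bit_length() - 1   # 1024 -> 10 offset bits
    page = vaddr >> offset_bits                # high bits select the page
    offset = vaddr & (page_size - 1)           # low bits are the offset
    return page, offset

print(split_address(1502, 1024))  # (1, 478)
print(split_address(1502, 4096))  # (0, 1502)
```

With a 4k page size the whole address fits inside Page 0, so the offset is just 1502.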

SLIDE 6 Paging

The page table tells how to find the physical address from the virtual address. Look in the page table to see if the page is in memory or not. If so, look up the page frame number. If not, generate a page fault and bring the page into memory. One of the things in a page table entry needs to be a present/absent bit.
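A minimal sketch of that lookup, assuming 1k pages and a page table held as a simple list. The names `Entry`, `PageFault` and `translate` are hypothetical, and a real MMU does this in hardware.

```python
from dataclasses import dataclass

PAGE_SIZE = 1024  # assumption: 1k pages


class PageFault(Exception):
    """Raised when the referenced page is not present in memory."""


@dataclass
class Entry:
    present: bool   # present/absent bit
    frame: int = 0  # page frame number, meaningful only if present


def translate(page_table: list[Entry], vaddr: int) -> int:
    page, offset = divmod(vaddr, PAGE_SIZE)
    entry = page_table[page]
    if not entry.present:
        raise PageFault(page)  # the OS would bring the page in and retry
    return entry.frame * PAGE_SIZE + offset


table = [Entry(True, 5), Entry(True, 2), Entry(False)]
print(translate(table, 1502))  # page 1 -> frame 2: 2*1024 + 478 = 2526
```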

SLIDE 7 Paging

The page table mapping must be fast and the table can be very large.

- ex. 32 bit addr and 8k page size has 2^(32-13) = 2^19 = 524288 pages. 64 bit address space ... very big!

Each process needs its own page table. With each instruction 1, 2 or more memory references are made, so the mapping must be fast.
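The page-count arithmetic can be checked with a few lines. The helper name `max_pages` is an assumption made for this sketch.

```python
def max_pages(address_bits: int, page_size: int) -> int:
    """Number of pages in an address space. Assumes page_size is a power of two."""
    offset_bits = page_size.bit_length() - 1
    return 2 ** (address_bits - offset_bits)

print(max_pages(16, 1024))   # 64
print(max_pages(32, 8192))   # 524288
```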

SLIDE 8 Paging

One simple idea for implementing a page table is to have it stored in a set of registers. Problem is the expense of loading on context switches.

Another idea is to have the page table in memory: slow.

Multilevel page tables

- ex. Split address up into 3 parts

Have several page tables and don't have them all in memory at once.
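A sketch of a two-level lookup where the address is split into 3 parts. The 10/10/12 bit split of a 32-bit address is an assumption chosen for illustration, as are the function names; the dictionaries stand in for page tables, and a missing key models a second-level table that is not in memory.

```python
TOP_BITS, SECOND_BITS, OFFSET_BITS = 10, 10, 12  # assumed split of a 32-bit address


def split_two_level(vaddr: int) -> tuple[int, int, int]:
    offset = vaddr & ((1 << OFFSET_BITS) - 1)
    second = (vaddr >> OFFSET_BITS) & ((1 << SECOND_BITS) - 1)
    top = (vaddr >> (OFFSET_BITS + SECOND_BITS)) & ((1 << TOP_BITS) - 1)
    return top, second, offset


def walk(top_table: dict, vaddr: int) -> int:
    top, second, offset = split_two_level(vaddr)
    second_table = top_table[top]   # KeyError: that second-level table is absent
    frame = second_table[second]
    return (frame << OFFSET_BITS) | offset


top_table = {1: {3: 7}}             # only one second-level table exists
print(split_two_level(0x00403004))  # (1, 3, 4)
print(walk(top_table, 0x00403004))  # 28676
```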

SLIDE 9 Structure of a page table entry

- Page frame number

- Present/absent bit

- Protection (r,w,x) bits

- Modified bit (dirty bit)

- Referenced bit

- Caching disabled
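One way to picture these fields is as bits packed into a single integer. The bit positions below are hypothetical; real layouts differ between architectures.

```python
# Hypothetical bit layout, for illustration only.
PRESENT        = 1 << 0
PROT_R         = 1 << 1
PROT_W         = 1 << 2
PROT_X         = 1 << 3
MODIFIED       = 1 << 4   # dirty bit
REFERENCED     = 1 << 5
CACHE_DISABLED = 1 << 6
FRAME_SHIFT    = 12       # frame number stored in the bits above the flags


def make_pte(frame: int, flags: int) -> int:
    return (frame << FRAME_SHIFT) | flags


def frame_of(pte: int) -> int:
    return pte >> FRAME_SHIFT


pte = make_pte(42, PRESENT | PROT_R | MODIFIED)
print(frame_of(pte), bool(pte & MODIFIED), bool(pte & REFERENCED))  # 42 True False
```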

SLIDE 10 Translation Lookaside Buffers

Having the page table in memory slows performance down. The principle of locality says only a few entries in a page table are used a lot. The TLB is hardware to map virtual addresses to physical addresses.

Basically it is a cache that holds part of the page table. On many processors, management of the TLB is done in software. The TLB will have hits and misses, so good management is important.
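A software model of the idea, not how the hardware is built: a small cache in front of the page table that counts hits and misses. The class name `TLB` and the choice to evict the least recently used entry are assumptions for this sketch.

```python
from collections import OrderedDict


class TLB:
    def __init__(self, capacity: int, page_table: dict):
        self.capacity = capacity
        self.page_table = page_table      # virtual page -> frame
        self.entries = OrderedDict()      # cached subset of the page table
        self.hits = self.misses = 0

    def lookup(self, page: int) -> int:
        if page in self.entries:
            self.hits += 1
            self.entries.move_to_end(page)        # mark as most recently used
            return self.entries[page]
        self.misses += 1
        frame = self.page_table[page]             # slow path: go to the page table
        if len(self.entries) >= self.capacity:
            self.entries.popitem(last=False)      # evict least recently used entry
        self.entries[page] = frame
        return frame


tlb = TLB(2, {0: 5, 1: 2, 2: 9})
for p in [0, 1, 0, 0, 2, 1]:
    tlb.lookup(p)
print(tlb.hits, tlb.misses)  # 2 4
```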

SLIDE 11 More on page tables

Page tables can be large and take up a lot of space (e.g. 1 million entries). With 64-bit addressing the page table size is way too large for memory (e.g. 30 million GB). A solution is to use an inverted page table: have an entry for each page frame of real memory. The result is much smaller. An entry records which process and virtual page is in the frame.

SLIDE 12 Inverted Page Table

However, virtual-to-physical address translation is much harder: must search the inverted page table entries for the process and virtual page number. Use the TLB to solve this; the search is then needed only on TLB misses. Could use a hash table to speed up lookups.
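A sketch of an inverted page table with both the slow linear search and a hash-based lookup. The class and method names are made up for this example, and a Python dict plays the role of the hash table.

```python
class InvertedPageTable:
    def __init__(self, num_frames: int):
        self.frames = [None] * num_frames  # frames[i] = (pid, vpage) or None
        self.index = {}                    # hash table: (pid, vpage) -> frame

    def map(self, frame: int, pid: int, vpage: int) -> None:
        old = self.frames[frame]
        if old is not None:
            del self.index[old]            # previous occupant is no longer resident
        self.frames[frame] = (pid, vpage)
        self.index[(pid, vpage)] = frame

    def lookup_linear(self, pid: int, vpage: int):
        """Search every frame entry: one entry per physical frame."""
        for frame, owner in enumerate(self.frames):
            if owner == (pid, vpage):
                return frame
        return None                        # not resident: page fault

    def lookup_hashed(self, pid: int, vpage: int):
        return self.index.get((pid, vpage))


ipt = InvertedPageTable(4)
ipt.map(2, pid=7, vpage=100)
print(ipt.lookup_linear(7, 100), ipt.lookup_hashed(7, 100))  # 2 2
```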

SLIDE 13 Page Replacement Algorithms

How to choose which page to evict from memory? Need to evict to make room for others. The fewer page faults, the better the performance. When a page is selected it will need to be written out to disk if it has been modified. Otherwise the new page can just overwrite it. Want to select a page that will not be needed in the near future.

Same issues occur with cache design.

SLIDE 14 Optimal Page Replacement Alg.

Minimizes page faults. If a page will not be used again, select it. Otherwise, select the page that will not be needed for the longest time. Easy as that. Impossible to implement though, because it requires knowing future references. Can be used to compare how good other algorithms might be.
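Offline, with the whole reference string known in advance, the optimal policy can be simulated. The sketch below uses the reference string from the FIFO example in these slides; the function name `optimal_faults` is an assumption.

```python
def optimal_faults(refs: list, num_frames: int) -> int:
    frames, faults = [], 0
    for i, page in enumerate(refs):
        if page in frames:
            continue
        faults += 1
        if len(frames) < num_frames:
            frames.append(page)
            continue
        future = refs[i + 1:]

        def next_use(p):
            # Pages never used again count as infinitely far away.
            return future.index(p) if p in future else float("inf")

        victim = max(frames, key=next_use)   # needed furthest in the future
        frames[frames.index(victim)] = page
    return faults


refs = [3, 2, 0, 4, 3, 2, 1, 3, 2, 0, 4, 1]
print(optimal_faults(refs, 3))  # 7
print(optimal_faults(refs, 4))  # 6
```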

SLIDE 15 Not Recently Used (NRU)

Select a page that hasn't been used recently. May not be needed in the near future. Usually works ok. One way to implement is to look at the referenced and modified bits for the pages. The referenced bit is set to 1 when the page is referenced (read or written) and cleared to 0 on clock interrupts. Get 4 combinations.

SLIDE 16 Not Recently Used (NRU)

Select a page randomly from the lowest class with members.

Class  Ref  Mod
0      0    0
1      0    1
2      1    0
3      1    1
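The class number is just 2 x Ref + Mod, so picking a victim can be sketched as below. The function names and the dict-based page list are assumptions for illustration.

```python
import random


def nru_class(referenced: int, modified: int) -> int:
    return 2 * int(referenced) + int(modified)


def nru_victim(pages: dict, rng=random):
    """pages maps a page name to its (referenced, modified) bits."""
    lowest = min(nru_class(r, m) for r, m in pages.values())
    candidates = [p for p, (r, m) in pages.items() if nru_class(r, m) == lowest]
    return rng.choice(candidates)   # random pick within the lowest non-empty class


pages = {"A": (1, 1), "B": (0, 1), "C": (1, 0)}
print(nru_victim(pages))  # B (the only page in class 1)
```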

SLIDE 17 First-In First-Out (FIFO)

Select the page that has been in memory the longest. Has problems. Rarely used. Suffers from Belady's Anomaly.

- Possible to get more page faults with more page frames than with fewer page frames.

Ex: 5 pages 0-4 referenced: 3 2 0 4 3 2 1 3 2 0 4 1

Calculate # of page faults with 3 and 4 page frames using FIFO.

SLIDE 18 Belady's Anomaly

Ex: 5 pages 0-4 referenced: 3 2 0 4 3 2 1 3 2 0 4 1

Calculate # of page faults with 3 and 4 page frames using FIFO. With 3 frames get 9 page faults. With 4 frames get 10 page faults. Stack algorithms like LRU and optimal do not suffer from this.
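The fault counts for this example can be reproduced with a short FIFO simulation. The function name `fifo_faults` is chosen for this sketch.

```python
from collections import deque


def fifo_faults(refs: list, num_frames: int) -> int:
    frames = deque()          # oldest page at the left
    faults = 0
    for page in refs:
        if page in frames:
            continue          # hit: FIFO order does not change
        faults += 1
        if len(frames) == num_frames:
            frames.popleft()  # evict the page that has been in memory longest
        frames.append(page)
    return faults


refs = [3, 2, 0, 4, 3, 2, 1, 3, 2, 0, 4, 1]
print(fifo_faults(refs, 3))  # 9
print(fifo_faults(refs, 4))  # 10
```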

SLIDE 19 Second Chance Algorithm

Improvement of FIFO. It looks at the referenced bit of the oldest page. If the bit is 0, select that page. If it is 1, clear the bit and put the page at the end of the line.

Clock algorithm

- Same as second chance except pages are kept in a circular list.
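A sketch of the clock variant. It assumes a newly loaded page starts with its referenced bit set to 1, and the function name `clock_run` is hypothetical.

```python
def clock_run(refs: list, num_frames: int):
    pages = [None] * num_frames   # circular list of resident pages
    ref_bit = [0] * num_frames
    hand = 0
    faults = 0
    for page in refs:
        if page in pages:
            ref_bit[pages.index(page)] = 1   # hit: set the referenced bit
            continue
        faults += 1
        # Pages with R = 1 get a second chance: clear the bit and move on.
        while pages[hand] is not None and ref_bit[hand] == 1:
            ref_bit[hand] = 0
            hand = (hand + 1) % num_frames
        pages[hand] = page                   # evict (or fill an empty frame)
        ref_bit[hand] = 1                    # assumption: loaded pages start with R = 1
        hand = (hand + 1) % num_frames
    return faults, pages


print(clock_run([1, 2, 3, 4, 2, 5], 3))  # (5, [4, 2, 5])
```

In this run page 2 is older than page 3, but it was referenced again, so page 3 is evicted when page 5 arrives. Plain FIFO would have evicted page 2.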

SLIDE 20 Least Recently Used (LRU)

As a result of locality in programs, pages used recently are likely to be used in the near future. So select the page that was least recently used. An exact implementation requires updating a linked list on most memory references. Not feasible. Instead, could have a hardware counter whose value is stored in the page table entry on each reference, and select the page with the lowest value. LRU can be simulated in software.
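An exact LRU simulation over a reference string, using the list-update approach that is too expensive for real hardware. The function name `lru_faults` is an assumption; the reference string is the one from the FIFO example in these slides.

```python
def lru_faults(refs: list, num_frames: int) -> int:
    frames = []               # least recently used page first
    faults = 0
    for page in refs:
        if page in frames:
            frames.remove(page)        # will be re-appended as most recent
        else:
            faults += 1
            if len(frames) == num_frames:
                frames.pop(0)          # evict the least recently used page
        frames.append(page)
    return faults


refs = [3, 2, 0, 4, 3, 2, 1, 3, 2, 0, 4, 1]
print(lru_faults(refs, 3))  # 10
print(lru_faults(refs, 4))  # 8
```

Unlike FIFO on the same string, adding a frame here reduces the number of faults.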

SLIDE 21 Not Frequently Used (NFU)

Is one software way to simulate LRU. It uses a software counter stored in each page table entry that starts at 0. The referenced bit is added to the counter at each clock interrupt. Select the page with the lowest counter. Keeps track of how many times in total a page has been used rather than how long ago. Will keep pages that were used a lot in the past, but may not have been used recently.

SLIDE 22 Not Frequently Used (NFU)

Can be fixed to simulate LRU well by treating the counter as a shift register. At each clock interrupt, right shift the counter 1 bit and put the referenced bit into the highest bit. This implements aging.
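A sketch of the aging step. The 8-bit counter width and the names `age` and `victim` are assumptions for this example.

```python
COUNTER_BITS = 8  # assumed counter width


def age(counters: dict, referenced: set) -> None:
    """One clock interrupt: shift right, then put the referenced bit in the top bit."""
    for page in counters:
        counters[page] >>= 1
        if page in referenced:
            counters[page] |= 1 << (COUNTER_BITS - 1)


def victim(counters: dict):
    return min(counters, key=counters.get)   # lowest counter = used longest ago


counters = {"A": 0, "B": 0}
age(counters, {"A"})   # tick 1: only A was referenced
age(counters, {"B"})   # tick 2: only B was referenced
print(counters)         # {'A': 64, 'B': 128}
print(victim(counters)) # A
```

Plain NFU would give both pages a count of 1 here; aging prefers to evict A because B was used more recently.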

SLIDE 23 Working Set Algorithm

Demand Paging: load pages on demand when they are needed. Start off with no pages of the process loaded. Only a subset of all pages are referenced repeatedly as a result of locality.

Working Set: pages a process is currently using.

Thrashing: a process causing a page fault every few instructions.

Try to keep the working set in memory.

SLIDE 24 Working Set Algorithm

Prepaging: load pages before they are needed. Programs generate lots of page faults when first started, then use a small working set (ex. a loop). OS must keep track of pages in the working set. Could define the working set as the pages used in the last k instructions. Can approximate by using execution time instead. An algorithm would want to evict the page referenced longest ago.

SLIDE 25 WSClock page replacement Alg.

Uses the clock algorithm based on working set info. Simple to implement. Gives good performance. Much used. Uses a circular list of page frames. Info for each entry includes time of last use, referenced and modified bits.

SLIDE 26 WSClock page replacement Alg.

The page currently being pointed at is examined first.

- If referenced is 1, set it to 0 and look at the next page.

- If referenced is 0 and the page is old and clean, evict it (not in working set).

- If it is old and dirty, schedule a write to disk and look at the next page.

- If the entire list is searched and a write has been scheduled, keep looking.

- If no writes were scheduled, all pages are in the working set. If none are clean, evict the current page.

SLIDE 27 Paging Design Issues

Local vs. Global Allocation Policies

How to allocate memory to different processes? How many frames does each process get? When a page fault occurs, should all the pages in memory be considered for eviction or just the pages belonging to the process generating the fault? Global algorithms usually work better.

SLIDE 28 Paging Design Issues

Working set size can change. By watching the page fault frequency (PFF), can increase or decrease the number of frames allocated to a process. What to do when PFF is high for all processes and the system is thrashing?

- get more memory: no

- kill processes: no

- swap out some processes: yes. This is load control, which reduces the degree of multiprogramming.

SLIDE 29 Paging Design Issues

Page Size

The OS can group frames together to get a larger page size.

Reasons for a small page size:

- Less internal fragmentation

- Less unused code/data in memory

Reasons for a larger page size:

- Fewer pages -> smaller page table

- Loading a larger page from disk is almost as fast as loading a smaller page

SLIDE 30 Paging Design Issues

4k and 8k page sizes are commonly used. With RAM getting bigger, larger page sizes are starting to be used. No optimum size. Page size can be a compile time option.

SLIDE 31 Separating Instruction and Data Spaces

Instead of having one address space, could have separate spaces for instructions and data. Some caches do this. Could then address twice the total space.

SLIDE 32 Shared Pages

May have several copies of same program running – same code. Have them share pages. With read only pages no problem. What about when a page gets written to?

SLIDE 33 Cleaning Policy

Best to keep some page frames free so page faults can be serviced quickly. Paging daemon: if free memory is too low, start evicting pages. Also writes dirty pages out to disk.

Use a two-handed clock. The front hand takes care of writing out dirty pages. The back hand does the normal clock algorithm for page replacement.

SLIDE 34 OS is involved with paging processes four times

- Process creation: must allocate initial size and make the page table. Must init swap space for the process. Can page program text from the executable file.

- Process is scheduled for execution: must reset the MMU and flush the TLB. Start using this process' page table. May bring in some pages.

SLIDE 35 OS is involved with paging processes four times

- At a page fault: find the needed page on disk and bring it in.

- Process exits: free up the page table, pages and swap space for the process. If pages are shared with another process then leave them.

SLIDE 36 Other Paging issues

Pages can be locked in memory so they are not paged out.

Backing store issues

- keep entire core image on disk

- processes can change in size

- keep only pages not in main memory on disk.

SLIDE 37 Segmentation

Segments are independent address spaces of varying sizes. Addresses: segment # + offset into the segment. Programmer knows about segments and uses them.
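A sketch of translating a (segment #, offset) address. It assumes each segment is described by a base address and a length, which is a common textbook model rather than something stated on the slide; all names here are hypothetical.

```python
class SegmentationFault(Exception):
    """Raised when the offset falls outside the segment."""


def translate_segment(segment_table: dict, segment: int, offset: int) -> int:
    base, limit = segment_table[segment]     # assumed (base, length) per segment
    if not 0 <= offset < limit:
        raise SegmentationFault((segment, offset))
    return base + offset


# Segments have different sizes and each one starts at offset 0.
segment_table = {0: (4000, 1000), 1: (9000, 300)}
print(translate_segment(segment_table, 1, 20))  # 9020
```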

SLIDE 38 Segmentation Advantages

- Simplifies handling of data that changes size.

- With addresses starting at 0, can easily link procedures compiled separately (changing one procedure does not change the addressing of others).

- Makes sharing easier, e.g. shared libraries.

- Different segments can have different protections (execute only, read only, etc).

SLIDE 39 Segmentation

Segments are not fixed size like pages. Memory will have segments and holes.

- external fragmentation -> compaction

Segmentation with pages is possible. Page large segments. Get advantages of both. Need a segment #, page # and offset.

SLIDE 40 Unix process Memory Layout

3 sections

- Text segment: code, read only

- can be shared between processes

- Data segment: variables and other data, including BSS (uninitialized data)

malloc() uses the brk() system call, which sets the size of the data segment.

SLIDE 41 Unix process Memory Layout

Figure: 3 sections of a Unix process (Stack, BSS, Text)

The stack grows down and the data section grows up. (toward each other)

SLIDE 42 Unix Memory

Memory-mapped files: mmap()

- read and write files in memory

- random access easier than with I/O calls

- multiple processes can mmap the same file: easy data sharing

Older Unix swapped. Demand paging was added later. The page daemon implements it using a two-handed clock.
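As a small illustration of memory-mapped files, Python's standard `mmap` module wraps the mmap() call. The sketch below maps a temporary file (file contents chosen arbitrarily) and changes it through memory instead of seek/write calls.

```python
import mmap
import os
import tempfile

# Create a small scratch file to map.
with tempfile.NamedTemporaryFile(delete=False) as f:
    f.write(b"hello paging")
    path = f.name

with open(path, "r+b") as f:
    with mmap.mmap(f.fileno(), 0) as m:   # length 0 maps the whole file
        m[0:5] = b"HELLO"                 # random-access write straight into the mapping
        m.flush()                         # push the change back to the file

with open(path, "rb") as f:
    result = f.read()
os.remove(path)
print(result)  # b'HELLO paging'
```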

SLIDE 43 Unix page daemon

Two-handed clock, global algorithm.

- First pass: usage bit is cleared.

- Second pass: removes pages not used.

lotsfree variable: want at least that much memory free.

SYSV added min and max variables instead of just lotsfree

- when mem < min, free pages until max is available.

SLIDE 44 Paging finish

Linux uses demand paging, no pre-fetching.

- 3 level page table

- Buddy system is used for dynamically loaded modules.

- Swap partitions and swap files