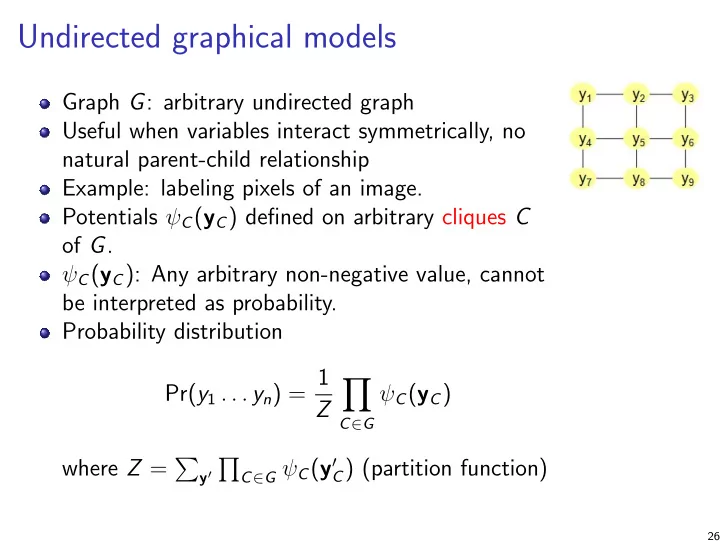

Undirected graphical models

Graph G: an arbitrary undirected graph.

- Useful when variables interact symmetrically, with no natural parent-child relationship.
- Example: labeling the pixels of an image.
- Potentials $\psi_C(y_C)$ are defined on arbitrary cliques $C$ of G.
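To make the pixel-labeling example concrete, here is a minimal Python sketch, assuming a 4-connected pixel grid with integer labels. A 4-connected grid contains no triangles, so its maximal cliques are exactly the pairs of neighbouring pixels, and pairwise potentials cover every clique. The function names, the Potts-style potential, and its values 2.0/1.0 are illustrative assumptions, not part of the original model. The potential is symmetric and non-negative but is not normalized.

```python
from typing import List, Tuple

Pixel = Tuple[int, int]  # (row, column)


def grid_cliques(height: int, width: int) -> List[Tuple[Pixel, Pixel]]:
    """Pairwise cliques of a 4-connected grid: each pixel is linked to its right and lower neighbour."""
    cliques = []
    for r in range(height):
        for c in range(width):
            if c + 1 < width:
                cliques.append(((r, c), (r, c + 1)))  # horizontal neighbour pair
            if r + 1 < height:
                cliques.append(((r, c), (r + 1, c)))  # vertical neighbour pair
    return cliques


def smoothness_potential(a: int, b: int) -> float:
    """Hypothetical Potts-style psi_C: symmetric, non-negative, larger when neighbouring labels agree."""
    return 2.0 if a == b else 1.0


print(len(grid_cliques(3, 3)))  # 12
print(smoothness_potential(0, 1) == smoothness_potential(1, 0))  # True
```

The symmetric potential reflects the absence of a parent-child direction: swapping the two pixels of a clique does not change its score.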

$\psi_C(y_C)$ can take any non-negative value; it cannot be interpreted as a probability.

Probability distribution:

$$\Pr(y_1, \dots, y_n) = \frac{1}{Z} \prod_{C \in G} \psi_C(y_C), \qquad Z = \sum_{y'} \prod_{C \in G} \psi_C(y'_C)$$

where $Z$ is the partition function.
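The definition of $Z$ can be checked by brute force on a tiny model. The sketch below assumes a three-variable chain with pairwise cliques (0, 1) and (1, 2), binary labels, and a hypothetical Potts-style potential; all of these choices are illustrative. It enumerates every assignment to compute $Z$, which is exponential in $n$, so it only illustrates the formula and is not how $Z$ is computed for realistic models.

```python
from itertools import product
from math import prod
from typing import Callable, Dict, Sequence, Tuple

Clique = Tuple[int, ...]          # indices of the variables in a clique C
Potential = Callable[..., float]  # psi_C: non-negative score, not a probability


def unnormalized(y: Sequence[int], cliques: Dict[Clique, Potential]) -> float:
    """prod_C psi_C(y_C) for one full assignment y."""
    return prod(psi(*(y[i] for i in c)) for c, psi in cliques.items())


def partition_function(n: int, labels: Sequence[int], cliques: Dict[Clique, Potential]) -> float:
    """Z = sum over every assignment y' of prod_C psi_C(y'_C); brute force, O(len(labels) ** n)."""
    return sum(unnormalized(y, cliques) for y in product(labels, repeat=n))


def probability(y: Sequence[int], labels: Sequence[int], cliques: Dict[Clique, Potential]) -> float:
    """Pr(y) = prod_C psi_C(y_C) / Z."""
    return unnormalized(y, cliques) / partition_function(len(y), labels, cliques)


def potts(a: int, b: int) -> float:
    """Hypothetical pairwise potential: 2.0 when labels agree, 1.0 otherwise."""
    return 2.0 if a == b else 1.0


# Chain y0 - y1 - y2 with two pairwise cliques.
cliques = {(0, 1): potts, (1, 2): potts}
labels = [0, 1]
print(partition_function(3, labels, cliques))   # 18.0
print(probability((0, 0, 0), labels, cliques))  # 4/18 ≈ 0.2222
```

For this chain, fixing the middle variable and summing each end variable over both labels gives 2 + 1 = 3 per end, so each value of the middle variable contributes 3 × 3 = 9 and $Z = 18$. The all-zero assignment has unnormalized score 2 × 2 = 4, giving probability 4/18.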
