2013‐10‐09 1

Topic Modeling Lecture 9: October 9, 2013

CS886‐2 Natural Language Understanding University of Waterloo

CS886 Lecture Slides (c) 2013 P. Poupart 1

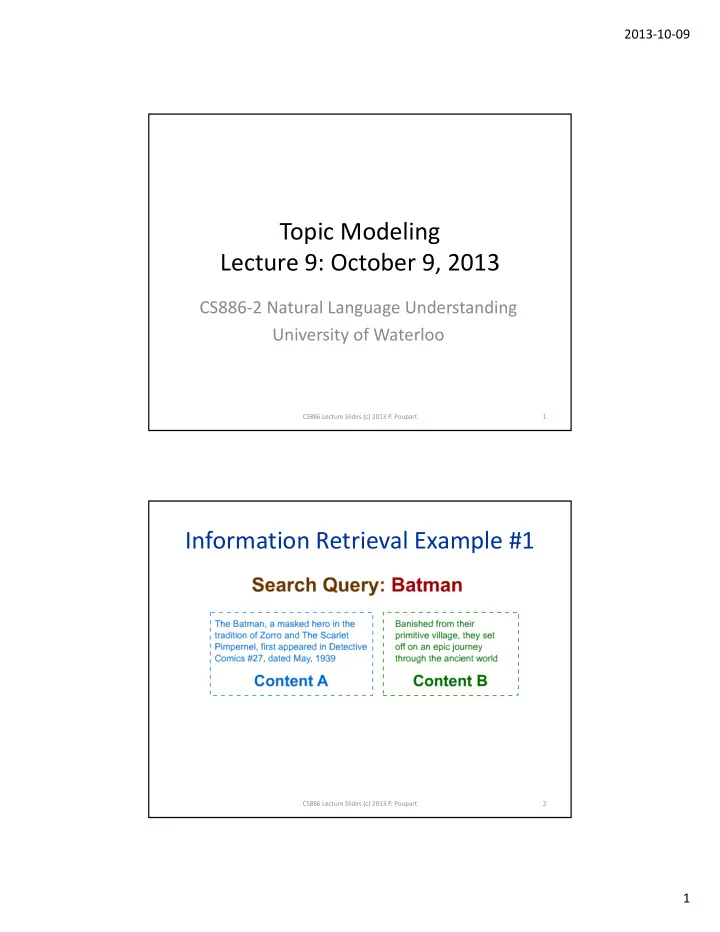

Information Retrieval Example #1


[Figure: densities Pr(p) plotted against p for Dir(p; 1, 1), Dir(p; 2, 8) and Dir(p; 20, 80)]
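The plot compares three Dirichlet densities over a single probability p, with 1 - p as the second component. The Python sketch below is minimal and assumes that two-component reading of the legend, in which Dir(p; a1, a2) is the Beta(a1, a2) density. It evaluates those densities and shows that Dir(p; 2, 8) and Dir(p; 20, 80) have the same mean, 0.2, while the larger parameters give a much smaller variance. The function names are hypothetical.

```python
import math


def dirichlet2_pdf(p: float, a1: float, a2: float) -> float:
    """Density of Dir(p; a1, a2) over (p, 1 - p), i.e. the Beta(a1, a2) density."""
    if not 0.0 < p < 1.0:
        raise ValueError("p must lie strictly between 0 and 1")
    # log of the normalizer B(a1, a2) = Gamma(a1) * Gamma(a2) / Gamma(a1 + a2)
    log_norm = math.lgamma(a1) + math.lgamma(a2) - math.lgamma(a1 + a2)
    log_density = (a1 - 1) * math.log(p) + (a2 - 1) * math.log(1 - p) - log_norm
    return math.exp(log_density)


def dirichlet2_mean_var(a1: float, a2: float) -> tuple[float, float]:
    """Mean and variance of the first component p under Dir(p; a1, a2)."""
    total = a1 + a2
    mean = a1 / total
    var = a1 * a2 / (total ** 2 * (total + 1))
    return mean, var


for a1, a2 in [(1, 1), (2, 8), (20, 80)]:
    mean, var = dirichlet2_mean_var(a1, a2)
    print(f"Dir(p; {a1}, {a2}): mean={mean:.2f}, var={var:.5f}, "
          f"density at p=0.2: {dirichlet2_pdf(0.2, a1, a2):.3f}")
```

Scaling both parameters by 10 keeps the ratio a1 / (a1 + a2), and therefore the mean, fixed. It also concentrates the density around that mean. Dir(p; 1, 1) is the uniform density on (0, 1).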


[Slide 18, partially recovered: a loop whose body reads "do … Sample … ~ Pr(…)"]
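Only the loop skeleton of this slide survives. For illustration only, suppose it describes the generative process of a Dirichlet-based topic model such as latent Dirichlet allocation. The slide text does not confirm this. Under that assumption, a minimal Python sketch of the sampling loop could look like the code below. It draws a Dirichlet sample by normalizing independent Gamma(alpha_k, 1) draws, which is a standard construction. All names, the symmetric hyperparameters `alpha` and `beta`, and the toy vocabulary are hypothetical.

```python
import random


def sample_dirichlet(alpha: list[float], rng: random.Random) -> list[float]:
    """Draw from Dir(alpha) by normalizing independent Gamma(alpha_k, 1) draws."""
    draws = [rng.gammavariate(a, 1.0) for a in alpha]
    total = sum(draws)
    return [d / total for d in draws]


def generate_corpus(num_docs, doc_length, num_topics, vocab, alpha, beta, seed=0):
    """Hypothetical LDA-style generative process (an assumption, not the slide's text)."""
    rng = random.Random(seed)
    # Each topic k is a distribution over the vocabulary: phi_k ~ Dir(beta, ..., beta).
    topics = [sample_dirichlet([beta] * len(vocab), rng) for _ in range(num_topics)]
    corpus = []
    for _ in range(num_docs):
        # Per-document topic proportions: theta_d ~ Dir(alpha, ..., alpha).
        theta = sample_dirichlet([alpha] * num_topics, rng)
        words = []
        for _ in range(doc_length):
            z = rng.choices(range(num_topics), weights=theta)[0]  # sample topic z ~ Pr(z | theta_d)
            w = rng.choices(vocab, weights=topics[z])[0]          # sample word w ~ Pr(w | phi_z)
            words.append((z, w))
        corpus.append(words)
    return topics, corpus


topics, corpus = generate_corpus(num_docs=2, doc_length=8, num_topics=2,
                                 vocab=["ballot", "vote", "goal", "match"],
                                 alpha=1.0, beta=0.5)
print(corpus[0])  # list of (topic, word) pairs for the first document
```

A fixed `seed` makes the corpus reproducible. The topic labels `z` are kept next to each word because inference procedures for such models typically try to recover exactly these hidden assignments.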




[Slide 30, partially recovered: "… to all non-evidence variables … do … Sample … ~ Pr(… | …, …) … ∑ …"]
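The surviving fragments describe two steps. The algorithm first assigns values to all non-evidence variables. It then repeatedly samples a variable from a conditional Pr(· | ·, ·) that is normalized by a sum ∑. This matches the usual Gibbs sampling loop. The Python sketch below is minimal and assumes that reading. It uses a hypothetical three-variable binary model A → B → C with evidence C = 1 and made-up probabilities. Each conditional Pr(X_i | x_{-i}, e) is obtained by normalizing the joint over the values of X_i. The Gibbs estimate is compared with exact enumeration. All function names are illustrative.

```python
import itertools
import random


def gibbs_sample(joint, num_vars, evidence, num_iters, burn_in=100, seed=0):
    """Estimate Pr(X_i = 1 | evidence) for binary variables by Gibbs sampling.

    joint(x) returns an unnormalized, strictly positive probability for a full
    assignment x (a tuple of 0/1); evidence maps variable index -> observed value.
    """
    rng = random.Random(seed)
    # Assign (random) values to all non-evidence variables; evidence stays fixed.
    x = [evidence.get(i, rng.randint(0, 1)) for i in range(num_vars)]
    free = [i for i in range(num_vars) if i not in evidence]
    counts = [0] * num_vars
    kept = 0
    for it in range(burn_in + num_iters):
        for i in free:
            # Pr(X_i = v | x_{-i}, e) is proportional to joint(x with X_i = v);
            # normalize by the sum over both values of X_i.
            weights = []
            for v in (0, 1):
                x[i] = v
                weights.append(joint(tuple(x)))
            x[i] = 1 if rng.random() < weights[1] / sum(weights) else 0
        if it >= burn_in:  # only count samples drawn after the burn-in period
            kept += 1
            for i in range(num_vars):
                counts[i] += x[i]
    return [c / kept for c in counts]


def example_joint(x):
    """Hypothetical chain A -> B -> C with made-up conditional probabilities."""
    a, b, c = x
    p_a = 0.3 if a else 0.7
    p_b = (0.8 if b else 0.2) if a else (0.1 if b else 0.9)
    p_c = (0.9 if c else 0.1) if b else (0.2 if c else 0.8)
    return p_a * p_b * p_c


def exact_posterior(joint, num_vars, evidence, i):
    """Pr(X_i = 1 | evidence) by brute-force enumeration, for comparison."""
    num = den = 0.0
    for x in itertools.product((0, 1), repeat=num_vars):
        if any(x[j] != v for j, v in evidence.items()):
            continue
        p = joint(x)
        den += p
        if x[i] == 1:
            num += p
    return num / den


est = gibbs_sample(example_joint, 3, {2: 1}, num_iters=20000)
exact = exact_posterior(example_joint, 3, {2: 1}, 0)
print(f"Gibbs estimate of Pr(A=1 | C=1): {est[0]:.3f}, exact: {exact:.3f}")
```

Because every joint probability in this toy model is positive, each conditional is well defined and the chain can reach every assignment consistent with the evidence. The estimate therefore approaches the exact posterior as `num_iters` grows.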


[Slide 32: an equation involving a conditional probability Pr(… | …); not recoverable from the extraction]
