This reduces to a generalized eigenvalue problem, i.e. to finding - - PowerPoint PPT Presentation

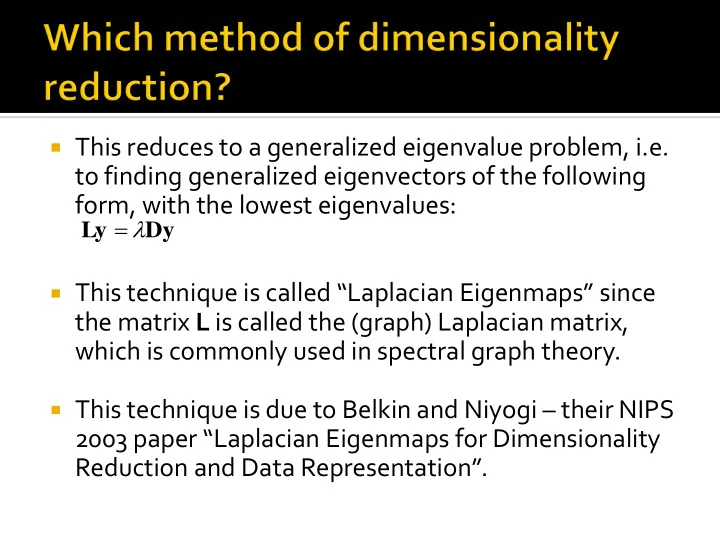

This reduces to a generalized eigenvalue problem, i.e. to finding the generalized eigenvectors of the following form, with the lowest eigenvalues: $Ly = \lambda Dy$. This technique is called Laplacian Eigenmaps since the matrix L is

Image source: https://people.cs.pitt.edu/~milos/courses/cs3750/lectures/class17.pdf

On this slide, N refers to the number of nearest neighbors per point (the other distances are set to infinity). The parameter for the Gaussian kernel needs to be selected carefully, especially if N is high.

Image source: https://people.cs.pitt.edu/~milos/courses/cs3750/lectures/class17.pdf

We need a method of dimensionality reduction which “preserves the neighbourhood structure” of the original high-dimensional points.

In other words, if $q_i$ and $q_j$ were close, their lower-dimensional projections $y_i$ and $y_j$ should also be close.

To define “closeness” mathematically, consider the following proximity matrix W:

$$W_{ij} = \exp\left(-\frac{\|q_i - q_j\|^2}{\sigma^2}\right)$$

where $\sigma$ is the parameter of the Gaussian kernel.

Higher values of $W_{ij}$ mean that $q_i$ and $q_j$ are nearby in the Euclidean sense. Lower values mean that $q_i$ and $q_j$ are far apart.
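The construction above can be sketched in a few lines of NumPy. This is only an illustrative sketch: the function name `laplacian_eigenmaps` and its parameters are hypothetical, the Laplacian is taken to be the standard graph Laplacian L = D − W with D the diagonal matrix of row sums of W (an assumption, since the slide text is cut off), and the exact scaling of the Gaussian kernel is also assumed.

```python
import numpy as np

def laplacian_eigenmaps(Q, n_neighbors=5, sigma=1.0, dim=2):
    """Embed the rows of Q into `dim` dimensions (hypothetical helper)."""
    n = Q.shape[0]
    # Pairwise squared Euclidean distances ||q_i - q_j||^2.
    sq = np.sum(Q**2, axis=1)
    d2 = np.maximum(sq[:, None] + sq[None, :] - 2.0 * Q @ Q.T, 0.0)
    # Gaussian proximity matrix (kernel scaling is an assumption).
    W = np.exp(-d2 / sigma**2)
    np.fill_diagonal(W, 0.0)
    # Keep only the nearest neighbours of each point; all other
    # distances are treated as infinite, i.e. W_ij = 0.
    idx = np.argsort(d2, axis=1)[:, 1:n_neighbors + 1]
    mask = np.zeros((n, n), dtype=bool)
    mask[np.repeat(np.arange(n), n_neighbors), idx.ravel()] = True
    mask |= mask.T  # symmetrise the neighbourhood graph
    W = W * mask
    deg = W.sum(axis=1)
    L = np.diag(deg) - W  # graph Laplacian (assumed definition)
    # Solve L y = lambda D y through the equivalent symmetric problem
    # D^{-1/2} L D^{-1/2} z = lambda z, with y = D^{-1/2} z.
    d_inv_sqrt = 1.0 / np.sqrt(deg)
    S = d_inv_sqrt[:, None] * L * d_inv_sqrt[None, :]
    evals, Z = np.linalg.eigh(S)  # eigenvalues in ascending order
    Y = d_inv_sqrt[:, None] * Z
    # Skip the trivial constant eigenvector (eigenvalue 0).
    return evals[1:dim + 1], Y[:, 1:dim + 1], W
```

The symmetric reformulation is used here only so that the sketch needs nothing beyond `np.linalg.eigh`; a generalized symmetric eigensolver would serve equally well.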

For each of the Q tomographic projections, create a reversed copy (to take care of angles between π and 2π).

Apply Laplacian Eigenmaps to reduce the dimensionality of these tomographic projections to 2, i.e. tomographic projection $q_i$ is mapped to $y_i = (y_{1i}, y_{2i})$, which gives the angle $\vartheta_i = \operatorname{atan}(y_{1i}/y_{2i})$.

Sort the tomographic projections as per the angles $\vartheta_i$.

Reconstruct the image based on angle estimates of the form 2πi/Q.
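The sorting and angle-assignment steps can be sketched as follows, assuming the 2-D embedding coordinates are already available as the rows of an array `Y`. The function name `order_by_embedding_angle` is hypothetical, and `arctan2` is used in place of a plain arctangent so that the full range from 0 to 2π is covered.

```python
import numpy as np

def order_by_embedding_angle(Y):
    """Y: (Q, 2) array of embedding coordinates (hypothetical helper).

    Returns the sort order and the equispaced angle assigned to each projection.
    """
    # Angle of each embedded point, wrapped into [0, 2*pi).
    angles = np.mod(np.arctan2(Y[:, 0], Y[:, 1]), 2.0 * np.pi)
    order = np.argsort(angles)
    # The i-th projection in sorted order receives the angle 2*pi*i/Q.
    n_proj = len(angles)
    assigned = np.empty(n_proj)
    assigned[order] = 2.0 * np.pi * np.arange(n_proj) / n_proj
    return order, assigned
```

The assigned angles are only defined up to a global rotation and reflection of the embedding, so the reconstruction is recovered up to the same ambiguity.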

Image source: Coifman et al., Graph Laplacian Tomography from Unknown Random Projections

Image source: Singer and Wu, Two-dimensional tomography from noisy projections taken at unknown random directions

For all three methods (moment-based, ordering-based, as well as those based on dimensionality reduction), the tomographic projections are very noisy.

They need to be denoised for optimal performance of the algorithm.

In these applications, the projections are extremely noisy.

Prior to the ordering algorithm, a denoising step is required to prevent erroneous ordering.

The denoising step can be performed using Principal Components Analysis (PCA).

Assuming a Gaussian noise model, the noise variance can be semi-automatically estimated from the regions in the projections which are completely blank (modulo noise).

An algorithm similar to the patch-based PCA denoising (seen in CS 663 last semester) can be employed.

Given a small patch in a projection vector, we can find small patches similar to it at spatially distant locations within the same projection vector or in projection vectors acquired from other angles.

This “non-local” principle can be combined with PCA for denoising.

Consider a set of clean projections $\{I_r\}$, r = 1 to P, corrupted by additive Gaussian noise of mean zero and standard deviation σ, to give noisy projections $J_r$ as follows: $J_r = I_r + N_r$, where $N_r$ follows a Gaussian distribution of mean 0 and standard deviation σ.

Given each $J_r$, we want to estimate $I_r$, i.e. we want to denoise $J_r$.

Consider a small p x 1 patch, denoted $q_{ref}$, in some $J_s$.

Step 1: We will collect together some L patches $\{q_1, q_2, \ldots, q_L\}$ from $\{J_r\}$, r = 1 to P, that are structurally similar to $q_{ref}$, i.e. pick the L nearest neighbors of $q_{ref}$.

Note: even if $J_s$ is noisy, there is enough information in it to judge similarity if we assume σ << average intensity of the true projection $I_s$.

Step 2: Assemble these L patches into a matrix of size p x L. Let us denote this matrix as $X_{ref}$.

Step 3: Find the eigenvectors of $X_{ref} X_{ref}^T$ to produce an eigenvector matrix V.

Step 4: Project each of the (noisy) patches $\{q_1, q_2, \ldots, q_L\}$ onto V and compute their eigencoefficient vectors, denoted as $\{\alpha_1, \alpha_2, \ldots, \alpha_L\}$, where $\alpha_i = V^T q_i$.

Step 5: Now, we need to manipulate the eigencoefficients of $q_{ref}$ in order to denoise it.

Step 5 (continued): We will follow a Wiener filter type of update:

$$\beta_{ref}(l) = \frac{\alpha_{ref}(l)}{1 + \dfrac{\sigma^2}{\bar{\alpha}^2(l)}}, \quad 1 \le l \le p$$

Note: $\alpha_{ref}$ is the vector of eigencoefficients of the reference (noisy) patch and contains p elements, of which the l-th element is $\alpha_{ref}(l)$. $\beta_{ref}$ is the vector of eigencoefficients of the filtered patch.

Here $\sigma^2$ is the noise variance (assumed known or can be estimated), and $\bar{\alpha}^2(l)$ is the estimate of the squared coefficient of the true signal:

$$\bar{\alpha}^2(l) = \max\left(0, \frac{1}{L}\sum_{i=1}^{L} \alpha_i^2(l) - \sigma^2\right)$$

Why this formula? We will see later.

Step 6: Reconstruct the reference patch as follows:

$$q_{ref}^{denoised} = V \beta_{ref}$$
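Steps 3 to 6 for a single reference patch can be sketched as below, assuming the p x L matrix of similar patches has already been assembled and that the noise standard deviation is known and positive. The function name `denoise_reference_patch` and the `ref_index` argument (the column holding the reference patch) are hypothetical. The Wiener-type gain is written in the algebraically equivalent form ᾱ²/(ᾱ² + σ²), so that a zero estimate gives a gain of zero instead of a division by zero.

```python
import numpy as np

def denoise_reference_patch(X_ref, sigma, ref_index=0):
    """Denoise one column of X_ref (p x L matrix of similar noisy patches)."""
    # Step 3: eigenvectors of X_ref X_ref^T, stored as the columns of V.
    _, V = np.linalg.eigh(X_ref @ X_ref.T)
    # Step 4: eigencoefficients alpha_i = V^T q_i, one column per patch.
    alpha = V.T @ X_ref
    # Step 5: estimate of the squared clean coefficient, clipped at zero.
    abar2 = np.maximum(0.0, np.mean(alpha**2, axis=1) - sigma**2)
    # Wiener-type gain, equal to 1 / (1 + sigma^2 / abar2) when abar2 > 0.
    gain = abar2 / (abar2 + sigma**2)
    beta_ref = gain * alpha[:, ref_index]
    # Step 6: map the filtered coefficients back to the patch domain.
    return V @ beta_ref
```

Coefficients whose average energy across the L patches does not exceed the noise variance are set to zero, which is where most of the noise suppression comes from.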

Repeat steps 1 to 6 for all p x 1 patches from J (in a sliding window fashion).

Since we take overlapping patches, any given pixel will be covered by multiple patches (as many as p different patches).

Reconstruct the final projection by averaging the output values that appear at any pixel.

Note that a separate eigenspace is created for each reference patch. The eigenspace is always created from patches that are similar to the reference patch.

Such a technique is often called spatially varying PCA or non-local PCA.
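The averaging of overlapping patches can be sketched as follows for a 1-D projection, assuming one denoised patch per sliding-window position. The function name `aggregate_patches` and the array layout are hypothetical.

```python
import numpy as np

def aggregate_patches(patches, n):
    """Average overlapping patches back into a length-n signal.

    patches[s] is the (denoised) length-p patch that starts at sample s,
    for s = 0, ..., n - p.
    """
    num, p = patches.shape
    total = np.zeros(n)
    count = np.zeros(n)
    for s in range(num):
        total[s:s + p] += patches[s]  # accumulate the patch values
        count[s:s + p] += 1           # number of patches covering each sample
    return total / count
```

Samples near the two ends are covered by fewer than p patches, which the per-sample count accounts for.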

To compute the L nearest neighbors of $q_{ref}$, restrict your search to a window around $q_{ref}$.

For every patch $q_s$ within the window, compute the sum of squared differences with $q_{ref}$, i.e. compute:

$$\sum_{i=1}^{p} \left(q_{ref}(i) - q_s(i)\right)^2$$

Pick the L patches with the least distance.
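A minimal sketch of this windowed nearest-neighbor search is given below. It is restricted to a single projection vector for brevity, although similar patches may also be taken from other projections. The function name `nearest_patches` and its arguments are hypothetical.

```python
import numpy as np

def nearest_patches(J, start, p, L, half_window):
    """Return the start indices and the p x L matrix of the L patches
    closest (in sum of squared differences) to the patch J[start:start+p]."""
    q_ref = J[start:start + p]
    # Candidate start positions inside the search window.
    lo = max(0, start - half_window)
    hi = min(len(J) - p, start + half_window)
    starts = np.arange(lo, hi + 1)
    patches = np.stack([J[s:s + p] for s in starts])
    # Sum of squared differences between each candidate and q_ref.
    ssd = np.sum((patches - q_ref) ** 2, axis=1)
    keep = np.argsort(ssd, kind="stable")[:L]
    return starts[keep], patches[keep].T
```

The reference patch itself has distance zero, so it is always among the selected patches.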

We are looking for a linear update, which motivates the following equation, where $\beta_i(l)$ denotes the eigen-coefficients of the “true patch”:

$$k^*(l) = \arg\min_{k(l)} E\left[(\beta_i(l) - k(l)\alpha_i(l))^2\right] = \arg\min_{k(l)} E\left[\beta_i^2(l) + k^2(l)\alpha_i^2(l) - 2k(l)\alpha_i(l)\beta_i(l)\right], \quad 1 \le l \le p$$

We will drop the index l and subscript i for better readability:

$$k^* = \arg\min_k E\left[(\beta - k\alpha)^2\right] = \arg\min_k E\left[\beta^2 + k^2\alpha^2 - 2k\alpha\beta\right]$$

Consider:

$$q_i^{noisy} = q_i^{true} + n_i \;\Rightarrow\; V^T q_i^{noisy} = V^T q_i^{true} + V^T n_i \;\Rightarrow\; \alpha_i(l) = \beta_i(l) + \gamma_i(l)$$

$n_i$ represents a vector of pure noise values which degrades the true patch to give the noisy patch. Its projection onto the eigenspace gives the vector $\gamma_i$.

$$k^* = \arg\min_k \; E(\beta^2) + k^2 E(\alpha^2) - 2k E(\alpha\beta) = \arg\min_k \; E(\beta^2) + k^2 E(\alpha^2) - 2k E(\beta^2)$$

as $E(\alpha\beta) = E(\beta^2) + E(\beta\gamma) = E(\beta^2) + E(\beta)E(\gamma) = E(\beta^2)$. The second equality holds as the image and the noise are independent, and the third as the noise is zero mean, since $E(\gamma) = E(V^T n) = V^T E(n) = 0$.

Setting the derivative w.r.t. k to 0, we get

$$k E(\alpha^2) - E(\beta^2) = 0 \;\Rightarrow\; k^* = \frac{E(\beta^2)}{E(\alpha^2)} = \frac{E(\beta^2)}{E(\beta^2) + \sigma^2}$$

How should we estimate $E(\beta^2)$? Recall: $\alpha_i(l) = \beta_i(l) + \gamma_i(l)$.

Since we are dealing with L similar patches, we can assume (approximately) that the l-th eigen-coefficients of those L patches are very similar.

$$E(\alpha_i^2(l)) = E(\beta_i^2(l)) + E(\gamma_i^2(l)) = E(\beta_i^2(l)) + \sigma^2 \;\Rightarrow\; E(\beta_i^2(l)) = E(\alpha_i^2(l)) - \sigma^2 \approx \frac{1}{L}\sum_{i=1}^{L}\alpha_i^2(l) - \sigma^2$$

This may be a negative value, so we set it to be

$$E(\beta^2(l)) \approx \max\left(0, \frac{1}{L}\sum_{i=1}^{L}\alpha_i^2(l) - \sigma^2\right)$$
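The result of this derivation can be checked numerically with a small simulation. The sketch below draws synthetic clean coefficients β and noise coefficients γ (the Gaussian signal model, the sample size, and the variances are arbitrary assumptions made only for this check) and compares the least-squares gain with the closed form E(β²)/(E(β²) + σ²).

```python
import numpy as np

rng = np.random.default_rng(0)
n, signal_var, sigma = 200_000, 4.0, 1.0

beta = rng.normal(0.0, np.sqrt(signal_var), n)  # clean coefficients
gamma = rng.normal(0.0, sigma, n)               # noise coefficients (zero mean)
alpha = beta + gamma                            # observed noisy coefficients

# Empirical minimiser of sum (beta - k * alpha)^2 over k.
k_empirical = np.sum(alpha * beta) / np.sum(alpha**2)
# Closed form from the derivation.
k_theory = signal_var / (signal_var + sigma**2)
# Estimate of E(beta^2) from the noisy coefficients alone, clipped at zero.
beta2_estimate = max(0.0, np.mean(alpha**2) - sigma**2)

print(k_empirical, k_theory, beta2_estimate)
```

The two gains should agree closely, and the clipped estimate should be close to the true signal variance, up to sampling error.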

Image source: Singer and Wu, Two-dimensional tomography from noisy projections taken at unknown random directions


The planes of projection in directions $d_i$ and $d_j$ will intersect in a common line $c_{ij}$.

Hence their corresponding central slices through the Fourier volume will also have a common line.

The common line can be determined by a search in the Fourier space!

Ajit Rajwade

Figure: central Fourier planes (axes u, v) corresponding to directions $d_i$ and $d_j$, with $r_i = F(R_{d_i} f)$ and $r_j = F(R_{d_j} f)$.

Intersection of the planes in the Fourier space leads to a common line. The directions of projection are unknown, but the common line can be found by searching over pairs of directions in the Fourier space and finding the best match.

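Consider the following equation:

$$R_i c_{ij} = b_{ij} = (\cos\varphi_{ij}, \sin\varphi_{ij}, 0)$$

Here $c_{ij}$ is the common line in the global coordinate system, $b_{ij}$ is the common line expressed in the local coordinate system of the plane of projection in direction $d_i$, and $\varphi_{ij}$ is the angle made by $c_{ij}$ with the local X axis. The angles are found by matching radial lines of the two central slices:

$$(\varphi_{ij}, \varphi_{ji}) = \arg\min_{\varphi_1, \varphi_2} \left\| r_i(\varphi_1) - r_j(\varphi_2) \right\|^2$$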
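The search for the best-matching pair of radial lines can be sketched as a brute-force comparison, assuming each central slice has already been resampled on a polar grid so that every row is one radial line. The function name `find_common_line` and the array layout are hypothetical.

```python
import numpy as np

def find_common_line(r_i, r_j):
    """r_i, r_j: (n_angles, n_radial) complex arrays, one radial Fourier
    line per row. Returns the pair of row indices with the best match."""
    # Squared distance between every radial line of r_i and every one of r_j.
    diff = r_i[:, None, :] - r_j[None, :, :]
    cost = np.sum(np.abs(diff) ** 2, axis=2)
    a, b = np.unravel_index(np.argmin(cost), cost.shape)
    return int(a), int(b)
```

The returned indices correspond to the discretized angles of the common line in the two local coordinate systems. With noisy data the minimum is only an estimate, and the angular sampling limits its resolution.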

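We see that, since $R_i c_{ij} = b_{ij} = (\cos\varphi_{ij}, \sin\varphi_{ij}, 0)$,

$$b_{ij} \cdot b_{ik} = R_i c_{ij} \cdot R_i c_{ik} = c_{ij} \cdot c_{ik} = \cos(\varphi_{ij} - \varphi_{ik})$$

Consider the common lines $c_{12}$, $c_{23}$, $c_{31}$. Then we have

$$C = (c_{12} \mid c_{23} \mid c_{31})^T, \quad M = CC^T = \begin{pmatrix} 1 & \cos(\varphi_{21} - \varphi_{23}) & \cos(\varphi_{12} - \varphi_{13}) \\ \cos(\varphi_{21} - \varphi_{23}) & 1 & \cos(\varphi_{31} - \varphi_{32}) \\ \cos(\varphi_{12} - \varphi_{13}) & \cos(\varphi_{31} - \varphi_{32}) & 1 \end{pmatrix}$$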

The matrix C has rank 3 in the case that the common lines are not coplanar.

To obtain C from M, we have

$$M = CC^T = UDU^T \;\Rightarrow\; C = UD^{0.5} \quad \text{(up to a rotation/reflection ambiguity)}$$
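The factorization of M can be sketched with a symmetric eigendecomposition. The function name `recover_common_lines` is hypothetical, and the clipping of eigenvalues is an added safeguard against small negative values caused by noise or round-off, not part of the formula itself.

```python
import numpy as np

def recover_common_lines(M):
    """Return C_hat = U D^{0.5}, so that C_hat C_hat^T = M (for PSD M).

    The rows of C_hat are the common lines, determined only up to a
    global rotation/reflection.
    """
    evals, U = np.linalg.eigh(M)       # M = U diag(evals) U^T
    evals = np.clip(evals, 0.0, None)  # guard against tiny negative values
    return U * np.sqrt(evals)          # scales column k of U by sqrt(evals[k])
```

Since any orthogonal matrix O gives (CO)(CO)^T = CC^T, the recovered rows agree with the true common lines only after a suitable rotation or reflection.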