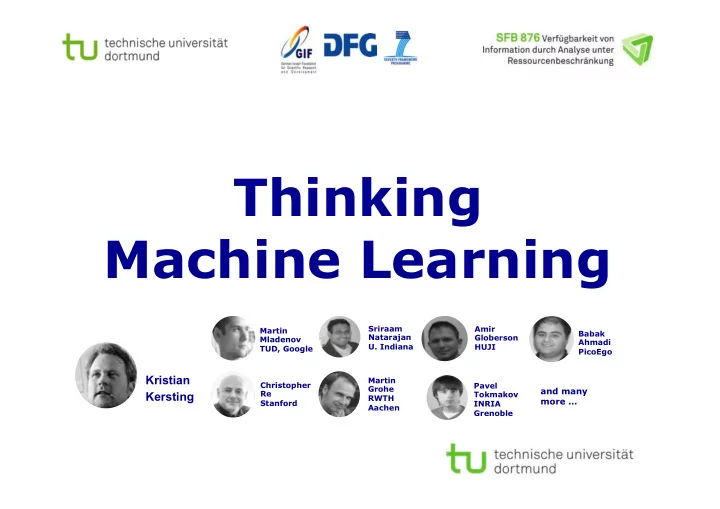

Thinking Machine Learning

Kristian Kersting

Martin Mladenov (TUD, Google), Babak Ahmadi (PicoEgo), Amir Globerson (HUJI), Martin Grohe (RWTH Aachen), Sriraam Natarajan (U. Indiana), Pavel Tokmakov (INRIA Grenoble), Christopher Re (Stanford), and many more …


Statistical Machine Learning (ML) needs a crossover with data and programming abstractions:

High-level languages increase the number of people who can successfully build ML applications and make experts more effective

We need ways to automatically reduce the solver costs

Next-generation data science and next-generation machine learning: high-level languages and automated reduction of computational costs

Kristian Kersting - Thinking Machine Learning


Arms race to deeply understand data


Bottom line: Take your data spreadsheet (objects × features) …

… and apply machine learning: graphical models, Gaussian processes, autoencoders and deep learning, diffusion models, distillation/LUPI (a big model teaches a small model), big data matrix factorization, graph mining, boosting, and many more …

[Lu, Krishna, Bernstein, Fei-Fei “Visual Relationship Detection” CVPR 2016]

Complex data networks abound

[Bratzadeh 2016; Bratzadeh, Molina, Kersting „The Machine Learning Genome“ 2017]


The ML Genome is a dataset, a knowledge base, an ongoing effort to learn and reason about ML concepts

[Figure: graph of algorithms linked by “compared to” relations]

Punchline: Two trends that drive ML

Crossover of ML with data & programming abstractions


[Figure labels: scaling, uncertainty, databases/logic, data mining]

De Raedt, Kersting, Natarajan, Poole, Statistical Relational Artificial Intelligence: Logic, Probability, and Computation. Morgan and Claypool Publishers, ISBN: 9781627058414, 2016.

Developing a good ML algorithm for a given dataset and task costs considerable human effort.

The crossover with data and programming abstractions increases the number of people who can successfully build ML applications and makes the ML expert more effective.

Lake et al., Science 350 (6266), 1332-1338, 2015; Tenenbaum et al., Science 331 (6022), 1279-1285, 2011

And this had a major impact on CogSci


[Figure: system architecture. Components: (un-)structured data sources and external databases; feature extraction (features and rules); machine learning database (data, weighted rules, loops and data structures); declarative learning programming (representation learning, model rules and domain knowledge, DM and ML algorithms); symbolic-numerical solver; inference results (e.g. p = 0.9, 0.6); feedback/AutoDM]

DM and ML algorithms: graph kernels, diffusion processes, random walks, decision trees, frequent itemsets, SVMs, graphical models, topic models, Gaussian processes, autoencoders, matrix and tensor factorization, reinforcement learning, …

[Ré, Sadeghian, Shan, Shin, Wang, Wu, Zhang IEEE Data Eng. Bull.’14; Natarajan, Picado, Khot, Kersting, Ré, Shavlik ILP’14; Natarajan, Soni, Wazalwar, Viswanathan, Kersting Solving Large Scale Learning Tasks’16, Mladenov, Heinrich, Kleinhans, Gonsior, Kersting DeLBP’16, …]

Kristian Kersting - Declarative Data Science Programming

This connects the CS communities

Data Mining/Machine Learning, Databases, AI, Model Checking, Software Engineering, Optimization, Knowledge Representation, Constraint Programming, … !

Jim Gray, Turing Award 1998: “Automated Programming”

Mike Stonebraker, Turing Award 2014: “One size does not fit all”

However, machines that think and learn also complicate/enlarge the underlying computational models, making them potentially very slow


Guy van den Broeck UCLA

LIKO81, CCA 3.0

card(1,d2), card(1,d3), …, card(1,pAce), …, card(52,d2), card(52,d3), …, card(52,pAce)


Faster modelling Faster ML

What are symmetries in approximate probabilistic inference, one of the workhorses of ML?

Lifted Loopy Belief Propagation

Exploiting computational symmetries

[Singla, Domingos AAAI’08; Kersting, Ahmadi, Natarajan UAI’09; Ahmadi, Kersting, Mladenov, Natarajan MLJ’13]

Instead of running loopy belief propagation on the original (big) model, the model is automatically compressed into a small model, on which a modified loopy belief propagation is run.

Compression: Coloring the graph

§ Color nodes according to the evidence you have
  § No evidence, say red
  § State “one”, say brown
  § State “two”, say orange
  § ...
§ Color factors distinctly according to their equivalences. For instance, if f1 and f2 are identical and B appears at the second position within both, color both of them, say, blue

[Singla, Domingos AAAI’08; Kersting, Ahmadi, Natarajan UAI’09; Ahmadi, Kersting, Mladenov, Natarajan MLJ’13]


Compression: Pass the colors around
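The color-passing step can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the function names (`relabel`, `groups`, `color_passing`) and the data layout are assumptions made for this example. Each factor combines its own color with the colors of its arguments in argument order, each node combines its own color with the sorted (factor signature, position) pairs it receives, and the process repeats until the number of colors stops growing. The example uses the chain with nodes A, B, C and identical factors f1(A, B) and f2(C, B).

```python
def relabel(signatures):
    """Map each distinct signature to a small integer color."""
    ids = {}
    return {k: ids.setdefault(sig, len(ids)) for k, sig in signatures.items()}


def groups(colors):
    """Group names by color and return the groups in sorted order."""
    out = {}
    for name, color in colors.items():
        out.setdefault(color, []).append(name)
    return sorted(sorted(g) for g in out.values())


def color_passing(var_colors, factors):
    """factors: dict name -> (initial_color, tuple_of_argument_variables)."""
    node_col = dict(var_colors)
    fac_col = {f: col for f, (col, _) in factors.items()}
    while True:
        # Factors: own color plus the colors of their arguments, in argument order.
        fac_sig = {f: (fac_col[f],) + tuple(node_col[v] for v in args)
                   for f, (_, args) in factors.items()}
        # Nodes: own color plus the sorted (factor signature, position) pairs.
        node_sig = {}
        for v in node_col:
            incoming = sorted((fac_sig[f], pos)
                              for f, (_, args) in factors.items()
                              for pos, u in enumerate(args) if u == v)
            node_sig[v] = (node_col[v], tuple(incoming))
        new_fac, new_node = relabel(fac_sig), relabel(node_sig)
        # Colors only ever split, so an unchanged color count means convergence.
        if (len(set(new_fac.values())) == len(set(fac_col.values())) and
                len(set(new_node.values())) == len(set(node_col.values()))):
            return groups(new_node), groups(new_fac)
        node_col, fac_col = new_node, new_fac


# No evidence: all nodes start red; f1 and f2 are identical, so both start blue.
variables = {"A": "red", "B": "red", "C": "red"}
factors = {"f1": ("blue", ("A", "B")), "f2": ("blue", ("C", "B"))}
node_groups, factor_groups = color_passing(variables, factors)
print(node_groups)    # [['A', 'C'], ['B']]
print(factor_groups)  # [['f1', 'f2']]
```

Nodes and factors that end up with the same color can be merged, which is what makes the compressed model smaller than the original one.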


Result on the example: nodes A and C end up with the same color, B keeps its own, and factors f1 and f2 share one color, so the compressed model consists of the groups {A, C}, {B}, and {f1, f2}.

The compression takes quasi-linear time.

Compression can considerably speed up inference and training

[Singla, Domingos AAAI’08; Kersting, Ahmadi, Natarajan UAI’09; Ahmadi, Kersting, Mladenov, Natarajan MLJ’13]

Parameter training using a lifted stochastic gradient

CORA entity resolution

State-of-the-art

114x faster

[Plot annotations: the higher, the better; the lower, the better]

converges before data has been seen once

Probabilistic inference using lifted (loopy) belief propagation

100x faster

What is going on algebraically? Can we generalize this to other ML approaches?

It turns out that color passing is well-known in graph theory: the Weisfeiler-Lehman (WL) algorithm

WL computes a fractional automorphism, given by doubly stochastic matrices XQ and XP (a relaxed form of automorphism)
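The link between color passing and fractional automorphisms can be checked numerically on a small graph. The sketch below assumes the standard graph-theoretic definition for a single adjacency matrix A: a doubly stochastic matrix X with X A = A X. Here X averages over the color classes found by color refinement. The function names and the 4-node path graph are choices made for this example only.

```python
from fractions import Fraction


def color_refinement(adj):
    """1-dimensional WL: refine node colors by the multiset of neighbor colors."""
    n = len(adj)
    colors = [0] * n
    while True:
        sigs = [(colors[i], tuple(sorted(colors[j] for j in range(n) if adj[i][j])))
                for i in range(n)]
        ids = {}
        new = [ids.setdefault(s, len(ids)) for s in sigs]
        if len(set(new)) == len(set(colors)):  # no class was split: stable
            return new
        colors = new


def partition_matrix(colors):
    """X[i][j] = 1/|class| if i and j share a color, else 0 (doubly stochastic)."""
    n = len(colors)
    size = {c: colors.count(c) for c in set(colors)}
    return [[Fraction(1, size[colors[i]]) if colors[i] == colors[j] else Fraction(0)
             for j in range(n)] for i in range(n)]


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


# Path graph 0 - 1 - 2 - 3: the two end nodes and the two inner nodes are symmetric.
A = [[0, 1, 0, 0],
     [1, 0, 1, 0],
     [0, 1, 0, 1],
     [0, 0, 1, 0]]
colors = color_refinement(A)
X = partition_matrix(colors)
print(colors)                        # [0, 1, 1, 0]
print(matmul(X, A) == matmul(A, X))  # True
```

Exact rational arithmetic (`fractions.Fraction`) is used so that the equality check is not affected by floating-point rounding.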

Instead of looking at ML through the lens of probabilities, let’s approach it using optimization

Lifted Linear Programming

[Mladenov, Ahmadi, Kersting AISTATS’12; Grohe, Kersting, Mladenov, Selman ESA’14; Kersting, Mladenov, Tokmakov AIJ’15]


(1) Reduce the LP by running WL on the LP graph

(2) Run any solver on the (hopefully) smaller LP

Quasi-linear overhead that may result in an exponential speed-up. State of the art.

[Plot: running time, log scale; the lower, the better]
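Step (1) can be illustrated with a small sketch. It assumes an LP of the form maximize cᵀx subject to Ax ≤ b, x ≥ 0, and treats the LP as a bipartite graph: one node per constraint (initially colored by its entry of b), one node per variable (initially colored by its entry of c), and edges weighted by the entries of A. Variables with the same final color are tied to one lifted variable, and one representative constraint is kept per constraint color. The function names and the toy LP are assumptions for illustration, not the authors' code.

```python
def relabel(sigs):
    """Map each distinct signature to a small integer color, in order of appearance."""
    ids = {}
    return [ids.setdefault(s, len(ids)) for s in sigs]


def lp_color_refinement(A, b, c):
    m, n = len(A), len(c)
    row_col, col_col = relabel(list(b)), relabel(list(c))
    while True:
        row_sig = [(row_col[i], tuple(sorted((A[i][j], col_col[j])
                                             for j in range(n) if A[i][j] != 0)))
                   for i in range(m)]
        col_sig = [(col_col[j], tuple(sorted((A[i][j], row_col[i])
                                             for i in range(m) if A[i][j] != 0)))
                   for j in range(n)]
        new_row, new_col = relabel(row_sig), relabel(col_sig)
        if (len(set(new_row)) == len(set(row_col)) and
                len(set(new_col)) == len(set(col_col))):
            return new_row, new_col
        row_col, col_col = new_row, new_col


def lift_lp(A, b, c):
    """Return the reduced (A, b, c) and the variable-to-class assignment."""
    row_col, col_col = lp_color_refinement(A, b, c)
    reps = [row_col.index(q) for q in sorted(set(row_col))]  # one row per class
    classes = range(len(set(col_col)))
    n = len(c)
    A_red = [[sum(A[i][j] for j in range(n) if col_col[j] == p) for p in classes]
             for i in reps]
    b_red = [b[i] for i in reps]
    c_red = [sum(c[j] for j in range(n) if col_col[j] == p) for p in classes]
    return A_red, b_red, c_red, col_col


# maximize x1 + x2 + x3  s.t.  x1 + x2 <= 1,  x2 + x3 <= 1,  x1 + x3 <= 1,  x >= 0
A = [[1, 1, 0],
     [0, 1, 1],
     [1, 0, 1]]
b = [1, 1, 1]
c = [1, 1, 1]
A_red, b_red, c_red, var_class = lift_lp(A, b, c)
print(A_red, b_red, c_red)  # [[2]] [1] [3]
print(var_class)            # [0, 0, 0]
```

In this toy case the three variables and three constraints are fully symmetric, so the reduced LP is "maximize 3y subject to 2y ≤ 1, y ≥ 0". Its solution y = 1/2 maps back to x1 = x2 = x3 = 1/2 with objective 3/2, and any LP solver could be used for step (2).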

MAP: any MAP-LP message-passing approach is liftable

[Figure: marginal polytope, relaxed polytope, objective function, and symmetrized subspace. The simplified MAP LP is the projection of the feasible region onto the span of the fractional automorphism.]

[Plots: results over domain size for MPLP-ground, MPLP-reparam., and TRW-reparam.]

[Mladenov, Globerson, Kersting AISTATS’14, UAI’14; Mladenov, Kersting UAI’15]

Marginals: any concave free energy is liftable

[Plots: (a) Complete Graph MLN, (b) Clique-Cycle MLN, (c) Friends-smokers MLN. Axes include domain size, objective of dcBP (ground vs. reparam.), |V| + |F| (log scale), and running time in seconds (log scale); the lower, the better.]

State of the art, which can also speed up training

AND PAVES THE WAY TO COMPRESSED MACHINE LEARNING IN GENERAL, NOT JUST GRAPHICAL MODELS!


Support vector machines, graphical models, matrix factorization, Gaussian processes, decision trees/boosting, autoencoders/deep learning, and many more …

Relational Data and Program Abstractions

Write down the SVM in “paper form”. The machine compiles it automatically into solver form.

[Code figure annotations: logically parameterized variable (set of ground variables); logically parameterized objective; logically parameterized constraint; data stored in an external DB]

http://www-ai.cs.uni-dortmund.de/weblab/static/RLP/html/

[Kersting, Mladenov, Tokmakov AIJ’15; Mladenov, Heinrich, Kleinhans, Gonsior, Kersting DeLBP’16; Mladenov, Kleinhans, Kersting AAAI’17]

Embedded within Python so that loops and rules can be used
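To make the idea of compiling "paper form" into "solver form" concrete, here is a small plain-Python sketch of grounding. It does not use the RLP library or its API. The relation names (`attribute`, `label`), the variable names (`weight`, `b`, `slack`), and the function `ground_svm` are all hypothetical. The sketch takes one logically parameterized soft-margin constraint, "for every labeled example i: label(i) · (Σ_f weight(f) · attribute(i, f) + b) + slack(i) ≥ 1", and expands it into one sparse row per example.

```python
# Hypothetical relational data, standing in for facts stored in an external DB.
attribute = {("p1", "f1"): 1.0, ("p1", "f2"): 0.0,
             ("p2", "f1"): 0.0, ("p2", "f2"): 2.0}
label = {"p1": 1, "p2": -1}


def ground_svm(attribute, label):
    """Ground the parameterized constraint into sparse rows over ground variables."""
    features = sorted({f for _, f in attribute})
    # Objective: minimize the total slack (regularization term omitted for brevity).
    objective = {("slack", i): 1.0 for i in sorted(label)}
    constraints = []
    for i in sorted(label):  # an ordinary Python loop plays the role of "for every i"
        row = {("weight", f): label[i] * attribute.get((i, f), 0.0) for f in features}
        row[("b",)] = float(label[i])
        row[("slack", i)] = 1.0
        constraints.append((row, ">=", 1.0))
    return objective, constraints


objective, constraints = ground_svm(attribute, label)
for row, sense, rhs in constraints:
    print(row, sense, rhs)
```

Each printed row is a ground linear constraint over the variables `weight(f)`, `b`, and `slack(i)`, which is the form a numerical solver expects.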


Kernels often scare non-experts. Our alternative:

Simply program additional constraints

[Kersting, Mladenov, Tokmakov AIJ’15; Mladenov, Heinrich, Kleinhans, Gonsior, Kersting DeLBP’16; Mladenov, Kleinhans, Kersting AAAI’17]

On par with the state of the art with just four lines of code

[Plot: CORA entity resolution, 3.6% and 6.4%; the higher, the better]

Papers that cite each other should be on the same side of the hyperplane
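One possible way to encode this rule as additional linear constraints is sketched below. The exact constraint used by the authors is not given here, so the encoding is an assumption: for every citation between a labeled paper i and an unlabeled paper j, the unlabeled paper is pushed to the side of the hyperplane indicated by the label of i, with a separate slack variable. The relation names (`cite`, `label`, `attribute`), the `cite_slack` variable, and the function name are hypothetical.

```python
# Hypothetical relational data: two labeled papers (p1, p2), two unlabeled (p3, p4).
attribute = {("p1", "f1"): 1.0, ("p2", "f1"): 0.0,
             ("p3", "f1"): 0.5, ("p4", "f1"): 2.0}
label = {"p1": 1, "p2": -1}
cite = {("p1", "p3"), ("p4", "p2"), ("p1", "p2")}


def ground_cite_constraints(cite, label, attribute):
    """label(i) * (sum_f weight(f) * attribute(j, f) + b) + cite_slack(i, j) >= 1."""
    features = sorted({f for _, f in attribute})
    constraints = []
    for i, j in sorted(cite):
        # Citations are treated as symmetric: try both directions.
        for known, other in ((i, j), (j, i)):
            if known in label and other not in label:
                row = {("weight", f): label[known] * attribute.get((other, f), 0.0)
                       for f in features}
                row[("b",)] = float(label[known])
                row[("cite_slack", known, other)] = 1.0
                constraints.append((row, ">=", 1.0))
    return constraints


cite_constraints = ground_cite_constraints(cite, label, attribute)
print(len(cite_constraints))  # 2
for row, sense, rhs in cite_constraints:
    print(row, sense, rhs)
```

The pair (p1, p2) yields no constraint because both papers are already labeled; the other two citations each contribute one row that can simply be appended to the constraints of the base SVM.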

[Kersting, Mladenov, Tokmakov AIJ’15; Mladenov, Heinrich, Kleinhans, Gonsior, Kersting DeLBP’16; Mladenov, Kleinhans, Kersting AAAI’17]

Not only better predictive performance

[Plot: CORA entity resolution; the lower, the better]

(1) Reduce the QP via WL

(2) Run any solver on the reduced QP

[Mladenov, Kleinhans, Kersting AAAI’17]

Approximately Lifted SVM: Cluster via K-means using sorted distance vectors
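The clustering step can be sketched as follows, under one reading of "sorted distance vectors": each data point is described by the sorted list of its distances to all other points, so that points in symmetric positions get (nearly) identical descriptions, and these descriptions are clustered with k-means. The function names, the deterministic initialization, and the 1-D toy data are assumptions for this example. How the clusters are then used inside the SVM is not shown.

```python
import math


def sorted_distance_vectors(points):
    """For each point, the sorted distances to all other points."""
    return [tuple(sorted(math.dist(p, q) for j, q in enumerate(points) if j != i))
            for i, p in enumerate(points)]


def kmeans(vectors, k, max_iter=100):
    """Plain Lloyd iterations; centers start at the first k distinct vectors."""
    centers = []
    for v in vectors:
        if v not in centers:
            centers.append(v)
        if len(centers) == k:
            break
    labels = None
    for _ in range(max_iter):
        new_labels = [min(range(len(centers)), key=lambda c: math.dist(v, centers[c]))
                      for v in vectors]
        if new_labels == labels:  # assignments stable: converged
            break
        labels = new_labels
        for c in range(len(centers)):
            members = [v for v, l in zip(vectors, labels) if l == c]
            if members:
                centers[c] = tuple(sum(x) / len(members) for x in zip(*members))
    return labels


# Four points on a line, mirror-symmetric around 0.
points = [(-2.0,), (-1.0,), (1.0,), (2.0,)]
vectors = sorted_distance_vectors(points)
clusters = kmeans(vectors, k=2)
print(vectors[0], vectors[3])  # (1.0, 3.0, 4.0) (1.0, 3.0, 4.0)
print(clusters)                # [0, 1, 1, 0]
```

The two outer points and the two inner points land in the same cluster because their sorted distance vectors coincide, which mirrors the reflection symmetry of the data.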

PAC-style generalization bound: the approximately lifted SVM will very likely have a small expected error rate if it has a small empirical loss over the

Not only better predictive performance

[Plots: MNIST image classification, compared with the original SVM; the higher, the better (predictive performance) and the lower, the better (running time)]

37800380x faster

Symmetry-based data augmentation: fractional automorphisms of label-preserving data transformations

Same should work for deep networks

Algebraic Decision Diagrams

Formulae parse trees

Matrix Free Optimization

And there are other “-O2”, “-O3”, … flags, e.g. symbolic-numerical interior point solvers


[Mladenov, Belle, Kersting AAAI´17]

Applies to QPs, but illustrated here on an MDP for a factory agent which must paint two objects and connect them; the actions require a number of tools which are possibly available. Various painting and connection methods are represented, each having an effect on the quality of the job, and each requiring tools. Rewards (required quality) range from 0 to 10, and a discount factor of 0.9 was used.

>4.8x faster


All this opens the general machine learning toolbox for declarative machines:

feature selection, least-squares regression, label propagation, ranking, collaborative filtering, community detection, deep learning, …

[Gens, Domingos NIPS 2014]


§ Learning (rich) representations is a central problem

§ (Fractional) symmetry / group theory provide a natural foundation for learning representations

§ Symmetries = “unimportant” variants of data (graphs, relational structures, …)

§ Let’s move beyond QPs: CSPs, SDPs, Autoencoders, Deep Learners, …

Together with high-level languages

ML applications that are faster to write and easier to understand

ML that incorporates rich domain knowledge and separates queries from underlying code

machines that think across a wide variety of domains and tool types

properties, compression, and compilation