
Huffman Codes


Coding

• Representing characters from some input alphabet Σ using another alphabet σ.

– Example: input characters from the Latin alphabet, output strings of binary digits.

• Fixed-length binary character code: for an input alphabet of size n, represent each character as a binary string of ⌈lg n⌉ bits.

– Example: 8-bit ASCII code.

• A more space-efficient representation can be obtained using variable-length coding, which gives frequent characters shorter codewords.

– Example: Huffman codes.
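As a small illustration of the ⌈lg n⌉ bound, the sketch below computes the codeword width for an alphabet of size n and assigns fixed-length codewords in alphabet order. The function names `fixed_code_width` and `fixed_length_code` are hypothetical, chosen only for this example, and the assignment order is an assumption.

```python
def fixed_code_width(n: int) -> int:
    """Return ceil(lg n), the bits per character of a fixed-length code.

    Uses integer arithmetic: (n - 1).bit_length() equals ceil(log2(n)) for n >= 1.
    """
    if n < 2:
        raise ValueError("this sketch assumes an alphabet of at least 2 characters")
    return (n - 1).bit_length()


def fixed_length_code(alphabet: str) -> dict[str, str]:
    """Assign the i-th character the binary representation of i, zero-padded."""
    width = fixed_code_width(len(alphabet))
    return {ch: format(i, f"0{width}b") for i, ch in enumerate(alphabet)}


if __name__ == "__main__":
    print(fixed_code_width(6))          # width for a 6-character alphabet
    print(fixed_length_code("abcdef"))  # one codeword per character
```

For a 6-character alphabet this needs 3 bits per character, and a 256-character alphabet needs 8, which matches the 8-bit ASCII example.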


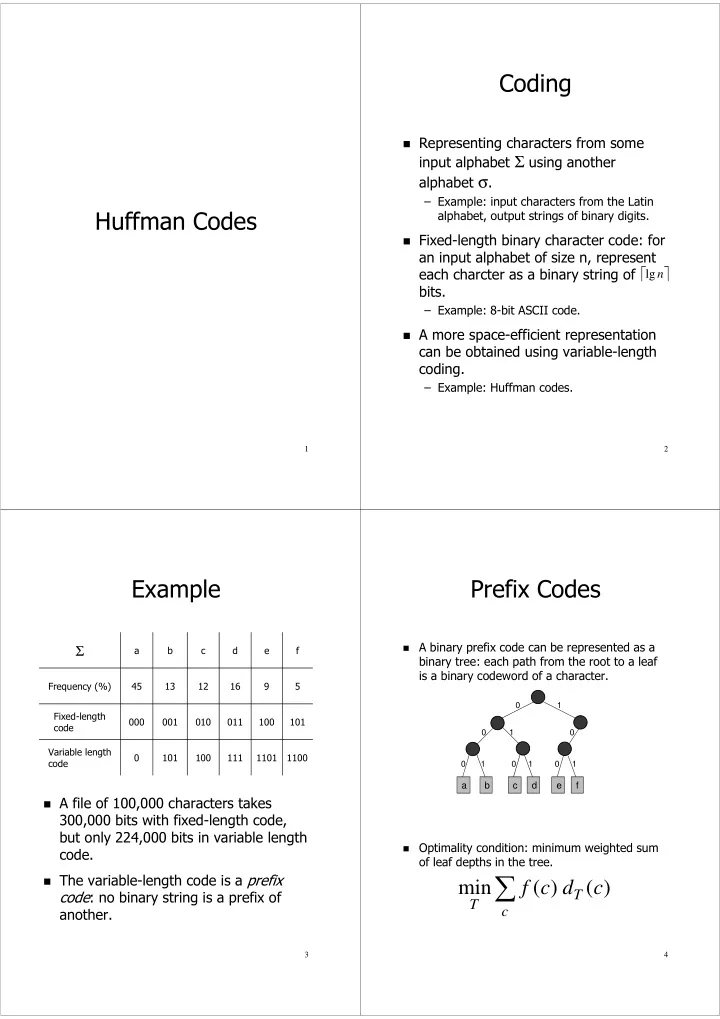

Example

• A file of 100,000 characters takes 300,000 bits with the fixed-length code, but only 224,000 bits with the variable-length code.

• The variable-length code is a prefix code: no codeword is a prefix of another codeword.

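| Character (Σ) | a | b | c | d | e | f |
|---|---|---|---|---|---|---|
| Frequency (%) | 45 | 13 | 12 | 16 | 9 | 5 |
| Fixed-length code | 000 | 001 | 010 | 011 | 100 | 101 |
| Variable-length code | 0 | 101 | 100 | 111 | 1101 | 1100 |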
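The totals in this example can be checked with a short sketch. It assumes the frequencies and codewords of the example, with a's one-bit codeword taken as 0 (the value consistent with the 224,000-bit total). The helper names `encoded_bits` and `is_prefix_free` are hypothetical.

```python
# Frequencies in percent and the two codes from the example.
FREQ_PERCENT = {"a": 45, "b": 13, "c": 12, "d": 16, "e": 9, "f": 5}
FIXED = {"a": "000", "b": "001", "c": "010", "d": "011", "e": "100", "f": "101"}
VARIABLE = {"a": "0", "b": "101", "c": "100", "d": "111", "e": "1101", "f": "1100"}


def encoded_bits(code: dict[str, str], freq_percent: dict[str, int], n_chars: int) -> int:
    """Total bits for a file of n_chars characters with the given frequencies."""
    bits_per_100 = sum(freq_percent[ch] * len(word) for ch, word in code.items())
    return bits_per_100 * n_chars // 100


def is_prefix_free(code: dict[str, str]) -> bool:
    """True if no codeword is a prefix of another.

    After sorting, a codeword that is a prefix of any other codeword is also
    a prefix of its immediate successor, so checking neighbours is enough.
    """
    words = sorted(code.values())
    return all(not nxt.startswith(cur) for cur, nxt in zip(words, words[1:]))


if __name__ == "__main__":
    print(encoded_bits(FIXED, FREQ_PERCENT, 100_000))
    print(encoded_bits(VARIABLE, FREQ_PERCENT, 100_000))
    print(is_prefix_free(VARIABLE))
```

The variable-length total works out as (45·1 + 13·3 + 12·3 + 16·3 + 9·4 + 5·4) · 1,000 = 224,000 bits.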


Prefix Codes

• A binary prefix code can be represented as a binary tree: each path from the root to a leaf is the binary codeword of a character.

• Optimality condition: minimum weighted sum of leaf depths in the tree, where each leaf is weighted by the frequency of its character.

[Figure: binary code tree with leaves a, b, c, d, f, e and edges labeled with bits]
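The tree view can be sketched as follows, assuming the variable-length code of the earlier example (with a encoded as 0). The tree is stored as nested dictionaries keyed by the bits "0" and "1", with characters at the leaves. This representation and the names `build_tree`, `decode`, and `weighted_depth` are illustrative choices, not part of the slides.

```python
FREQ_PERCENT = {"a": 45, "b": 13, "c": 12, "d": 16, "e": 9, "f": 5}
VARIABLE = {"a": "0", "b": "101", "c": "100", "d": "111", "e": "1101", "f": "1100"}


def build_tree(code: dict[str, str]) -> dict:
    """Build a binary tree from a prefix code (assumed prefix-free)."""
    root: dict = {}
    for ch, word in code.items():
        node = root
        for bit in word[:-1]:
            node = node.setdefault(bit, {})  # descend, creating internal nodes
        node[word[-1]] = ch                  # the last bit leads to the leaf
    return root


def decode(bits: str, root: dict) -> str:
    """Walk from the root; emit a character at each leaf, then restart."""
    out, node = [], root
    for bit in bits:
        node = node[bit]
        if isinstance(node, str):            # reached a leaf
            out.append(node)
            node = root
    if node is not root:
        raise ValueError("bit string ends in the middle of a codeword")
    return "".join(out)


def weighted_depth(node, freq: dict[str, int], depth: int = 0) -> int:
    """Sum of freq[c] * depth(c) over all leaves c below this node."""
    if isinstance(node, str):
        return freq[node] * depth
    return sum(weighted_depth(child, freq, depth + 1) for child in node.values())


if __name__ == "__main__":
    tree = build_tree(VARIABLE)
    print(decode("0101100", tree))
    print(weighted_depth(tree, FREQ_PERCENT))
```

Because no codeword is a prefix of another, decoding never needs to look ahead: the first leaf reached is always the right character. The weighted depth is the quantity the optimality condition minimizes. With percentage frequencies it is 224 for this tree, that is, 2.24 bits per character on average.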