SLIDE 32 The phenomena

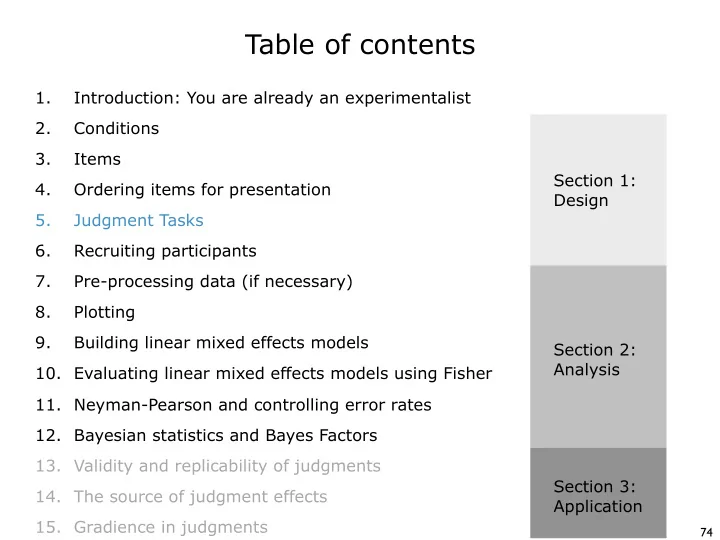

Sprouse et al. 2013 tested 150 phenomena that were randomly sampled from Linguistic Inquiry between 2001 and 2010. Each phenomenon had two conditions: a target condition that was marked unacceptable in the journal article, and a control condition that was marked acceptable. Sprouse et al. 2017 chose 47 of those phenomena to use as critical test cases for comparing power. We chose the 47 to span the lower half of the range of effect sizes. We chose the lower range because that is where the action will be: large effects tend to be detected under almost any design, so differences in power show up for the smaller effects!

[Figure: two histograms, one per panel ("LI sample from Sprouse et al. 2013" and "current sample"). x-axis: standardized effect sizes (Cohen's d), with ticks at 0.2, 0.5, 0.8, and then 1 to 4.5 in steps of 0.5; y-axis: count of phenomena (ticks at 5, 10, 15, 20). Effect sizes are labeled small, medium, and large.]

These are standardized effect sizes called Cohen’s d. By standardizing the effect sizes, you can compare across fields!
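
As a rough illustration of what standardizing means, here is a minimal sketch of one common way to compute Cohen's d for a two-condition phenomenon: the difference between the control and target means, divided by the pooled standard deviation. The function names (`cohens_d`, `size_label`) and the ratings are made up for illustration, and the exact formula used in Sprouse et al. may differ (for example, it may be computed over z-scored ratings). The labels assume Cohen's conventional cutoffs of 0.2, 0.5, and 0.8.

```python
from math import sqrt
from statistics import mean, variance


def cohens_d(control, target):
    """Difference in means divided by the pooled standard deviation.

    Assumes two independent samples; this is one common definition of
    Cohen's d, not necessarily the one used in the original studies.
    """
    n1, n2 = len(control), len(target)
    # Pooled variance: sample variances weighted by their degrees of freedom.
    pooled_var = ((n1 - 1) * variance(control) + (n2 - 1) * variance(target)) / (n1 + n2 - 2)
    return (mean(control) - mean(target)) / sqrt(pooled_var)


def size_label(d):
    """Label |d| using Cohen's conventional cutoffs (0.2, 0.5, 0.8)."""
    d = abs(d)
    if d >= 0.8:
        return "large"
    if d >= 0.5:
        return "medium"
    if d >= 0.2:
        return "small"
    return "below small"


# Hypothetical acceptability ratings, invented for this example.
control_ratings = [1, 2, 3, 4, 5]  # condition marked acceptable
target_ratings = [0, 1, 2, 3, 4]   # condition marked unacceptable

d = cohens_d(control_ratings, target_ratings)
print(round(d, 3), size_label(d))
```

Because d is expressed in standard deviation units rather than in the units of the rating scale, the same number can be read the same way whether the underlying task used a 7-point scale, magnitude estimation, or a measure from another field.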