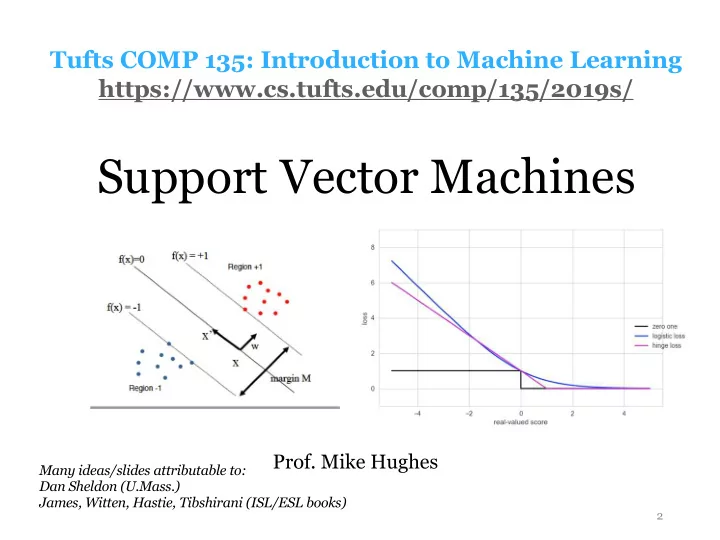

Support Vector Machines

Tufts COMP 135: Introduction to Machine Learning https://www.cs.tufts.edu/comp/135/2019s/

Many ideas/slides attributable to: Dan Sheldon (U.Mass.) James, Witten, Hastie, Tibshirani (ISL/ESL books)

- Prof. Mike Hughes

Mike Hughes - Tufts COMP 135 - Spring 2019

Figure: supervised learning overview. Inputs: data, label pairs $\{x_n, y_n\}_{n=1}^{N}$ (data x, label y), a task, and a performance measure. Stages: training, prediction, evaluation.

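Kernelized prediction: a weighted sum over the N training examples, with one weight $\alpha_n$ per example:

$$\hat{y}(x) = \sum_{n=1}^{N} \alpha_n \, k(x_n, x)$$

All needed operations can be done with access only to the kernel; no feature vectors are created in memory.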
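A minimal sketch of what "only access to the kernel" can look like in practice, assuming the prediction is a weighted sum of kernel evaluations against the N training examples. The RBF kernel, the function names, and the weights `alpha` are illustrative assumptions, not taken from the slides.

```python
import math

def rbf_kernel(a, b, gamma=1.0):
    """Squared-exponential (RBF) kernel between two equal-length vectors (assumed kernel choice)."""
    sq_dist = sum((ai - bi) ** 2 for ai, bi in zip(a, b))
    return math.exp(-gamma * sq_dist)

def kernel_predict(x_new, train_x, alpha, kernel=rbf_kernel):
    """Score x_new as sum_n alpha[n] * k(train_x[n], x_new).

    Only kernel evaluations are used; no explicit feature vector is built.
    """
    return sum(a_n * kernel(x_n, x_new) for a_n, x_n in zip(alpha, train_x))

# Hypothetical training inputs and per-example weights, for illustration only.
train_x = [[0.0, 0.0], [1.0, 1.0], [2.0, 0.0]]
alpha = [0.5, -1.0, 0.25]

score = kernel_predict([0.0, 0.0], train_x, alpha)
print(score)
```

Swapping in a different kernel only requires passing another function with the same two-vector signature; the prediction code itself does not change.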

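Linear decision rule:

$$\hat{y} = \begin{cases} +1 & \text{if } w^T x + b \ge 0 \\ -1 & \text{if } w^T x + b < 0 \end{cases}$$

Figure: the boundary $w^T x + b = 0$ separates the region $w^T x + b < 0$ (y negative) from the region $w^T x + b > 0$ (y positive).

Margin: distance to the boundary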
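The decision rule and the distance to the boundary can be sketched in a few lines. This is an illustration under assumptions: the function names and the example values of `w`, `b`, and the points are hypothetical, and the distance uses the standard point-to-hyperplane formula |w·x + b| / ||w||.

```python
import math

def decision_value(w, x, b):
    """Signed score w . x + b for one example."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b

def predict_label(w, x, b):
    """Return +1 when the score is >= 0, otherwise -1."""
    return 1 if decision_value(w, x, b) >= 0 else -1

def distance_to_boundary(w, x, b):
    """Geometric distance from x to the hyperplane w . x + b = 0."""
    norm_w = math.sqrt(sum(wi * wi for wi in w))
    return abs(decision_value(w, x, b)) / norm_w

# Hypothetical weights and points, for illustration only.
w = [3.0, 4.0]
b = -5.0
x_pos = [3.0, 4.0]   # score = 9 + 16 - 5 = 20
x_neg = [0.0, 0.0]   # score = -5

print(predict_label(w, x_pos, b), distance_to_boundary(w, x_pos, b))
print(predict_label(w, x_neg, b), distance_to_boundary(w, x_neg, b))
```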

This is a constrained quadratic optimization problem. There are standard …

Minimizing this objective is equivalent to maximizing the margin width in feature space.

The constraints apply for each n = 1, 2, …, N.
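The objective and constraints themselves are not reproduced in the text above. Assuming the slide refers to the standard hard-margin SVM (an assumption; the symbols $w$, $b$, $x_n$, $y_n$ follow the decision rule earlier in the deck), the problem can be written as:

```latex
\min_{w, b} \; \frac{1}{2} w^T w
\quad \text{subject to} \quad
y_n \left( w^T x_n + b \right) \ge 1
\quad \text{for each } n = 1, 2, \ldots, N
```

Under this scaling the margin width is $2 / \lVert w \rVert$, so a smaller $w^T w$ corresponds to a wider margin.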

Slack at example i: distance on the wrong side of the margin.
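A minimal sketch of how the slack for one example could be computed, assuming the usual soft-margin definition: slack is zero when $y_i (w^T x_i + b) \ge 1$ and otherwise equals the shortfall. The function name and the example values are hypothetical.

```python
def slack(w, b, x, y):
    """Slack for one example: 0 if y * (w . x + b) >= 1, else the shortfall below 1."""
    score = sum(wi * xi for wi, xi in zip(w, x)) + b
    return max(0.0, 1.0 - y * score)

# Hypothetical 2-D weights; ||w|| = 1, so slack equals geometric distance here.
w = [1.0, 0.0]
b = 0.0

outside_margin = slack(w, b, [2.0, 0.0], +1)    # correct side, beyond the margin
inside_margin = slack(w, b, [0.5, 0.0], +1)     # correct side, but inside the margin
misclassified = slack(w, b, [-1.0, 0.0], +1)    # wrong side of the boundary

print(outside_margin, inside_margin, misclassified)
```

When $\lVert w \rVert \ne 1$, dividing the slack by $\lVert w \rVert$ converts it to a geometric distance.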

Assumes the current example has positive label y = +1.

Tradeoff parameter C controls model complexity.

- Smaller C: simpler model; encourages a large margin even if we make lots of mistakes.
- Bigger C: avoid mistakes.
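The effect of C can be illustrated by scoring two candidate boundaries under a soft-margin objective. This sketch assumes the common form $\frac{1}{2} w^T w + C \sum_n \xi_n$, which may differ in scaling from the one on the slides; the 1-D dataset and the two candidate weight vectors are hypothetical.

```python
def soft_margin_objective(w, b, xs, ys, C):
    """Assumed objective: 0.5 * ||w||^2 + C * (sum of slacks over all examples)."""
    penalty = 0.5 * sum(wi * wi for wi in w)
    total_slack = 0.0
    for x, y in zip(xs, ys):
        score = sum(wi * xi for wi, xi in zip(w, x)) + b
        total_slack += max(0.0, 1.0 - y * score)
    return penalty + C * total_slack

# Hypothetical 1-D data: two points far from the boundary, two close to it.
xs = [[-2.0], [2.0], [0.25], [-0.25]]
ys = [-1, +1, +1, -1]

wide = ([0.5], 0.0)     # small ||w||: wide margin, two margin violations
narrow = ([4.0], 0.0)   # large ||w||: narrow margin, no violations

for C in (0.1, 100.0):
    obj_wide = soft_margin_objective(wide[0], wide[1], xs, ys, C)
    obj_narrow = soft_margin_objective(narrow[0], narrow[1], xs, ys, C)
    better = "wide" if obj_wide < obj_narrow else "narrow"
    print(C, obj_wide, obj_narrow, better)
```

With the small C the wide-margin candidate has the lower objective despite its violations; with the large C the violation-free, narrow-margin candidate wins.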

Efficient training algorithms use modern quadratic programming to solve the dual of the soft-margin SVM optimization problem.
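For reference, the dual is not written out in the text above. Assuming the standard soft-margin formulation with tradeoff parameter $C$ and kernel $k$ (an assumption about which form the slides use), it can be written with one variable $\alpha_n$ per training example:

```latex
\max_{\alpha} \; \sum_{n=1}^{N} \alpha_n
  - \frac{1}{2} \sum_{n=1}^{N} \sum_{m=1}^{N}
    \alpha_n \alpha_m \, y_n y_m \, k(x_n, x_m)
\quad \text{subject to} \quad
0 \le \alpha_n \le C, \qquad \sum_{n=1}^{N} \alpha_n y_n = 0
```

The objective is quadratic in $\alpha$ and the constraints are linear, which is why quadratic programming solvers apply. The training inputs enter only through the kernel evaluations $k(x_n, x_m)$.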
