
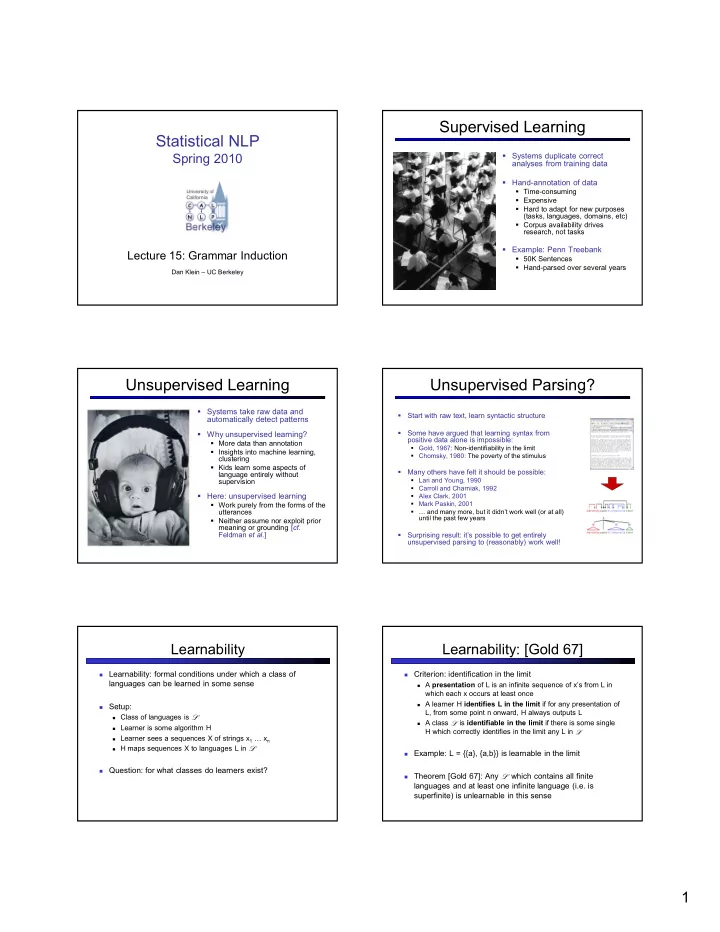

Statistical NLP

Spring 2010

Lecture 15: Grammar Induction

Dan Klein – UC Berkeley

Supervised Learning

- Systems duplicate correct analyses from training data
- Hand-annotation of data
  - Time-consuming
  - Expensive
  - Hard to adapt for new purposes (tasks, languages, domains, etc.)
  - Corpus availability drives research, not tasks
- Example: Penn Treebank
  - 50K sentences
  - Hand-parsed over several years

Unsupervised Learning

- Systems take raw data and automatically detect patterns
- Why unsupervised learning?
  - More data than annotation
  - Insights into machine learning, clustering
  - Kids learn some aspects of language entirely without supervision
- Here: unsupervised learning
  - Work purely from the forms of the utterances
  - Neither assume nor exploit prior meaning or grounding [. Feldman.]

Unsupervised Parsing?

- Start with raw text, learn syntactic structure
- Some have argued that learning syntax from positive data alone is impossible:
  - Gold, 1967: Non-identifiability in the limit
  - Chomsky, 1980: The poverty of the stimulus
- Many others have felt it should be possible:
  - Lari and Young, 1990
  - Carroll and Charniak, 1992
  - Alex Clark, 2001
  - Mark Paskin, 2001
  - … and many more, but it didn’t work well (or at all) until the past few years
- Surprising result: it’s possible to get entirely unsupervised parsing to (reasonably) work well!

Learnability

- Learnability: formal conditions under which a class of languages can be learned in some sense
- Setup:
  - Class of languages is ℒ
  - Learner is some algorithm H
  - Learner sees a sequence X of strings x1 … xn
  - H maps sequences X to languages L in ℒ
- Question: for what classes do learners exist?

Learnability: [Gold67]

- Criterion: identification in the limit
  - A presentation of L is an infinite sequence of x’s from L in which each x occurs at least once
  - A learner H identifies L in the limit if, for any presentation of L, from some point n onward, H always outputs L
  - A class ℒ is learnable in the limit if there is some single H which correctly identifies in the limit any L in ℒ
- Example: ℒ = {{a}, {a,b}} is learnable in the limit
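To make the example concrete, here is a minimal sketch of one learner that identifies both languages of the class {{a}, {a,b}} in the limit. The names `learner` and `guesses` are hypothetical, and finite prefixes stand in for infinite presentations; this is an illustration, not code from the lecture.

```python
from typing import FrozenSet, Iterable, Iterator, List

Language = FrozenSet[str]

L_A: Language = frozenset({"a"})
L_AB: Language = frozenset({"a", "b"})


def learner(prefix: Iterable[str]) -> Language:
    """Hypothetical learner H: guess {a} until a 'b' has been observed."""
    return L_AB if "b" in set(prefix) else L_A


def guesses(presentation: Iterable[str]) -> Iterator[Language]:
    """Yield H's guess after each successive string of the presentation."""
    seen: List[str] = []
    for x in presentation:
        seen.append(x)
        yield learner(seen)


# Finite prefixes of presentations (assumed data, for illustration only).
out_a = list(guesses(["a", "a", "a", "a"]))    # prefix of a presentation of {a}
out_ab = list(guesses(["a", "a", "b", "a"]))   # prefix of a presentation of {a, b}
```

On any presentation of {a}, no b ever appears, so H outputs {a} from the start. On any presentation of {a,b}, b must occur at some position n, and from that point onward H always outputs {a,b}. A single H therefore handles every language in the class.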

- Theorem [Gold67]: Any class ℒ which contains all finite languages and at least one infinite language (i.e. is superfinite) is unlearnable in this sense
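The sketch below gives some intuition for the theorem; it is not Gold's proof. It uses a hypothetical learner, `seen_so_far_learner`, that always guesses exactly the set of strings observed so far. This learner identifies every finite language in the limit, but on a presentation of an infinite language its guess keeps changing, so it never converges. The theorem states that no cleverer learner can repair this for a superfinite class. The example strings and the helper `changes` are assumptions made for illustration.

```python
from itertools import count, islice
from typing import FrozenSet, Iterable, Iterator, List


def seen_so_far_learner(presentation: Iterable[str]) -> Iterator[FrozenSet[str]]:
    """Hypothetical learner: after each string, guess the set of strings seen so far."""
    seen = set()
    for x in presentation:
        seen.add(x)
        yield frozenset(seen)


def changes(guess_list: List[FrozenSet[str]]) -> int:
    """Count how many times consecutive guesses differ."""
    return sum(1 for prev, cur in zip(guess_list, guess_list[1:]) if prev != cur)


# Prefix of a presentation of the finite language {a, aa, aaa}.
finite_prefix = ["a", "aa", "a", "aaa", "aa", "a", "aaa", "a"]
finite_guesses = list(seen_so_far_learner(finite_prefix))

# Presentation of the infinite language {a, aa, aaa, ...}: every string is new.
infinite_presentation = ("a" * n for n in count(1))
infinite_guesses = list(islice(seen_so_far_learner(infinite_presentation), 8))
```

On the finite language the guess stops changing once every string has appeared. On the infinite language, this particular presentation forces a new guess at every step, so there is no point n after which the output is fixed.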