
IT2EC 2020 Extended Abstract: Presentation/Panel

Stronger Threads: The Critical Requirement for Enabling the Competency-Based Workforce

Brian Moon¹

¹Chief Technology Officer, Perigean Technologies, Fredericksburg, VA, USA

Abstract: Essential advancements toward enabling the competency-based workforce (CBW) have been made in the past few years. As interest grows and new endeavours are pursued, however, it is becoming increasingly clear that many of the key advancements have been made without sufficient connection to one another. To realize the full potential of the CBW, more focus must be placed on stitching together the essential elements that enable it. This presentation offers insights from the point of view of a practitioner who has been working in the seams to enable competency-based workforces in military and commercial contexts. It also offers suggestions about the requirements for the threads needed to stitch these elements more tightly together.

1 The Essential Elements of a Competency-Based Workforce

The competency-based workforce (CBW) movement is well underway. Industries have come to realize that industrial-age models of workforce development are no longer adequate for survival in information-rich, cognitively complex domains. Previous approaches, which relied on hiring by qualifications, one-size-fits-all training, and periodic performance review, are being replaced by techniques and tools that enable more exact definition of performance requirements, more adaptive and higher-fidelity learning experiences, and deeper assessment of performance across all the venues in which it occurs. The proliferation of these tools and techniques is steadily extending coverage across the essential elements of the CBW, which are:

- Explicit delineation of all tasks and the associated competencies required for efficient and effective performance of a job (“Delineation”)

- Experiences that enable the development of the competencies (“Development”)

- Accurate

and efficient assessment

- f

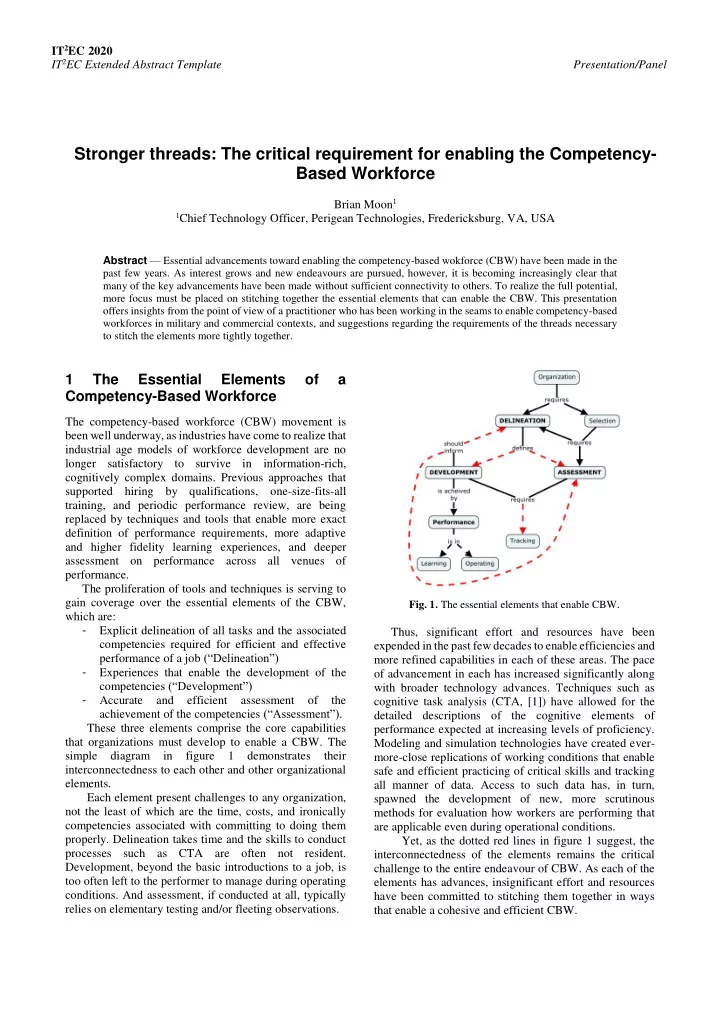

the achievement of the competencies (“Assessment”). These three elements comprise the core capabilities that organizations must develop to enable a CBW. The simple diagram in figure 1 demonstrates their interconnectedness to each other and other organizational elements. Each element present challenges to any organization, not the least of which are the time, costs, and ironically competencies associated with committing to doing them

- properly. Delineation takes time and the skills to conduct

processes such as CTA are often not resident. Development, beyond the basic introductions to a job, is too often left to the performer to manage during operating

- conditions. And assessment, if conducted at all, typically

relies on elementary testing and/or fleeting observations.

- Fig. 1. The essential elements that enable CBW.

Accordingly, significant effort and resources have been expended in the past few decades to improve efficiency and refine capabilities in each of these areas. The pace of advancement in each has increased significantly along