
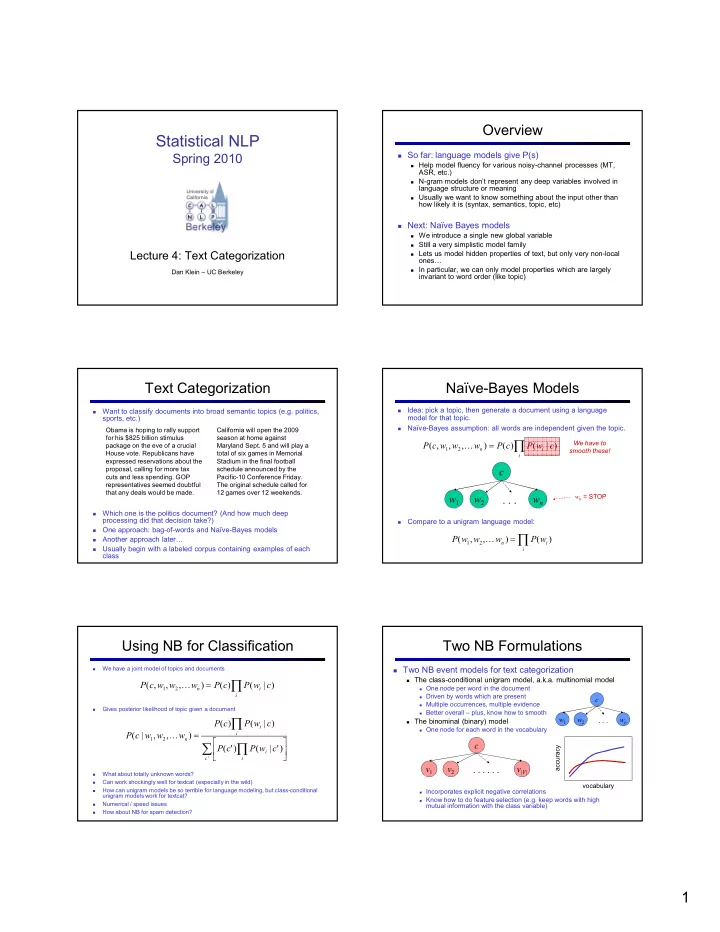

Statistical NLP

Spring 2010

Lecture 4: Text Categorization

Dan Klein – UC Berkeley

Overview

- So far: language models give P(s)
  - Help model fluency for various noisy-channel processes (MT, ASR, etc.)
  - N-gram models don't represent any deep variables involved in language structure or meaning
  - Usually we want to know something about the input other than how likely it is (syntax, semantics, topic, etc.)
- Next: Naïve-Bayes models
  - We introduce a single new global variable
  - Still a very simplistic model family
  - Lets us model hidden properties of text, but only very non-local ones…
  - In particular, we can only model properties which are largely invariant to word order (like topic)

Text Categorization

- Want to classify documents into broad semantic topics (e.g. politics, sports, etc.)
- Which one is the politics document? (And how much deep processing did that decision take?)
- One approach: bag-of-words and Naïve-Bayes models
- Another approach later…
- Usually begin with a labeled corpus containing examples of each class

Obama is hoping to rally support for his $825 billion stimulus package on the eve of a crucial House vote. Republicans have expressed reservations about the proposal, calling for more tax cuts and less spending. GOP representatives seemed doubtful that any deals would be made.

California will open the 2009 season at home against Maryland Sept. 5 and will play a total of six games in Memorial Stadium in the final football schedule announced by the Pacific-10 Conference Friday. The original schedule called for 12 games over 12 weekends.
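A bag-of-words representation can be sketched as below. This is only an illustration: the function name `bag_of_words` and the simple regex tokenizer are assumptions, not something the lecture specifies. The two inputs are sentences taken from the example documents above.

```python
import re
from collections import Counter

def bag_of_words(text):
    """Lowercase the text, pull out word-like tokens, and count them.

    Word order is discarded; only the counts survive.
    """
    tokens = re.findall(r"[a-z0-9$']+", text.lower())
    return Counter(tokens)

politics = ("Republicans have expressed reservations about the proposal, "
            "calling for more tax cuts and less spending.")
sports = ("California will open the 2009 season at home against Maryland "
          "Sept. 5 and will play a total of six games.")

bow_politics = bag_of_words(politics)
bow_sports = bag_of_words(sports)
```

Because only counts are kept, any reordering of the same words gives the same representation, which is why this view suits order-invariant properties such as topic.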

Naïve-Bayes Models

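- Idea: pick a topic, then generate a document using a language model for that topic.
- Naïve-Bayes assumption: all words are independent given the topic.

$$P(c, w_1, \ldots, w_n) = P(c) \prod_{i} P(w_i \mid c)$$

- Compare to a unigram language model:

$$P(w_1, \ldots, w_n) = \prod_{i} P(w_i)$$

In both models the final word is STOP.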
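As a minimal sketch of the generative story, the joint score of a topic and a document is the topic prior times one class-conditional factor per word, with STOP as the last word. The probabilities below are hypothetical toy values chosen for illustration, and `joint_log_prob` is an assumed helper name.

```python
import math

# Hypothetical toy parameters (not from the lecture).
prior = {"politics": 0.5, "sports": 0.5}
word_given_topic = {
    "politics": {"vote": 0.5, "game": 0.1, "STOP": 0.4},
    "sports": {"vote": 0.1, "game": 0.5, "STOP": 0.4},
}

def joint_log_prob(topic, words):
    """log P(c, w_1..w_n) = log P(c) + sum_i log P(w_i | c).

    STOP is appended so that it is the final word of the sequence.
    """
    logp = math.log(prior[topic])
    for w in list(words) + ["STOP"]:
        logp += math.log(word_given_topic[topic][w])
    return logp

doc = ["vote", "vote", "game"]
lp_politics = joint_log_prob("politics", doc)
lp_sports = joint_log_prob("sports", doc)
```

With these toy numbers the politics topic scores higher for `doc`, since "vote" is more probable under it. Dropping the topic and using a single word distribution would give the plain unigram language model.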

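Using NB for Classification

- We have a joint model of topics and documents

$$P(c, w_1, \ldots, w_n) = P(c) \prod_{i} P(w_i \mid c)$$

- Gives posterior likelihood of topic given a document

$$P(c \mid w_1, \ldots, w_n) = \frac{P(c) \prod_{i} P(w_i \mid c)}{\sum_{c'} P(c') \prod_{i} P(w_i \mid c')}$$

- What about totally unknown words?
- Can work shockingly well for textcat (especially in the wild)
- How can unigram models be so terrible for language modeling, but class-conditional unigram models work for textcat?
- Numerical / speed issues
- How about NB for spam detection?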
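The sketch below shows one way to turn the joint model into a classifier while addressing two of the points above. It assumes add-alpha smoothing with a reserved `<UNK>` entry for unknown words, and it works in log space with a log-sum-exp normalization for the numerical issue. Neither choice is stated in the lecture, and `train_nb`, `posterior`, and the tiny training set are hypothetical.

```python
import math
from collections import Counter, defaultdict

def train_nb(labeled_docs, alpha=1.0):
    """Estimate log P(c) and add-alpha smoothed log P(w | c).

    labeled_docs is a list of (label, tokens) pairs.
    """
    class_counts = Counter()
    word_counts = defaultdict(Counter)
    vocab = set()
    for label, tokens in labeled_docs:
        class_counts[label] += 1
        word_counts[label].update(tokens)
        vocab.update(tokens)
    vocab.add("<UNK>")  # assumption: one shared slot for unseen words
    total_docs = sum(class_counts.values())
    log_prior = {c: math.log(n / total_docs) for c, n in class_counts.items()}
    log_lik = {}
    for c in class_counts:
        denom = sum(word_counts[c].values()) + alpha * len(vocab)
        log_lik[c] = {w: math.log((word_counts[c][w] + alpha) / denom)
                      for w in vocab}
    return log_prior, log_lik

def posterior(tokens, log_prior, log_lik):
    """Return P(c | tokens) for every class, computed in log space."""
    scores = {}
    for c in log_prior:
        s = log_prior[c]
        for w in tokens:
            s += log_lik[c].get(w, log_lik[c]["<UNK>"])
        scores[c] = s
    # log-sum-exp: subtract the max before exponentiating to avoid underflow
    m = max(scores.values())
    log_z = m + math.log(sum(math.exp(s - m) for s in scores.values()))
    return {c: math.exp(s - log_z) for c, s in scores.items()}

train = [
    ("politics", ["house", "vote", "tax", "cuts"]),
    ("politics", ["stimulus", "vote", "spending"]),
    ("sports", ["football", "season", "games"]),
    ("sports", ["games", "stadium", "schedule"]),
]
log_prior, log_lik = train_nb(train)
post = posterior(["vote", "tax", "zzz"], log_prior, log_lik)
long_post = posterior(["vote"] * 5000, log_prior, log_lik)
```

Multiplying thousands of raw probabilities would underflow to zero for every class; summing logs and normalizing with the max subtracted keeps the posterior well defined even for the 5000-token document.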

Two NB Formulations

Two NB event models for text categorization

- The class-conditional unigram model, a.k.a. multinomial model
  - One node per word in the document
  - Driven by words which are present
  - Multiple occurrences, multiple evidence
  - Better overall, plus we know how to smooth it
- The binomial (binary) model
  - One node for each word in the vocabulary
  - Incorporates explicit negative correlations
  - Know how to do feature selection (e.g. keep words with high mutual information with the class variable)
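The difference between the two event models can be sketched for a single class as follows. The parameter values and function names are hypothetical; the point is only which factors enter each likelihood.

```python
import math

def multinomial_log_lik(tokens, word_prob):
    """Class-conditional unigram: one factor per token in the document,
    so a repeated word contributes evidence each time it occurs."""
    return sum(math.log(word_prob[w]) for w in tokens)

def binary_log_lik(tokens, present_prob):
    """Binary model: one factor per vocabulary word, using P(present)
    if the word occurs in the document and 1 - P(present) if it does not."""
    present = set(tokens)
    total = 0.0
    for w, p in present_prob.items():
        total += math.log(p) if w in present else math.log(1.0 - p)
    return total

# Hypothetical parameters for one class.
word_prob = {"vote": 0.6, "tax": 0.3, "game": 0.1}      # sums to 1 over words
present_prob = {"vote": 0.8, "tax": 0.5, "game": 0.1}   # one Bernoulli per word

once = ["vote"]
twice = ["vote", "vote"]
```

Under the multinomial model `twice` scores differently from `once`, while the binary model gives them the same score and instead charges for the absence of "tax" and "game".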

[Figure: plot with axes labeled "vocabulary" and "accuracy"]
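Feature selection by mutual information might look like the sketch below, which scores each word by the mutual information between its presence in a document and the class label. The estimator uses raw relative frequencies with no smoothing, and the function name and four-document corpus are assumptions made for illustration.

```python
import math

def mutual_information(docs, word):
    """I(W; C) in bits, where W is whether `word` occurs in a document
    and C is the document's class. docs is a list of (label, word_set)."""
    n = len(docs)
    joint = {}
    for label, words in docs:
        key = (word in words, label)
        joint[key] = joint.get(key, 0) + 1
    p_w, p_c = {}, {}
    for (w, c), k in joint.items():
        p_w[w] = p_w.get(w, 0.0) + k / n
        p_c[c] = p_c.get(c, 0.0) + k / n
    mi = 0.0
    for (w, c), k in joint.items():
        p = k / n
        mi += p * math.log2(p / (p_w[w] * p_c[c]))
    return mi

docs = [
    ("politics", {"vote", "the"}),
    ("politics", {"vote", "tax", "the"}),
    ("sports", {"game", "the"}),
    ("sports", {"game", "season", "the"}),
]
vocab = set().union(*(words for _, words in docs))
ranked = sorted(vocab, key=lambda w: mutual_information(docs, w), reverse=True)
```

In this toy corpus "vote" and "game" perfectly predict the class and rank at the top, while "the" appears everywhere and carries no information, so a cutoff on the ranking would drop it first.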