
Statistical NLP

Spring 2010

Lecture 2: Language Models

Dan Klein – UC Berkeley

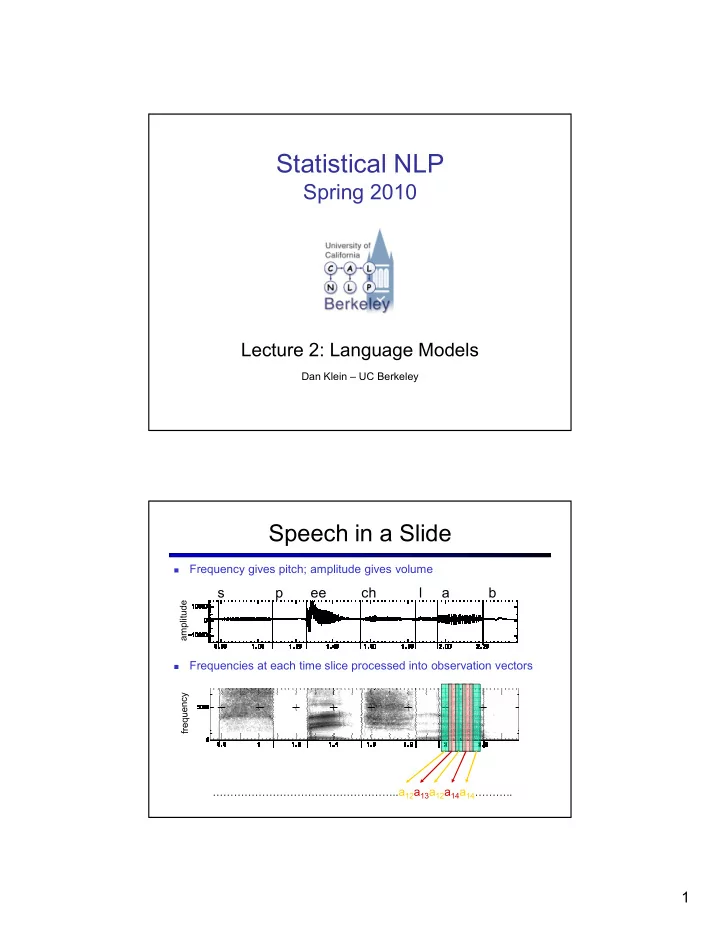

Speech in a Slide

- Frequency gives pitch; amplitude gives volume
- Frequencies at each time slice processed into observation vectors

[Figure: speech lab waveform; axis: amplitude]

… a12 a13 a12 a14 a14 …


We want to predict a sentence given acoustics.

The noisy channel approach:

- Acoustic model: HMMs over word positions with mixtures
- Language model: distributions over sequences

- the station signs are in deep in english
- the stations signs are in deep in english
- the station signs are in deep into english
- the station's signs are in deep in english
- the station signs are in deep in the english
- the station signs are indeed in english
- the station's signs are indeed in english
- the station signs are indians in english
- the station signs are indian in english
- the stations signs are indians in english
- the stations signs are indians and english

Warren Weaver (1955:18, quoting a letter he wrote in 1947)

- Input: many observations of training sentences x
- Output: system capable of computing P(x)

- P(I saw a van) >> P(eyes awe of an)
- P(artichokes intimidate zippers) ≈ 0
- In principle, “plausible” depends on the domain, context, speaker…

Problem: doesn’t generalize (at all)

- Decomposition: break sentences into small pieces which can be recombined in new ways (conditional independence)
- Smoothing: allow for the possibility of unseen pieces

P(??? | Turn to page 134 and look at the picture of the) ?

The actual conditional probability estimates, we’ll call them θ. Obvious estimate: relative frequency (the maximum likelihood estimate).

- Take a training set X and a test set X’
- Compute an estimate θ from X
- Use it to assign probabilities to other sentences, such as those in X’

- 198015222 the first
- 194623024 the same
- 168504105 the following
- 158562063 the world
- …
- 14112454 the door
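The train-then-score recipe above can be sketched in a few lines. This is a minimal illustration, assuming a bigram decomposition and relative-frequency estimates; the boundary symbols `<s>` and `</s>`, the function names, and the toy sentences are assumptions for the example, not part of the lecture.

```python
from collections import Counter

def train_bigram_mle(sentences):
    """Relative-frequency bigram estimates (theta) from tokenized sentences."""
    context_counts = Counter()
    bigram_counts = Counter()
    for words in sentences:
        padded = ["<s>"] + words + ["</s>"]  # hypothetical boundary symbols
        for prev, word in zip(padded, padded[1:]):
            context_counts[prev] += 1
            bigram_counts[(prev, word)] += 1
    # theta[(prev, word)] = count(prev, word) / count(prev)
    return {bg: n / context_counts[bg[0]] for bg, n in bigram_counts.items()}

def sentence_probability(theta, words):
    """Product of bigram probabilities; an unseen bigram gives probability 0."""
    padded = ["<s>"] + words + ["</s>"]
    prob = 1.0
    for prev, word in zip(padded, padded[1:]):
        prob *= theta.get((prev, word), 0.0)
    return prob

# Toy training set X (illustrative only).
train = [["please", "close", "the", "door"],
         ["please", "close", "the", "window"]]
theta = train_bigram_mle(train)
print(sentence_probability(theta, ["please", "close", "the", "door"]))
print(sentence_probability(theta, ["please", "open", "the", "door"]))
```

The second sentence gets probability zero because one of its bigrams never occurred in training, which is the gap that smoothing is meant to close.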

Training Counts

- 3380 please close the door
- 1601 please close the window
- 1164 please close the new
- 1159 please close the gate
- 900 please close the browser

- 198015222 the first
- 194623024 the same
- 168504105 the following
- 158562063 the world
- …
- 14112454 the door

- 197302 close the window
- 191125 close the door
- 152500 close the gap
- 116451 close the thread
- 87298 close the deal

mexico, never, consider, fall, bungled, davison, that, obtain, price, lines, the, to, sass, the, the, further, board, a, details, machinists, the, companies, which, rivals, an, because, longer, oakes, percent, a, they, three, edward, it, currier, an, within, in, three, wrote, is, you, s., longer, institute, dentistry, pay, however, said, possible, to, rooms, hiding, eggs, approximate, financial, canada, the, so, workers, advancers, half, between, nasdaq]

conditional independence assumptions (what’s the cost?)

gurria, mexico, 's, motion, control, proposal, without, permission, from, five, hundred, fifty, five, yen]

seconds, at, the, greatest, play, disingenuous, to, be, reset, annually, the, buy, out, of, american, brands, vying, for, mr., womack, currently, sharedata, incorporated, believe, chemical, prices, undoubtedly, will, be, as, much, is, scheduled, to, conscientious, teaching]

Many linguistic arguments that language isn’t regular.

- Long-distance effects: “The computer which I had just put into the machine room on the fifth floor ___.” (crashed)
- Recursive structure

Why CAN we often get away with n-gram models?

- [This, quarter, ‘s, surprisingly, independent, attack, paid, off, the, risk, involving, IRS, leaders, and, transportation, prices, .]
- [It, could, be, announced, sometime, .]
- [Mr., Toseland, believes, the, average, defense, economy, is, drafted, from, slightly, more, than, 12, stocks, .]

Obviously, generated sentences get “better” as we increase the model order. More precisely: using ML estimators, a higher order always gives better likelihood on train, but not on test.

Will our model prefer good sentences to bad ones?

- Bad ≠ ungrammatical!
- Bad ≈ unlikely
- Bad = sentences that our acoustic model really likes but aren’t the correct answer

How well can we predict the next word? Unigrams are terrible at this game. (Why?)

- When I eat pizza, I wipe off the ____
- Many children are allergic to ____
- I saw a ____

- grease 0.5
- sauce 0.4
- dust 0.05
- ….
- mice 0.0001
- ….
- the 1e-100

- 3516 wipe off the excess
- 1034 wipe off the dust
- 547 wipe off the sweat
- 518 wipe off the mouthpiece
- …
- 120 wipe off the grease
- 0 wipe off the sauce
- 0 wipe off the mice

- 0.1 bits of improvement doesn’t sound so good
- “Solution”: perplexity
- Interpretation: average branching factor in model
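To make the relation between bits and perplexity concrete, here is a small sketch using the standard definition: perplexity is 2 raised to the per-word cross-entropy in bits. The function name and the input format (one model probability per actual word) are assumptions for the example.

```python
import math

def perplexity(word_probs):
    """Perplexity from the probabilities a model assigned to each actual word."""
    log2_sum = sum(math.log2(p) for p in word_probs)
    cross_entropy = -log2_sum / len(word_probs)  # bits per word
    return 2.0 ** cross_entropy

# A model that always spreads its mass uniformly over 8 choices
# has a branching factor (perplexity) of 8.
print(perplexity([1.0 / 8] * 20))

# A 0.1-bit drop in entropy multiplies perplexity by 2 ** -0.1.
print(2 ** -0.1)
```

Under this definition a 0.1-bit improvement corresponds to a perplexity reduction of roughly 7%, which is why the same gain can sound larger when reported as perplexity.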

- It’s easy to get bogus perplexities by having bogus probabilities that sum to more than one over their event spaces. 30% of you will do this on HW1.
- Even though our models require a stop step, averages are per actual word, not per derivation step.

Task error driven

- For speech recognition
- For a specific recognizer!

- Correct answer: Andy saw a part of the movie
- Recognizer output: And he saw apart of the movie

$$\text{word error rate} = \frac{\text{insertions} + \text{deletions} + \text{substitutions}}{\text{true sentence size}}$$
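The error-rate ratio above can be computed with a word-level edit distance. The sketch below assumes whitespace tokenization and unit cost for each insertion, deletion, and substitution; the function name is an assumption for the example.

```python
def word_error_rate(reference, hypothesis):
    """(insertions + deletions + substitutions) / number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    # dist[i][j] = minimum edits turning ref[:i] into hyp[:j]
    dist = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dist[i][0] = i  # delete all i reference words
    for j in range(len(hyp) + 1):
        dist[0][j] = j  # insert all j hypothesis words
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            substitute = dist[i - 1][j - 1] + (ref[i - 1] != hyp[j - 1])
            delete = dist[i - 1][j] + 1
            insert = dist[i][j - 1] + 1
            dist[i][j] = min(substitute, delete, insert)
    return dist[-1][-1] / len(ref)

print(word_error_rate("Andy saw a part of the movie",
                      "And he saw apart of the movie"))
```

For the slide's example the minimum is four edits against a seven-word reference, so the result is 4/7.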

[Figure: curves labeled Bigrams and Rules; one axis runs from 0.2 to 1, the other from 200000 to 1000000]

New words appear all the time:

- Synaptitute
- 132,701.03
- multidisciplinarization

New bigrams: even more often. Trigrams or more – still worse!

- Types (words) vs. tokens (word occurrences)
- Broadly: most word types are rare ones
- Specifically:
  - Rank word types by token frequency
  - Frequency inversely proportional to rank
- Not special to language: randomly generated character strings have this property (try it!)
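One way to "try it" is sketched below: draw characters uniformly from a small alphabet that includes a space, split on spaces, and list token frequencies by rank. The alphabet, text length, and seed are arbitrary assumptions; the result is only a rough, step-like approximation of an inverse rank-frequency curve.

```python
import random
from collections import Counter

def random_text_rank_frequency(num_chars=200_000, alphabet="abcd ", seed=0):
    """Token frequencies, sorted by rank, for uniformly random characters."""
    rng = random.Random(seed)
    text = "".join(rng.choice(alphabet) for _ in range(num_chars))
    counts = Counter(text.split())  # "words" are maximal runs of non-space characters
    return [count for _, count in counts.most_common()]

freqs = random_text_rank_frequency()
for rank in (1, 10, 100, 1000):
    if rank <= len(freqs):
        print(rank, freqs[rank - 1])
```

Short "words" dominate the top ranks and there is a long tail of rare longer ones, so most types are rare even though no linguistic structure is involved.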

- Take your empirical counts
- Modify them in various ways to improve estimates

- Often can give estimators a formal statistical interpretation
- … but not always
- Approaches that are mathematically obvious aren’t always what works

- 3516 wipe off the excess
- 1034 wipe off the dust
- 547 wipe off the sweat
- 518 wipe off the mouthpiece
- …
- 120 wipe off the grease
- 0 wipe off the sauce
- 0 wipe off the mice

P(w | denied the), observed counts:

- 3 allegations
- 2 reports
- 1 claims
- 1 request
- 7 total

[Figure: bar charts over the words allegations, reports, claims, request, charges, motion, benefits]

P(w | denied the), smoothed counts:

- 2.5 allegations
- 1.5 reports
- 0.5 claims
- 0.5 request
- 2 other
- 7 total

- Known words in unseen contexts
- Entirely unknown words

- Many systems ignore this – why?
- Often just lump all new words into a single UNK type

Add-one smoothing especially often talked about

… centered on smoothed bigram estimate, etc. [MacKay and Peto, 94]

… shows up as …

- Can flexibly include multiple back-off contexts, not just a chain
- Often multiple weights, depending on bucketed counts
- Good ways of learning the mixture weights with EM (later)
- Not entirely clear why it works so much better
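As a minimal illustration of mixture weights, the sketch below mixes just two contexts, a bigram estimate and a unigram estimate, with one fixed weight. The function name, the weight `lam`, and the toy distributions are assumptions; the bucketed weights and EM training mentioned above are not shown.

```python
def interpolated_prob(word, prev, bigram_mle, unigram_mle, lam=0.7):
    """Mix a bigram and a unigram estimate with a single weight lam."""
    p_bigram = bigram_mle.get((prev, word), 0.0)
    p_unigram = unigram_mle.get(word, 0.0)
    return lam * p_bigram + (1.0 - lam) * p_unigram

# Toy estimates (illustrative only).
unigram_mle = {"the": 0.5, "door": 0.25, "window": 0.25}
bigram_mle = {("the", "door"): 1.0}

for w in unigram_mle:
    print(w, interpolated_prob(w, "the", bigram_mle, unigram_mle))
```

Because both components are proper distributions and the weights sum to one, the mixture is a proper distribution too, and "window" now gets nonzero probability after "the" even though that bigram was never seen.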

- Set a small number of hyperparameters that control the degree of smoothing by maximizing the (log-)likelihood of held-out data
- Can use any optimization technique (line search or EM usually easiest)

[Figure: data split into Training Data, Held-Out Data, and Test Data; hyperparameter k]
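A simple version of this tuning loop is sketched below, assuming add-k smoothing of bigram counts as the model and a grid of candidate values in place of a line search. All names and the toy data are assumptions for the example.

```python
import math
from collections import Counter

def heldout_log_likelihood(heldout_pairs, bigram_counts, context_counts, vocab_size, k):
    """Log-likelihood of held-out (prev, word) pairs under add-k smoothing."""
    total = 0.0
    for prev, word in heldout_pairs:
        numerator = bigram_counts[(prev, word)] + k
        denominator = context_counts[prev] + k * vocab_size
        total += math.log(numerator / denominator)
    return total

def tune_k(train_pairs, heldout_pairs, vocab, candidates):
    """Pick the candidate k (each > 0) that maximizes held-out log-likelihood."""
    bigram_counts = Counter(train_pairs)
    context_counts = Counter(prev for prev, _ in train_pairs)
    return max(candidates,
               key=lambda k: heldout_log_likelihood(
                   heldout_pairs, bigram_counts, context_counts, len(vocab), k))

# Toy data (illustrative only): "gate" is unseen in training.
train_pairs = [("the", "door")] * 8 + [("the", "window")] * 2
heldout_pairs = [("the", "door")] * 7 + [("the", "window")] * 2 + [("the", "gate")]
vocab = {"the", "door", "window", "gate"}
print(tune_k(train_pairs, heldout_pairs, vocab, [0.01, 0.1, 1.0, 10.0]))
```

Too small a k is punished by the unseen held-out bigram, too large a k flattens the distribution, and the held-out likelihood picks a value in between. The test data plays no part in this choice.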

- Add-one vastly overestimates the fraction of new bigrams
- Add-0.0000027 vastly underestimates the ratio 2*/1*

| Count in 22M Words | Actual c* (Next 22M) | Add-one’s c* | Add-0.0000027’s c* |
|---|---|---|---|
| 1 | 0.448 | 2/7e-10 | ~1 |
| 2 | 1.25 | 3/7e-10 | ~2 |
| 3 | 2.24 | 4/7e-10 | ~3 |
| 4 | 3.23 | 5/7e-10 | ~4 |
| 5 | 4.21 | 6/7e-10 | ~5 |
| Mass on New | 9.2% | ~100% | 9.2% |
| Ratio of 2/1 | 2.8 | 1.5 | ~2 |

- $N_k$: number of types which occur $k$ times in the entire corpus
- Take each of the $c$ tokens out of the corpus in turn
- $c$ “training” sets of size $c-1$, “held-out” of size 1
- How many held-out tokens are unseen in training? $N_1$
- How many held-out tokens are seen $k$ times in training? $(k+1)N_{k+1}$
- There are $N_k$ words with training count $k$
- Each should occur with expected count $(k+1)N_{k+1}/N_k$
- Each should occur with probability $(k+1)N_{k+1}/(cN_k)$
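The leave-one-out quantities above translate directly into code. The sketch below computes the count-of-counts $N_k$, the adjusted count $(k+1)N_{k+1}/N_k$ for each observed $k$, and the mass $N_1/c$ given to unseen events; the function names and toy counts are assumptions for the example.

```python
from collections import Counter

def good_turing_adjusted_counts(type_counts):
    """Map each observed count k to (k + 1) * N_{k+1} / N_k."""
    count_of_counts = Counter(type_counts.values())  # N_k
    adjusted = {}
    for k, n_k in count_of_counts.items():
        n_next = count_of_counts.get(k + 1, 0)  # N_{k+1}, zero if never observed
        adjusted[k] = (k + 1) * n_next / n_k
    return adjusted

def unseen_mass(type_counts):
    """Fraction of leave-one-out held-out tokens unseen in training: N_1 / c."""
    c = sum(type_counts.values())
    n_1 = sum(1 for count in type_counts.values() if count == 1)
    return n_1 / c

# Toy corpus counts (illustrative only): N_1 = 3, N_2 = 2, N_3 = 1, c = 10.
counts = {"a": 3, "b": 2, "c": 2, "d": 1, "e": 1, "f": 1}
print(good_turing_adjusted_counts(counts))
print(unseen_mass(counts))
```

In this toy data the adjusted count for k = 3 comes out as zero because no type occurs four times, which shows how raw count-of-counts become unreliable at the high end.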

[Figure: “held-out” masses $N_1, 2N_2, 3N_3, \ldots, 3511N_{3511}, 4417N_{4417}$ divided by the “training” class sizes $N_0, N_1, N_2, \ldots, N_{3510}, N_{4416}$]

- For small $k$, $N_k > N_{k+1}$
- For large $k$, too jumpy, zeros wreck estimates
- Simple Good-Turing [Gale and Sampson]: replace empirical $N_k$ with a best-fit power law


- Use GT discounted counts (roughly – Katz left large counts alone)
- Whatever mass is left goes to empirical unigram

| Count in 22M Words | Actual c* (Next 22M) | GT’s c* |
|---|---|---|
| 1 | 0.448 | 0.446 |
| 2 | 1.25 | 1.26 |
| 3 | 2.24 | 2.24 |
| 4 | 3.23 | 3.24 |
| Mass on New | 9.2% | 9.2% |

- No need to actually have held-out data; just subtract 0.75 (or some d)
- Maybe have a separate value of d for very low counts

| Count in 22M Words | Future c* (Next 22M) |
|---|---|
| 1 | 0.448 |
| 2 | 1.25 |
| 3 | 2.24 |
| 4 | 3.23 |
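The "just subtract d" idea can be sketched as follows, assuming the freed mass is redistributed in proportion to a lower-order (unigram) distribution and that the context has been seen at least once. The function name, the uniform unigram distribution, and the choice d = 0.5 for the demonstration are assumptions; the counts are the "denied the" counts from earlier in the lecture.

```python
def absolute_discount_probs(context_counts, unigram_probs, d=0.75):
    """Subtract d from each seen count; give the freed mass to the unigram model."""
    total = sum(context_counts.values())
    seen_types = len(context_counts)
    reserved = d * seen_types / total  # probability mass freed by discounting
    probs = {}
    for word, p_unigram in unigram_probs.items():
        discounted = max(context_counts.get(word, 0) - d, 0.0)
        probs[word] = discounted / total + reserved * p_unigram
    return probs

# Counts after "denied the"; the unigram distribution is a hypothetical uniform one.
denied_the = {"allegations": 3, "reports": 2, "claims": 1, "request": 1}
vocab = ["allegations", "reports", "claims", "request", "charges", "motion", "benefits"]
unigram_probs = {w: 1.0 / len(vocab) for w in vocab}

probs = absolute_discount_probs(denied_the, unigram_probs, d=0.5)
print(probs["allegations"], probs["charges"], sum(probs.values()))
```

With d = 0.5 the discounted counts are 2.5, 1.5, 0.5, and 0.5, leaving 2 of the 7 counts for redistribution, which matches the smoothed "denied the" counts shown earlier.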

Shannon game: There was an unexpected ____?

- delay?
- Francisco?

- “Francisco” is more common than “delay”
- … but “Francisco” always follows “San”
- … so it’s less “fertile”

- In the back-off model, we don’t want the probability of w as a unigram
- Instead, want the probability that w is allowed in a novel context
- For each word, count the number of bigram types it completes
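Counting the bigram types each word completes can be sketched as below. The normalization by the total number of bigram types is the usual choice for a continuation probability but is an assumption here, as are the function name and the toy counts.

```python
from collections import Counter

def continuation_probs(bigram_tokens):
    """P_cont(w) = (bigram types ending in w) / (all bigram types)."""
    bigram_types = set(bigram_tokens)  # collapse repeated tokens into types
    completes = Counter(word for _, word in bigram_types)
    total_types = len(bigram_types)
    return {word: n / total_types for word, n in completes.items()}

# Toy data (illustrative only): "Francisco" is frequent but follows one word.
bigram_tokens = ([("San", "Francisco")] * 5
                 + [("unexpected", "delay"), ("long", "delay"), ("a", "delay")])
print(continuation_probs(bigram_tokens))
```

Here "Francisco" has more tokens than "delay" (5 versus 3) yet gets the lower continuation probability, because it completes only one bigram type.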

- Absolute discounting
- Lower order continuation probabilities

- … generally useless
- … there’s enough data)
- … MT, but not so much for speech
- … Turing, held-out estimation, Witten-Bell
- … reading for tons of graphs!

[Graphs from Joshua Goodman]

[Figure: curves labeled 100,000 KN; 1,000,000 Katz; 1,000,000 KN; 10,000,000 Katz; 10,000,000 KN; all Katz; all KN. One axis runs from 5.5 to 10, the other from 1 to 20.]

- Caching models: recent words more likely to appear again
- Trigger models: recent words trigger other words
- Topic models

- Syntactic models: use tree models to capture long-distance syntactic effects [Chelba and Jelinek, 98]
- Discriminative models: set n-gram weights to improve final task accuracy rather than fit training set density [Roark, 05, for ASR; Liang et al., 06, for MT]
- Structural zeros: some n-grams are syntactically forbidden, keep estimates at zero [Mohri and Roark, 06]
- Bayesian document and IR models [Daume 06]

- Help model fluency for various noisy-channel processes (MT, ASR, etc.)
- N-gram models don’t represent any deep variables involved in language structure or meaning
- Usually we want to know something about the input other than how likely it is (syntax, semantics, topic, etc.)

- We introduce a single new global variable
- Still a very simplistic model family
- Lets us model hidden properties of text, but only very non-local ones
- In particular, we can only model properties which are largely invariant to word order (like topic)