1

Statistical NLP

Spring 2011

Lecture 5: Speech Recognition II

Dan Klein – UC Berkeley

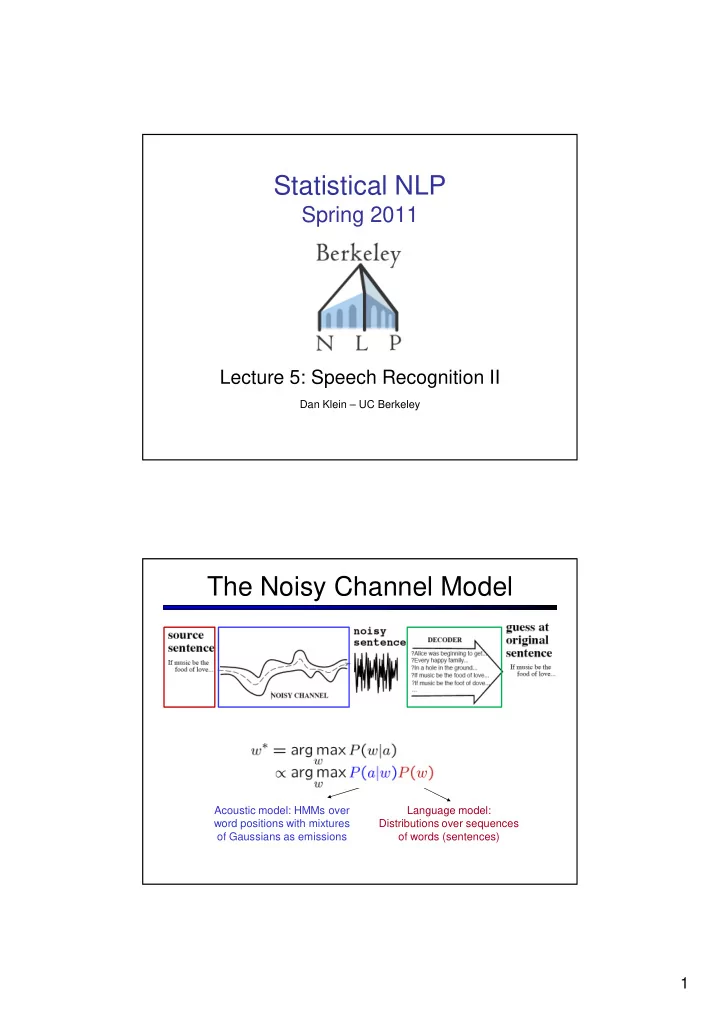

The Noisy Channel Model

Acoustic model: HMMs over word positions with mixtures

- f Gaussians as emissions

Language model: Distributions over sequences

- f words (sentences)