1

StatisticalNLP

Spring2010

Lecture3:LMsII/TextCat

DanKlein– UCBerkeley

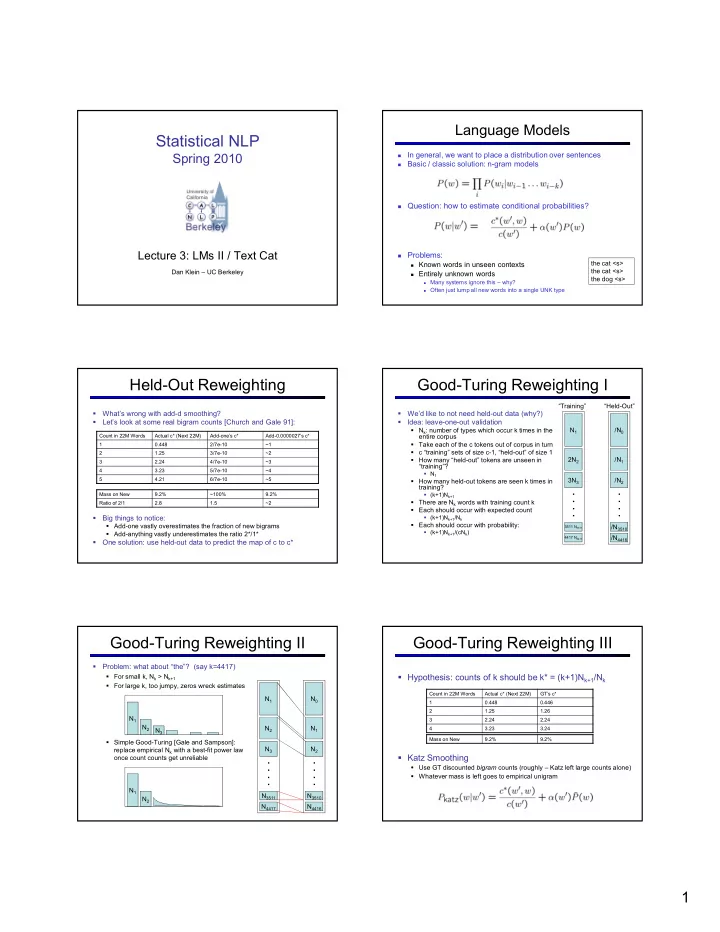

LanguageModels

- Ingeneral,wewanttoplaceadistributionoversentences

- Basic/classicsolution:n*grammodels

Question:howtoestimateconditionalprobabilities? Problems:

Knownwordsinunseencontexts Entirelyunknownwords

Manysystemsignorethis– why? OftenjustlumpallnewwordsintoasingleUNKtype

thecat<s> thecat<s> thedog<s>

Held*OutReweighting

- What’swrongwithadd*dsmoothing?

- Let’slookatsomerealbigramcounts[ChurchandGale91]:

- Bigthingstonotice:

Add*onevastlyoverestimatesthefractionofnewbigrams Add*anythingvastlyunderestimatestheratio2*/1*

- Onesolution:useheld*outdatatopredictthemapofctoc*

Countin22MWords Actualc*(Next22M) Add*one’sc* Add*0.0000027’sc* 1 0.448 2/7e*10 ~1 2 1.25 3/7e*10 ~2 3 2.24 4/7e*10 ~3 4 3.23 5/7e*10 ~4 5 4.21 6/7e*10 ~5 MassonNew 9.2% ~100% 9.2% Ratioof2/1 2.8 1.5 ~2

Good*TuringReweightingI

- We’dliketonotneedheld*outdata(why?)

- Idea:leave*one*outvalidation

Nk:numberoftypeswhichoccurktimesinthe entirecorpus Takeeachofthectokensoutofcorpusinturn c“training”setsofsizec*1,“held*out”ofsize1 Howmany“held*out”tokensareunseenin “training”?

N1

Howmanyheld*outtokensareseenktimesin training?

(k+1)Nk+1

ThereareNk wordswithtrainingcountk Eachshouldoccurwithexpectedcount

(k+1)Nk+1/Nk

Eachshouldoccurwithprobability:

(k+1)Nk+1/(cNk)

N1 2N2 3N3

4417N4417 3511N3511

....

/N0 /N1 /N2 /N4416 /N3510

....

“Training” “Held*Out”

Good*TuringReweightingII

- Problem:whatabout“the”?(sayk=4417)

Forsmallk,Nk >Nk+1 Forlargek,toojumpy,zeroswreckestimates SimpleGood*Turing[GaleandSampson]: replaceempiricalNk withabest*fitpowerlaw

- ncecountcountsgetunreliable

N1 N2 N3 N4417 N3511

....

N0 N1 N2 N4416 N3510

....

N1 N2 N3 N1 N2

Good*TuringReweightingIII

Hypothesis:countsofkshouldbek*=(k+1)Nk+1/Nk KatzSmoothing

UseGTdiscountedcounts(roughly– Katzleftlargecountsalone) Whatevermassisleftgoestoempiricalunigram

Countin22MWords Actualc*(Next22M) GT’sc* 1 0.448 0.446 2 1.25 1.26 3 2.24 2.24 4 3.23 3.24 MassonNew 9.2% 9.2%