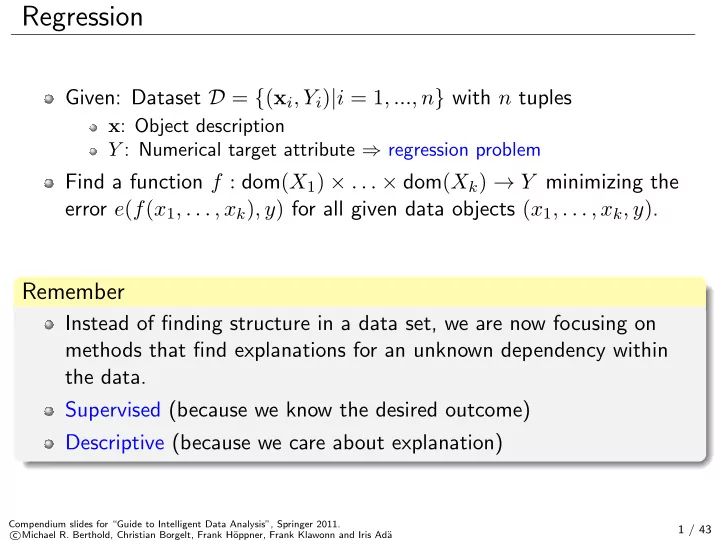

Regression

Given: Dataset D = {(x_i, Y_i) | i = 1, ..., n} with n tuples

- x: Object description
- Y: Numerical target attribute ⇒ regression problem

Find a function f : dom(X_1) × ... × dom(X_k) → Y minimizing the error e(f(x_1, ..., x_k), y) for all given data objects (x_1, ..., x_k, y).
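As a minimal sketch of this problem statement, the following Python example fits a function f to a small data set by minimizing an error measure. The slide leaves both the error e and the form of f open, so two assumptions are made here: e is taken to be the squared error, and f is restricted to linear functions of the attributes. The helper names (`fit_linear`, `predict`, `mean_squared_error`) and the toy data are hypothetical and chosen only for illustration.

```python
import numpy as np


def fit_linear(X, y):
    """Fit f(x) = w0 + w1*x1 + ... + wk*xk by least squares.

    Assumption: the error e is the squared difference between f(x) and y.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    # Prepend a column of ones so that w[0] acts as the intercept.
    A = np.column_stack([np.ones(len(X)), X])
    w, *_ = np.linalg.lstsq(A, y, rcond=None)
    return w


def predict(w, X):
    """Evaluate the fitted function f on the rows of X."""
    X = np.asarray(X, dtype=float)
    return w[0] + X @ w[1:]


def mean_squared_error(w, X, y):
    """Average squared error of f over all given data objects."""
    residuals = predict(w, X) - np.asarray(y, dtype=float)
    return float(np.mean(residuals ** 2))


# Toy data set D with n = 5 tuples and k = 2 attributes (made up).
X = np.array([[1.0, 2.0], [2.0, 0.0], [3.0, 1.0], [4.0, 3.0], [5.0, 5.0]])
# Noise-free numerical target, so a linear f can reproduce it exactly.
y = 1.0 + 2.0 * X[:, 0] - 0.5 * X[:, 1]

w = fit_linear(X, y)
print("coefficients:", w)
print("mean squared error:", mean_squared_error(w, X, y))
```

Because the toy target is generated without noise from a linear rule, the fitted coefficients should come out as approximately 1, 2 and -0.5, with an error close to zero. On real data the error would not vanish, and a different error measure or function class may be more appropriate.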

Remember

Instead of finding structure in a data set, we are now focusing on methods that find explanations for an unknown dependency within the data.

- Supervised (because we know the desired outcome)
- Descriptive (because we care about explanation)

Compendium slides for “Guide to Intelligent Data Analysis”, Springer 2011. © Michael R. Berthold, Christian Borgelt, Frank Höppner, Frank Klawonn and Iris Adä
