!

Probability*and*Statistics* for*Computer*Science**

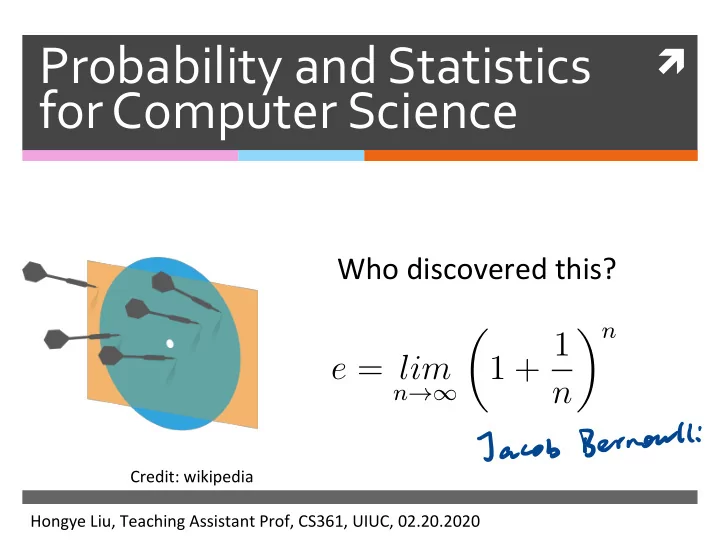

Who!discovered!this?!

!

Hongye!Liu,!Teaching!Assistant!Prof,!CS361,!UIUC,!02.20.2020! Credit:!wikipedia!

e = lim

n→∞

- 1 + 1

n n

Jacob Bernoulli


Last time

Random variable

Review with questions

The weak law of large numbers

Announcement

* Please start to review course content for Midterm 1 (Mar. 5)
* Review your HWs for mistakes

Experiment in last lecture

* Received 16 teams' results
* One needs to be corrected
* Sample mean is 4.5
* Expected value for each random experiment is 5

Content

Continuous Random Variable

Important known discrete probability distributions

Example of a continuous random variable

The spinner

The sample space for all outcomes is not countable.

$$\theta \in (0, 2\pi]$$

[Figure: spinner with the angle θ measured from 0]

* $P(\theta = 35^\circ) = 0$
* What is the probability $P(\theta = \theta_0)$, where $\theta_0$ is a constant in $(0, 2\pi]$?
* What is the probability $P(\theta \in \ldots)$?

Probability density function (pdf)

For a continuous random variable X, the probability that X = x is essentially zero for all (or most) x, so we can't define $P(X = x)$.

Instead, we define the probability density function (pdf) over an infinitesimally small interval dx,

$$p(x)\,dx = P(X \in [x, x + dx])$$

For a < b

$$\int_a^b p(x)\,dx = P(X \in [a, b])$$

Note that $p(x) \ge 0$.

Properties of the probability density function

$p(x)$ resembles the probability function:

$$p(x) \ge 0 \quad \text{for all } x$$

The probability of X taking all possible values is 1:

$$\int_{-\infty}^{\infty} p(x)\,dx = 1$$

Properties of the probability density function

$p(x)$ differs from the probability distribution function for a discrete random variable in that

* $p(x)$ is not the probability that X = x
* $p(x)$ can exceed 1
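To make the "can exceed 1" property concrete, here is a small sketch. The uniform distribution on [0, 0.5] and the helper name `uniform_pdf` are assumptions chosen for illustration; they do not come from the slides.

```python
def uniform_pdf(x: float, lo: float, hi: float) -> float:
    """Density of a uniform distribution on [lo, hi] (illustrative helper)."""
    return 1.0 / (hi - lo) if lo <= x <= hi else 0.0

# Assumed example: X uniform on [0, 0.5], which is not taken from the slides.
density = uniform_pdf(0.25, 0.0, 0.5)   # 2.0, larger than 1
total_area = density * (0.5 - 0.0)      # 1.0, so the density still integrates to 1
print(density, total_area)
```

The density value is 2, yet the area under the curve is still 1, which is why a density is not itself a probability.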

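Probability density function: spinner

Suppose the spinner has an equal chance of stopping at any position. What's the pdf of the angle θ of the spin position?

$$p(\theta) = \begin{cases} c & \text{if } \theta \in (0, 2\pi] \\ 0 & \text{otherwise} \end{cases}$$

For this function to be a pdf,

$$\int_{-\infty}^{\infty} p(\theta)\,d\theta = 1$$

Then

$$\Rightarrow c = \frac{1}{2\pi}$$

[Figure: p(θ) is constant at height c for θ between 0 and 2π]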

Probability density function: spinner

What is the probability that the spin angle θ is within $[\frac{\pi}{12}, \frac{\pi}{7}]$?

In general, $P(X \in [a, b]) = \int_a^b p(x)\,dx$, so

$$P\left(\frac{\pi}{12} \le \theta \le \frac{\pi}{7}\right) = \int_{\pi/12}^{\pi/7} p(\theta)\,d\theta = \int_{\pi/12}^{\pi/7} \frac{1}{2\pi}\,d\theta = \frac{5}{168}$$

[Figure: p(θ) is constant at 1/(2π) for θ between 0 and 2π]
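The interval probability for the uniform spinner can be checked with exact fractions. This is a sketch only: the function name `uniform_interval_prob` and the choice to pass the endpoints as multiples of π are assumptions made for convenience.

```python
from fractions import Fraction

def uniform_interval_prob(a_over_pi: Fraction, b_over_pi: Fraction) -> Fraction:
    """P(a <= theta <= b) for the uniform spinner, with a and b given as multiples of pi."""
    # The integral of 1/(2*pi) over [a, b] is (b - a) / (2*pi); the factor pi cancels.
    return (b_over_pi - a_over_pi) / 2

prob = uniform_interval_prob(Fraction(1, 12), Fraction(1, 7))
print(prob)  # 5/168
```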

Q: Probability density function: spinner

What is the constant c given the spin angle θ has the following pdf?

[Figure: plot of p(θ) against θ, with marks at 0, π and 2π on the θ axis and height c]

A. 1  B. 1/π  C. 2/π  D. 4/π  E. 1/2π


Expectation of continuous variables

Expected value of a continuous random variable X:

$$E[X] = \int_{-\infty}^{\infty} x\,p(x)\,dx$$

Expected value of a function of a continuous random variable, $Y = f(X)$:

$$E[Y] = E[f(X)] = \int_{-\infty}^{\infty} f(x)\,p(x)\,dx$$

Here $p(x)$ acts as the weight.

Probability density function: spinner

Given the probability density of the spin angle θ,

$$p(\theta) = \frac{1}{2\pi} \quad \text{if } \theta \in (0, 2\pi]$$

the expected value of the spin angle is

$$E[\theta] = \int_{-\infty}^{\infty} \theta\,p(\theta)\,d\theta = \int_0^{2\pi} \theta\,\frac{1}{2\pi}\,d\theta = \pi$$
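The expectation integral for the spinner can also be approximated numerically. The sketch below uses a plain midpoint rule; the helper name `expectation` and the step count are assumptions, not part of the lecture.

```python
import math

def expectation(f, pdf, lo: float, hi: float, steps: int = 10_000) -> float:
    """Approximate the integral of f(x) * pdf(x) over [lo, hi] with the midpoint rule."""
    width = (hi - lo) / steps
    total = 0.0
    for i in range(steps):
        x = lo + (i + 0.5) * width      # midpoint of the i-th sub-interval
        total += f(x) * pdf(x) * width
    return total

def spinner_pdf(theta: float) -> float:
    """Uniform density on (0, 2*pi]; callers only integrate over that range."""
    return 1 / (2 * math.pi)

mean_angle = expectation(lambda theta: theta, spinner_pdf, 0.0, 2 * math.pi)
print(mean_angle, math.pi)  # the two values should agree closely
```

Passing a different `f` gives E[f(θ)] in the same way, matching the formula for the expected value of a function of a random variable.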

Properties of expectation of continuous random variables

The linearity of expected value is true for continuous random variables.

The other properties that we derived for variance and covariance also hold for continuous random variables.

For example, if X and Y are independent,

$$\mathrm{var}[X + Y] = \mathrm{var}[X] + \mathrm{var}[Y]$$

Q.

Suppose a continuous variable has pdf

$$p(x) = \ldots \quad x \in [0, 1]$$

What is E[X]?

A. 1/2  B. 1/3  C. 1/4  D. 1  E. 2/3

$$E[X] = \int_{-\infty}^{\infty} x\,p(x)\,dx$$

Variance of a continuous variable

For the same pdf $p(x)$ on $x \in [0, 1]$, what is $\mathrm{var}[X]$?

$$\mathrm{var}[X] = E\left[(X - E[X])^2\right] = \int_0^1 (x - E[X])^2\,p(x)\,dx$$

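What is the CDF of the spin?

The CDF for a continuous random variable is defined the same way:

$$P(X \le x) = \int_{-\infty}^{x} p(t)\,dt$$

If $p(x) = \frac{1}{2\pi}$ for $x \in (0, 2\pi]$, then

$$P(X \le x) = \begin{cases} 0 & x \le 0 \\ \dfrac{x}{2\pi} & x \in (0, 2\pi] \\ 1 & x > 2\pi \end{cases}$$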
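A minimal sketch of the spinner's CDF, assuming the uniform pdf p(θ) = 1/(2π) on (0, 2π]. The function name `spinner_cdf` is illustrative. A difference of two CDF values reproduces the interval probability 5/168 computed earlier.

```python
import math

def spinner_cdf(x: float) -> float:
    """P(theta <= x) for a spinner that is uniform on (0, 2*pi]."""
    if x <= 0:
        return 0.0
    if x > 2 * math.pi:
        return 1.0
    return x / (2 * math.pi)

# P(pi/12 <= theta <= pi/7) as a difference of CDF values.
interval_prob = spinner_cdf(math.pi / 7) - spinner_cdf(math.pi / 12)
print(interval_prob, 5 / 168)
```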

Content

Continuous Random Variable

Important known discrete probability distributions

The usefulness of probability distributions

Many common processes generate data with probability distributions that belong to families with known properties.

Even if the data are not distributed according to a known probability distribution, it is sometimes useful in practice to approximate with a known distribution.

The classic discrete distributions

* Discrete uniform distribution
* Bernoulli distribution
* Geometric distribution
* Binomial distribution
* Multinomial distribution

Discrete uniform distribution

A discrete random variable X is uniform if it takes k different values and

$$P(X = x_i) = \frac{1}{k} \quad \text{for all } x_i \text{ that } X \text{ can take}$$

For example:

* Rolling a fair k-sided die
* Tossing a fair coin (k = 2)

Discrete uniform distribution

Expectation of a discrete random variable X that takes k different values uniformly:

$$E[X] = \sum_{i=1}^{k} x_i\,P(x_i) = \frac{1}{k} \sum_{i=1}^{k} x_i$$

Variance of a uniformly distributed random variable X:

$$\mathrm{var}[X] = \frac{1}{k} \sum_{i=1}^{k} (x_i - E[X])^2$$
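As a worked instance of the discrete uniform formulas, the sketch below evaluates the mean and variance for a fair six-sided die with exact fractions. The helper name `uniform_mean_var` is an assumption, and the values passed in are assumed to be distinct.

```python
from fractions import Fraction

def uniform_mean_var(values):
    """Mean and variance of a discrete uniform variable over the given distinct values."""
    k = len(values)
    mean = sum(Fraction(v) for v in values) / k
    var = sum((Fraction(v) - mean) ** 2 for v in values) / k
    return mean, var

die_mean, die_var = uniform_mean_var(range(1, 7))
print(die_mean, die_var)  # 7/2 35/12
```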

Bernoulli distribution

A random variable X is Bernoulli if it takes on two values 0 and 1 such that

$$P(X = 1) = p \ (\text{e.g. } H), \qquad P(X = 0) = 1 - p \ (\text{e.g. } T)$$

$$E[X] = p$$

$$\mathrm{var}[X] = p(1 - p)$$

since $\mathrm{var}[X] = E[X^2] - E[X]^2 = p - p^2 = p(1 - p)$.

Jacob Bernoulli (1654-1705). Credit: wikipedia

Bernoulli distribution

Examples

* Tossing a biased (or fair) coin: H with probability $p$, T with probability $1 - p$
* Making a free throw
* Rolling a six-sided die and checking if it shows 6
* Any indicator function of a random variable: $X = 1$ if an event A occurs and $X = 0$ otherwise, with $P(A) = p$

Geometric distribution

A discrete random variable X is geometric if

$$P(X = k) = (1 - p)^{k-1} p, \quad k \ge 1$$

Expected value and variance:

$$E[X] = \frac{1}{p} \quad \text{and} \quad \mathrm{var}[X] = \frac{1 - p}{p^2}$$

H, TH, TTH, TTTH, TTTTH, TTTTTH, ...

That is, $p$ is the probability of H on each toss, and

$$E[X] = \sum_{k=1}^{\infty} k (1 - p)^{k-1} p$$

Geometric distribution

$$P(X = k) = (1 - p)^{k-1} p, \quad k \ge 1$$

[Figure: geometric distributions for p = 0.5 and p = 0.2. Credit: Prof. Grinstead]

Geometric distribution

Examples:

* How many rolls of a six-sided die will it take to see the first 6?
* How many Bernoulli trials must be done before the first 1?
* How many experiments are needed to have the first success?

Plays an important role in the theory of queues

Derivation of geometric expected value

$$E[X] = \sum_{k=1}^{\infty} k(1-p)^{k-1} p = p \sum_{k=1}^{\infty} k(1-p)^{k-1} = \frac{p}{1-p} \sum_{k=1}^{\infty} k(1-p)^{k}$$

For $|x| < 1$ we have this power series:

$$\sum_{n=1}^{\infty} n x^n = \frac{x}{(1-x)^2}; \quad |x| < 1$$

Setting $x = 1 - p$:

$$E[X] = \frac{p}{1-p} \cdot \frac{1-p}{p^2} = \frac{1}{p}$$
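The result E[X] = 1/p can be sanity-checked by summing the series directly. This is a numerical sketch, not a proof; the function name `geometric_mean_partial` and the truncation at 10,000 terms are assumptions.

```python
def geometric_mean_partial(p: float, terms: int = 10_000) -> float:
    """Partial sum of k * (1 - p)**(k - 1) * p for k = 1 .. terms."""
    return sum(k * (1 - p) ** (k - 1) * p for k in range(1, terms + 1))

approx_mean = geometric_mean_partial(0.2)
print(approx_mean, 1 / 0.2)  # the partial sum should be very close to 1/p
```

For p close to 0 the tail decays slowly, so more terms would be needed for the same accuracy.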

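Derivation of the power series

$$\sum_{n=1}^{\infty} n x^n = \frac{x}{(1-x)^2}; \quad |x| < 1$$

Proof: use $\sum_{n=0}^{\infty} x^n = \frac{1}{1-x}$; $|x| < 1$, and let $S(x) = \sum_{n=1}^{\infty} n x^n$. Then

$$\frac{S(x)}{x} = \sum_{n=1}^{\infty} n x^{n-1}$$

$$\int_0^x \frac{S(t)}{t}\,dt = \sum_{n=1}^{\infty} x^n = x \cdot \frac{1}{1-x} = \frac{x}{1-x}$$

$$\frac{S(x)}{x} = \left(\frac{x}{1-x}\right)' = \frac{1}{(1-x)^2}$$

$$S(x) = \frac{x}{(1-x)^2}$$

Home reading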
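For the home reading, the power series identity can be checked numerically at a few points inside |x| < 1. The helper names `power_series_partial` and `closed_form` and the test points are assumptions for this sketch.

```python
def power_series_partial(x: float, terms: int = 2000) -> float:
    """Partial sum of n * x**n for n = 1 .. terms."""
    return sum(n * x ** n for n in range(1, terms + 1))

def closed_form(x: float) -> float:
    """Closed form x / (1 - x)**2, valid for |x| < 1."""
    return x / (1 - x) ** 2

for x in (0.5, -0.3, 0.9):
    print(x, power_series_partial(x), closed_form(x))
```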

Geometric distribution: die example

Let X be the number of rolls of a fair six-sided die needed to see the first 6. What is $P(X = k)$ for k = 1, 2?

Calculate E[X] and var[X].

$$P(X = 1) = (1 - p)^0 p = p = \frac{1}{6} \simeq 0.167$$

$$P(X = 2) = (1 - p)^1 p = \frac{5}{6} \cdot \frac{1}{6} \simeq 0.139$$

$$E[X] = \frac{1}{p} = \frac{1}{1/6} = 6$$

$$\mathrm{var}[X] = \frac{1 - p}{p^2} = \frac{1 - 1/6}{(1/6)^2} = 30$$
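The die example can be reproduced with exact fractions. This is a sketch with an assumed helper name, `geometric_pmf`.

```python
from fractions import Fraction

def geometric_pmf(k: int, p: Fraction) -> Fraction:
    """P(X = k): the first success happens on trial k (k >= 1)."""
    return (1 - p) ** (k - 1) * p

p_six = Fraction(1, 6)
p1 = geometric_pmf(1, p_six)        # 1/6
p2 = geometric_pmf(2, p_six)        # 5/36
geo_mean = 1 / p_six                # 6
geo_var = (1 - p_six) / p_six ** 2  # 30
print(p1, p2, geo_mean, geo_var)
```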

Binomial distribution

Remember Galton Board?

Remember the airline problem?

http://www.randomservices.org/random/apps/GaltonBoardExperiment.html

* Throw many coins at once, many times
* Probability of exactly u people showing up

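Binomial distribution

[Figure: binomial distribution with p = 0.5. Credit: Prof. Grinstead]

* Each toss is a Bernoulli trial: H with probability $p$, T with probability $1 - p$.
* Example: $N = 6$ tosses with $k = 4$ heads; any one such sequence has probability $p^4 (1 - p)^2$.
* $P(X = k) = \binom{N}{k} p^k (1 - p)^{N - k}$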

Binomial distribution

A discrete random variable X is binomial if

$$P(X = k) = \binom{N}{k} p^k (1 - p)^{N - k} \quad \text{for integer } 0 \le k \le N$$

with

$$E[X] = Np \quad \text{and} \quad \mathrm{var}[X] = Np(1 - p)$$

Examples

* If we roll a six-sided die N times, how many sixes will we see?
* If I attempt N free throws, how many points will I score?
* What is the sum of N independent and identically distributed Bernoulli trials?

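Expectations of Binomial distribution

A discrete random variable X is binomial if

$$P(X = k) = \binom{N}{k} p^k (1 - p)^{N - k} \quad \text{for integer } 0 \le k \le N$$

with

$$E[X] = Np \quad \text{and} \quad \mathrm{var}[X] = Np(1 - p)$$

Write $X = X_1 + X_2 + \dots + X_N$, where the $X_i$ are iid Bernoulli with $P(X_i = 1) = p$ and $P(X_i = 0) = 1 - p$. Then

$$E[X_i] = \sum_x x\,P(x) = 1 \cdot p + 0 \cdot (1 - p) = p, \qquad E[X] = \sum_{i=1}^{N} E[X_i] = Np$$

$$\mathrm{var}[X_i] = p(1 - p), \qquad \mathrm{var}[X] = \sum_{i=1}^{N} \mathrm{var}[X_i] = Np(1 - p)$$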

Binomial distribution: die example

Let X be the number of sixes in 36 rolls of a fair six-sided die. What is P(X = k) for k = 5, 6, 7?

Calculate E[X] and var[X].

$$P(X = 5) = \binom{36}{5} \left(\frac{1}{6}\right)^5 \left(\frac{5}{6}\right)^{31} \simeq 0.170$$

$$P(X = 6) = \binom{36}{6} \left(\frac{1}{6}\right)^6 \left(\frac{5}{6}\right)^{30} \simeq 0.176$$

$$P(X = 7) = \binom{36}{7} \left(\frac{1}{6}\right)^7 \left(\frac{5}{6}\right)^{29} \simeq 0.151$$

$$E[X] = Np = 36 \cdot \frac{1}{6} = 6$$

$$\mathrm{var}[X] = Np(1 - p) = 36 \cdot \frac{1}{6} \cdot \frac{5}{6} = 5$$
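The binomial die example can be recomputed directly from the pmf. The sketch below uses `math.comb` from the Python standard library; the helper name `binomial_pmf` is an assumption. It also recovers E[X] and var[X] by summing over all k rather than using the closed forms.

```python
from math import comb

def binomial_pmf(k: int, n: int, p: float) -> float:
    """P(X = k) for k successes in n independent trials with success probability p."""
    return comb(n, k) * p ** k * (1 - p) ** (n - k)

n_rolls, p_six = 36, 1 / 6
probs = {k: binomial_pmf(k, n_rolls, p_six) for k in (5, 6, 7)}

# Mean and variance computed from the definition, summing over every possible k.
bin_mean = sum(k * binomial_pmf(k, n_rolls, p_six) for k in range(n_rolls + 1))
bin_var = sum((k - bin_mean) ** 2 * binomial_pmf(k, n_rolls, p_six) for k in range(n_rolls + 1))

print({k: round(v, 3) for k, v in probs.items()})
print(bin_mean, bin_var)  # should be close to 6 and 5
```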

Betting brainteaser

What would you rather bet on?

* How many rolls of a fair six-sided die will it take to see the first 6?
* How many sixes will appear in 36 rolls of a fair six-sided die?

Why?

Geometric distribution:

$$P(X = 1) = (1 - p)^0 p = p = \frac{1}{6} \simeq 0.167$$

Binomial distribution:

$$P(X = 6) = \binom{36}{6} \left(\frac{1}{6}\right)^6 \left(\frac{5}{6}\right)^{30} \simeq 0.176$$
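One way to read the brainteaser is to bet on the single most likely value under each distribution and compare those probabilities. That reading is an assumption on top of the slide, and the sketch below truncates the geometric support at k = 199 purely for convenience.

```python
from math import comb

p = 1 / 6

# Geometric: number of rolls until the first 6 (support truncated for the search).
geom = {k: (1 - p) ** (k - 1) * p for k in range(1, 200)}
# Binomial: number of sixes in 36 rolls.
binom = {k: comb(36, k) * p ** k * (1 - p) ** (36 - k) for k in range(37)}

best_geom = max(geom, key=geom.get)
best_binom = max(binom, key=binom.get)
print(best_geom, round(geom[best_geom], 3))
print(best_binom, round(binom[best_binom], 3))
```

Under this reading, the best single guess for the binomial count is slightly more likely than the best single guess for the geometric count.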

Multinomial distribution

A discrete random variable X is multinomial if it describes throwing a k-sided die N times and asks for the probability of getting $n_1$ of $X_1$, $n_2$ of $X_2$, $n_3$ of $X_3$, ..., $n_k$ of $X_k$:

$$P(X_1 = n_1, X_2 = n_2, \dots, X_k = n_k) = \frac{N!}{n_1!\,n_2! \cdots n_k!}\, p_1^{n_1} p_2^{n_2} \cdots p_k^{n_k}$$

where $N = n_1 + n_2 + \dots + n_k$

Example: ILLINOIS? The letters I, L, N, O, S occur 3, 2, 1, 1, 1 times, so the coefficient is

$$\frac{8!}{3!\,2!\,1!\,1!\,1!}$$
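The ILLINOIS coefficient can be evaluated by counting letter multiplicities. Reading it as the number of distinct arrangements of the letters is an interpretation, and the function name `multiset_permutations` is an assumption.

```python
from collections import Counter
from math import factorial

def multiset_permutations(word: str) -> int:
    """Multinomial coefficient N! / (n1! * n2! * ...) for the letter counts of word."""
    count = factorial(len(word))
    for multiplicity in Counter(word).values():
        count //= factorial(multiplicity)  # each division is exact
    return count

arrangements = multiset_permutations("ILLINOIS")
print(arrangements)  # 3360
```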

Multinomial distribution

Examples

* If we roll a six-sided die N times, how many of each value will we see?
* What are the counts of N independent and identically distributed trials?
* This is very widely used in genetics

Multinomial distribution: die example

What is the probability of seeing 1 one, 2 twos, 3 threes, 4 fours, 5 fives and 0 sixes in 15 rolls of a fair six-sided die?

$$P(X_1 = 1, X_2 = 2, X_3 = 3, X_4 = 4, X_5 = 5, X_6 = 0) = \frac{15!}{1!\,2!\,3!\,4!\,5!\,0!} \left(\frac{1}{6}\right)^1 \left(\frac{1}{6}\right)^2 \left(\frac{1}{6}\right)^3 \left(\frac{1}{6}\right)^4 \left(\frac{1}{6}\right)^5 \left(\frac{1}{6}\right)^0 = \frac{15!}{2!\,3!\,4!\,5!} \left(\frac{1}{6}\right)^{15}$$

Note that $0! = 1$.
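The multinomial die probability can be evaluated exactly. This is a sketch with an assumed helper name, `multinomial_pmf`, using exact fractions so the result matches the closed form term by term.

```python
from fractions import Fraction
from math import factorial

def multinomial_pmf(counts, probs) -> Fraction:
    """P(X1 = n1, ..., Xk = nk) for category counts and per-category probabilities."""
    coefficient = factorial(sum(counts))
    for c in counts:
        coefficient //= factorial(c)   # exact: builds N! / (n1! ... nk!)
    result = Fraction(coefficient)
    for c, q in zip(counts, probs):
        result *= Fraction(q) ** c
    return result

counts = [1, 2, 3, 4, 5, 0]            # ones, twos, ..., sixes in 15 rolls
die_prob = multinomial_pmf(counts, [Fraction(1, 6)] * 6)
print(die_prob, float(die_prob))       # roughly 8e-05
```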

Assignments

* Read Chapter 5 of the textbook
* Next time: more classic known probability distributions

Additional References

* Charles M. Grinstead and J. Laurie Snell, "Introduction to Probability"
* Morris H. DeGroot and Mark J. Schervish, "Probability and Statistics"

See you next time

See you!