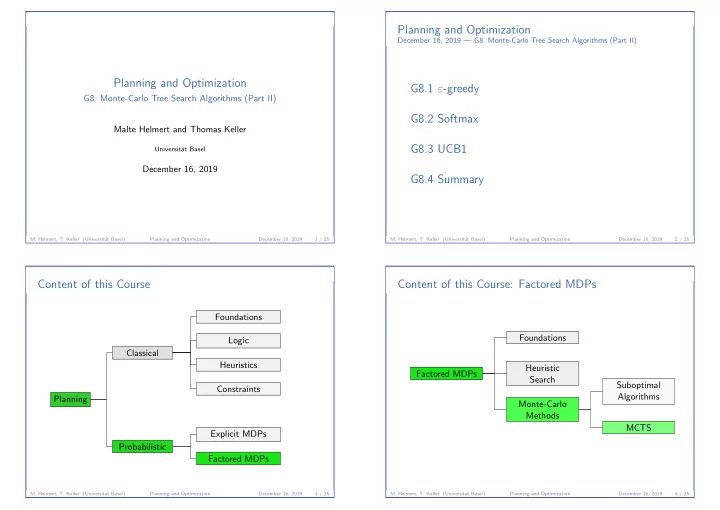

SLIDE 5

G8. Monte-Carlo Tree Search Algorithms (Part II)

UCB1

Upper Confidence Bounds: Idea

◮ select successor c of d that minimizes $\hat{Q}^k(c) - E^k(d) \cdot B^k(c)$

  ◮ based on action-value estimate $\hat{Q}^k(c)$,
  ◮ exploration factor $E^k(d)$ and
  ◮ bonus term $B^k(c)$.

◮ select $B^k(c)$ such that $Q^\star(s(c), a(c)) \geq \hat{Q}^k(c) - E^k(d) \cdot B^k(c)$ with high probability

◮ Idea: $\hat{Q}^k(c) - E^k(d) \cdot B^k(c)$ is a lower confidence bound on $Q^\star(s(c), a(c))$ under the collected information
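
The selection rule above can be sketched in a few lines of Python. This is only an illustration: the `Child` record, the function name `select_successor`, and the numbers are hypothetical and not taken from the slides. Estimates are treated as costs, so the smallest lower confidence bound wins.

```python
from dataclasses import dataclass


@dataclass
class Child:
    name: str
    q_hat: float  # action-value (cost) estimate Q^k(c)
    bonus: float  # bonus term B^k(c)


def select_successor(children, exploration_factor):
    """Return the child that minimizes q_hat - exploration_factor * bonus."""
    return min(children, key=lambda c: c.q_hat - exploration_factor * c.bonus)


# Hypothetical values: "a" looks cheaper, "b" has the larger bonus.
children = [
    Child("a", q_hat=10.0, bonus=0.5),
    Child("b", q_hat=12.0, bonus=1.5),
]

# Without exploration the cheaper-looking child is chosen.
print(select_successor(children, exploration_factor=0.0).name)
# A larger exploration factor lets the bonus outweigh the cost gap.
print(select_successor(children, exploration_factor=4.0).name)
```

With an exploration factor of 0 the rule is purely greedy; raising it shifts the choice towards the child with the larger bonus.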

M. Helmert, T. Keller (Universität Basel) Planning and Optimization December 16, 2019 17 / 25

Bonus Term of UCB1

◮ use $B^k(c) = \sqrt{\frac{2 \cdot \ln N^k(d)}{N^k(c)}}$ as bonus term

◮ bonus term is derived from Chernoff-Hoeffding bound:

  ◮ gives the probability that a sampled value (here: $\hat{Q}^k(c)$)
  ◮ is far from its true expected value (here: $Q^\star(s(c), a(c))$)
  ◮ depending on the number of samples (here: $N^k(c)$)

◮ picks the optimal action exponentially more often than suboptimal actions

◮ concrete MCTS algorithm that uses UCB1 is called UCT
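
The link between the bonus term and the Chernoff-Hoeffding bound can be made explicit with a short derivation. It is a sketch under stated assumptions: the $n$ samples are i.i.d. with values in $[0, 1]$ (true in the MAB setting, only approximately so inside a search tree), the bonus is the standard UCB1 term $\sqrt{2 \ln N^k(d) / N^k(c)}$, and the exploration factor is 1. The symbols $\bar{X}_n$, $\mu$ and $\varepsilon$ are introduced here for illustration.

```latex
% One-sided Hoeffding bound for the mean \bar{X}_n of n i.i.d. samples in [0, 1]
% with expectation \mu:
\[
  P\left(\bar{X}_n - \mu \geq \varepsilon\right) \leq e^{-2 n \varepsilon^2}
\]
% Substitute n = N^k(c), \bar{X}_n = \hat{Q}^k(c), \mu = Q^\star(s(c), a(c))
% and \varepsilon = B^k(c) = \sqrt{2 \ln N^k(d) / N^k(c)}:
\[
  P\left(\hat{Q}^k(c) - B^k(c) \geq Q^\star(s(c), a(c))\right)
  \leq e^{-4 \ln N^k(d)} = N^k(d)^{-4}
\]
```

Under these assumptions, $\hat{Q}^k(c) - B^k(c)$ fails to be a lower bound on $Q^\star(s(c), a(c))$ with probability at most $N^k(d)^{-4}$, which shrinks quickly as $d$ is visited more often.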

Exploration Factor (1)

Exploration factor $E^k(d)$ serves two roles in SSPs:

◮ UCB1 designed for MAB with reward in [0, 1] ⇒ $\hat{Q}^k(c) \in [0, 1]$ for all k and c

◮ bonus term $B^k(c) = \sqrt{\frac{2 \cdot \ln N^k(d)}{N^k(c)}}$ always ≥ 0

◮ when d is visited,

  ◮ $B^{k+1}(c) > B^k(c)$ if a(c) is not selected
  ◮ $B^{k+1}(c) < B^k(c)$ if a(c) is selected

◮ if $B^k(c) \geq 2$ for some c, UCB1 must explore

◮ hence, $\hat{Q}^k(c)$ and $B^k(c)$ are always of similar size

⇒ in SSPs, where cost estimates are not confined to [0, 1], set $E^k(d)$ to a value that depends on $\hat{V}^k(d)$
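
The behaviour of the bonus term when d is visited can be checked numerically. The sketch below assumes the standard UCB1 bonus $\sqrt{2 \ln N^k(d) / N^k(c)}$; the function name `ucb1_bonus` and the visit counts are hypothetical.

```python
import math


def ucb1_bonus(parent_visits, child_visits):
    """Standard UCB1 bonus sqrt(2 * ln N(d) / N(c))."""
    return math.sqrt(2.0 * math.log(parent_visits) / child_visits)


# Hypothetical counts: d has been visited 10 times, its child c 3 times.
before = ucb1_bonus(10, 3)

# d is visited again, but a(c) is not selected: only N(d) grows.
not_selected = ucb1_bonus(11, 3)

# d is visited again and a(c) is selected: N(d) and N(c) both grow.
selected = ucb1_bonus(11, 4)

print(not_selected > before)  # bonus of a neglected child grows
print(selected < before)      # bonus of the selected child shrinks
```

The growth for neglected children is only logarithmic in the visits of d, while a single selection shrinks the bonus much faster, which is what keeps the bonus bounded in practice.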

Exploration Factor (2)

Exploration factor $E^k(d)$ serves two roles in SSPs:

◮ $E^k(d)$ allows adjusting the balance between exploration and exploitation

◮ search with $E^k(d) = \hat{V}^k(d)$ is very greedy

◮ in practice, $E^k(d)$ is often multiplied by a constant > 1

◮ UCB1 often requires a hand-tailored $E^k(d)$ to work well
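
The effect of multiplying the exploration factor by a constant can be illustrated with a small UCT-style selection sketch. It is not the authors' implementation: the function `uct_select`, its `constant` parameter, and all numbers are assumptions made for this example, with the standard UCB1 bonus and costs that are minimized.

```python
import math


def uct_select(children, parent_visits, v_hat, constant=1.0):
    """Pick the child name minimizing q_hat - E * bonus, with E = constant * v_hat.

    children maps a name to a (q_hat, visits) pair; estimates are costs.
    """
    exploration_factor = constant * v_hat

    def score(item):
        q_hat, visits = item[1]
        bonus = math.sqrt(2.0 * math.log(parent_visits) / visits)
        return q_hat - exploration_factor * bonus

    return min(children.items(), key=score)[0]


# Hypothetical node d with 100 visits and state-value estimate 10.8.
children = {"a": (10.0, 60), "b": (12.0, 40)}

print(uct_select(children, parent_visits=100, v_hat=10.8, constant=1.0))
print(uct_select(children, parent_visits=100, v_hat=10.8, constant=3.0))
```

In this example the unscaled factor keeps selecting the child with the lower cost estimate, while a constant of 3 moves the choice to the less-visited child. Suitable constants depend on the problem at hand.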