DM812 METAHEURISTICS

Lecture 2

Simulated Annealing

Marco Chiarandini

Department of Mathematics and Computer Science, University of Southern Denmark, Odense, Denmark

Simulated Annealing Convergence

Outline

- 1. Simulated Annealing

- 2. Convergence of Simulated Annealing

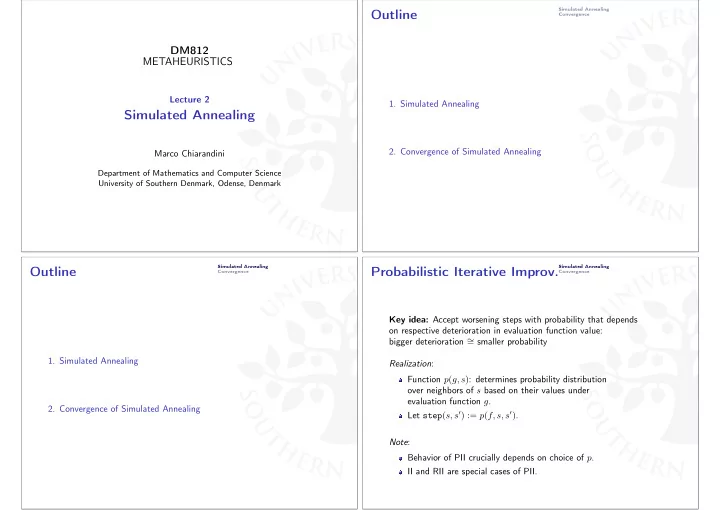

Probabilistic Iterative Improvement

Key idea: Accept worsening steps with a probability that depends on the respective deterioration in evaluation function value: bigger deterioration ≅ smaller probability

Realization: Function p(g, s) determines a probability distribution over the neighbors of s based on their values under