01/03/18 1

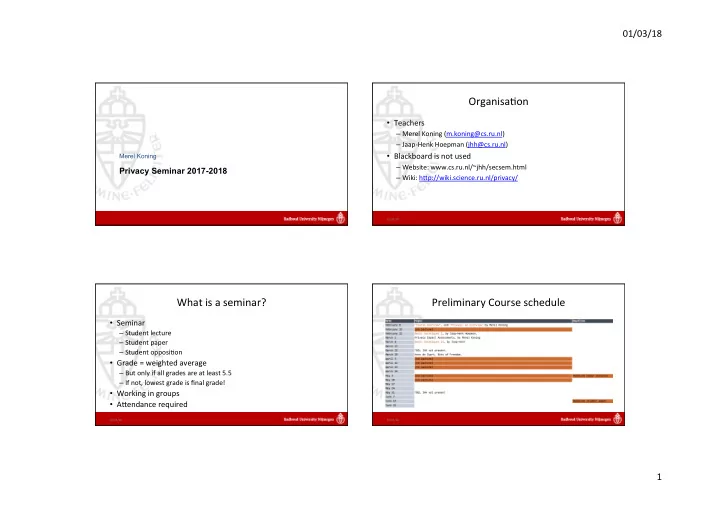

Privacy Seminar 2017-2018

Merel Koning


Organisation

- Teachers

  - Merel Koning (m.koning@cs.ru.nl)
  - Jaap-Henk Hoepman (jhh@cs.ru.nl)

- Blackboard is not used

  - Website: www.cs.ru.nl/~jhh/secsem.html
  - Wiki: http://wiki.science.ru.nl/privacy/


What is a seminar?

- Seminar

  - Student lecture
  - Student paper
  - Student opposition

- Grade = weighted average

  - But only if all grades are at least 5.5
  - If not, the lowest grade is the final grade!

- Working in groups

- Attendance required
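The grading rule on this slide (weighted average, unless some grade is below 5.5, in which case the lowest grade counts) can be sketched as a small function. This is only an illustration under stated assumptions: the slide does not give the weights, so `weights` is a hypothetical parameter, and the weighted average is assumed to be normalised by the sum of the weights. The function name `final_grade` is likewise made up for this sketch.

```python
def final_grade(grades, weights):
    """Sketch of the seminar grading rule (hypothetical helper).

    grades:  component grades, e.g. lecture, paper, opposition.
    weights: one positive weight per grade; the real weights are not
             given on the slide, so any values used here are assumptions.
    """
    if not grades or len(grades) != len(weights):
        raise ValueError("need exactly one weight per grade")

    lowest = min(grades)
    # If any component is below 5.5, the lowest grade is the final grade.
    if lowest < 5.5:
        return lowest

    # Otherwise: weighted average, normalised by the total weight (assumption).
    total_weight = sum(weights)
    return sum(g * w for g, w in zip(grades, weights)) / total_weight


# Example with equal (assumed) weights for lecture, paper and opposition.
print(final_grade([7.0, 8.0, 6.0], [1, 1, 1]))
# One component below 5.5, so that grade becomes the final grade.
print(final_grade([7.0, 5.0, 9.0], [2, 1, 1]))
```

Checking the 5.5 threshold before averaging means a single failing component can never be compensated by high grades elsewhere, which is the point of the rule on the slide.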


Preliminary course schedule
