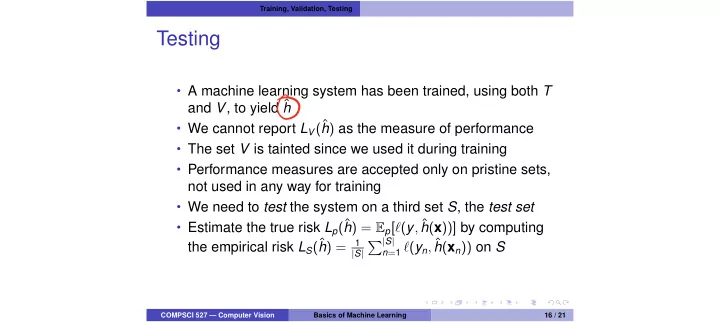

Training, Validation, Testing

Testing

- A machine learning system has been trained, using both $T$ and $V$, to yield $\hat{h}$

- We cannot report $L_V(\hat{h})$ as the measure of performance

- The set $V$ is tainted since we used it during training

- Performance measures are accepted only on pristine sets, not used in any way for training

- We need to test the system on a third set $S$, the test set

- Estimate the true risk $L_p(\hat{h}) = \mathbb{E}_p[\ell(y, \hat{h}(x))]$ by computing the empirical risk $L_S(\hat{h}) = \frac{1}{|S|} \sum_{n=1}^{|S|} \ell(y_n, \hat{h}(x_n))$ on $S$
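
The empirical risk on the test set can be sketched in a few lines of Python. This is a minimal illustration, not part of the original slides: the zero-one loss, the threshold predictor `h_hat`, and the four-sample test set `S` are all hypothetical stand-ins chosen so the arithmetic is easy to follow.

```python
from typing import Callable, Sequence, Tuple

# One sample is an (x, y) pair. A 1-D input with an integer label is an
# assumption made only for this illustration.
Sample = Tuple[float, int]


def zero_one_loss(y: int, y_hat: int) -> float:
    """Loss l(y, y_hat): 1.0 for a wrong prediction, 0.0 for a correct one."""
    return float(y != y_hat)


def empirical_risk(
    h: Callable[[float], int],
    S: Sequence[Sample],
    loss: Callable[[int, int], float] = zero_one_loss,
) -> float:
    """Average loss of predictor h over the samples in S, i.e. L_S(h)."""
    if not S:
        raise ValueError("S must contain at least one sample")
    return sum(loss(y, h(x)) for x, y in S) / len(S)


def h_hat(x: float) -> int:
    """Hypothetical trained predictor: thresholds the input at 0.5."""
    return int(x >= 0.5)


# Hypothetical test set S, assumed never to have been used for training
# or validation in any way.
S = [(0.1, 0), (0.4, 1), (0.6, 1), (0.9, 1)]

test_risk = empirical_risk(h_hat, S)
print(test_risk)  # one mistake out of four samples: 0.25
```

The same function applied to $V$ would compute $L_V(\hat{h})$, but that number is optimistically biased because $V$ influenced the choice of $\hat{h}$; only the value on the untouched set $S$ is an acceptable estimate of the true risk.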

COMPSCI 527 — Computer Vision Basics of Machine Learning 16 / 21