12/10/19

NLP with recurrent networks

Chapter 9 in Martin/Jurafsky

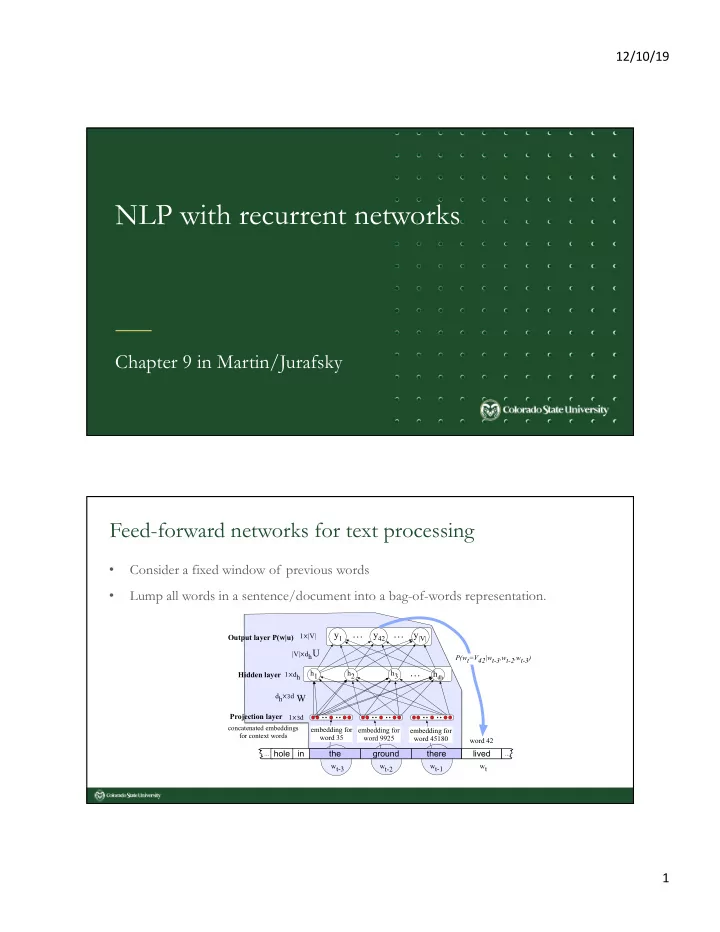

Feed-forward networks for text processing

- Consider a fixed window of previous words

- Lump all words in a sentence/document into a bag-of-words representation
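The two input strategies above can be sketched in a few lines of Python. The toy sentence, the `window_size` parameter, and the helper names `fixed_window` and `bag_of_words` are illustrative assumptions, not taken from the slides.

```python
from collections import Counter

def fixed_window(tokens, position, window_size=3):
    """Return the (up to) window_size words preceding tokens[position], in order."""
    start = max(0, position - window_size)
    return tokens[start:position]

def bag_of_words(tokens):
    """Return word counts; word order is discarded."""
    return Counter(tokens)

# Toy sentence (an assumption for illustration).
tokens = "in a hole in the ground there lived a hobbit".split()

context = fixed_window(tokens, position=5)  # the three words before "ground"
bag = bag_of_words(tokens)                  # counts over the whole sentence
```

The window keeps word order but sees only a few words; the bag sees every word but loses the order, so any reordering of the same tokens yields the same representation.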

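[Figure: feed-forward neural language model over a fixed window of three previous words. The embeddings of the context words wt-3, wt-2, wt-1 are concatenated into a projection layer (1⨉3d), multiplied by W (dh⨉3d) to give the hidden layer h1 … hdh (1⨉dh), then by U (|V|⨉dh) to give the output layer y1 … y|V| (1⨉|V|), which represents P(w|u). The example uses embeddings for words 35, 9925 and 45180 from the text "… in the hole … ground there lived …" and reads off P(wt=V42|wt-3,wt-2,wt-1) at output y42.]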
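The layer shapes in the figure can be traced with a minimal NumPy sketch of the forward pass. Several details here are assumptions rather than anything the slide states: the toy sizes `V`, `d`, `dh`, the random weights, the `tanh` hidden activation, the softmax output, the omission of bias terms, and the context word ids.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy sizes (assumed): vocabulary |V|, embedding size d, hidden size dh.
V, d, dh = 50, 8, 16

E = rng.normal(size=(V, d))        # embedding matrix, one row per word
W = rng.normal(size=(dh, 3 * d))   # hidden-layer weights, dh x 3d
U = rng.normal(size=(V, dh))       # output-layer weights, |V| x dh

def softmax(z):
    z = z - z.max()                # shift for numerical stability
    e = np.exp(z)
    return e / e.sum()

def next_word_distribution(context_ids):
    """Return P(w_t | w_{t-3}, w_{t-2}, w_{t-1}) for three context word ids."""
    x = np.concatenate([E[i] for i in context_ids])  # projection layer, 3d values
    h = np.tanh(W @ x)                               # hidden layer, dh values
    return softmax(U @ h)                            # output layer, |V| values

# Hypothetical context word ids; p[42] plays the role of P(w_t = V42 | context).
p = next_word_distribution([35, 9, 45])
```

Because `W` has exactly 3d columns, the network can only ever consume three context words; a longer history would need a differently shaped `W`.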