New Algorithms for Sparse Representation of Discrete Signals Based on $\ell_p$-$\ell_2$ Optimization

Jie Yan and Wu-Sheng Lu
Department of Electrical and Computer Engineering
University of Victoria, Victoria, BC, Canada
August 25, 2011

OUTLINE

1. INTRODUCTION
2. PRELIMINARIES
3. ALGORITHMS FOR $\ell_p$-$\ell_2$ OPTIMIZATION
4. SIMULATIONS
5. CONCLUSIONS

INTRODUCTION

Motivation

A central problem in sparse signal processing is to seek an approximate solution to an ill-posed linear system while requiring that the solution have the fewest nonzero entries.

Many of the applications lead to minimizing the following $\ell_1$-$\ell_2$ function:

$$F(s) = \|x - \Psi s\|_2^2 + \lambda \|s\|_1 .$$

$F(s)$ is globally convex and its global minimizer can be identified.

Motivation (cont'd)

For the $\ell_1$-$\ell_2$ problem, iterative-shrinkage algorithms have emerged as a family of highly effective numerical methods. Of particular interest is FISTA/MFISTA, a state-of-the-art algorithm developed by A. Beck and M. Teboulle.

Chartrand and Yin have proposed algorithms for $\ell_p$-$\ell_2$ minimization with $0 < p < 1$ and demonstrated improved performance relative to $\ell_1$-$\ell_2$ minimization.

Contribution

New algorithms for sparse representation based on $\ell_p$-$\ell_2$ optimization are proposed.

Our algorithms are built on MFISTA with several major changes. The soft-shrinkage step in MFISTA is replaced by a global solver for the minimization of a 1-D nonconvex $\ell_p$-$\ell_2$ problem.

Two efficient techniques for solving the 1-D $\ell_p$-$\ell_2$ problem are proposed.

PRELIMINARIES

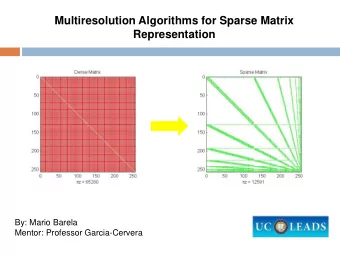

Sparse representations in overcomplete bases

A typical sparse representation problem can be stated as finding the sparsest representation of a discrete signal $x$ under a (possibly overcomplete) dictionary $\Psi$.

The problem can be described as minimizing $\|s\|_0$ subject to $x = \Psi s$ or $\|x - \Psi s\|_2 \le \epsilon$. Unfortunately, this problem is NP-hard.

A popular approach is to consider a relaxed, unconstrained convex $\ell_1$-$\ell_2$ problem:

$$\min_s F(s) = \|x - \Psi s\|_2^2 + \lambda \|s\|_1 .$$

Iterative shrinkage-thresholding algorithm (ISTA)

ISTA can be viewed as an extension of the classical gradient algorithm. Because of its simplicity, it is well suited to large-scale problems.

A key step in its $k$th iteration is to approximate $F(s)$ by an easy-to-handle convex upper bound (up to a constant):

$$\hat{F}(s) = \frac{L}{2}\|s - c_k\|_2^2 + \lambda \|s\|_1 .$$

The minimizer of $\hat{F}(s)$ is a soft shrinkage of the vector $c_k$ with a constant threshold $\lambda/L$: $s_k = T_{\lambda/L}(c_k)$.

ISTA provides a convergence rate of $O(1/k)$.
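As a small illustration of the soft-shrinkage operator $T_{\lambda/L}$ mentioned above, the following Python sketch applies it entrywise to a vector stored as a list. The function name `soft_shrink` and the list representation are assumptions made for this example only; they do not come from the slides.

```python
def soft_shrink(c, threshold):
    """Entrywise soft shrinkage: sign(c_i) * max(|c_i| - threshold, 0).

    `c` is a list of floats and `threshold` plays the role of lambda / L.
    """
    shrunk = []
    for ci in c:
        magnitude = abs(ci) - threshold
        if magnitude <= 0.0:
            # Entries no larger than the threshold are set exactly to zero.
            shrunk.append(0.0)
        else:
            # Larger entries are pulled toward zero by the threshold.
            shrunk.append(magnitude if ci > 0 else -magnitude)
    return shrunk
```

Entries with magnitude at most the threshold become exactly zero, which is how the $\ell_1$ term produces sparse iterates.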

FISTA and MFISTA

FISTA is built on ISTA with an extra step in each iteration. With the help of a sequence of scaling factors $t_k$, this step creates an auxiliary iterate $b_{k+1}$ by moving the current iterate $s_k$ along the direction $s_k - s_{k-1}$ so as to improve the subsequent iterate $s_{k+1}$.

Monotone FISTA (MFISTA) adds a further step to FISTA so that the objective value decreases monotonically.

FISTA and MFISTA possess a much improved convergence rate of $O(1/k^2)$.
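The FISTA iteration described above can be sketched in a few lines of Python. The slides do not spell out the update formulas, so this sketch assumes the standard form from Beck and Teboulle: $c_k = b_k - \nabla f(b_k)/L$ with $f(s) = \|x - \Psi s\|_2^2$, $t_{k+1} = (1 + \sqrt{1 + 4t_k^2})/2$, and $b_{k+1} = s_k + \frac{t_k - 1}{t_{k+1}}(s_k - s_{k-1})$. The helper names, the list-of-lists matrix format, and the use of the squared Frobenius norm as a simple upper bound on the Lipschitz constant are choices made for this example only. The monotone safeguard of MFISTA is not included.

```python
import math

def matvec(A, v):
    """Compute A v for a matrix stored as a list of rows."""
    return [sum(a * b for a, b in zip(row, v)) for row in A]

def rmatvec(A, v):
    """Compute A^T v for a matrix stored as a list of rows."""
    return [sum(A[i][j] * v[i] for i in range(len(A))) for j in range(len(A[0]))]

def soft_shrink(c, thr):
    """Entrywise soft shrinkage with threshold thr."""
    return [math.copysign(max(abs(ci) - thr, 0.0), ci) for ci in c]

def fista(Psi, x, lam, iters=200):
    """Sketch of FISTA for min_s ||x - Psi s||_2^2 + lam * ||s||_1."""
    n = len(Psi[0])
    # Assumption: 2 * ||Psi||_F^2 upper-bounds the Lipschitz constant of grad f.
    L = 2.0 * sum(a * a for row in Psi for a in row)
    s_prev = [0.0] * n
    b = s_prev[:]
    t = 1.0
    for _ in range(iters):
        residual = [yi - xi for yi, xi in zip(matvec(Psi, b), x)]
        grad = [2.0 * g for g in rmatvec(Psi, residual)]
        c = [bi - gi / L for bi, gi in zip(b, grad)]
        s = soft_shrink(c, lam / L)                      # shrinkage step
        t_next = (1.0 + math.sqrt(1.0 + 4.0 * t * t)) / 2.0
        beta = (t - 1.0) / t_next
        b = [si + beta * (si - spi) for si, spi in zip(s, s_prev)]  # auxiliary iterate
        s_prev, t = s, t_next
    return s_prev
```

With an identity dictionary the problem separates, so the result can be compared against direct soft shrinkage of $x$ with threshold $\lambda/2$.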

ALGORITHMS FOR $\ell_p$-$\ell_2$ OPTIMIZATION

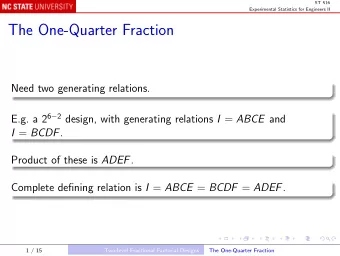

An interesting development in sparse representation and compressive sensing is to investigate a nonconvex variant of basis pursuit obtained by replacing the $\ell_1$-norm term with an $\ell_p$-norm term with $0 < p < 1$.

Naturally, an $\ell_p$-$\ell_2$ counterpart can be formulated as

$$\min_s F(s) = \|x - \Psi s\|_2^2 + \lambda \|s\|_p^p .$$

The algorithms we propose are developed within the framework of FISTA/MFISTA, in that each iterate is computed as

$$s_k = \arg\min_s \left\{ \frac{L}{2}\|s - c_k\|_2^2 + \lambda \|s\|_p^p \right\} \qquad (1)$$

With $0 < p < 1$, the setting is closer to the $\ell_0$-norm problem, hence an improved sparse representation is expected.

However, soft shrinkage fails to work because (1) is nonconvex.

The computation of $s_k$ reduces to solving $M$ one-dimensional (1-D) minimization problems, each of which has the form

$$s^* = \arg\min_s \left\{ u(s) = \frac{L}{2}(s - c)^2 + \lambda |s|^p \right\} . \qquad (2)$$

We propose two techniques to find the global solution of (2) with $0 < p < 1$.
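The reduction to $M$ scalar problems follows because both terms of the objective in (1) are sums over the entries of $s$. Writing $s_i$ and $c_{k,i}$ for the $i$th entries of $s$ and $c_k$ (index notation assumed here for illustration), the objective can be expanded as:

```latex
\frac{L}{2}\|s - c_k\|_2^2 + \lambda \|s\|_p^p
  = \sum_{i=1}^{M} \Bigl[ \frac{L}{2}\,(s_i - c_{k,i})^2 + \lambda\,|s_i|^p \Bigr]
```

Each bracketed term depends on a single entry $s_i$, so it can be minimized independently as an instance of (2) with $c = c_{k,i}$.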

Method 1: When $p$ is rational

Suppose $p = a/b$ with $a$, $b$ positive integers and $a < b$.

Let us first consider $s \ge 0$. With the substitution $s = z^b$, $z \ge 0$, the problem is converted to minimizing

$$v(z) = u(s)\big|_{s = z^b} = \frac{L}{2}(z^b - c)^2 + \lambda z^a ,$$

whose gradient is

$$\nabla v(z) = L b z^{2b-1} - L c b z^{b-1} + \lambda a z^{a-1} .$$

The global minimizer $z^*_+$ must be either 0 or one of the stationary points where $\nabla v(z) = 0$. The MATLAB function roots was applied to find all the roots of the polynomial $\nabla v(z)$.

After identifying $z^*_+$, we have $s^*_+ = (z^*_+)^b$ as the solution that minimizes $u(s)$ for $s \ge 0$.

Method 1: When $p$ is rational (cont'd)

In a similar way, the global minimizer $s^*_-$ that minimizes $u(s)$ for $s \le 0$ can be computed, and the global minimizer $s^*$ is obtained as

$$s^* = \arg\min_s \{ u(s) : s = s^*_+, \, s^*_- \} .$$

The above $\ell_p$ solver is incorporated into a FISTA/MFISTA-type algorithm. In each iteration, the computational complexity is $O(M(2b-1)^3)$.

The method works well whenever $p$ is rational with a small integer denominator, such as $p \in \{1/4, 1/3, 1/2, 2/3, 3/4\}$.
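A minimal Python sketch of Method 1 follows. The slides use MATLAB's roots; to stay within the Python standard library, this sketch swaps in a plain Durand-Kerner iteration as the polynomial root finder, which is a substitution and not the authors' implementation. It also divides $\nabla v(z)$ by $z^{a-1}$ before root finding, since $z = 0$ is already tested as a separate candidate. All function names are hypothetical.

```python
def poly_roots(coeffs, iters=500):
    """Approximate all complex roots by Durand-Kerner (stand-in for MATLAB roots).

    coeffs are ordered from the highest degree down, with coeffs[0] != 0.
    """
    n = len(coeffs) - 1
    monic = [ck / coeffs[0] for ck in coeffs]
    roots = [(0.4 + 0.9j) ** k for k in range(n)]  # customary starting points
    for _ in range(iters):
        for i in range(n):
            value = 0j
            for ck in monic:                       # Horner evaluation
                value = value * roots[i] + ck
            denom = 1 + 0j
            for j in range(n):
                if j != i:
                    denom *= roots[i] - roots[j]
            roots[i] -= value / denom
    return roots

def min_nonneg(c, L, lam, a, b):
    """Minimize (L/2)(s - c)^2 + lam * s^(a/b) over s >= 0 via s = z^b."""
    # grad v(z) / z^(a-1) = L b z^(2b-a) - L c b z^(b-a) + lam a
    coeffs = [0.0] * (2 * b - a + 1)
    coeffs[0] = L * b
    coeffs[b] = -L * c * b      # position b holds the coefficient of z^(b-a)
    coeffs[-1] = lam * a
    candidates = [0.0]
    for r in poly_roots(coeffs):
        if abs(r.imag) < 1e-8 and r.real > 0:   # keep real, positive roots only
            candidates.append(r.real ** b)
    return min(candidates, key=lambda s: 0.5 * L * (s - c) ** 2 + lam * s ** (a / b))

def solve_1d_rational(c, L, lam, a, b):
    """Global minimizer of u(s) = (L/2)(s - c)^2 + lam * |s|^(a/b)."""
    u = lambda s: 0.5 * L * (s - c) ** 2 + lam * abs(s) ** (a / b)
    s_plus = min_nonneg(c, L, lam, a, b)        # best point with s >= 0
    s_minus = -min_nonneg(-c, L, lam, a, b)     # best point with s <= 0 (mirror problem)
    return min([s_plus, s_minus], key=u)
```

The half-line $s \le 0$ is handled by mirroring, using the fact that $u(-s)$ with center $c$ equals the same function of $s$ with center $-c$. A production implementation would likely prefer a library root finder over the simple iteration used here.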

Method 2: When $p$ is an arbitrary real in $(0, 1)$

[Figure: plots of $a(s) = L(s - c)^2/2$, $b(s) = \lambda |s|^p$, and $u(s) = a(s) + b(s)$]

Let us examine the function to minimize, i.e.,

$$u(s) = \frac{L}{2}(s - c)^2 + \lambda |s|^p .$$

If $c = 0$, then $s^* = 0$. Next, we consider the case $c > 0$.

Method 2: When $p$ is an arbitrary real in $(0, 1)$ (cont'd)

It can be observed that the global minimizer $s^*$ lies in $[0, c]$, where the function of interest becomes

$$u(s) = \frac{L}{2}(s - c)^2 + \lambda s^p \quad \text{for } s \in [0, c] .$$

The convexity of $u(s)$ can be analyzed by examining the second-order derivative, i.e.,

$$u''(s) = L + \lambda p (p - 1) s^{p-2} .$$

Method 2: When $p$ is an arbitrary real in $(0, 1)$ (cont'd)

The inflection point at which $u''(s) = 0$ is

$$s_c = \left[ \frac{\lambda p (1 - p)}{L} \right]^{1/(2-p)} .$$

For $0 < s < s_c$, $u(s)$ is concave as $u''(s) < 0$; for $s > s_c$, $u(s)$ is convex as $u''(s) > 0$.
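The expression for $s_c$ follows from the second derivative $u''(s) = L + \lambda p (p - 1) s^{p-2}$ in one line of algebra, shown here as a worked step:

```latex
u''(s) = 0
\;\Longleftrightarrow\; L = \lambda p (1 - p)\, s^{p-2}
\;\Longleftrightarrow\; s^{2-p} = \frac{\lambda p (1 - p)}{L}
\;\Longleftrightarrow\; s_c = \Bigl[ \frac{\lambda p (1 - p)}{L} \Bigr]^{1/(2-p)}
```

As an illustrative check with values chosen only for this example, $L = 1$, $\lambda = 1$, and $p = 1/2$ give $s_c = 0.25^{2/3} \approx 0.397$.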

Case (a): $s_c \ge c$

[Figure: sketch of $u(s)$ with 0, $c$, and $s_c$ marked on the $s$-axis]

$u(s)$ is concave in $[0, c]$. As a result, $s^*$ must be either 0 or $c$. Namely,

$$s^* = \arg\min_s \{ u(s) : s = 0, \, c \} .$$

Case (b): $s_c < c$

[Figure: sketch of $u(s)$ with 0, $s_c$, and $c$ marked on the $s$-axis]

$u(s)$ is concave in $[0, s_c]$ and convex in $[s_c, c]$. We argue that $s^*$ must be either at the point $s_t$ that minimizes the convex function $u(s)$ in $[s_c, c]$, or at the boundary point 0.
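Cases (a) and (b) can be combined into a short Python sketch. This part of the slides does not say how $s_t$ is computed, so the sketch assumes bisection on $u'(s) = L(s - c) + \lambda p s^{p-1}$, which is increasing on $[s_c, c]$ because $u$ is convex there. Negative $c$ is handled by symmetry, which is also an assumption since only $c > 0$ is treated above. The function name `solve_1d` is hypothetical.

```python
def solve_1d(c, L, lam, p, iters=100):
    """Sketch: global minimizer of u(s) = (L/2)(s - c)^2 + lam * |s|^p, 0 < p < 1."""
    if c == 0:
        return 0.0
    # Assumption: for c < 0, solve the mirrored problem and flip the sign.
    sign, cm = (1.0, c) if c > 0 else (-1.0, -c)
    u = lambda s: 0.5 * L * (s - cm) ** 2 + lam * s ** p
    du = lambda s: L * (s - cm) + lam * p * s ** (p - 1)
    s_c = (lam * p * (1 - p) / L) ** (1 / (2 - p))   # inflection point of u
    if s_c >= cm:
        # Case (a): u is concave on [0, c], so only the endpoints matter.
        candidate = cm
    elif du(s_c) >= 0:
        # Case (b), u already increasing at s_c: convex-part minimizer is s_c.
        candidate = s_c
    else:
        # Case (b): u'(s_c) < 0 < u'(c), so bisection brackets the root s_t.
        lo, hi = s_c, cm
        for _ in range(iters):
            mid = 0.5 * (lo + hi)
            if du(mid) < 0:
                lo = mid
            else:
                hi = mid
        candidate = 0.5 * (lo + hi)
    # Compare the candidate against the boundary point 0.
    best = candidate if u(candidate) < u(0.0) else 0.0
    return sign * best
```

Bisection is used here only because it is simple and cannot leave the bracket; any 1-D convex minimizer on $[s_c, c]$ would serve the same purpose.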
