¹MIT ²National Taiwan University ³MIT-IBM Watson AI Lab

MCUNet: Tiny Deep Learning on IoT Devices

NeurIPS 2020 (spotlight)

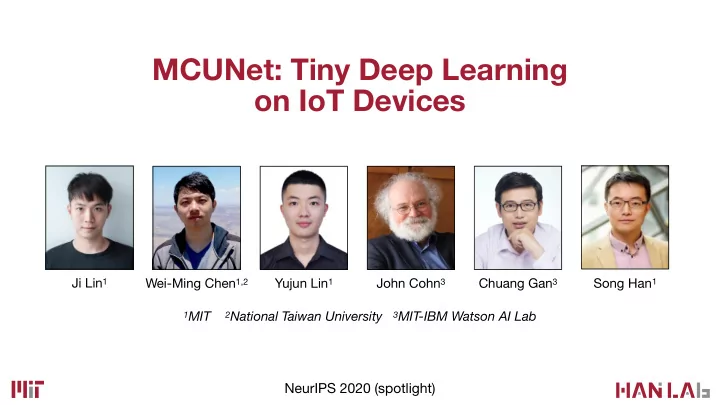

Ji Lin¹, Wei-Ming Chen¹,², John Cohn³, Yujun Lin¹, Song Han¹, Chuang Gan³

Background: The Era of AIoT on


[Figure: #Units (Billion) per year, 12 to 19F, axis 10 to 50.]

Smart Retail, Personalized Healthcare, Precision Agriculture, Smart Home, …

Memory (Activation) / Storage (Weights):

16GB / ~TB/PB
4GB / 256GB
320kB / 1MB

We need to reduce the peak activation size AND the model size to fit a DNN into MCUs.
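The two-sided constraint above can be written as a simple check. This is a minimal sketch, not part of the talk: the names `McuBudget` and `fits_on_mcu` are hypothetical, the 320kB / 1MB budget is the MCU figure quoted above, and the check ignores any memory the runtime itself needs.

```python
from dataclasses import dataclass

KB = 1024  # assumption: binary kilobytes


@dataclass
class McuBudget:
    """Hypothetical container for the two limits of a microcontroller."""
    sram_bytes: int   # memory: holds activations at run time
    flash_bytes: int  # storage: holds the weights


def fits_on_mcu(peak_activation_bytes, weight_bytes, budget):
    """Return True only if BOTH the activation and the weight limits hold."""
    return (peak_activation_bytes <= budget.sram_bytes
            and weight_bytes <= budget.flash_bytes)


# 320kB memory / 1MB storage, as quoted for the MCU above.
budget = McuBudget(sram_bytes=320 * KB, flash_bytes=1024 * KB)
```

A model that is small enough in weights but too large in peak activation still fails the check, which is why both quantities have to shrink together.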

[Figure: Param (MB) and Peak Activation (MB), axis 10 to 50, for ResNet-18, MobileNetV2-0.75 and MCUNet at ~70% ImageNet Top-1. Annotations: 4.6x, 1.8x.]

[Figure: Peak Memory (kB), axis 2000 to 8000, for ResNet-50, MobileNetV2 and MobileNetV2 (int8) against the 320kB constraint. Annotations: 22x, 23x, 5x.]

(a) Search NN model on an existing library, e.g., ProxylessNAS, MnasNet (figure labels: Library, NAS)

(b) Tune deep learning library given a NN model, e.g., TVM (figure labels: Library, NN Model)

(c) MCUNet: system-algorithm co-design

TinyNAS: Efficient Neural Architecture

TinyEngine: Efficient Compiler / Runtime

TinyNAS: Full Network Space → Optimized Search Space → Model Specialization, subject to Memory/Storage Constraints

Search dimensions: kernel size k=7, k=5, k=3; expansion e=6, e=4, e=2 (pw1, dw, pw2); depth d=4, d=3, d=2

Out of memory!

* Cai et al., Once-for-All: Train One Network and Specialize it for Efficient Deployment, ICLR’20

F412/F746/H743/… with 256kB/320kB/512kB/…

[Figure: Cumulative Probability (0% to 100%) vs. FLOPs (M) (25 to 65) for each design space. Legend: width-res. | mFLOPs, with p=80% (p0.8) marked.]

w0.3-r160 | 32.5
w0.4-r112 | 32.4
w0.4-r128 | 39.3
w0.4-r144 | 46.9
w0.5-r112 | 38.3
w0.5-r128 | 46.9
w0.5-r144 | 52.0
w0.6-r112 | 41.3
w0.7-r96 | 31.4
w0.7-r112 | 38.4

Marked points at p=80%: (32.3M, 80%), (45.4M, 80%), (50.3M, 80%). Best accuracies shown: 74.2%, 76.4%, 78.7%.

Good design space: likely to achieve high FLOPs under memory constraint. The low-FLOPs curve is labelled "Bad design space".
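The comparison of design spaces by their FLOPs distribution can be sketched as follows. This is an illustrative sketch only: `flops_at_percentile` and `rank_design_spaces` are hypothetical names, and the FLOPs lists are assumed to come from models already sampled from each space that satisfy the memory constraint. A space whose cumulative curve reaches p=80% at a higher FLOPs value is ranked first.

```python
import math


def flops_at_percentile(flops_samples, p=0.8):
    """Smallest FLOPs value f such that at least a fraction p of samples are <= f."""
    if not flops_samples:
        raise ValueError("need at least one sample")
    ordered = sorted(flops_samples)
    index = max(0, math.ceil(p * len(ordered)) - 1)
    return ordered[index]


def rank_design_spaces(spaces, p=0.8):
    """Rank design spaces by the FLOPs reached at cumulative probability p.

    `spaces` maps a name such as "w0.5-r144" to the FLOPs of models sampled
    from that space which fit the memory constraint (assumed given).
    """
    scored = {name: flops_at_percentile(flops, p) for name, flops in spaces.items()}
    return sorted(scored.items(), key=lambda item: item[1], reverse=True)
```

The design choice here is that no model is trained to compare spaces: only the FLOPs of feasible samples are inspected, which keeps the comparison cheap.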

Super Network: random sample (kernel size, expansion, depth), then jointly fine-tune multiple sub-networks

Elastic Kernel Size: start with full kernel size (7x7); a smaller kernel (5x5, 3x3) takes the centered weights (index central).

Elastic Depth: train unit i with full depth (O1, O2, O3), then shrink the depth; allow later layers in each unit to be skipped to reduce the depth.

Elastic Width: train with full width, then shrink the width; keep the most important channels when shrinking via channel sorting (example channel importance: 0.02, 0.15, 0.85, 0.63).
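The three elastic dimensions can be illustrated on plain Python lists. This is a minimal sketch under assumptions: the helper names are hypothetical, kernels are square 2-D lists, and channel importance is taken as given (the slides do not say how it is computed). It shows only the selection step, not the training procedure.

```python
def center_crop_kernel(kernel, size):
    """Elastic kernel size: take the centered size x size weights of a square kernel."""
    full = len(kernel)
    if size > full or (full - size) % 2:
        raise ValueError("size must not exceed the full size and must share its parity")
    start = (full - size) // 2
    return [row[start:start + size] for row in kernel[start:start + size]]


def shrink_depth(unit_layers, depth):
    """Elastic depth: keep the first `depth` layers of a unit, skip the later ones."""
    return unit_layers[:depth]


def keep_top_channels(importance, keep):
    """Elastic width: indices of the `keep` most important channels, best first."""
    order = sorted(range(len(importance)), key=lambda i: importance[i], reverse=True)
    return order[:keep]
```

With the importance values shown above (0.02, 0.15, 0.85, 0.63), keeping two channels selects the third and fourth channel.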

[Figure: Peak Memory for First Two Stages. Peak Mem (kB), axis 75 to 300, per block for MobileNetV2 and TinyNAS, with max and average marked. Annotations: 2.2x, 1.6x.]

TinyNAS gives a more even peak memory across blocks, allowing us to fit a larger model at the same amount of memory.

(a) Existing libraries based on runtime interpretation, e.g., TF-Lite Micro, CMSIS-NN

NN Model → Runtime: Interpret (Meta info. & Memory allocation, All Supported ops) → Inference

Overheads: Computation, Memory, Storage

(b) TinyEngine: Model-adaptive code generation

NN Model → Compile time (offline): Specialized ops, Memory schedule → Runtime: Inference
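The difference between interpreting a model at runtime and generating model-specific code offline can be sketched with a toy generator. Everything here is an assumption for illustration: the op table, the C prototypes and the `invoke` function are hypothetical and are not TinyEngine's actual output. The point is only that the emitted source declares the ops this model uses and contains a fixed call sequence instead of a dispatch loop.

```python
# Hypothetical op table: op name -> C prototype. A real library supports many more ops.
ALL_SUPPORTED_OPS = {
    "conv2d": "void conv2d(const int8_t *in, int8_t *out);",
    "depthwise_conv2d": "void depthwise_conv2d(const int8_t *in, int8_t *out);",
    "add": "void add(const int8_t *in, int8_t *out);",
    "avg_pool": "void avg_pool(const int8_t *in, int8_t *out);",
    "softmax": "void softmax(const int8_t *in, int8_t *out);",
}


def generate_inference_code(model_ops):
    """Emit C-like source that declares and calls only the ops in `model_ops`."""
    used = []
    for op in model_ops:
        if op not in ALL_SUPPORTED_OPS:
            raise KeyError(f"unsupported op: {op}")
        if op not in used:
            used.append(op)

    lines = ["#include <stdint.h>", ""]
    lines += [ALL_SUPPORTED_OPS[op] for op in used]  # unused ops are never emitted
    lines += ["", "void invoke(const int8_t *in, int8_t *out) {"]
    for index, op in enumerate(model_ops):
        # Buffer arguments are placeholders; a real generator would insert
        # the addresses chosen by the offline memory schedule.
        lines.append(f"    {op}(in, out);  /* layer {index} */")
    lines.append("}")
    return "\n".join(lines)
```

For a model made of `conv2d` and `depthwise_conv2d` layers, the generated source contains no `softmax` or `avg_pool` declaration, which is the storage saving the figure refers to.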

[Figure: Peak Mem (KB) ↓. Baseline: ARM CMSIS-NN at 160; with Code generation, 1.7x smaller; with In-place depth-wise, 3.4x smaller. Other bar values: 76, 48.]

[Figure: activation sizes across a block: 1x, 6x, 1x, with the peak mem marked.]

(a) Depth-wise convolution: separate Input activation and Output activation, channels 1 … N (Peak Mem: 2n)

(b) In-place depth-wise convolution: shared Input/output activation plus a Temp buffer; each channel is computed into the temp buffer and written back (Peak Mem: n+1)
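The in-place scheme works because each output channel of a depth-wise convolution depends only on the matching input channel. The sketch below is an illustration on nested lists, assuming a 3x3 kernel, stride 1 and zero padding; the function names are hypothetical. Only one extra channel-sized buffer is alive at a time, which matches the n+1 figure above.

```python
def depthwise_channel(channel, kernel):
    """3x3 depth-wise convolution of one HxW channel, stride 1, zero padding."""
    height, width = len(channel), len(channel[0])
    out = [[0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            acc = 0
            for ky in range(3):
                for kx in range(3):
                    yy, xx = y + ky - 1, x + kx - 1
                    if 0 <= yy < height and 0 <= xx < width:
                        acc += channel[yy][xx] * kernel[ky][kx]
            out[y][x] = acc
    return out


def depthwise_out_of_place(activation, kernels):
    """Separate input and output tensors: 2n channels are alive at the peak."""
    return [depthwise_channel(ch, k) for ch, k in zip(activation, kernels)]


def depthwise_in_place(activation, kernels):
    """Overwrite the input channel by channel: n channels plus one temp buffer."""
    for index, kernel in enumerate(kernels):
        temp = depthwise_channel(activation[index], kernel)  # temp buffer, 1 channel
        for y, row in enumerate(temp):
            activation[index][y][:] = row  # write back over the consumed input
    return activation
```

Both functions return the same values; only the peak number of live channels differs.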

Million MAC/s ↑

Baseline: ARM CMSIS-NN: 52
Code generation: 64 (eliminate runtime interpretation overhead)
Specialized Im2col: 70 (increase the data reuse)
Op fusion: 75 (Pad, Conv, ReLU, BN in one specialized kernel)
Loop unrolling: 79
Tiling: 82

Specialized loop unrolling/tiling according to network architecture.

[Figure: Im2col buffer x Weights = Output, with the tile size marked on the Im2col buffer and on the Output.]
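The Im2col buffer x Weights picture can be sketched for a single-channel image. This is a simplified illustration, not TinyEngine's kernel: it assumes stride 1, no padding and flattened k x k filters, and the `tile` parameter is a hypothetical stand-in for the tile size in the figure. The im2col buffer is built for one tile of output positions at a time, and each filter is reused across the whole tile.

```python
def patch(image, y, x, k):
    """Flatten the k x k window whose top-left corner is (y, x)."""
    return [image[y + dy][x + dx] for dy in range(k) for dx in range(k)]


def conv_im2col(image, filters, k, tile=4):
    """Convolve a 2-D image with flattened k*k filters via tiled im2col.

    Returns out[f][pos], where pos enumerates output positions row by row.
    """
    height, width = len(image), len(image[0])
    positions = [(y, x) for y in range(height - k + 1) for x in range(width - k + 1)]
    out = [[0] * len(positions) for _ in filters]
    for start in range(0, len(positions), tile):
        # Im2col buffer for this tile only: at most `tile` flattened patches.
        buffer = [patch(image, y, x, k) for y, x in positions[start:start + tile]]
        for f, weights in enumerate(filters):
            for j, column in enumerate(buffer):  # the same weights serve every column
                out[f][start + j] = sum(w * v for w, v in zip(weights, column))
    return out
```

A smaller `tile` lowers the buffer size at the cost of fewer reuses per loaded filter, which is the trade-off the tile size controls.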

Peak Mem (KB) ↓: 3.4x smaller than Baseline: ARM CMSIS-NN (Code generation, In-place depth-wise)

Million MAC/s ↑: 1.6x faster than Baseline: ARM CMSIS-NN (Code generation, Specialized Im2col, Op fusion, Loop unrolling, Tiling)

[Figure: Normalized Speed ↑ (0% to 100%) of TF-Lite Micro, MicroTVM Tuned, CMSIS-NN and TinyEngine on SmallCifar, MobileNetV2, ProxylessNAS and MnasNet. Annotations: 1.5x faster, 1.6x faster, 3x faster; OOM for some baselines.]

[Figure: Peak Mem (KB) ↓ (axis 46 to 230) for the same libraries and models. Annotations: 2.7x smaller, 3.1x smaller, 3.3x smaller, 4.8x smaller; OOM for some baselines.]

We focus on large-scale datasets to reflect real-life use cases. Datasets: (1) ImageNet-1000 (2) Wake Words

[Figure: bar values 40, 44, 56, 62.]

* scaled down version: width multiplier 0.3, input resolution 80

ImageNet Top-1 Accuracy (%), axis 50 to 75:

STM32F412 (256kB/1MB): 62.0
STM32F746 (320kB/1MB): 63.5
STM32F765 (512kB/1MB): 65.9
STM32H743 (512kB/2MB): 70.7

MobileNetV2+CMSIS-NN: 53.8 (+17% for MCUNet)

The first to achieve >70% ImageNet accuracy on commercial MCUs

[Figure: Param (MB) and Peak Activation (MB), axis 10 to 50, for ResNet-18, MobileNetV2-0.75 and MCUNet at ~70% ImageNet Top-1. Annotations: 4.6x and 1.8x; 24.6x and 13.8x.]

[Figure: VWW Accuracy (84 to 92) for MCUNet, MobileNetV2, ProxylessNAS and Han et al. (a) Trade-off: accuracy vs. measured latency, Latency (ms) from 440 to 2200, with 10FPS and 5FPS marked. (b) Trade-off: accuracy vs. peak memory, Peak SRAM (kB) from 50 to 500, with the 256kB constraint on MCU and OOM marked. Annotations: 3.4× faster, 2.4× faster, 3.7× smaller.]

[Figure: GSC Accuracy (88 to 96) for MCUNet, MobileNetV2 and ProxylessNAS. (a) Trade-off: accuracy vs. measured latency, Latency (ms) from 340 to 1700, with 10FPS and 5FPS marked. (b) Trade-off: accuracy vs. peak memory, Peak SRAM (kB) from 30 to 500, with the 256kB constraint and OOM marked. Annotations: 2.8× faster, 4.1× smaller, 2% higher.]

Project Page: http://tinyml.mit.edu