Machine-Assisted Proofs James Davenport (moderator) Bjorn Poonen - - PowerPoint PPT Presentation

Machine-Assisted Proofs James Davenport (moderator) Bjorn Poonen - - PowerPoint PPT Presentation

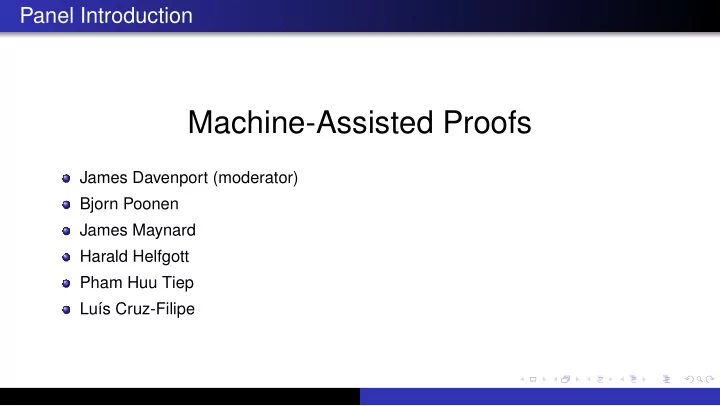

Panel Introduction Machine-Assisted Proofs James Davenport (moderator) Bjorn Poonen James Maynard Harald Helfgott Pham Huu Tiep Lu s Cruz-Filipe A (very brief, partial) history 1963 Solvability of Groups of Odd Order: 254 pages

A (very brief, partial) history

- 1963 “Solvability of Groups of Odd Order”: 254 pages [FT63]
- 1976 “Every Planar Map is Four-Colorable”: 256 pages + computation [AH76]
- 1989 Revised Four-Color proof published [AH89]
- 1998 Hales announced proof of Kepler Conjecture
- 2005 Hales’ proof published “uncertified” [Hal05]
- 2008 Gonthier stated formal proof of Four-Color [Gon08]
- 2012 Gonthier/Théry stated formal proof of Odd Order [GT12]
- 2013 Helfgott published (arXiv) proof of ternary Goldbach [Hel13]
- 2014 Flyspeck project announced formal proof of Kepler [Hal14]
- 2016 Maynard published “Large gaps between primes” [May16]
- 2017 Flyspeck paper published [HAB+17]

A few, partial, topics

What are the implications for

- authors
- journals/publishers
- the refereeing process
- readers

Discussion panel: Machine-assisted proofs

Bjorn Poonen, MIT

(with thanks to my colleague Andrew V. Sutherland)

August 7, 2018


Kinds of machine assistance

- Experimental mathematics: Humans design experiments for the computers to carry out, in order to discover or test new conjectures.
- Human/machine collaborative proofs: Humans reduce a proof to a large number of cases, or to a detailed computation, which the computer carries out.
- Formal proof verification: Humans supply the steps of a proof in a language such that the computer can verify that each step follows logically from previous steps.
- Formal proof discovery: The computer searches for a chain of deduction leading from provided axioms to a theorem.


Really big computations

Cloud computing services make it possible to do computations much larger than most people would be able to do with their own physical computers.

Example: My colleague Andrew Sutherland at MIT did a 300 core-year computation in 8 hours using (a record) 580,000 cores of the Google Compute Engine for about $20,000. As he says, “Having a computer that is 10 times as fast doesn’t really change the way you do your research, but having a computer that is 10,000 times as fast does.”


Large databases


Refereeing computations

Almost all math journals now publish papers depending on machine computations.

- Should papers involving machine-assisted proofs be viewed as more or less suspect than purely human ones?
- What if the referee is not qualified to check the computations, e.g., by redoing them?
- What if the computations are too expensive to do more than once?
- What if the computation involves proprietary software (e.g., MAGMA) for which there is no direct way to check that the algorithms do what they claim to do?
- Should one insist on open-source software, or even software that has gone through a peer-review process (as in Sage, for instance)?
- In what form should computational proofs be published?


Some opinions

- The burden should be on authors to provide understandable and verifiable code, just as the burden is on authors to make their human-generated proofs understandable and verifiable.
- Ideally, code should be written in a high-level computer algebra package whose language is close to mathematics, to minimize the computer proficiency demands on a potential reader.


Introduction

James Maynard

Professor of number theory, University of Oxford, UK


Opportunities and challenges of use of machines

The use of computers in mathematics is widespread and likely to increase.

This presents several opportunities:

- Personal assistant: Guiding intuition, checking hypotheses
- Theorem proving: Large computations, checking many cases
- Theorem checking: Formal verification

But also several challenges:

- Are computations rigorous?
- Are proofs with computation understandable to humans?
- How do we find errors?


My use of computation

I use computation daily to guide my proofs and intuition. Two special cases of computations in my proofs:

1. Want to understand the spectrum of an infinite-dimensional operator. Consider a well-chosen finite-dimensional subspace and do explicit computations there.
   - Do non-rigorous computation to guess good answers, then compute answers using exact arithmetic.
   - Happy trade-off between quality of numerical result and computation time.
2. After doing theoretical manipulations, show that some messy explicit integral is less than 1.
   - My computations are non-rigorous!
   - Very difficult to referee: many potential sources for error.
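The "guess numerically, then certify exactly" pattern from the first case can be illustrated on a toy problem. The sketch below is only an assumption about what such a workflow might look like, not the speaker's code: a small symmetric integer matrix stands in for the operator restricted to a finite-dimensional subspace, floating-point power iteration supplies a guess, and the Rayleigh quotient of a rational rounding of that guess is then computed exactly, which gives a rigorous lower bound for the largest eigenvalue. The names `power_iteration` and `exact_rayleigh_quotient` are hypothetical.

```python
from fractions import Fraction


def power_iteration(matrix, steps=50):
    """Floating-point guess for the dominant eigenvector (non-rigorous)."""
    n = len(matrix)
    v = [1.0] * n
    for _ in range(steps):
        w = [sum(matrix[i][j] * v[j] for j in range(n)) for i in range(n)]
        norm = max(abs(x) for x in w)
        v = [x / norm for x in w]
    return v


def exact_rayleigh_quotient(matrix, guess, denominator=10**6):
    """Exact value of (v^T A v) / (v^T v) for a rational rounding v of the guess.

    For a symmetric matrix A this is a rigorous lower bound for the largest
    eigenvalue, no matter how good or bad the guess was.
    """
    n = len(matrix)
    v = [Fraction(round(x * denominator), denominator) for x in guess]
    av = [sum(Fraction(matrix[i][j]) * v[j] for j in range(n)) for i in range(n)]
    return sum(v[i] * av[i] for i in range(n)) / sum(x * x for x in v)


# Toy symmetric matrix; its largest eigenvalue is 2 + sqrt(2).
A = [[2, 1, 0], [1, 2, 1], [0, 1, 2]]
lower_bound = exact_rayleigh_quotient(A, power_iteration(A))
print(float(lower_bound))
```

The floating-point step only affects how sharp the bound is; the exact step is what a referee would need to check.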


Machine-assisted proofs: experiences from analytic number theory

Harald Andrés Helfgott

CNRS/Göttingen

August 2018


The varieties of machine assistance. Main issues.

When we say “machine-assisted”, we may mean any of several related things:

1. Exploratory work
2. As part of the proof: rigorous computations/casework
3. Production and verification of formal proofs

Some possible issues:

1. What is a rigorous computation?
2. Are computer errors at all likely?
3. How do we reduce programming errors, or errors in machine/human interaction?
4. How do we check for errors in proofs altogether? Can computers help?
5. What is the meaning and purpose of a proof, for us, as mathematicians?


Rigorous computations

Rigorous computations with real numbers must take into account rounding errors. Moreover, a generic element of $\mathbb{R}$ cannot even be represented in bounded space.

Interval arithmetic provides a way to keep track of rounding errors automatically, while providing data types for work in $\mathbb{R}$ and $\mathbb{C}$.

- The basic data type is an interval $[a, b]$, where $a, b \in \mathbb{Q}$. Of course, rationals can be stored in a computer. Best if of the form $n/2^k$.
- A procedure is said to implement a function $f : \mathbb{R}^k \to \mathbb{R}$ if, given $B = ([a_i, b_i])_{1 \le i \le k} \subset \mathbb{R}^k$, it returns an interval in $\mathbb{R}$ containing $f(B)$.
- First proposed in the late 1950s. Several commonly used open-source implementations. (The package ARB implements a variant, ball arithmetic.)
- Cost: multiplies running time by (very roughly) $\sim 10$ (depending on the implementation).
- Example of a large-scale application: D. Platt’s verification of the Riemann Hypothesis for zeroes with imaginary part $\le 1.1 \cdot 10^{11}$.

Note: It is possible to avoid interval arithmetic and keep track of rounding errors by hand. Doing so would save computer time at the expense of human time, and would create one more
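To make the interval data type concrete, here is a minimal sketch in Python, assuming exact rational endpoints that are rounded outward to the form $n/2^k$ after every operation. The names `Interval`, `round_down`, `round_up` and `PRECISION_BITS` are illustrative only; they are not the API of ARB or of any other interval package, and a real implementation would cover many more operations.

```python
import math
from fractions import Fraction

PRECISION_BITS = 20  # endpoints are kept in the form n / 2**PRECISION_BITS


def round_down(q):
    """Largest dyadic rational n / 2**PRECISION_BITS that is <= q."""
    return Fraction(math.floor(q * 2**PRECISION_BITS), 2**PRECISION_BITS)


def round_up(q):
    """Smallest dyadic rational n / 2**PRECISION_BITS that is >= q."""
    return Fraction(math.ceil(q * 2**PRECISION_BITS), 2**PRECISION_BITS)


class Interval:
    """Closed interval [lo, hi] with dyadic endpoints, always rounded outward."""

    def __init__(self, lo, hi):
        self.lo = round_down(Fraction(lo))
        self.hi = round_up(Fraction(hi))

    def __add__(self, other):
        return Interval(self.lo + other.lo, self.hi + other.hi)

    def __mul__(self, other):
        products = [a * b for a in (self.lo, self.hi) for b in (other.lo, other.hi)]
        return Interval(min(products), max(products))

    def contains(self, q):
        return self.lo <= Fraction(q) <= self.hi


# 1/3 is not dyadic, so it is stored as a tiny interval around 1/3.
third = Interval(Fraction(1, 3), Fraction(1, 3))
result = third * third + third  # must enclose 1/9 + 1/3 = 4/9
print(float(result.lo), float(result.hi))
```

Each operation returns an interval that contains every possible true value, which is the "implements a function" property stated above, here for addition and multiplication.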


Rigorous computations, II: comparisons. Maxima and minima

Otherwise put: making “proof by graph” rigorous.

- Comparing functions in exploratory work: $f(x) \le g(x)$ for $x \in [0, 1]$ because a plot tells me so.
- Comparing functions in a proof: must prove that $f(x) - g(x) \le 0$ for $x \in [0, 1]$.

Can let the computer prove this fact! Bisection method and interval arithmetic. Look at derivatives if necessary.

Similarly for: locating maxima and minima. Interval arithmetic particularly suitable.
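A minimal sketch of the bisection idea, under simplifying assumptions: the function is $h(x) = x(1 - x) - c$ (so $f(x) = x(1-x)$ and $g(x) = c$), all arithmetic is exact rational arithmetic, and the interval upper bound is worked out by hand for this particular $h$. The names `prove_nonpositive` and `make_upper_bound` are hypothetical. A result of `False` means "not proved at this depth", not "false".

```python
from fractions import Fraction


def prove_nonpositive(upper_bound, a, b, max_depth=30):
    """Return True if bisection certifies h <= 0 on [a, b]; False means undecided.

    upper_bound(a, b) must return a rigorous upper bound for h on [a, b].
    """
    if upper_bound(a, b) <= 0:
        return True
    if max_depth == 0:
        return False
    mid = (a + b) / 2
    return (prove_nonpositive(upper_bound, a, mid, max_depth - 1)
            and prove_nonpositive(upper_bound, mid, b, max_depth - 1))


def make_upper_bound(c):
    """Interval upper bound for h(x) = x(1 - x) - c on [a, b] inside [0, 1]."""
    def upper_bound(a, b):
        # On [a, b] we have 0 <= x <= b and 0 <= 1 - x <= 1 - a.
        return b * (1 - a) - c
    return upper_bound


# x(1 - x) <= 3/10 on [0, 1] is true (the maximum is 1/4) and gets certified.
proved = prove_nonpositive(make_upper_bound(Fraction(3, 10)), Fraction(0), Fraction(1))
# x(1 - x) <= 1/5 on [0, 1] is false, so no certificate is found.
undecided = prove_nonpositive(make_upper_bound(Fraction(1, 5)), Fraction(0), Fraction(1))
print(proved, undecided)
```

The crude bound overestimates $h$ on wide intervals, which is why subdivision is needed; on narrow intervals the overestimate shrinks with the width.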


Rigorous computations, III: numerical integration

$$\int_0^1 F(x)\,dx = \,?$$

It is not enough to increase the “precision” in Sagemath/Mathematica/Maple until the result seems to converge.

Rigorous quadrature: use trapezoid rule, or Simpson’s rule, or Euler-Maclaurin, etc., together with interval arithmetic, using bounds on derivatives of $F$ to bound the error. What if $F$ is pointwise not differentiable?

Should be automatic; as of the dark days of 2018, some ad-hoc work still needed. Even more ad-hoc work needed for complex integrals.

Example: for $P_r(s) = \prod_{p \le r} (1 - p^{-s})$ and $R$ the straight path from $200i$ to $40000i$,

$$\frac{1}{\pi i} \int_R \frac{|P(s + 1/2) P(s + 1/4)| \, |\zeta(s + 1/2)| \, |\zeta(s + 1/4)|}{|s|^2} \, |ds| = 0.009269 + \text{error},$$

where $|\text{error}| \le 3 \cdot 10^{-6}$.

Method: e-mail ARB’s author (F. Johansson). To change the path or integrand, edit the code he sends you. Run overnight.
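For a smooth real integrand, the rigorous-quadrature recipe (a quadrature rule plus a derivative bound) can be sketched as follows. This is an illustrative toy under stated assumptions, not the ARB-based method used for the complex example: the integrand is $F(x) = 1/(1+x)$ on $[0, 1]$, evaluated in exact rational arithmetic so that no rounding occurs, and the bound $|F''(x)| \le 2$ on $[0, 1]$ is supplied by hand. The function name `trapezoid_enclosure` is hypothetical.

```python
from fractions import Fraction


def trapezoid_enclosure(f, a, b, n, second_derivative_bound):
    """Return (lo, hi) with lo <= integral of f over [a, b] <= hi.

    Uses the composite trapezoid rule with n subintervals and the classical
    error bound (b - a)^3 * max|f''| / (12 n^2).
    """
    h = (b - a) / n
    total = (f(a) + f(b)) / 2 + sum(f(a + i * h) for i in range(1, n))
    approx = h * total
    error = (b - a) ** 3 * second_derivative_bound / (12 * n**2)
    return approx - error, approx + error


def F(x):
    return 1 / (1 + x)


# F''(x) = 2 / (1 + x)^3, so |F''| <= 2 on [0, 1].
lo, hi = trapezoid_enclosure(F, Fraction(0), Fraction(1), 100, Fraction(2))
print(float(lo), float(hi))  # an enclosure of log 2
```

With floating-point evaluation instead of exact rationals, the same scheme would need interval arithmetic to absorb the rounding errors in each evaluation of $F$.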


Algorithmic complexity. Computations and asymptotic analysis

Whether an algorithm runs in time $O(N)$, $O(N^2)$ or $O(N^3)$ is much more important (for large $N$) than its implementation.

Why large $N$? Often, methods from analysis show: $\text{expression}(n) = f(n) + \text{error}$, where $|\text{error}| \le g(n)$ and $g(n) \ll |f(n)|$, but only for $n$ large. For $n \le N$, we compute $\text{expression}(n)$ instead. That can establish a strong, simple bound valid for all $n \le N$.

Example: let $m(n) = \sum_{k \le n} \mu(k)/k$. Then

$$|m(n)| \le \frac{0.0144}{\log n}$$

for $n \ge 96955$ (Ramaré). A C program using interval arithmetic gives

$$|m(n)| \le \sqrt{2/n}$$

for all $0 < n \le 10^{14}$. An algorithm running in time almost linear in $N$ for all $n \le N$ is obvious here; it is not so in general. The limitation can be running time or accuracy.
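The "obvious" almost-linear algorithm for this example is a sieve for $\mu$ followed by a running sum. The sketch below is a small-scale illustration only, not the C program mentioned above: it uses exact rational arithmetic instead of interval arithmetic, which is far too slow for $10^{14}$ but removes any rounding question, and it tests the squared form $m(n)^2 \cdot n \le 2$ to avoid square roots. The names `mobius_sieve` and `check_bound` are hypothetical.

```python
from fractions import Fraction


def mobius_sieve(limit):
    """Return a list mu with mu[k] the Moebius function for 1 <= k <= limit."""
    mu = [1] * (limit + 1)
    is_prime = [True] * (limit + 1)
    for p in range(2, limit + 1):
        if is_prime[p]:
            for multiple in range(p, limit + 1, p):
                if multiple > p:
                    is_prime[multiple] = False
                mu[multiple] = -mu[multiple]  # one more prime factor
            for multiple in range(p * p, limit + 1, p * p):
                mu[multiple] = 0  # not squarefree
    return mu


def check_bound(limit):
    """Check m(n)^2 * n <= 2, i.e. |m(n)| <= sqrt(2/n), exactly for 1 <= n <= limit."""
    mu = mobius_sieve(limit)
    m = Fraction(0)
    for n in range(1, limit + 1):
        m += Fraction(mu[n], n)
        if m * m * n > 2:
            return False
    return True


verified = check_bound(2000)
print(verified)
```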


Errors

In practice, computer errors are barely an issue, except perhaps for very large computations.

Obvious approach: just run (or let others run) the same computation again, and again, possibly on different software/hardware. Doable unless many months of CPU time are needed. That is precisely the case when the probability of computer error may not be microscopic :( .

Almost all of the time, the actual issue is human error: errors in programming, errors in input/output.

Humans also make mistakes when they don’t use computers! Conceptual mistakes, silly errors, especially in computations or tedious casework.

How to avoid them?


Avoiding errors: my current practice

- Program a great deal at first, but try to minimize the number of programs in the submitted version, and keep them short.
- Time- and space-intensive computations: a few programs in C, available on request.
- Small computations: Sagemath/Python code, largely included in the TeX source of the book. Short and readable.

Thanks to SageTeX: (a) input-output automated, (b) code can be displayed in text by switching a TeX flag. Runs afresh whenever TeX file is compiled. Thus human errors in copying input/output and updating versions are minimized. Example:

Hence, $f(x)\leq \sage{result1 + result2}$.

Is it right to use a computer-algebra system in a proof? Sagemath is both open-source and highly modular. We depend only on the correctness of the (small) parts of it that we use in the proof. In my case: Python + basic computer algebra + ARB.


Advanced issues, I

Automated proofs Some small parts of mathematics are complete theories, in the sense of logic. Example: theory of real closed fields. QEPCAD, please prove that, 0 < x ≤ y1, y2 < 1 with y2

1 ≤ x, y2 2 ≤ x,

1 + y1y2

(1 − y1 + x)(1 − y2 + x) ≤ (1 − x3)2(1 − x4) (1 − y1y2)(1 − y1y2

2)(1 − y2 1y2).

(1) QEPCAD crashes. Show checking cases y1 = y2, yi = √x or yi = x is enough; then QEPCAD does fine. Computational complexity (dependence of running time on number of variables and degree) has to be very bad (exponential), and is currently much worse than that. Again, only some small areas of math accept this treatment (G¨

- del!).

Advanced issues, I

Automated proofs

Some small parts of mathematics are complete theories, in the sense of logic. Example: theory of real closed fields.

QEPCAD, please prove that, for $0 < x \leq y_1, y_2 < 1$ with $y_1^2 \leq x$, $y_2^2 \leq x$,

$$1 + \frac{y_1 y_2}{(1 - y_1 + x)(1 - y_2 + x)} \leq \frac{(1 - x^3)^2 (1 - x^4)}{(1 - y_1 y_2)(1 - y_1 y_2^2)(1 - y_1^2 y_2)}. \qquad (1)$$

QEPCAD crashes. Show that checking the cases $y_1 = y_2$, $y_i = \sqrt{x}$ or $y_i = x$ is enough; then QEPCAD does fine.

Computational complexity (dependence of running time on number of variables and degree) has to be very bad (exponential), and is currently much worse than that. Again, only some small areas of math accept this treatment (Gödel!).

One such lemma in v1 of ternary Goldbach, zero in current version.
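Before handing an inequality like (1) to a quantifier-elimination tool, it can help to sanity-check it on a grid with exact rational arithmetic. The sketch below is only that: a finite check, not a proof, and it assumes the reading of (1) with the hypotheses $y_1^2 \leq x$, $y_2^2 \leq x$. The function names and the grid size are illustrative choices, not part of the talk.

```python
from fractions import Fraction
from itertools import product


def lhs(x, y1, y2):
    """Left-hand side of inequality (1)."""
    return 1 + (y1 * y2) / ((1 - y1 + x) * (1 - y2 + x))


def rhs(x, y1, y2):
    """Right-hand side of inequality (1)."""
    num = (1 - x**3) ** 2 * (1 - x**4)
    den = (1 - y1 * y2) * (1 - y1 * y2**2) * (1 - y1**2 * y2)
    return num / den


def violations(n=12):
    """Return the admissible grid points k/n at which (1) fails."""
    grid = [Fraction(k, n) for k in range(1, n)]
    bad = []
    for x, y1, y2 in product(grid, repeat=3):
        # Hypotheses of (1): 0 < x <= y1, y2 < 1 and y1^2 <= x, y2^2 <= x.
        admissible = x <= y1 and x <= y2 and y1**2 <= x and y2**2 <= x
        if admissible and lhs(x, y1, y2) > rhs(x, y1, y2):
            bad.append((x, y1, y2))
    return bad


if __name__ == "__main__":
    print("violations on the grid:", violations())
```

Exact fractions matter here because (1) is sharp: on the diagonal $y_1 = y_2 = x$ both sides equal $1 + x^2$, so floating-point rounding could report spurious failures.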

Advanced issues, II

Formal proofs

Or, what we call a proof when we study logic, or argue with philosophers: a sequence of symbols whose “correctness” can be checked by a monkey grinding an organ (nowadays called “computer”).

Do we ever use formal proofs in practice? Can we? Well –

Now sometimes possible thanks to proof assistants (Isabelle/HOL, Coq, ...): Humans and computers work together to make a proof (in our day-to-day sense) into a formal proof. Notable success: v2 of Hales’s sphere-packing theorem.

Only some areas of math are covered so far. My perception: some time will pass before complex analysis, let alone analytic number theory, can be done in this way. Time will tell what is practical.
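To give a feel for what such a machine-checkable "sequence of symbols" looks like, here is a toy sketch in Lean 4. Lean is an assumption here; the talk names Isabelle/HOL and Coq, where the same statements would look similar. The theorem names are made up for illustration and only the core library is used.

```lean
-- Swapping the two halves of a conjunction: the proof term is checked
-- mechanically against the stated type.
theorem and_swap_example (p q : Prop) (h : p ∧ q) : q ∧ p :=
  ⟨h.2, h.1⟩

-- A computation accepted by definitional unfolding.
theorem two_add_two_example : 2 + 2 = 4 := rfl
```

The gap between toy statements like these and analytic number theory is exactly the issue raised above.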

Machine-Assisted Proofs in Group Theory and Representation Theory

Pham Huu Tiep

Rutgers/VIASM

Rio de Janeiro, Aug. 7, 2018

A typical outline of many proofs

Mathematical induction

A modified strategy:

- Goal: Prove a statement concerning a (finite or algebraic) group G
- Reduce to the case of (almost quasi) simple G, using perhaps CFSG
- If G is large enough: A uniform proof
- If G is small: “Induction base”

In either case, induction base usually needs a different treatment

An example: the Ore conjecture

Conjecture (Ore, 1951). Every element g in any finite non-abelian simple group G is a commutator, i.e. can be written as $g = xyx^{-1}y^{-1}$ for some x, y ∈ G.

Partial important results: Ore/Miller, R. C. Thompson, Neubüser-Pahlings-Cleuvers, Ellers-Gordeev

Theorem (Liebeck-O’Brien-Shalev-T, 2010). Yes!

Even building on previous results, the proof of this LOST-theorem is still 70 pages long.

Proof of the Ore conjecture

How does this proof go? A detailed account: Malle’s 2013 Bourbaki seminar

Relies on:

Lemma (Frobenius character sum formula). Given a finite group G and an element g ∈ G, the number of pairs (x, y) ∈ G × G such that $g = xyx^{-1}y^{-1}$ is

$$|G| \cdot \sum_{\chi \in \mathrm{Irr}(G)} \frac{\chi(g)}{\chi(1)}.$$
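The Frobenius formula can be checked by brute force on a very small group. The sketch below uses the symmetric group $S_3$, which is of course not simple and is chosen here only because its three irreducible characters (trivial, sign, and "fixed points minus one") are easy to write down. All function names are illustrative.

```python
from fractions import Fraction
from itertools import permutations

G = list(permutations(range(3)))  # the symmetric group S_3 as tuples


def mul(p, q):
    """Composition p∘q: apply q first, then p."""
    return tuple(p[q[i]] for i in range(len(q)))


def inv(p):
    """Inverse permutation."""
    out = [0] * len(p)
    for i, image in enumerate(p):
        out[image] = i
    return tuple(out)


def commutator(x, y):
    """x y x^-1 y^-1."""
    return mul(mul(x, y), mul(inv(x), inv(y)))


def sign(p):
    """Sign of a permutation, via its number of inversions."""
    n = len(p)
    inversions = sum(p[i] > p[j] for i in range(n) for j in range(i + 1, n))
    return -1 if inversions % 2 else 1


def irreducible_characters(g):
    """Values at g of the irreducible characters of S_3: trivial, sign, standard."""
    fixed_points = sum(1 for i, image in enumerate(g) if i == image)
    return [1, sign(g), fixed_points - 1]


def count_by_formula(g):
    """|G| * sum over chi of chi(g)/chi(1)."""
    degrees = irreducible_characters(tuple(range(3)))  # chi(1) for each chi
    values = irreducible_characters(g)
    return len(G) * sum(Fraction(v, d) for v, d in zip(values, degrees))


def count_by_brute_force(g):
    """Number of pairs (x, y) in G x G with [x, y] = g."""
    return sum(1 for x in G for y in G if commutator(x, y) == g)


if __name__ == "__main__":
    for g in G:
        print(g, count_by_formula(g), count_by_brute_force(g))
```

In particular the formula gives 0 for a transposition, matching the fact that commutators are even permutations. Proving that g is a commutator amounts to showing that the character sum is nonzero.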

Checking induction base. I

G one of the groups in the induction base

- If the character table of G is available: Use Lemma 3
- If the character table of G is not available, but G is not too large: Construct the character table of G and proceed as before.
  - Start with a nice presentation or representation of G
  - Produce enough characters of G to get $\mathbb{Z}\mathrm{Irr}(G)$
  - Use LLL-algorithm to get the irreducible ones.
  - Unger’s algorithm, implemented in MAGMA
- But some G, like $\mathrm{Sp}_{10}(\mathbb{F}_3)$, $\Omega_{11}(\mathbb{F}_3)$, or $\mathrm{U}_6(\mathbb{F}_7)$ are still too big for this computation.

Checking induction base. II

For the too-big G in the induction base: Given g ∈ G. Run a randomized search for y ∈ G such that y and gy are conjugate. Thus, $gy = xyx^{-1}$ for some x ∈ G, i.e. g = [x, y]. Done!
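The randomized search can be illustrated on the alternating group $A_5$, the smallest non-abelian simple group. This is a toy sketch under strong simplifying assumptions: the group is small enough to list all 60 elements, so y is drawn in random order from the full list and conjugacy is tested by brute force. For the large groups mentioned in the talk neither shortcut is available. All names are illustrative.

```python
import random
from itertools import permutations


def mul(p, q):
    """Composition p∘q: apply q first, then p."""
    return tuple(p[q[i]] for i in range(len(q)))


def inv(p):
    """Inverse permutation."""
    out = [0] * len(p)
    for i, image in enumerate(p):
        out[image] = i
    return tuple(out)


def is_even(p):
    """True if p has an even number of inversions."""
    n = len(p)
    return sum(p[i] > p[j] for i in range(n) for j in range(i + 1, n)) % 2 == 0


A5 = [p for p in permutations(range(5)) if is_even(p)]


def find_commutator(g, group, rng):
    """Search for (x, y) with g*y = x*y*x^-1, trying y in random order."""
    for y in rng.sample(group, len(group)):
        target = mul(g, y)
        for x in group:  # brute-force conjugacy test; only viable for tiny groups
            if mul(mul(x, y), inv(x)) == target:
                return x, y
    return None


def is_commutator_of(g, x, y):
    """Direct check that g = x y x^-1 y^-1."""
    return mul(mul(x, y), mul(inv(x), inv(y))) == g


if __name__ == "__main__":
    rng = random.Random(2018)
    g = (1, 2, 0, 3, 4)  # a 3-cycle
    x, y = find_commutator(g, A5, rng)
    print("x =", x, "y =", y, "verified:", is_commutator_of(g, x, y))
```

The search is randomized, but the final check `is_commutator_of` is deterministic and cheap, so the reliability of the result does not depend on how the pair was found.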

How long and how reliable was the computation?

- It took about 150 weeks of CPU time of a 2.3GHz computer with 250GB of RAM.
- In the cases we used/computed character table of G: The character table is publicly available and can be re-checked by others.
- In the larger cases: The randomized computation was used to find (x, y) for a given g, and then one checks directly that g = [x, y].

Machine-assisted discoveries

Perhaps even more important are:

1. Machine-assisted discovered theorems, and
2. Machine-assisted discovered counterexamples.

Some examples:

- The Galois-McKay conjecture was formulated by Navarro after many many days of computing in GAP.
- Isaacs-Navarro-Olsson-T found (and later proved) a natural McKay correspondence (for the prime 2) also after long computations with $\mathrm{Sym}_n$, n ≤ 50.
- Isaacs-Navarro: A solvable rational group of order $2^9 \cdot 3$, whose Sylow 2-subgroup is not rational.

some thoughts on machine-assisted proofs

luís cruz-filipe

department of mathematics and computer science, university of southern denmark

international congress of mathematicians, rio de janeiro, brazil, august 7th, 2018

a not-so-new trend in mathematics

the 4-color theorem

- Appel, Haken and Koch (1977)
- traditionally considered “the” birth of the field

more than 10 years before. . . (Floyd & Knuth, 1973)

the present day

software and hardware verification

- critical systems (testing is not enough)
- lots of mechanical, “boring” proofs with lots of (simple) cases
- often largely/completely automatic

mathematical results

- because we can
- proofs in “mathematical style”, typically interactive
- “less elegant” proofs by encoding, often automatic

two styles of proving

ad hoc programs

- highly specialized programs check that some property holds (cf. early examples)
- the program must be correct
- not always easy to trust

theorem provers

- general-purpose programs that can construct/check proofs in a particular logic
- we still need to trust the program (but. . . )
- the encoding in the logic must be correct

an example

optimal sorting networks

- same domain as Floyd and Knuth, proving S(9) = 25
- ad-hoc Prolog program, following “good” practices
- independently verified by encoding in propositional logic
- algorithm rerun by a provenly correct program

why so much work?

- can we trust Prolog?
- can we trust sat-solvers (more on that later)?
- is the sat encoding correct? (it wasn’t – several times)
- certified programs are typically MUCH slower
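For readers unfamiliar with sorting networks, the sketch below shows the easy direction of such a result: checking that a given network sorts. It relies on the standard 0-1 principle (a comparator network sorts every input if it sorts every 0/1 input). The 4-input, 5-comparator network is a textbook example chosen for illustration, not one from the S(9) proof. The hard part, showing that no smaller network exists, is not attempted here.

```python
from itertools import product


def apply_network(network, values):
    """Run the comparators (i, j) in order, placing the smaller value at i."""
    values = list(values)
    for i, j in network:
        if values[i] > values[j]:
            values[i], values[j] = values[j], values[i]
    return values


def is_sorting_network(network, n):
    """0-1 principle: it suffices to test all 2^n binary inputs."""
    return all(
        apply_network(network, bits) == sorted(bits)
        for bits in product((0, 1), repeat=n)
    )


# A 5-comparator network on 4 inputs (illustrative example).
FIVE_COMPARATORS = [(0, 1), (2, 3), (0, 2), (1, 3), (1, 2)]

if __name__ == "__main__":
    print(is_sorting_network(FIVE_COMPARATORS, 4))
    print(is_sorting_network(FIVE_COMPARATORS[:-1], 4))
```

Even this tiny checker is an "ad hoc program" in the sense above: trusting its verdict means trusting both the code and the 0-1 principle it encodes.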

another example

sat solving

- general problem: is a given propositional formula satisfiable?
- very efficient solvers exist, able to deal with gigantic formulas
- usable in practice to solve other problems by encoding
- nearly impossible to understand the code

recent trend

- independently check a trace of the sat solver’s “reasoning”
- checking a proof is much easier than finding it
- possible to do efficiently
- state-of-the-art traces of around 400 TB
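The idea of checking a solver's trace can be sketched with a minimal checker for reverse unit propagation (RUP), one common style of unsatisfiability certificate. This is an assumption about the kind of trace meant, and the code is a deliberately naive illustration: real checkers handle clause deletion and use far more efficient data structures. Literals are nonzero integers and `-v` denotes the negation of `v`.

```python
def propagation_finds_conflict(clauses, assumed_literals):
    """Unit-propagate from the assumed literals; True if some clause is falsified."""
    assignment = set(assumed_literals)
    changed = True
    while changed:
        changed = False
        for clause in clauses:
            if any(lit in assignment for lit in clause):
                continue  # clause already satisfied
            open_literals = [lit for lit in clause if -lit not in assignment]
            if not open_literals:
                return True  # every literal is false: conflict
            if len(open_literals) == 1:
                assignment.add(open_literals[0])  # forced (unit) literal
                changed = True
    return False


def check_rup_proof(cnf, lemmas):
    """Accept if each lemma follows by RUP and the last lemma is the empty clause."""
    database = [tuple(clause) for clause in cnf]
    for lemma in lemmas:
        negated = [-lit for lit in lemma]
        if not propagation_finds_conflict(database, negated):
            return False  # lemma is not justified by unit propagation
        database.append(tuple(lemma))
    return bool(lemmas) and len(lemmas[-1]) == 0


# (a or b), (not a or b), (a or not b), (not a or not b): unsatisfiable.
CNF = [(1, 2), (-1, 2), (1, -2), (-1, -2)]

if __name__ == "__main__":
    print(check_rup_proof(CNF, [[2], []]))  # learn "b", then the empty clause
    print(check_rup_proof(CNF, [[]]))       # empty clause claimed too early
```

The checker is a few dozen lines and needs no search, which is the point of the trend described above: the complicated solver only has to produce a trace, and a much simpler program decides whether to believe it.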

Bibliography

[AH76] K.I. Appel and W. Haken. Every Planar Map is Four-Colorable. Bull. A.M.S., 82:711–712, 1976.

[AH89] K.I. Appel and W. Haken. Every Planar Map is Four-Colorable (With the collaboration of J. Koch), volume 98 of Contemporary Mathematics. A.M.S., 1989.

[FT63] W. Feit and J.G. Thompson. Solvability of Groups of Odd Order. Pacific J. Math., 13:775–1029, 1963.

[Gon08] G. Gonthier. Formal Proof: The Four-Color Theorem. Notices A.M.S., 55:1382–1393, 2008.

[GT12] G. Gonthier and L. Théry. Formal Proof: The Feit–Thompson Theorem. http://www.msr-inria.inria.fr/events-news/feit-thompson-proved-in-coq, 2012.

[HAB+17] Thomas Hales, Mark Adams, Gertrud Bauer, Tat Dat Dang, John Harrison, Le Truong Hoang, Cezary Kaliszyk, Victor Magron, Sean McLaughlin, Tat Thang Nguyen, Quang Truong Nguyen, Tobias Nipkow, Steven Obua, Joseph Pleso, Jason Rute, Alexey Solovyev, Thi Hoai An Ta, Nam Trung Tran, Thi Diep Trieu, Josef Urban, Ky Vu, and Roland Zumkeller. A Formal Proof of the Kepler Conjecture. Forum of Mathematics, Pi, 5:e2, 2017.

[Hal05] T.C. Hales. A proof of the Kepler conjecture. Ann. Math., 162:1065–1185, 2005.

[Hal14] T.C. Hales. Flyspeck project completion. E-mail Sunday August 10th, 2014.

[Hel13] H.A. Helfgott. Major arcs for Goldbach’s theorem. http://arxiv.org/abs/1305.2897, 2013.

[May16] J.A. Maynard. Large gaps between primes. Annals of Mathematics, 183:915–933, 2016.