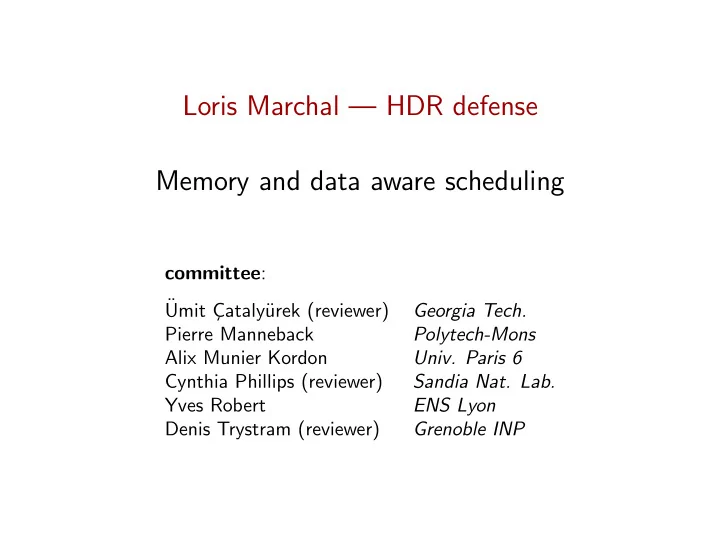

Loris Marchal, HDR defense: Memory and data aware scheduling

Committee:

◮ Ümit Çatalyürek (reviewer), Georgia Tech.
◮ Pierre Manneback, Polytech-Mons
◮ Alix Munier Kordon, Univ. Paris 6
◮ Cynthia Phillips (reviewer), Sandia Nat. Lab.

◮ Mathias Jacquelin: 2008 – 2011 (with Y. Robert)

◮ Julien Herrmann: 2012 – 2015 (with Y. Robert)

◮ Bertrand Simon: 2015 – 2018 (with F. Vivien)

◮ Changjiang Gou: 2016 – . . . (with A. Benoit)

◮ Chapter 2. Memory-aware dataflow model
◮ Chapter 3. Peak memory and I/O volume on trees
◮ Chapter 4. Peak memory of series-parallel task graphs
◮ Chapter 5. Hybrid scheduling with bounded memory
◮ Chapter 6. Memory-aware parallel tree processing

◮ Chapter 7. Matrix product for memory hierarchy
◮ Chapter 8. Data redistribution for parallel computing
◮ Chapter 9. Dynamic scheduling for matrix computations

◮ Express dependencies between tasks
◮ Write code for each task on (possibly several) processing units
◮ Choose task mapping at runtime

[Figure: arithmetic expression tree with operators +, −, ×, / over the operands t, u, v, x, z and the constants 1, 2, 5, 7, shown over successive animation steps]

◮ Express factorization as a task graph
◮ Scheduled using specialized runtime

[Figure: example task tree annotated with node and edge weights]

◮ Node weight: temporary data (m_i)
◮ Edge weight: data size (d_{i,j})

◮ Best post-order traversal
◮ Optimal traversal
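To make the notion of a best post-order traversal concrete, here is a minimal sketch. It assumes a simple memory model that the slides do not spell out: while a task runs, its inputs (the outputs of its children), its temporary data m and its own output d are all in memory. Under that assumption, a standard rule is to visit the children of each node by non-increasing value of (subtree peak minus output size). The `Node` class and the `best_postorder` function are illustrative names, not part of the original work.

```python
from dataclasses import dataclass, field

@dataclass
class Node:
    name: str
    m: int                      # temporary data used while the task runs (node weight)
    d: int                      # size of the output sent to the parent (edge weight)
    children: list = field(default_factory=list)

def best_postorder(node):
    """Return (peak memory, list of task names) for the best post-order."""
    results = [(best_postorder(c), c) for c in node.children]
    # Assumed rule: children by non-increasing (subtree peak - output size).
    results.sort(key=lambda rc: rc[0][0] - rc[1].d, reverse=True)
    held = 0        # outputs of already processed children kept in memory
    peak = 0
    order = []
    for (child_peak, child_order), child in results:
        peak = max(peak, held + child_peak)
        held += child.d
        order.extend(child_order)
    # Running the node itself: all inputs + temporary data + its own output.
    peak = max(peak, held + node.m + node.d)
    order.append(node.name)
    return peak, order

# Hypothetical three-task tree: the memory-hungry child should go first.
a = Node("a", m=1, d=2)
b = Node("b", m=8, d=1)
root = Node("r", m=0, d=0, children=[a, b])
peak, order = best_postorder(root)
print(peak, order)
```

Visiting `a` before `b` would keep the output of `a` in memory while `b` runs, which raises the peak; the sort avoids this. An optimal traversal in general need not be a post-order, which this sketch does not attempt to compute.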

◮ Parallel processing
◮ Goal: minimize processing time

◮ Peak memory
◮ Makespan (total processing time)

◮ When data sizes ≪ memory bound:

◮ When approaching memory bound, limit parallelism

◮ From [Agullo, Buttari, Guermouche & Lopez 2013]
◮ Choose a sequential task order (e.g. best post-order)
◮ While memory available, activate tasks in this order:
◮ Process only activated tasks (with given scheduling priority)

◮ Free inputs
◮ Activate as many new tasks as possible
◮ Then, start scheduling activated tasks

◮ Can cope with very small memory bound
◮ No memory reuse
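The activation policy listed above can be sketched as a small event-driven simulation. This is a simplified illustration, not the implementation of the cited paper, and it rests on several assumptions: activating a task books its temporary data m plus its output d; completing a task frees its temporary data and its inputs (the outputs of its children); activated tasks are started in FIFO order as a stand-in for the scheduling priority. The function name, the tuple layout `(parent, m, d, w)` and the processing times `w` are hypothetical.

```python
import heapq

def activation_schedule(tasks, order, bound, procs):
    """Simulate the activation policy; return (makespan, peak booked memory).

    tasks: {name: (parent or None, m, d, w)} with m = temporary data,
           d = output size, w = processing time (hypothetical layout).
    order: sequential order of task names (children before parents).
    """
    children = {t: [] for t in tasks}
    for t, (parent, _, _, _) in tasks.items():
        if parent is not None:
            children[parent].append(t)
    pending = {t: len(children[t]) for t in tasks}   # unfinished children
    booked = peak = 0
    nxt = 0                 # index of the next task to activate in `order`
    ready = []              # activated tasks whose children are all done
    activated = set()
    running = []            # heap of (finish_time, task)
    now = 0
    done = 0
    while done < len(tasks):
        # Activate tasks in the given order while their memory fits.
        while nxt < len(order):
            t = order[nxt]
            _, m, d, _ = tasks[t]
            if booked + m + d > bound:
                break
            booked += m + d
            peak = max(peak, booked)
            activated.add(t)
            if pending[t] == 0:
                ready.append(t)
            nxt += 1
        # Start activated, ready tasks on idle processors (FIFO priority).
        while ready and len(running) < procs:
            t = ready.pop(0)
            heapq.heappush(running, (now + tasks[t][3], t))
        if not running:
            raise RuntimeError("memory bound too small for this order")
        # Advance to the next completion; free temporary data and inputs.
        now, t = heapq.heappop(running)
        done += 1
        booked -= tasks[t][1] + sum(tasks[c][2] for c in children[t])
        parent = tasks[t][0]
        if parent is not None:
            pending[parent] -= 1
            if pending[parent] == 0 and parent in activated:
                ready.append(parent)
    return now, peak

# Hypothetical tree: two leaves a and b feeding a root r.
tasks = {"a": ("r", 2, 1, 3), "b": ("r", 2, 1, 3), "r": (None, 0, 1, 1)}
order = ["a", "b", "r"]
print(activation_schedule(tasks, order, bound=4, procs=2))
print(activation_schedule(tasks, order, bound=7, procs=2))
```

With the tight bound the two leaves cannot be activated together, so they run one after the other even though two processors are available; with the larger bound they run in parallel. Memory booked for a task is never shared with its subtree in this sketch, which matches the "no memory reuse" remark.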

[Figure legend: activated, completed, running]

◮ Check how much memory is already booked by its subtree
◮ Book only what is missing (if needed)

◮ Distribute the booked memory to all activated ancestors
◮ Then, release the remaining memory (if any)

◮ Based on a sequential schedule using less than the memory

◮ Process the whole tree without going out of memory

◮ Activation from [Agullo et al., Euro-Par 2013]
◮ MemBooking

[Figure: normalized memory bound and normalized makespan for the Activation and MemBooking heuristics]

◮ Complexity and inapproximability
◮ Efficient booking heuristics (guaranteed termination)

◮ During the collection
◮ By a previous computation

◮ Ex: 2D-cyclic
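As a reminder of what a 2D-cyclic distribution looks like, the sketch below maps each matrix block to a processor of a rectangular grid by taking the block coordinates modulo the grid dimensions. The function name and the grid sizes are chosen for illustration only.

```python
def owner_2d_cyclic(i, j, p_rows, p_cols):
    """Processor (row, col) that owns block (i, j) on a p_rows x p_cols grid."""
    return (i % p_rows, j % p_cols)

# Print the ownership map of a 4 x 6 block matrix on a 2 x 3 processor grid.
for i in range(4):
    print(" ".join("P%d%d" % owner_2d_cyclic(i, j, 2, 3) for j in range(6)))
```

Each processor owns blocks spread over the whole matrix, which is why this layout is a common choice for load-balanced dense kernels.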

◮ Total volume of communication: the total number of data

◮ Number of parallel communication steps: one-port
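The two metrics above can be evaluated on a small example. The sketch assumes unit-size data items and a bidirectional one-port model, in which a processor sends at most one item and receives at most one item per step. Under these assumptions the number of steps is the load of the busiest port; this value is reachable because the send/receive pattern forms a bipartite multigraph, whose edges can be colored with as many colors as its maximum degree (König's theorem). The function name and the input format are hypothetical.

```python
from collections import Counter

def redistribution_cost(moves):
    """moves: list of (src, dst) pairs, one per unit-size data item."""
    remote = [(s, d) for s, d in moves if s != d]   # local items cost nothing
    volume = len(remote)
    sent = Counter(s for s, _ in remote)
    received = Counter(d for _, d in remote)
    # Bidirectional one-port: each step, a processor sends at most one item
    # and receives at most one item, so the busiest port bounds the steps.
    steps = max(list(sent.values()) + list(received.values()), default=0)
    return volume, steps

# Hypothetical pattern on three processors; the last item stays local.
print(redistribution_cost([(0, 1), (0, 2), (1, 2), (2, 2)]))
```

The two metrics can disagree: a redistribution with a small total volume may still need many steps if most of its items go through a single processor.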

◮ Processor i gets part i

◮ Total volume (vol)
◮ Redistribution steps (steps)
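The difference between the canonical choice (processor i gets part i) and a choice tuned for total volume can be illustrated on a toy instance. The sketch below assumes that `held[p][k]` counts the items of target part k currently stored on processor p, and it searches all assignments by brute force, which is only reasonable for a handful of processors; larger instances would call for an assignment solver. All names and numbers are made up for the example.

```python
from itertools import permutations

def volume(held, assign):
    """Items moved if part k is sent to processor assign[k].

    held[p][k] = number of items of part k currently stored on processor p.
    """
    total = sum(map(sum, held))
    return total - sum(held[assign[k]][k] for k in range(len(assign)))

def best_for_volume(held):
    """Brute-force the part-to-processor assignment with minimum volume."""
    n = len(held)
    return min(permutations(range(n)), key=lambda a: volume(held, a))

held = [[1, 4, 0],
        [0, 1, 5],
        [3, 0, 1]]
canonical = tuple(range(3))          # processor i gets part i
best = best_for_volume(held)
print(volume(held, canonical), volume(held, best))
```

Here most of each part already sits on a processor other than the canonical one, so relabeling the parts avoids moving the bulk of the data.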

◮ Owner compute (default heuristics of PaRSEC)
◮ Canonical redistribution to P_tar
◮ Best redistribution for total volume (vol)
◮ Best redistribution for number of steps (steps)

◮ Lower scheduling complexity
◮ Make the algorithms dynamic (graph gradually uncovered)
◮ Distribute scheduling decisions

◮ Hierarchical scheduling
◮ Precompute memory information on the graph