Costin Iancu Lawrence Berkeley National Laboratory

WPSE 2009

- Unified Parallel C

– SPMD programming model, shared memory space abstraction

– Communication is either implicit or explicit – one-sided – Memory model: relaxed and strict

- Ubiquitous UPC implementation

– Compiler based on the Open64 framework

–Source to source translation

– GASNet communication libraries

- PUT/GET primitives

- Vector/Index/Strided (VIS) primitives

- Synchronization, collective operations

- Provide integration across all levels of the software stack

- Mechanisms for finer grained control over system resources

- Application level resource usage policies

- Language and compiler support

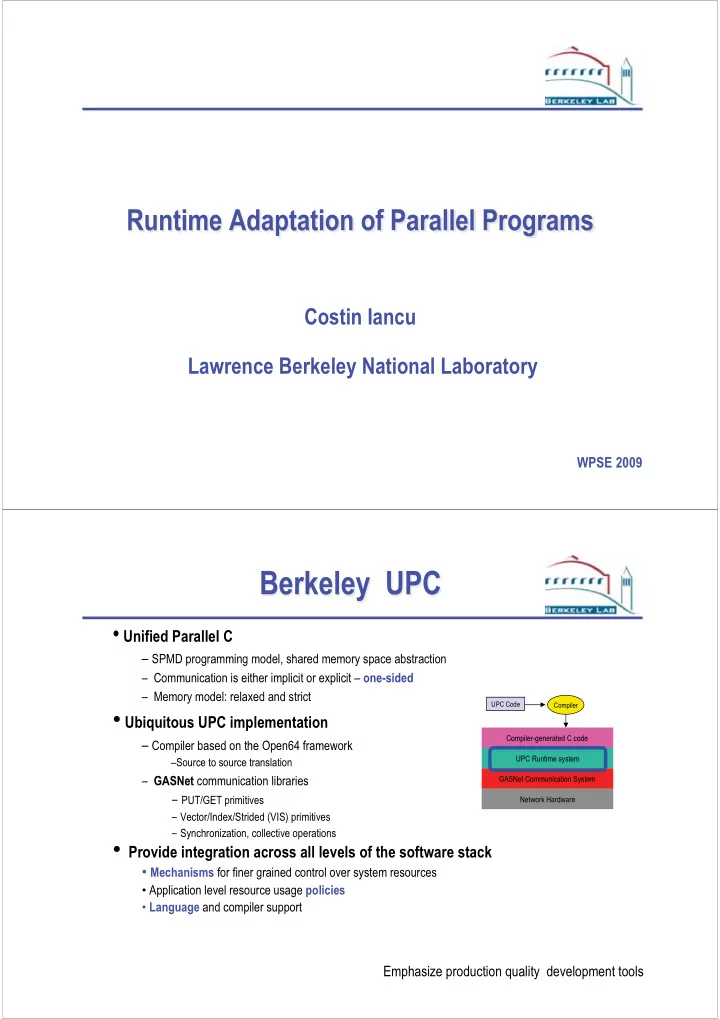

Compiler-generated C code UPC Runtime system GASNet Communication System Network Hardware

UPC Code Compiler

UPC Runtime system

Emphasize production quality development tools